Guide to Data-Centric AI Development for Defense

By Umang Dayal

July 24, 2025

Artificial intelligence systems in defense are at a critical inflection point. For years, the dominant approach to building AI models has focused on refining algorithms, adjusting neural network architectures, optimizing hyperparameters, and deploying increasingly large models with greater computational resources.

This model-centric paradigm has yielded impressive benchmarks in controlled settings. Yet, in the real-world complexity of defense operations, characterized by dynamic battlefields, sensor noise, and rapidly evolving adversarial tactics, this approach often breaks down. Models that perform well in lab environments may fail catastrophically in live scenarios due to blind spots in the data they were trained on.

In high-risk defense applications such as surveillance, autonomous targeting, battlefield analytics, and decision support systems, the stakes could not be higher. Models must function under uncertain conditions, reason with partial information, and maintain performance across edge cases.

In this blog, we discuss why a data-centric approach is critical for defense AI and how it contrasts with traditional model-centric development, and we explore recommendations for shaping the future of mission-ready intelligence systems.

Why Defense Needs a Data-Centric Approach

Defense applications of AI differ from commercial ones in one crucial respect: failure is not just a business risk; it is a national security liability. In this context, continuing to iterate on models without critically examining the underlying data introduces systemic vulnerabilities.

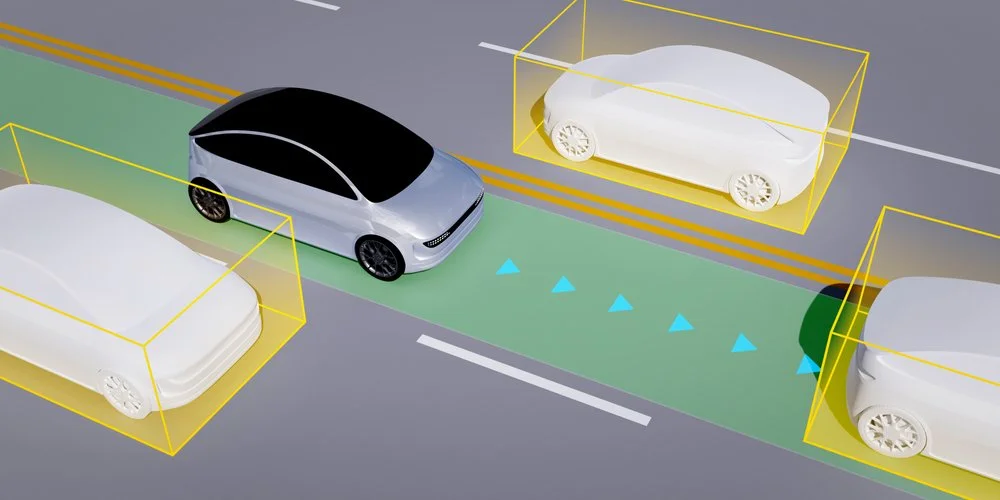

Defense AI systems are expected to perform in extreme and unpredictable environments, such as war zones with degraded sensors, a contested electromagnetic spectrum, and adversarial interference. A model trained on curated, noise-free data may perform flawlessly in simulation but collapse under the ambiguity and uncertainty of live operations.

The traditional model-centric approach often overlooks the quality, completeness, and diversity of the data itself. This creates what might be termed a data-blind development loop, one where developers attempt to compensate for poor data coverage by tuning models further, leading to overfitting, brittleness, and hallucinated outputs. For example, a visual detection model that performs well on clear, daylight images may fail to detect camouflaged threats in low-light conditions or misidentify non-combatants due to contextual ambiguity. These are not just technical failures; they are operational liabilities.

Military AI systems demand a far higher bar for robustness, explainability, and assurance than typical commercial systems. These requirements are not optional; they are essential for compliance with military ethics, international laws of armed conflict, and public accountability. Robustness means the system must generalize across unseen terrains and scenarios. Explainability requires that decisions, especially lethal ones, are traceable and interpretable by human operators. Assurance means that AI behavior under stress, uncertainty, and edge conditions can be rigorously validated before deployment.

In this context, data becomes a strategic asset, on par with weapons systems and supply chains. Programs are shifting from viewing data as a byproduct of operations to treating it as an enabler of next-generation capabilities. Whether the goal is building autonomous platforms that navigate cluttered terrain or decision support tools for real-time battlefield analytics, the quality and stewardship of the underlying data are what ultimately determine trust and effectiveness in AI systems.

Defense organizations that embrace a data-centric paradigm are not simply changing their engineering process; they are evolving their strategic doctrine.

Data Challenges in Defense AI

Building reliable AI systems in defense is not just a matter of model architecture; the complexity, sensitivity, and inconsistency of the data fundamentally constrain it. Unlike commercial datasets that can be openly scraped, labeled, and standardized at scale, defense data is fragmented, classified, and operationally diverse. These conditions introduce a unique set of challenges that traditional machine learning workflows cannot solve.

Data Availability and Fragmentation

One of the most persistent issues is the limited availability and fragmented nature of defense data. Critical information is often distributed across siloed systems, each governed by distinct security protocols, formats, and access restrictions. Many defense organizations still operate legacy platforms where data collection was not designed for AI use. Such systems may generate low-fidelity logs, omit metadata, or store data in incompatible formats. Moreover, classified datasets are typically confined to secure enclaves, limiting collaborative development and cross-validation. The result is a fractured data ecosystem that impedes the creation of coherent, AI-ready training sets.

Data Quality and Bias

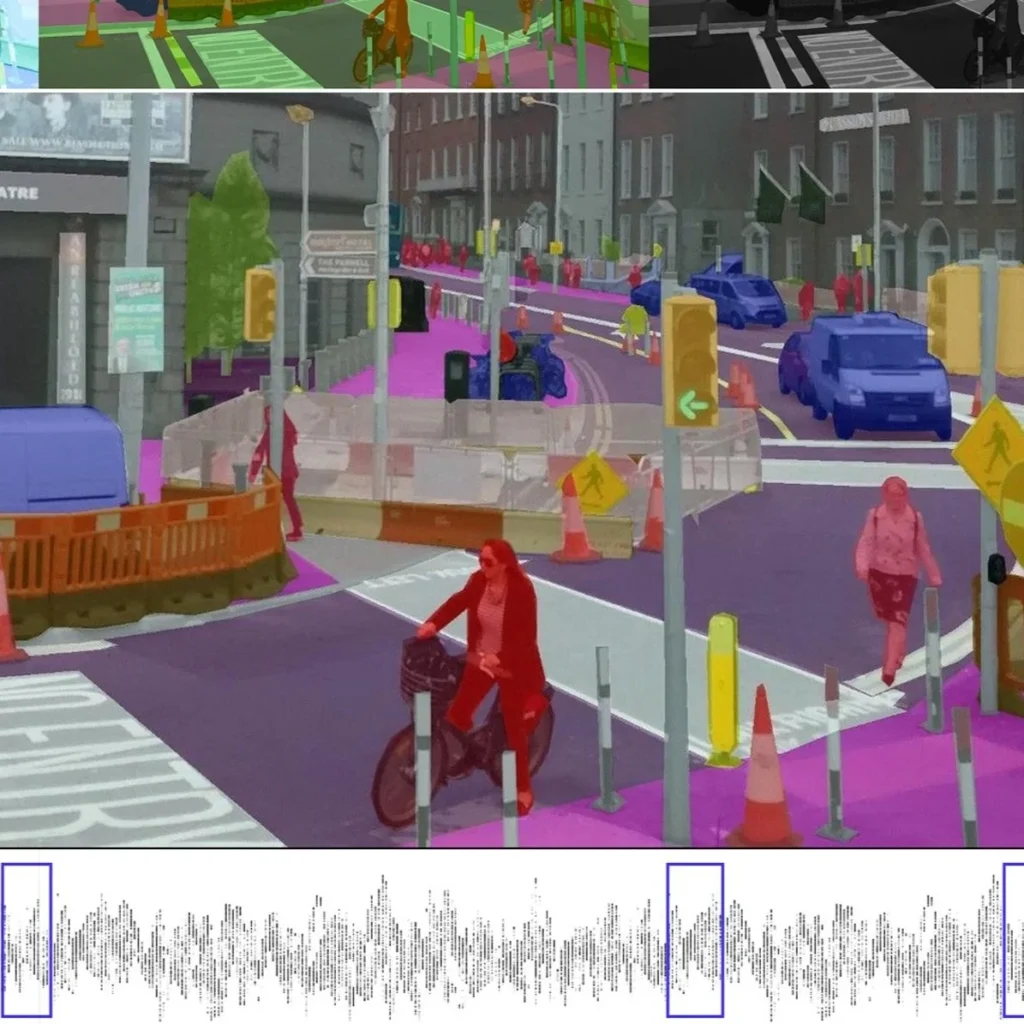

Even when data is accessible, quality and representativeness remain significant concerns. Annotation errors, missing context, or low-resolution inputs can severely impact model performance. More critically, biased datasets, whether due to overrepresentation of specific terrains, lighting conditions, or adversary types, can lead to dangerous generalization failures. For instance, a surveillance model trained predominantly on arid environments may underperform in jungle or urban settings. In adversarial contexts, data that lacks exposure to deceptive techniques such as camouflage or decoys risks enabling manipulation at deployment. The consequences are not theoretical; they can manifest in false positives, misidentification of friendlies, or critical situational awareness gaps.

Data Labeling in High-Risk Environments

Labeling defense data is uniquely difficult as operational footage may be sensitive, encrypted, or captured in chaotic conditions where even human interpretation is uncertain. Annotators often require specialized military knowledge to identify relevant objects, behaviors, or threats, making generic outsourcing infeasible. Furthermore, indiscriminately labeling large volumes of data is neither cost-effective nor strategically sound. The defense community is beginning to adopt smart-sizing approaches, prioritizing annotation of rare, ambiguous, or high-risk scenarios over routine ones. This aligns with recent research insights, such as those highlighted in the “Achilles Heel of AI” paper, which underscore the value of targeted labeling for performance gains in edge cases.

Multi-Modal and Real-Time Data Fusion

Modern military operations generate data from a wide range of sources: radar, electro-optical/infrared (EO/IR) sensors, satellite imagery, cyber intelligence, and battlefield telemetry. These modalities differ in resolution, frequency, reliability, and interpretation frameworks. Training AI systems that can reason across such disparate streams is a major challenge. Fusion models must handle asynchronous inputs, conflicting signals, and incomplete information, all while operating under real-time constraints. Achieving this demands not only sophisticated modeling but also high-quality, temporally aligned multi-modal datasets, a resource that remains scarce and difficult to construct under operational constraints.
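To make the idea of temporal alignment concrete, here is a minimal sketch of nearest-timestamp matching between two asynchronous sensor streams. The function name `align_nearest`, the sample timestamps, and the 50 ms tolerance are our own illustrative assumptions, not part of any fielded fusion pipeline.

```python
from bisect import bisect_left


def align_nearest(reference_ts, other_ts, tolerance):
    """For each reference timestamp, return the index of the nearest
    timestamp in other_ts, or None if none lies within tolerance.

    Both lists must be sorted in ascending order (seconds).
    """
    matches = []
    for t in reference_ts:
        i = bisect_left(other_ts, t)
        # Only the neighbors just before and just after t can be nearest.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(other_ts)]
        best = min(candidates, key=lambda j: abs(other_ts[j] - t), default=None)
        if best is not None and abs(other_ts[best] - t) <= tolerance:
            matches.append(best)
        else:
            # No frame close enough: leave the gap explicit instead of guessing.
            matches.append(None)
    return matches


radar_ts = [0.00, 0.10, 0.20, 0.30]  # hypothetical 10 Hz radar frames
eoir_ts = [0.02, 0.13, 0.41]         # hypothetical irregular EO/IR frames
pairs = align_nearest(radar_ts, eoir_ts, tolerance=0.05)
print(pairs)  # [0, 1, None, None]
```

Keeping unmatched frames as `None` rather than forcing a pairing is a deliberate choice in this sketch: it lets a downstream fusion model see where a modality was missing.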

Emerging Innovations in Data-Centric Defense AI

To meet the demanding requirements of military AI, the defense sector is investing in a range of innovations that rethink how data is curated, annotated, and used for model training. These approaches aim to overcome the limitations of traditional workflows by targeting data quantity, quality, and strategic value. Rather than relying solely on brute-force data collection or generic annotation pipelines, these innovations focus on adaptive, secure, and context-aware data practices that are better suited to high-risk environments.

Smart-Sizing and Adaptive Annotation

One of the most impactful shifts is the move toward smart-sizing datasets, actively curating smaller but more meaningful subsets of data rather than collecting and labeling everything indiscriminately. Adaptive data annotation techniques focus human labeling efforts on the most informative samples, such as rare mission scenarios, ambiguous imagery, or areas with high model uncertainty. This approach helps reduce annotation cost while significantly improving model performance on operational edge cases. Defense organizations are integrating uncertainty sampling, active learning, and counterfactual analysis to ensure that annotated data maximally contributes to model robustness and generalizability.
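As a rough illustration of uncertainty sampling, the sketch below ranks unlabeled samples by the entropy of a model's predicted class probabilities and sends the most uncertain ones to annotators first. The sample IDs, probabilities, and the `select_for_annotation` helper are hypothetical; a real program would likely combine this signal with mission priorities.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of a class-probability vector."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_annotation(predictions, budget):
    """Return the IDs of the `budget` samples with the highest entropy.

    predictions maps a sample ID to the model's class probabilities.
    """
    ranked = sorted(
        predictions,
        key=lambda sample_id: entropy(predictions[sample_id]),
        reverse=True,
    )
    return ranked[:budget]


# Hypothetical model outputs over three classes.
predictions = {
    "frame_001": [0.98, 0.01, 0.01],  # confident, low annotation value
    "frame_002": [0.40, 0.35, 0.25],  # highly ambiguous
    "frame_003": [0.55, 0.44, 0.01],  # torn between two classes
    "frame_004": [0.90, 0.05, 0.05],
}
queue = select_for_annotation(predictions, budget=2)
print(queue)  # ['frame_002', 'frame_003']
```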

Neuro-Symbolic Defense Models

To address the limitations of purely statistical models in complex decision-making environments, defense researchers are exploring neuro-symbolic systems that combine data-driven learning with human-defined logic. These models leverage symbolic rules, such as engagement criteria, no-fire zones, or identification thresholds, in conjunction with neural networks that process high-dimensional sensor data. The result is a hybrid model architecture that can both learn from data and reason with constraints, improving explainability and policy compliance. In domains like autonomous targeting or mission planning, neuro-symbolic AI offers a path toward greater control and transparency without sacrificing performance.
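One simple way to picture the hybrid is a symbolic rule layer that can veto a neural detection and must explain why. The sketch below is an assumption-laden toy: the `Detection` and `RestrictedZone` types, the grid coordinates, and the two outcomes (`withhold` or `refer_to_operator`) are ours, chosen so that the rule layer only ever blocks or hands off to a human.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    label: str
    confidence: float  # neural detector score in [0, 1]
    x: float           # position on a local grid (hypothetical units)
    y: float


@dataclass(frozen=True)
class RestrictedZone:
    name: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def contains(self, x, y):
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max


def apply_rules(detection, zones, id_threshold):
    """Check a neural detection against human-defined rules.

    Returns (decision, reasons). Any violated rule yields "withhold";
    otherwise the detection is referred to a human operator.
    """
    reasons = []
    for zone in zones:
        if zone.contains(detection.x, detection.y):
            reasons.append(f"inside restricted zone '{zone.name}'")
    if detection.confidence < id_threshold:
        reasons.append(
            f"confidence {detection.confidence:.2f} below "
            f"identification threshold {id_threshold:.2f}"
        )
    decision = "withhold" if reasons else "refer_to_operator"
    return decision, reasons


zones = [RestrictedZone("hospital_perimeter", 0, 0, 10, 10)]
inside = Detection("vehicle", 0.95, 5, 5)
outside = Detection("vehicle", 0.95, 20, 20)
print(apply_rules(inside, zones, id_threshold=0.9))
print(apply_rules(outside, zones, id_threshold=0.9))
```

Because every veto carries a human-readable reason, the rule layer doubles as an audit trail, which is where the explainability benefit described above comes from.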

Synthetic Data for Combat Simulation

Real-world combat data is often scarce, classified, or unsafe to collect. Synthetic data generation, powered by techniques such as generative adversarial networks (GANs), procedural rendering, and simulation engines, is emerging as a key tool for augmenting training datasets. These synthetic environments can replicate rare or dangerous battlefield conditions, such as urban combat under smoke cover, enemy deception tactics, or night-time infrared scenarios, enabling more thorough training and validation. When combined with real-world sensor signatures and physics-based models, synthetic data can help close coverage gaps in ways that would otherwise be impractical or impossible.

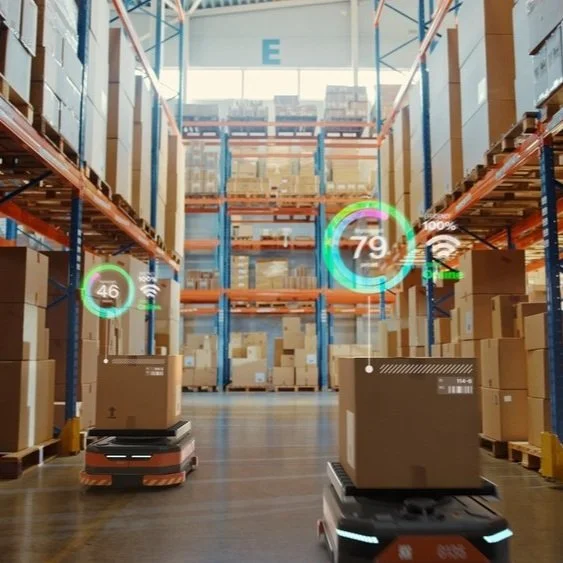

Federated AI Training

Defense organizations frequently face data-sharing restrictions across national, organizational, or classification boundaries. Federated learning addresses this by enabling decentralized model training across multiple secure nodes, without ever transferring raw data. Each participant trains locally on its own encrypted data, and only model updates are aggregated centrally. This approach preserves data sovereignty while enabling collaborative development, an essential feature for coalition operations. Federated learning also supports compliance with regulatory constraints and information assurance policies, making it a compelling option for future multi-domain AI systems.
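The central aggregation step can be sketched as a sample-weighted average of the model weights each node reports, in the spirit of federated averaging. The node updates below are made-up numbers, and the sketch deliberately omits secure aggregation, encryption, and any protection against information leaking through the updates themselves.

```python
def federated_average(updates):
    """Combine per-node model weights into one global weight vector.

    updates is a list of (weights, num_samples) pairs. Each node's
    weights count in proportion to the samples it trained on; raw data
    never leaves the node.
    """
    total = sum(num_samples for _, num_samples in updates)
    if total == 0:
        raise ValueError("no training samples reported by any node")
    dim = len(updates[0][0])
    averaged = [0.0] * dim
    for weights, num_samples in updates:
        if len(weights) != dim:
            raise ValueError("all nodes must report weights of the same length")
        for i, w in enumerate(weights):
            averaged[i] += w * num_samples / total
    return averaged


# Hypothetical updates from two enclaves with different data volumes.
node_updates = [([1.0, 2.0], 100), ([3.0, 4.0], 300)]
global_weights = federated_average(node_updates)
print(global_weights)  # [2.5, 3.5]
```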

Together, these innovations represent a fundamental shift from static, siloed data practices toward dynamic, secure, and operationally aware data ecosystems. They pave the way for defense AI systems that are not only more accurate but also more aligned with real-world complexity, ethical norms, and strategic imperatives.


Recommendations for Data-Centric AI Development

As defense organizations transition toward a data-centric AI development paradigm, they must realign both technical workflows and strategic planning. This shift demands more than adopting new tools; it requires a foundational change in how data is treated across the lifecycle of AI systems, from acquisition and labeling to deployment and auditing. The following recommendations are intended for practitioners, data scientists, program leads, and acquisition officers tasked with operationalizing AI in sensitive, high-stakes defense environments.

Invest in Data-Centric Metrics

Traditional model evaluation metrics, such as accuracy or precision, are insufficient for defense applications where failure modes can be mission-critical. Practitioners should adopt data-centric evaluation frameworks that assess:

- Completeness: Does the dataset sufficiently cover all operational environments, adversary tactics, and sensor conditions?
- Edge-Case Density: Are rare or ambiguous scenarios adequately represented and labeled?
- Adversarial Robustness: How well do models trained on this dataset perform against manipulated or deceptive inputs?

Incorporating these metrics into both procurement and model assessment pipelines ensures that data quality is not an afterthought but a formalized requirement.
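Two of these metrics can be computed directly from dataset metadata. The sketch below is one possible formulation, not a standard: it treats completeness as the share of required environment and sensor combinations that have at least `min_count` samples, and edge-case density as the share of samples flagged as edge cases. The field names and sample records are hypothetical. Adversarial robustness is left out because it requires evaluating a trained model.

```python
from collections import Counter
from itertools import product


def coverage(samples, environments, sensors, min_count=1):
    """Return (completeness score, list of under-covered combinations)."""
    counts = Counter((s["environment"], s["sensor"]) for s in samples)
    required = list(product(environments, sensors))
    missing = [combo for combo in required if counts[combo] < min_count]
    score = (len(required) - len(missing)) / len(required)
    return score, missing


def edge_case_density(samples):
    """Fraction of samples flagged as rare or ambiguous."""
    if not samples:
        return 0.0
    return sum(1 for s in samples if s.get("edge_case")) / len(samples)


# Hypothetical metadata records for four samples.
samples = [
    {"environment": "arid", "sensor": "eo", "edge_case": False},
    {"environment": "arid", "sensor": "ir", "edge_case": False},
    {"environment": "urban", "sensor": "eo", "edge_case": True},
    {"environment": "arid", "sensor": "eo", "edge_case": False},
]
score, missing = coverage(samples, ["arid", "urban", "jungle"], ["eo", "ir"])
print(score)    # 0.5
print(missing)  # [('urban', 'ir'), ('jungle', 'eo'), ('jungle', 'ir')]
print(edge_case_density(samples))  # 0.25
```

The `missing` list is arguably more useful than the score itself, since it tells a collection or synthesis team exactly which conditions to target next.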

Design Domain-Specific Assurance Protocols

Defense AI systems cannot rely on generic validation procedures. Assurance protocols must be tailored to the mission context. For example, a surveillance drone should meet minimum confidence thresholds before flagging an object for escalation, while an autonomous vehicle may need to demonstrate behavior predictability under degraded GPS conditions. These protocols should integrate:

- Scenario-specific test datasets.
- Stress-testing for edge conditions.
- Human-in-the-loop override and auditability mechanisms.

By embedding assurance into the AI development lifecycle, defense teams can reduce risk and increase confidence in real-world deployment.

Create Shared Annotation Schemas for Interoperability

In coalition or joint-force settings, the lack of consistent annotation standards often hampers data fusion and model integration. Practitioners should push for shared taxonomies and labeling protocols across services and partner nations. A standardized schema allows for:

- Cross-validation of models across theaters.
- Aggregation of training data without semantic misalignment.
- Faster deployment of AI systems in multinational operations.

Developing these schemas in tandem with doctrine and operational policy also ensures they remain relevant and actionable in live missions.
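In practice, a shared schema often starts as a mapping from each partner's local labels onto a common taxonomy. The sketch below shows that normalization step under invented names: the taxonomy entries, the `partner_a` and `partner_b` vocabularies, and the `normalize` helper are all hypothetical.

```python
# Hypothetical shared taxonomy agreed between partners.
SHARED_TAXONOMY = {"vehicle.wheeled", "vehicle.tracked", "person", "structure"}

# Hypothetical per-partner mappings from local labels to shared labels.
LOCAL_TO_SHARED = {
    "partner_a": {
        "truck": "vehicle.wheeled",
        "apc_tracked": "vehicle.tracked",
        "pax": "person",
    },
    "partner_b": {
        "wheeled_vehicle": "vehicle.wheeled",
        "building": "structure",
    },
}


def normalize(annotations, source):
    """Translate one partner's annotations into the shared taxonomy.

    Returns (normalized, unmapped). Labels without a valid mapping are
    set aside for review rather than silently dropped or guessed.
    """
    mapping = LOCAL_TO_SHARED[source]
    normalized, unmapped = [], []
    for ann in annotations:
        shared = mapping.get(ann["label"])
        if shared is None or shared not in SHARED_TAXONOMY:
            unmapped.append(ann)
        else:
            normalized.append({**ann, "label": shared, "source": source})
    return normalized, unmapped


local = [{"id": 1, "label": "truck"}, {"id": 2, "label": "tank"}]
normalized, unmapped = normalize(local, "partner_a")
print(normalized)  # [{'id': 1, 'label': 'vehicle.wheeled', 'source': 'partner_a'}]
print(unmapped)    # [{'id': 2, 'label': 'tank'}]
```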

Leverage Synthetic Data Pipelines to Fill Gaps

When operational data is unavailable or insufficient, synthetic data should be used strategically to fill high-risk blind spots. This includes:

- Simulating rare events such as chemical exposure or infrastructure sabotage.
- Generating low-visibility EO/IR conditions using physics-informed rendering.
- Modeling multi-force engagements to train decision-support tools on coalition dynamics.

Synthetic data is not a replacement for real data, but when calibrated carefully, it serves as a powerful force multiplier, especially in training models to anticipate the unexpected.

Align Dual-Use Datasets for Civil-Military Synergy

Many datasets collected for defense purposes have applications in civilian domains such as disaster response, infrastructure monitoring, or border management. By designing datasets and labeling workflows with dual-use alignment, agencies can reduce duplication, increase scale, and improve public trust. This also facilitates smoother transitions of AI systems from military innovation pipelines to civilian applications, creating broader societal benefits.

Taken together, these recommendations reflect a proactive, mission-aligned approach to data-centric development. Rather than treating data curation as a one-off task, practitioners must embed data governance, representational integrity, and real-world relevance into every stage of the AI lifecycle. In doing so, they lay the groundwork for systems that are not only technically sound but operationally trusted.

Conclusion

As AI becomes embedded across the modern defense enterprise, the assumptions that once guided model development must evolve. It is no longer sufficient to build high-performing models in isolation and hope they generalize in the field. In the unforgiving context of defense operations, where a misclassification can mean mission failure, collateral damage, or escalation, data quality, completeness, and context-awareness are non-negotiable.

A data-centric approach reorients the focus from chasing incremental model gains to systematically improving the foundation on which all AI performance rests: the data. It compels practitioners to ask not just how well the model performs, but why it performs the way it does, on what data, and under what assumptions. This shift in perspective is especially critical in defense, where trust, traceability, and tactical alignment are core operational requirements.

The future of defense AI is not about bigger models or faster training cycles. It is about building the right data pipelines, validation protocols, and human-in-the-loop systems to ensure that artificial intelligence can serve as a reliable partner in mission execution. Those who invest in data strategically, structurally, and continuously will not just lead in AI capability. They will lead in operational advantage.


References:

Kapusta, A. S., Jin, D., Teague, P. M., Houston, R. A., Elliott, J. B., Park, G. Y., & Holdren, S. S. (2025, April 3). A framework for the assurance of AI‑enabled systems [Preprint]. arXiv.

Proposes a DoD‑aligned claims-based assurance framework critical for guaranteeing trustworthiness in defense AI.

National Defense Magazine. (2023, July 25). AI in defense: Navigating concerns, seizing opportunities.

Highlights the importance of data bias mitigation in ISR and command‑control AI systems.

U.S. Cybersecurity and Infrastructure Security Agency. (2023, December). Roadmap for artificial intelligence.

Outlines federal efforts to secure AI systems by design and manage data‑centric vulnerabilities.

Frequently Asked Questions (FAQs)

1. Is a data-centric approach only relevant for defense applications?

No. While the blog emphasizes its importance in defense, the data-centric paradigm is highly relevant across industries, especially in domains where data is messy, scarce, or high-stakes (e.g., healthcare, finance, law enforcement, and autonomous driving). The lessons from defense can be adapted to commercial and civilian sectors, particularly in ensuring robustness and fairness in AI systems.

2. How does data-centric AI influence the role of data engineers and MLOps teams?

In a data-centric AI workflow, the roles of data engineers and MLOps teams expand significantly. They are no longer responsible only for data pipelines but also for:

- Ensuring dataset versioning and lineage
- Enabling reproducibility through data tracking tools
- Facilitating dynamic data validation and augmentation pipelines

This blurs the traditional boundary between data infrastructure and model development, encouraging deeper collaboration across roles.
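Dataset versioning and lineage can be approximated with content hashing. The sketch below fingerprints a set of records so that any label change yields a new version ID, and records which version it was derived from. The record fields, the 12-character ID length, and the `lineage_entry` structure are our own assumptions; dedicated data versioning tools handle far more, such as large files and access control.

```python
import hashlib
import json


def dataset_version(records):
    """Deterministic, order-independent fingerprint of a list of records.

    Each record must be JSON-serializable. Changing any field of any
    record changes the resulting version ID.
    """
    digests = sorted(
        hashlib.sha256(json.dumps(r, sort_keys=True).encode("utf-8")).hexdigest()
        for r in records
    )
    combined = hashlib.sha256("".join(digests).encode("ascii")).hexdigest()
    return combined[:12]


def lineage_entry(parent_version, records, note):
    """Describe a dataset version and the version it was derived from."""
    return {
        "version": dataset_version(records),
        "parent": parent_version,
        "num_records": len(records),
        "note": note,
    }


# Hypothetical records before and after a relabeling pass.
v1_records = [
    {"id": "frame_001", "label": "vehicle"},
    {"id": "frame_002", "label": "vehicle"},
]
v2_records = [
    {"id": "frame_001", "label": "vehicle"},
    {"id": "frame_002", "label": "decoy"},
]
v1 = lineage_entry(None, v1_records, "initial labeling")
v2 = lineage_entry(v1["version"], v2_records, "relabeled frame_002 after review")
print(v1["version"] != v2["version"])  # True
```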

3. How can teams assess whether their current pipeline is model-centric or data-centric?

Key indicators of a model-centric workflow include:

- Frequent model re-training without modifying the dataset
- Little analysis of labeling errors or distribution gaps
- Success measured solely by model metrics (e.g., accuracy, F1)

In contrast, a data-centric pipeline will:

- Actively curate and monitor dataset quality
- Log and prioritize edge-case failure modes
- Use tools to analyze the dataset's impact on performance

4. Can pre-trained foundation models eliminate the need for data-centric approaches?

No. Pre-trained models still rely on their training data, which may be:

- Biased or misaligned with the defense context
- Lacking in classified or high-risk operational scenarios

Fine-tuning or aligning foundation models with defense-specific data is essential. Thus, even when using large models, data-centric techniques remain critical to ensure operational fitness.

5. How does a data-centric approach help with adversarial robustness?

Adversarial robustness depends significantly on how well the training data represents real-world threats. A data-centric approach allows:

- Curating examples of adversarial tactics (e.g., camouflage, spoofing)
- Augmenting datasets with synthetic adversarial scenarios
- Incorporating uncertainty-aware sampling and labeling

This strengthens model resilience by making it harder to exploit blind spots.

6. What are the risks of overly relying on synthetic data?

While synthetic data is powerful, over-reliance can:

- Introduce simulation bias if not calibrated to real-world sensor characteristics
- Fail to capture unanticipated human behaviors or environmental edge cases
- Lead to overconfidence if synthetic scenarios are too “clean” or predictable

The key is to blend synthetic with real, noisy, and annotated data to maintain realism and robustness.
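One way to enforce such a blend is to cap the share of synthetic samples in every training batch. The sketch below does this with a hypothetical `mixed_batch` helper; the 25% cap and the toy sample pools are illustrative assumptions, and the right ratio would need to be validated against real-world performance.

```python
import random


def mixed_batch(real, synthetic, batch_size, max_synthetic_fraction, seed=0):
    """Draw a training batch in which synthetic samples never exceed a cap.

    The remainder of the batch is filled with real samples, so the cap
    holds even when plenty of synthetic data is available.
    """
    rng = random.Random(seed)
    n_synthetic = min(int(batch_size * max_synthetic_fraction), len(synthetic))
    n_real = batch_size - n_synthetic
    if n_real > len(real):
        raise ValueError("not enough real samples to respect the synthetic cap")
    batch = rng.sample(real, n_real) + rng.sample(synthetic, n_synthetic)
    rng.shuffle(batch)
    return batch


# Hypothetical sample pools, tagged by origin.
real_pool = [("real", i) for i in range(20)]
synthetic_pool = [("synthetic", i) for i in range(20)]
batch = mixed_batch(real_pool, synthetic_pool, batch_size=8,
                    max_synthetic_fraction=0.25)
n_syn = sum(1 for origin, _ in batch if origin == "synthetic")
print(len(batch), n_syn)  # 8 2
```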
