Comparing Prompt Engineering vs. Fine-Tuning for Gen AI

By Umang Dayal

18 Aug, 2025

Adapting large language models (LLMs) to specific business needs has become one of the most pressing challenges in the current wave of generative AI adoption. Organizations quickly discover that while off-the-shelf models are powerful, they are not always optimized for the unique vocabulary, workflows, and compliance standards of a given domain. The question then becomes how to bridge the gap between general capability and specialized performance without overextending time, budget, or technical resources.

Two primary approaches have emerged to address this challenge: prompt engineering and fine-tuning. Prompt engineering focuses on shaping model behavior through carefully crafted instructions, contextual cues, and formatting strategies. It is lightweight, flexible, and can be applied immediately, often with little to no technical overhead. Fine-tuning, in contrast, adapts the model itself by training on domain-specific or task-specific data. This approach requires more investment but yields greater stability, consistency, and alignment with specialized requirements.

Choosing between these methods is a strategic decision that involves considering cost, implementation speed, level of control, and the ability to scale reliably.

This blog explores the advantages and limitations of Prompt Engineering vs. Fine-Tuning for Gen AI, offering practical guidance on when to apply each approach and how organizations can combine them for scalable, reliable outcomes.

Understanding Prompt Engineering in Gen AI

Prompt engineering is the practice of shaping how a large language model responds by carefully designing the inputs it receives. Rather than changing the underlying model itself, prompt engineering relies on structured instructions, contextual framing, and task-specific cues to guide the output. At its core, it is about communicating with the model in a way that maximizes clarity and minimizes ambiguity.

It can be implemented quickly, often without any specialized infrastructure or datasets. Teams can iterate rapidly, testing variations of instructions to discover which phrasing yields the most reliable results. This makes prompt engineering particularly attractive during early experimentation or when working across multiple use cases, since it does not require altering the model or investing heavily in training pipelines.

However, this flexibility comes with limitations. Prompts can be fragile: small changes in wording can produce inconsistent or unintended outputs. Maintaining quality over time often requires ongoing iteration, which introduces operational overhead as applications scale. Prompts also have limited capacity to enforce deep domain knowledge or stylistic consistency, especially in areas where accuracy and reliability are critical.
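
One way to make prompt fragility visible is to run several paraphrases of the same instruction and measure how often the outputs agree. The sketch below assumes a hypothetical `call_model` function standing in for whatever LLM client a team uses; here it is stubbed with canned responses so the example runs on its own.

```python
from collections import Counter
from typing import Callable, Sequence


def agreement_rate(prompts: Sequence[str], call_model: Callable[[str], str]) -> float:
    """Share of prompt variants whose normalized output matches the most common output."""
    if not prompts:
        raise ValueError("at least one prompt variant is required")
    outputs = [call_model(p).strip().lower() for p in prompts]
    most_common_count = Counter(outputs).most_common(1)[0][1]
    return most_common_count / len(outputs)


# Hypothetical stand-in for a real LLM call: canned answers keyed by prompt.
_CANNED = {
    "Classify the sentiment of: 'Great service.'": "Positive",
    "What is the sentiment of this review? 'Great service.'": "positive",
    "Sentiment? 'Great service.'": "Mostly positive, slightly neutral",
}


def fake_model(prompt: str) -> str:
    return _CANNED[prompt]


variants = list(_CANNED)
rate = agreement_rate(variants, fake_model)
print(f"agreement across {len(variants)} variants: {rate:.2f}")
```

A low agreement rate on inputs that should have a single answer is a signal that the prompt needs tightening, for example by constraining the allowed output labels.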

Prompt engineering is therefore best viewed as a fast, cost-effective way to extract value from a general-purpose model, but not always sufficient when tasks demand precision, control, and domain-specific expertise.

When to Choose Prompt Engineering

Prompt engineering is often the first step organizations take when adopting generative AI. It provides a way to shape outputs through carefully designed instructions without altering the model itself. This approach is lightweight, accessible, and adaptable, making it well suited to scenarios where speed, flexibility, and experimentation are more important than absolute precision.

A Starting Point for Exploration and Prototyping

Prompt engineering is the most practical entry point for organizations exploring how generative AI might integrate into their workflows. By simply adjusting instructions, teams can quickly test a model’s ability to handle tasks such as summarization, drafting, or information retrieval. The process requires little upfront investment, making it ideal for early-stage exploration.

In this stage, the goal is not perfection but discovery. Teams can evaluate whether the model adds value to specific processes, identify areas of strength, and uncover limitations. Because prompts can be modified instantly, experimentation is fast and iterative. This agility allows organizations to validate ideas before deciding whether to commit resources to a more permanent solution like fine-tuning.

Flexibility Across Multiple Use Cases

Another strength of prompt engineering is its ability to adapt a single model across many tasks. With thoughtful prompt design, organizations can shift the model’s output tone, style, or level of detail depending on the situation. A single system can, for instance, provide concise bullet-point summaries in one workflow and detailed narrative explanations in another.
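
As a rough illustration, the same underlying model can be pointed at different output styles just by swapping the instruction template. The template names and wording below are hypothetical, and no model is called; the sketch only shows how the two prompts would be assembled.

```python
from string import Template

# Hypothetical templates: one model, two output styles.
TEMPLATES = {
    "bullet_summary": Template(
        "You are a concise assistant.\n"
        "Summarize the text below in at most $max_items bullet points.\n\n"
        "Text:\n$text"
    ),
    "narrative": Template(
        "You are a careful explainer.\n"
        "Explain the text below in a detailed narrative for a $audience audience.\n\n"
        "Text:\n$text"
    ),
}


def build_prompt(style: str, **fields: str) -> str:
    """Fill the chosen template; raises KeyError on an unknown style or a missing field."""
    return TEMPLATES[style].substitute(**fields)


document = "Quarterly revenue rose 8% while support tickets fell 12%."
summary_prompt = build_prompt("bullet_summary", text=document, max_items="3")
narrative_prompt = build_prompt("narrative", text=document, audience="non-technical")
print(summary_prompt)
```

Keeping templates in one place like this also makes it easier to review and version them as the number of workflows grows.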

This adaptability makes prompt engineering particularly effective for creative industries, productivity tools, or internal business functions where occasional inconsistency is not a major concern. In these contexts, the priority is responsiveness and breadth of capability rather than strict reliability. Prompt engineering gives teams the versatility they need without requiring separate models for each task.

A Low-Risk Entry Point into Customization

For organizations that are new to generative AI, prompt engineering serves as a safe and low-risk way to begin customizing model behavior. Unlike fine-tuning, which requires curated datasets and training infrastructure, prompt engineering can be implemented by non-technical teams with little more than a structured process for testing instructions.

This approach also provides valuable insights into where a model struggles. For instance, if prompts consistently fail to produce accurate results in compliance-heavy content, this signals that fine-tuning may be necessary. By starting with prompts, organizations gather evidence about performance gaps, helping them make informed decisions about whether a deeper investment in fine-tuning is warranted.
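
Gathering that evidence can be as simple as tagging each evaluated output with a task category and a pass/fail judgment, then looking for categories that fall short. The sketch below uses a made-up evaluation log and an assumed 90% pass-rate threshold; both are placeholders, not recommendations.

```python
from collections import defaultdict
from typing import Iterable, NamedTuple


class EvalResult(NamedTuple):
    category: str  # e.g. "summarization", "compliance"
    passed: bool   # whether a human or automated check accepted the output


def weak_categories(results: Iterable[EvalResult], min_pass_rate: float = 0.9) -> dict[str, float]:
    """Return categories whose pass rate falls below min_pass_rate."""
    totals: dict[str, int] = defaultdict(int)
    passes: dict[str, int] = defaultdict(int)
    for r in results:
        totals[r.category] += 1
        passes[r.category] += int(r.passed)
    rates = {c: passes[c] / totals[c] for c in totals}
    return {c: rate for c, rate in rates.items() if rate < min_pass_rate}


# Made-up evaluation log for illustration only.
log = (
    [EvalResult("summarization", True)] * 19 + [EvalResult("summarization", False)]
    + [EvalResult("compliance", True)] * 12 + [EvalResult("compliance", False)] * 8
)
gaps = weak_categories(log)
print(gaps)
```

In this toy log, summarization clears the threshold while compliance does not, which is the kind of pattern that would point toward fine-tuning for that category.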

Supporting Continuous Learning and Improvement

Prompt engineering encourages a cycle of experimentation and learning. Teams observe how small changes in instructions influence outputs, gradually building an understanding of the model’s behavior. This process not only improves results but also develops internal expertise in working with generative AI.

As organizations refine prompts, they also identify where additional data or governance might be needed. This incremental approach minimizes risk while building a foundation for more advanced customization. It allows organizations to grow their AI capabilities step by step rather than committing to large-scale projects from the outset.

Best Suited for Speed, Experimentation, and Versatility

Ultimately, prompt engineering is most effective in contexts where speed matters more than absolute precision. It empowers organizations to innovate quickly, try out multiple applications, and adapt models to diverse needs without significant investment. While it may not deliver the consistency required for regulated or mission-critical applications, it is a powerful tool for prototyping, creative exploration, and general-purpose tasks.

By leveraging prompt engineering first, organizations can harness the versatility of generative AI while keeping costs and risks under control. This makes it an essential strategy for early adoption and ongoing experimentation, even if fine-tuning becomes the preferred option later in the development lifecycle.

Understanding Fine-Tuning in Gen AI

Fine-tuning takes a different path by adapting the model itself rather than relying solely on instructions. It involves training a pre-existing large language model on additional domain-specific or task-specific data so that the model learns new patterns, vocabulary, and behaviors. The outcome is a version of the model that is more aligned with a particular use case and less dependent on carefully worded prompts to achieve consistent results.

One of the main advantages of fine-tuning is the stability it provides. Once a model has been fine-tuned, its responses tend to be more predictable, reducing the variability that often arises with prompt-based approaches. This makes it particularly valuable in scenarios where accuracy and reliability are essential, such as customer-facing applications, specialized professional services, or regulated industries. Fine-tuning also enables organizations to embed proprietary knowledge directly into the model, ensuring it reflects the language, standards, and expectations unique to that domain.

The trade-off lies in the cost and complexity of the process. Fine-tuning requires high-quality datasets that are representative of the intended tasks, along with the compute resources and expertise to train the model effectively. Ongoing governance is equally important, since poorly curated data can introduce bias, inaccuracies, or compliance risks. Additionally, a fine-tuned model can be less flexible across varied tasks, since specializing it on a narrow dataset may degrade its performance outside that scope.
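
Part of that data governance can be automated. The sketch below checks a small set of training examples for empty fields and exact duplicates before writing them out as JSON Lines. The `prompt` and `completion` field names are an assumption for illustration; the schema a given training stack expects may differ.

```python
import json
from typing import Iterable


def clean_examples(examples: Iterable[dict]) -> tuple[list[dict], list[str]]:
    """Drop records with empty fields or exact duplicates; return kept records and drop reasons."""
    kept: list[dict] = []
    problems: list[str] = []
    seen: set[tuple[str, str]] = set()
    for i, ex in enumerate(examples):
        prompt = str(ex.get("prompt", "")).strip()
        completion = str(ex.get("completion", "")).strip()
        if not prompt or not completion:
            problems.append(f"record {i}: empty prompt or completion")
            continue
        key = (prompt, completion)
        if key in seen:
            problems.append(f"record {i}: duplicate")
            continue
        seen.add(key)
        kept.append({"prompt": prompt, "completion": completion})
    return kept, problems


def to_jsonl(records: Iterable[dict]) -> str:
    """Serialize records as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)


# Made-up records for illustration only.
raw = [
    {"prompt": "Define KYC.", "completion": "Know Your Customer: identity verification checks."},
    {"prompt": "Define KYC.", "completion": "Know Your Customer: identity verification checks."},
    {"prompt": "Define AML.", "completion": ""},
]
kept, problems = clean_examples(raw)
print(to_jsonl(kept))
print(problems)
```

Checks like these catch only mechanical issues. Judging whether the data is representative and unbiased still needs human review.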

In practice, fine-tuning offers a path toward stronger control and customization, but it demands a greater upfront investment and careful oversight to ensure that the benefits outweigh the risks.

When to Choose Fine-Tuning

Fine-tuning is not always necessary, but it becomes the superior strategy when precision, consistency, and domain alignment are more important than speed or flexibility. Unlike prompt engineering, which relies on instructions to shape behavior, fine-tuning adapts the model itself, embedding knowledge and standards directly into its parameters. Below are the scenarios and reasons why fine-tuning may be the most effective approach.

High-Stakes Applications Where Errors Are Costly

Fine-tuning is particularly well-suited for environments where mistakes carry significant consequences. Customer-facing applications in regulated industries such as banking, insurance, or healthcare cannot afford inconsistent or inaccurate responses. Similarly, mission-critical tools used in legal services, compliance-driven content generation, or government communications demand reliability and adherence to strict rules.

In these scenarios, prompt engineering alone often falls short. While prompts can guide the model, they remain sensitive to wording variations and may generate unpredictable results under slightly different contexts. Fine-tuning addresses this by instilling domain-specific expertise into the model, ensuring predictable behavior across use cases. This reduces the risk of costly errors and helps maintain trust with end users.

Leveraging Proprietary Data for Competitive Advantage

Organizations that hold proprietary datasets can extract significant value from fine-tuning. By training a model on curated, domain-specific data, companies can embed knowledge that is unavailable in general-purpose models. This includes specialized terminology, workflows unique to the business, or datasets reflecting cultural or linguistic nuances.

For example, a pharmaceutical company may fine-tune a model on internal research papers to support drug discovery workflows, while a financial institution may train the model on compliance documents to ensure regulatory accuracy. Beyond improving accuracy, this process also creates differentiation. A fine-tuned model reflects expertise that competitors cannot replicate simply by adjusting prompts, providing a lasting strategic edge.

Alignment with Organizational Standards and Brand Voice

Consistency across outputs is another critical advantage of fine-tuning. Organizations often need models to reflect a specific tone, style, or set of communication guidelines. While prompt engineering can approximate these requirements, it is rarely able to enforce them with complete reliability at scale.

Fine-tuning solves this by embedding stylistic and compliance rules into the model’s parameters. A fine-tuned model can consistently generate outputs aligned with brand identity, customer communication policies, or legal standards. This uniformity is particularly important for large organizations where customer-facing content must maintain a professional, reliable image across thousands of interactions.

Long-Term Efficiency and Reduced Operational Overhead

One of the trade-offs of prompt engineering is the need for constant iteration. As applications scale, teams may spend significant time refining, testing, and updating prompt libraries to keep outputs consistent. This creates operational overhead and may slow down deployment timelines.

Fine-tuning requires a greater upfront investment in training data, compute resources, and governance processes. However, once completed, it provides long-term efficiency. The model becomes less dependent on fragile prompts, reducing the need for continuous adjustments and freeing teams to focus on higher-value innovation. Over time, this stability leads to faster scaling and lower maintenance costs.

Balancing Investment with Strategic Value

The most important consideration is whether the benefits of fine-tuning justify the investment. For smaller projects or low-stakes experimentation, the cost and complexity may not be warranted. But for organizations that prioritize accuracy, compliance, and brand consistency, fine-tuning offers a sustainable path forward.

Preparing high-quality training data, managing governance, and ensuring ethical oversight are challenges, but they also create a more reliable and trusted system. For organizations willing to make this commitment, fine-tuning provides more than just incremental improvement. It becomes a foundation for enterprise-level generative AI that can operate at scale with confidence.

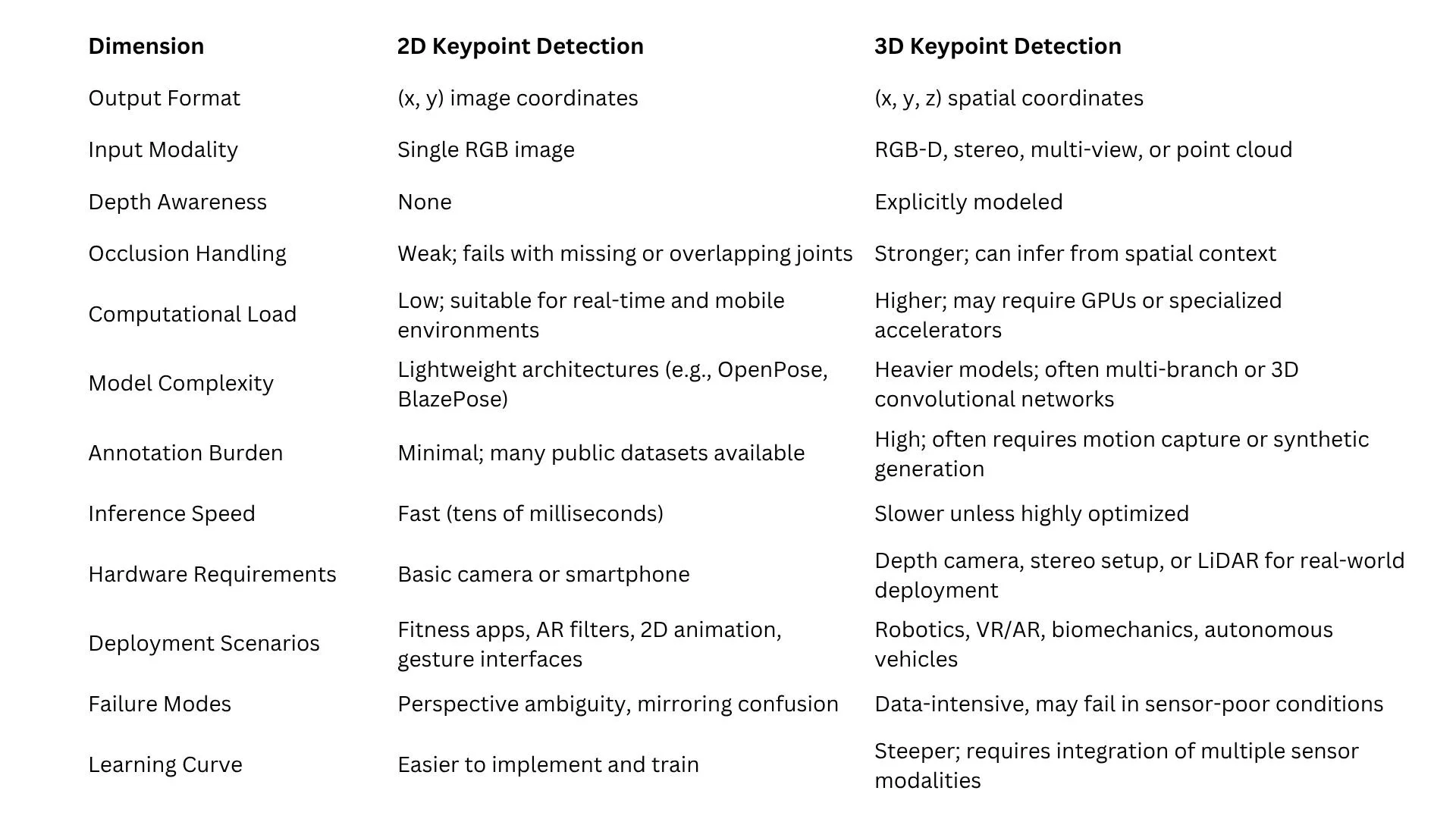

Comparing Prompt Engineering vs. Fine-Tuning

While both prompt engineering and fine-tuning aim to adapt large language models for specific needs, they differ significantly in cost, reliability, scalability, and governance. Understanding these distinctions helps organizations decide which approach best fits their goals.

Speed and Cost

Prompt engineering delivers immediate results with minimal investment. It requires little more than iterative testing and refinement of instructions, making it an accessible option for teams exploring possibilities or working within limited budgets. Fine-tuning, by contrast, demands upfront resources to prepare data, allocate compute power, and manage training cycles. Although this investment is greater, it can deliver long-term savings by reducing reliance on constant prompt adjustments.
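
The long-term trade-off can be framed as a simple break-even calculation: how many months of lower upkeep it takes to repay the one-time fine-tuning cost. All figures below are placeholders rather than measured costs; the point is only the shape of the arithmetic.

```python
import math


def break_even_months(upfront_cost: float, prompt_monthly: float, tuned_monthly: float) -> float:
    """Months until a one-time fine-tuning cost is repaid by lower monthly upkeep.

    Returns math.inf when the fine-tuned setup is not cheaper to run per month.
    """
    monthly_saving = prompt_monthly - tuned_monthly
    if monthly_saving <= 0:
        return math.inf
    return upfront_cost / monthly_saving


# Placeholder numbers for illustration only.
months = break_even_months(upfront_cost=30_000, prompt_monthly=6_000, tuned_monthly=1_000)
print(months)
```

If the break-even point lies beyond the expected lifetime of the application, staying with prompts is likely the cheaper option.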

Consistency and Reliability

Prompts can produce varying outputs depending on how instructions are phrased or how the model interprets subtle contextual shifts. This unpredictability can be manageable for experimentation but problematic in high-stakes environments. Fine-tuned models are more consistent, as the adjustments are embedded directly in the model parameters, leading to greater reliability over repeated use.

Domain Adaptation

Prompt engineering allows lightweight customization, such as shifting tone or formatting, but it struggles to capture deep expertise in technical or regulated fields. Fine-tuning, on the other hand, excels at domain adaptation. By training on curated datasets, the model internalizes specific knowledge, enabling it to perform accurately and consistently in specialized areas like healthcare, finance, or legal services.

Scalability and Maintenance

At a small scale, prompts are easy to manage. However, as applications grow, maintaining prompt libraries, testing variations, and ensuring consistent results across multiple tasks can become burdensome. Fine-tuned models require periodic retraining, but once adapted, they offer a more efficient long-term solution with reduced operational overhead.

Risk and Governance

Prompt engineering carries the risk of hidden vulnerabilities. Poorly designed prompts may inadvertently expose loopholes, generate unsafe content, or produce outputs that drift from compliance standards. Fine-tuning provides tighter control, but this comes with its own risks. The quality of the training data directly shapes model behavior, so governance around data collection, annotation, and validation becomes critical.

In summary, prompt engineering prioritizes flexibility and speed, while fine-tuning emphasizes stability and control. The choice depends on whether an organization values rapid experimentation or long-term reliability in its generative AI strategy.

Blended Approach of Fine-Tuning and Prompt Engineering

In practice, organizations rarely view prompt engineering and fine-tuning as mutually exclusive. Instead, many adopt a layered approach that leverages the strengths of both methods at different stages of development. This blended strategy allows teams to maximize flexibility during experimentation while building toward long-term stability as solutions mature.

A common workflow begins with prompt engineering. Teams use carefully structured instructions to explore what the model can achieve and identify areas where outputs fall short. This phase provides valuable insights into task complexity, data requirements, and user expectations. Once the limits of prompting are clear, fine-tuning can be introduced to address persistent gaps, embed domain knowledge, and ensure greater reliability.

Emerging techniques are making blended strategies even more practical. Parameter-efficient tuning methods, such as adapters or low-rank adaptation (LoRA), allow organizations to fine-tune models with fewer resources. These approaches reduce the cost and complexity of training while still delivering many of the benefits of customization. They serve as a bridge between lightweight prompt engineering and full fine-tuning, enabling teams to scale gradually without overcommitting resources upfront.
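
To see why LoRA needs fewer resources, consider a single weight matrix W of shape d_out x d_in. Instead of updating all of W, LoRA trains two small matrices B (d_out x r) and A (r x d_in) and uses W + (alpha / r) * B A as the effective weight. The sketch below, written in plain Python with illustrative dimensions, compares the trainable parameter counts and computes the effective weight for a tiny example.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Trainable parameters when the whole weight matrix is updated."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters for LoRA: B is d_out x rank, A is rank x d_in."""
    return rank * (d_out + d_in)


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_effective_weight(w, b, a, alpha: float):
    """Return W + (alpha / rank) * (B @ A), where rank is the number of rows of A."""
    scale = alpha / len(a)
    delta = matmul(b, a)
    return [[w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
            for w_row, d_row in zip(w, delta)]


# Illustrative dimensions, not tied to any particular model.
d_out, d_in, rank = 4096, 4096, 8
ratio = lora_params(d_out, d_in, rank) / full_params(d_out, d_in)
print(f"LoRA trains {ratio:.2%} of the parameters of this matrix")

# Tiny worked example with rank 1.
W = [[1, 0], [0, 1]]
B = [[1], [2]]
A = [[0.5, 0.0]]
effective = lora_effective_weight(W, B, A, alpha=1.0)
print(effective)
```

The original W stays frozen, so the small B and A matrices can be stored and swapped per task while sharing one base model.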

This combination of prompt iteration, evaluation, and targeted fine-tuning creates a more sustainable path for deploying generative AI. It gives organizations the ability to experiment quickly, validate ideas, and then invest in deeper model adaptation where it creates the most value. The result is a balanced strategy that keeps both short-term agility and long-term performance in focus.

How We Can Help

Adapting large language models to specific business needs requires more than just technical choices between prompt engineering and fine-tuning. Success depends on the availability of high-quality data, rigorous evaluation processes, and the ability to scale efficiently while maintaining control over accuracy and compliance. This is where Digital Divide Data (DDD) plays a critical role.

DDD specializes in building and curating domain-specific datasets that form the foundation for effective fine-tuning. Our teams ensure that training data is accurate, representative, and free from inconsistencies that could undermine model performance. By combining data preparation with human-in-the-loop validation, we help organizations create models that are not only smarter but also more trustworthy.

We also support organizations in the earlier stages of model development, where prompt engineering is often the primary focus. DDD helps design structured evaluation frameworks to test prompt effectiveness, reduce brittleness, and improve consistency. This allows teams to maximize the value of prompt engineering before deciding whether fine-tuning is necessary.

Whether your organization is just experimenting with generative AI or preparing for enterprise-grade deployment, DDD provides the end-to-end support needed to move from exploration to production with confidence.

Conclusion

The decision to rely on prompt engineering or fine-tuning should not be seen as an either-or choice. Both approaches offer unique strengths, and together they provide a complete toolkit for adapting generative AI models to practical business needs. Prompt engineering excels as the first step because it is fast, inexpensive, and highly adaptable. It allows teams to experiment quickly, validate ideas, and uncover where models succeed or struggle. For organizations that are still exploring how generative AI fits into their workflows, prompt engineering offers a low-risk way to test possibilities without committing significant resources.

For most organizations, the most effective strategy is a combination approach. Starting with prompts offers speed and flexibility, while targeted fine-tuning addresses the gaps that prompts alone cannot close. Parameter-efficient methods such as adapters and LoRA have made this combined approach even more practical, reducing the cost and complexity of customization while retaining its benefits. By treating prompt engineering and fine-tuning as complementary rather than competing, organizations can remain agile in the short term while building systems that deliver stable, reliable performance over time.

The key is recognizing that both strategies are tools in the same toolbox, each designed to solve different aspects of the challenge of adapting large language models to real-world applications.

Ready to take the next step in your generative AI journey? Partner with Digital Divide Data to design, evaluate, and scale solutions that combine the agility of prompt engineering with the reliability of fine-tuning.

Frequently Asked Questions (FAQs)

Can prompt engineering and fine-tuning improve each other?

Yes. Well-designed prompts can highlight where fine-tuning will provide the most benefit. Similarly, once a model is fine-tuned, prompts can still be used to adjust its outputs at request time, such as changing tone, length, or style for different audiences.

How do organizations decide when to transition from prompting to fine-tuning?

The transition usually happens when prompts no longer deliver reliable or efficient results. If teams find themselves creating large prompt libraries, spending significant time on trial and error, or needing consistency in a high-stakes environment, fine-tuning often becomes the more sustainable path.

Are there risks in over-relying on fine-tuning?

Yes. Over-tuning a model to one dataset can make it less flexible, causing it to underperform on tasks outside that scope. It can also amplify biases present in the training data. Ongoing governance and balanced data selection are essential to avoid these issues.

What role does human oversight play in both methods?

Human oversight is critical for both approaches. With prompts, humans validate whether outputs meet expectations and refine instructions accordingly. With fine-tuning, humans ensure the data used is accurate, representative, and free from bias. In both cases, human-in-the-loop processes safeguard quality and trust.

Can small organizations benefit from fine-tuning, or is it only for large enterprises?

Small and mid-sized organizations can benefit as well, especially with the rise of parameter-efficient techniques such as LoRA. These approaches reduce the cost of training while making it possible to tailor models to specific business needs without requiring enterprise-scale infrastructure.
