How Synthetic Data Accelerates Training in Defense Tech

3 Sep, 2025

Artificial intelligence has become a cornerstone of defense tech, shaping how militaries analyze intelligence, plan missions, and operate autonomous systems. The ability of AI to process vast amounts of information faster than human analysts creates a decisive edge in contested environments. From identifying hidden threats in complex sensor data to guiding unmanned vehicles through hostile terrain, defense applications increasingly depend on the quality of the data used to train and validate these systems.

Yet data itself has become a strategic bottleneck. Collecting military datasets is expensive, time-consuming, and often constrained by security classifications. Many critical scenarios, such as rare adversarial tactics or extreme weather conditions, occur so infrequently that gathering enough real-world examples is nearly impossible. These challenges slow down the pace of AI development at a time when defense organizations are under pressure to innovate rapidly.

Synthetic data has emerged as a practical solution to this challenge. Generated through simulations, physics-based models, or advanced generative AI techniques, synthetic data provides the diversity and scale required to train robust military AI without exposing classified raw information.

In this post, we explore how synthetic data accelerates training in defense tech by addressing data challenges, expanding applications across domains, and preparing AI systems for future operational demands.

The Data Challenges in Defense Tech

Building effective military AI systems depends on large volumes of high-quality data, yet defense organizations face unique obstacles that make this requirement difficult to meet. Unlike many commercial applications, where data is often abundant and easy to access, military contexts are defined by secrecy, scarcity, and operational complexity. These conditions create barriers that slow down development cycles and limit the performance of deployed systems.

One of the most significant constraints is the strict security environment in which defense data is generated and stored. Intelligence and surveillance outputs are often classified, which restricts how they can be shared or reused across different units or allied nations. This siloed approach protects sensitive information but also prevents researchers and developers from accessing the breadth of data required for advanced AI training.

Another challenge is the rarity of edge cases. Many of the scenarios that military AI systems must learn to handle, such as detecting concealed threats, operating in extreme weather, or responding to unconventional tactics, occur infrequently in real-world operations. This lack of representation means that training datasets tend to be biased toward common and predictable patterns, leaving AI models underprepared for the unexpected.

The cost and logistics of data collection add further complexity. Gathering real-world sensor data requires field exercises, deployment of specialized equipment, or flight operations, each of which involves significant time and financial resources. In addition, annotating this data for training purposes is labor-intensive and often demands domain-specific expertise, compounding the expense.

Synthetic Data in Defense Tech

Synthetic data addresses the core limitations of real-world military datasets by creating scalable, secure, and flexible alternatives. Rather than relying exclusively on data collected during operations or training exercises, defense organizations can now generate large volumes of artificial data tailored to the needs of AI development. This shift not only accelerates the pace of training but also expands the scope of what AI systems can be prepared to handle.

There are several approaches to producing synthetic data. Simulation-based methods model operational environments such as battlefields, urban terrain, or maritime zones, enabling AI to learn from realistic but controlled scenarios. Physics-based approaches replicate the behavior of sensors like radar or infrared systems, ensuring that outputs are consistent with how equipment performs in the field. Generative AI techniques further enrich these methods by creating lifelike imagery, signals, or environmental variations that expand the diversity of training sets. Hybrid workflows, which combine multiple approaches, are increasingly used to balance realism, variability, and efficiency.

Scalability

With the right tools, defense teams can generate millions of samples in a fraction of the time and cost required for field collection. This allows AI models to be trained on balanced datasets that include both common and rare events, reducing the risk of blind spots in deployment.
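As a rough illustration of the balancing idea above, the sketch below computes how many synthetic samples each class would need so that rare events match the most common one. The class names and counts are hypothetical, and `synthetic_quota` is an illustrative helper, not part of any specific toolchain.

```python
from collections import Counter


def synthetic_quota(labels, target_per_class=None):
    """Return how many synthetic samples each class needs to reach the target count."""
    counts = Counter(labels)
    # Default target (an assumption of this sketch): match the most common class.
    target = target_per_class if target_per_class is not None else max(counts.values())
    return {cls: max(0, target - n) for cls, n in counts.items()}


# Hypothetical field-collected labels: rare events are badly underrepresented.
real_labels = ["clear_sky"] * 900 + ["sandstorm"] * 80 + ["concealed_target"] * 20

quota = synthetic_quota(real_labels)
print(quota)  # {'clear_sky': 0, 'sandstorm': 820, 'concealed_target': 880}
```

In practice the target would likely be tuned per class rather than set to full parity, since the right mix depends on how the model is evaluated.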

Security

By training AI systems on synthetic datasets that do not contain sensitive or classified information, organizations can share resources across teams and even with allies while maintaining strict data protection standards. This makes it possible to pursue collaborative defense AI projects without compromising national security.

Flexibility

Defense organizations can tailor datasets to specific mission profiles, whether preparing systems for desert operations, maritime surveillance, or contested electromagnetic environments. This adaptability ensures that AI models are not just effective in general conditions but are also fine-tuned for the unique demands of each operational theater.

Applications Across Military Domains

The impact of synthetic data in defense becomes most evident when examining its applications across various operational domains. By providing scalable and realistic training inputs, synthetic datasets enhance the performance of AI systems that are central to modern military missions.

Intelligence, Surveillance, and Reconnaissance (ISR):

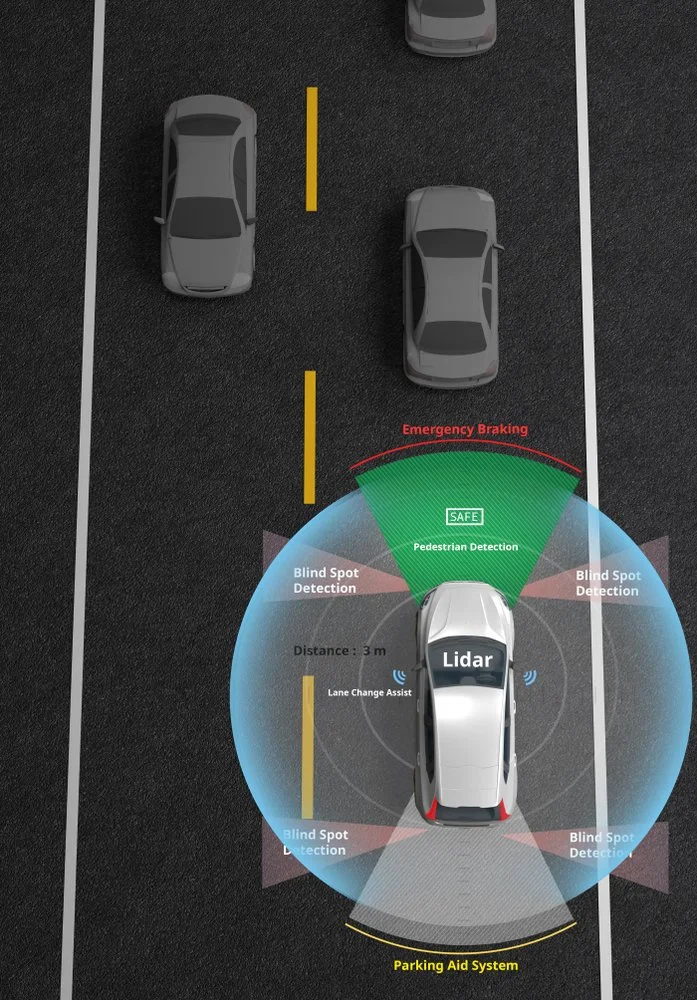

Synthetic data strengthens computer vision models used in analyzing imagery from electro-optical, infrared, and synthetic aperture radar sensors. These systems often operate in environments with limited visibility or under adversary countermeasures, where real-world examples are scarce. Synthetic datasets can replicate diverse conditions, such as nighttime operations, cluttered urban settings, or obscured targets, improving recognition accuracy and reliability.

Radar and RF Spectrum Analysis:

Modern battlefields are defined by contested electromagnetic environments where signals can be disrupted, masked, or intentionally manipulated. Training AI to distinguish legitimate signals from interference requires exposure to a wide variety of scenarios. Synthetic RF and radar data can generate those conditions at scale, enabling AI systems to identify and classify signals more effectively while preparing for adversarial tactics.

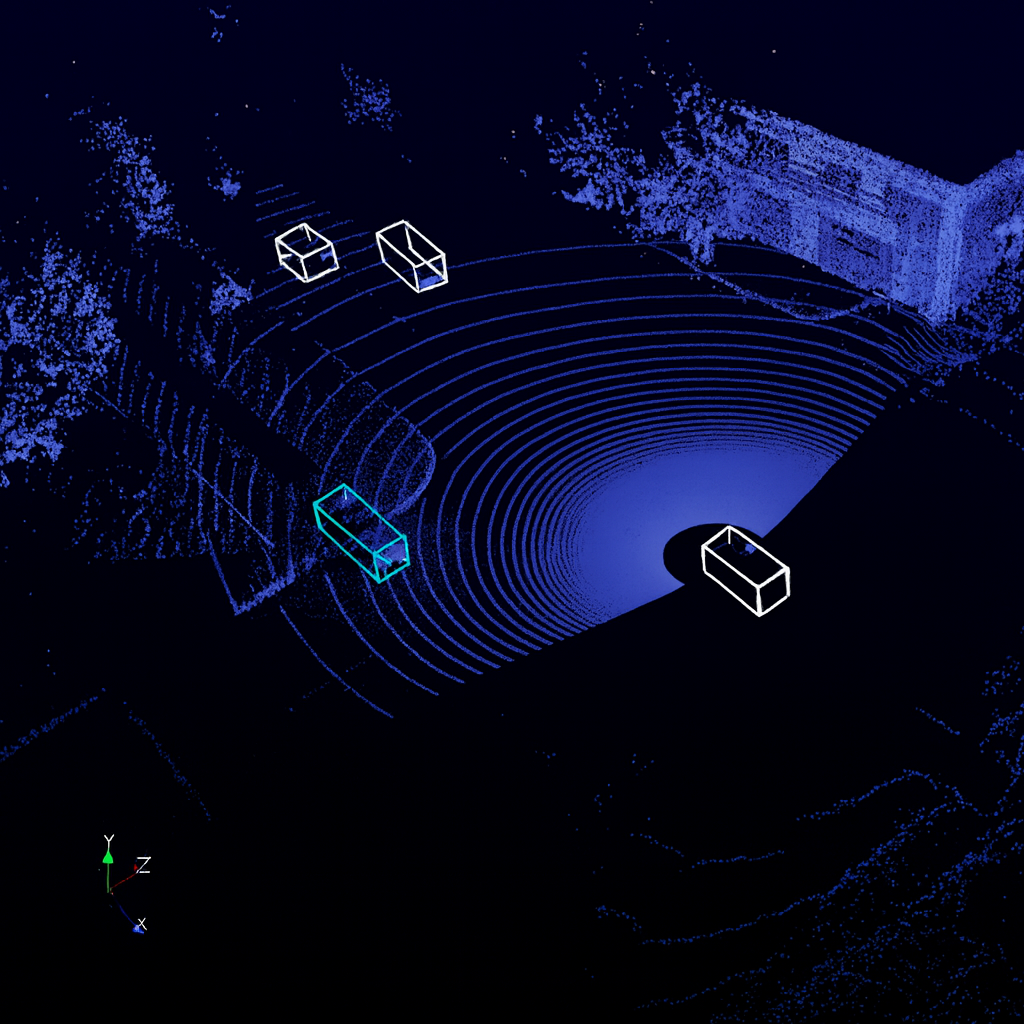
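To make the contested-spectrum idea more concrete, here is a minimal sketch of generating labeled RF-like samples: a sine carrier with Gaussian noise at a chosen signal-to-noise ratio, plus an optional interfering tone standing in for a jammer. Every parameter name and value is an assumption made for illustration. Real RF simulation would model modulation, propagation, and hardware effects that this toy omits.

```python
import math
import random


def synth_rf_sample(n=1024, fs=1000.0, carrier_hz=50.0, snr_db=10.0,
                    jammer_hz=None, jammer_to_signal_db=0.0, rng=None):
    """Return (samples, label) for a toy carrier with noise and optional tone jamming."""
    rng = rng or random.Random()
    t = [i / fs for i in range(n)]
    signal = [math.sin(2 * math.pi * carrier_hz * ti) for ti in t]

    # Scale the noise so that signal power / noise power matches the requested SNR.
    sig_power = sum(s * s for s in signal) / n
    noise_std = math.sqrt(sig_power / (10 ** (snr_db / 10)))
    samples = [s + rng.gauss(0.0, noise_std) for s in signal]

    if jammer_hz is not None:
        # A tone of amplitude A has average power A^2 / 2, so solve for A.
        amp = math.sqrt(2 * sig_power * 10 ** (jammer_to_signal_db / 10))
        phase = rng.uniform(0.0, 2 * math.pi)
        samples = [x + amp * math.sin(2 * math.pi * jammer_hz * ti + phase)
                   for x, ti in zip(samples, t)]

    label = "jammed" if jammer_hz is not None else "clean"
    return samples, label


# Seeded generators keep the example reproducible.
clean, clean_label = synth_rf_sample(rng=random.Random(0))
jammed, jammed_label = synth_rf_sample(jammer_hz=120.0, jammer_to_signal_db=6.0,
                                       rng=random.Random(1))
print(clean_label, jammed_label, len(clean), len(jammed))
```

Sweeping `snr_db`, `jammer_hz`, and `jammer_to_signal_db` over ranges is one simple way to produce the variety of interference conditions the section describes.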

Autonomous Systems:

Unmanned aerial vehicles, ground robots, and maritime platforms depend on AI for navigation and decision-making in unpredictable conditions. Synthetic datasets allow these systems to be trained on diverse terrains, weather conditions, and threat scenarios without risking expensive equipment or personnel during live testing. The result is more resilient autonomy in environments where reliability is mission-critical.

Wargaming and Simulation:

Synthetic environments also play a crucial role in strategic decision-making. By creating artificial battle scenarios, commanders and analysts can test how AI-enabled systems might perform in various conflict settings. These simulations provide valuable insights into operational readiness and help refine strategies without the risks or costs of large-scale exercises.

Accelerating Training Cycles in Defense Tech

One of the most powerful advantages of synthetic data in defense is its ability to compress the time required to develop and deploy AI systems. Traditional military AI projects often face extended cycles of data collection, data annotation, model training, and field validation. Synthetic datasets streamline these steps, allowing teams to move from prototype to deployment at a much faster pace.

Rapid prototyping: Synthetic data enables AI teams to start building models without waiting for new data collection campaigns. With configurable simulators and generative tools, developers can quickly produce datasets that replicate the operational conditions of interest. This accelerates early experimentation and helps identify promising approaches sooner.

Domain randomization: Real-world environments are inherently unpredictable. Domain randomization techniques introduce controlled variation into synthetic datasets, exposing AI systems to a wide range of conditions such as shifting lighting, weather, terrain, or signal interference. By training on these diverse examples, models are better equipped to generalize to unseen situations.
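A minimal sketch of domain randomization might look like the following: each synthetic scene draws its lighting, weather, terrain, and sensor noise from broad ranges before rendering. The `SceneParams` fields and their ranges are hypothetical, and the renderer or simulator that would consume them is assumed rather than shown.

```python
import random
from dataclasses import dataclass


@dataclass
class SceneParams:
    """Hypothetical knobs a simulator might expose for one synthetic scene."""
    sun_elevation_deg: float
    fog_density: float
    terrain: str
    sensor_noise_std: float


TERRAINS = ("desert", "urban", "forest", "maritime")


def sample_scene(rng):
    """Draw one randomized scene configuration from broad, assumed ranges."""
    return SceneParams(
        sun_elevation_deg=rng.uniform(-10.0, 90.0),  # below horizon to overhead
        fog_density=rng.uniform(0.0, 1.0),
        terrain=rng.choice(TERRAINS),
        sensor_noise_std=rng.uniform(0.0, 0.1),
    )


rng = random.Random(42)  # seeded so the dataset can be regenerated exactly
scenes = [sample_scene(rng) for _ in range(1000)]
print(scenes[0])
```

Seeding the generator is a deliberate choice here: it keeps a randomized dataset reproducible, which helps when a model failure needs to be traced back to specific scene settings.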

Bridging the sim-to-real gap: While synthetic data is powerful, it works best when paired with smaller sets of real-world data. Combining the two allows models to benefit from the scale and diversity of synthetic datasets while grounding them in operational realities. This hybrid approach reduces the gap between training performance and field performance.
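One simple way to combine the two sources, sketched below under stated assumptions, is to build each training batch from a fixed fraction of real samples and fill the rest with synthetic ones. The `mixed_batches` helper and the 25% real fraction are illustrative choices, not a recommended setting.

```python
import random


def mixed_batches(real, synthetic, batch_size=8, real_fraction=0.25, rng=None):
    """Yield batches holding a fixed share of real samples, the rest synthetic."""
    rng = rng or random.Random()
    n_real = max(1, round(batch_size * real_fraction))
    n_syn = batch_size - n_real
    while True:
        # Sampling with replacement lets a small real set appear in every batch.
        batch = rng.choices(real, k=n_real) + rng.choices(synthetic, k=n_syn)
        rng.shuffle(batch)
        yield batch


# Hypothetical datasets: a small real set and a much larger synthetic one.
real_data = [("real", i) for i in range(50)]
synthetic_data = [("syn", i) for i in range(5000)]

batches = mixed_batches(real_data, synthetic_data, rng=random.Random(7))
first_batch = next(batches)
print(sum(1 for source, _ in first_batch if source == "real"), "real samples of", len(first_batch))
```

Because the real set is sampled with replacement, its examples repeat far more often than synthetic ones. That is the intended grounding effect, though it also means the real subset should be held out carefully from any validation data.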

Continuous updates: Defense environments and adversary tactics evolve rapidly. Synthetic data pipelines allow for continuous refresh of training datasets, ensuring that AI systems can adapt without the delays associated with large-scale field data collection. This makes it possible to maintain operational relevance and resilience over time.

Risks and Limitations of Synthetic Data

While synthetic data offers transformative advantages for military AI, it is not without challenges. To realize its full potential, defense organizations must recognize and address the risks that come with relying on artificial datasets.

Fidelity challenges:

Synthetic data is only as good as the models and methods used to generate it. Poorly constructed simulations or generative tools may introduce unrealistic artifacts, leading AI systems to learn patterns that do not exist in real-world conditions. Models can then overfit to those artifacts, which undermines operational reliability if the problem is not caught and managed.

Validation needs:

No synthetic dataset can completely replace the ground truth offered by real-world data. AI models trained on synthetic examples must still be validated against real operational datasets to confirm accuracy and resilience. Without rigorous benchmarking, there is a danger of deploying systems that perform well in synthetic environments but fail in live scenarios.
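The benchmarking step described above can be sketched as a comparison of accuracy on a synthetic validation set against accuracy on a held-out real one, flagging the model when the drop exceeds a tolerance. The predictions, labels, and the 0.05 threshold below are made-up values for illustration. A real program would choose its own metrics and acceptance criteria.

```python
def accuracy(predictions, labels):
    """Fraction of predictions that match the labels."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    if not labels:
        raise ValueError("cannot compute accuracy on an empty set")
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


def sim_to_real_gap(syn_preds, syn_labels, real_preds, real_labels, max_gap=0.05):
    """Compare synthetic and real validation accuracy against an assumed tolerance."""
    syn_acc = accuracy(syn_preds, syn_labels)
    real_acc = accuracy(real_preds, real_labels)
    gap = syn_acc - real_acc
    return {
        "synthetic_accuracy": syn_acc,
        "real_accuracy": real_acc,
        "gap": gap,
        "passes": gap <= max_gap,
    }


# Hypothetical binary predictions from the same model on two validation sets.
syn_labels = [1, 0, 1, 1, 0, 1, 0, 0, 1, 1]
syn_preds = [1, 0, 1, 1, 0, 1, 0, 0, 1, 0]
real_labels = [1, 0, 1, 1, 0, 1, 0, 0, 1, 1]
real_preds = [1, 1, 1, 0, 0, 1, 0, 0, 1, 0]

report = sim_to_real_gap(syn_preds, syn_labels, real_preds, real_labels)
print(report)
```

A failing check like this one does not say why the model degrades on real data. It only signals that the synthetic results should not be trusted on their own.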

Ethical and legal concerns:

Synthetic data also raises questions about oversight and governance. Defense applications inherently involve dual-use technologies that could be applied outside military contexts. Ensuring that synthetic data generation and use remain aligned with ethical standards and international regulations is essential to maintaining legitimacy and trust.

Resource balance:

Synthetic data is a powerful complement to real-world data, but it should not be seen as a replacement. Deciding when to use synthetic inputs and when to invest in collecting real examples requires careful judgment. An overreliance on synthetic sources may reduce exposure to the nuances and unpredictability of real operational conditions.

The Road Ahead

The role of synthetic data in military AI is still evolving, but its trajectory points toward deeper integration into defense innovation pipelines. As both threats and technologies advance, synthetic data will become an indispensable element in ensuring that AI systems remain adaptable, resilient, and ready for deployment.

Integration with digital twins

Defense organizations are moving toward creating comprehensive digital twins of operational environments. These digital replicas can be used to model entire battlefields, fleets, or supply chains, generating continuous streams of synthetic data for AI training. This approach provides a closed-loop system where data, models, and operational insights are constantly refined together.

Advances in generative AI

Generative models are making synthetic datasets increasingly realistic and diverse. With the ability to mimic complex environments, adversary tactics, and multi-modal sensor outputs, generative AI helps training data capture more of the unpredictability of modern conflict. These advances narrow the gap between simulated and real-world conditions, which in turn makes AI systems easier to trust.

Policy and standardization efforts

As synthetic data becomes more prominent, defense alliances are investing in frameworks to ensure consistency and interoperability. NATO and European partners are working toward standardizing synthetic training environments, while US initiatives focus on aligning government, industry, and research communities. These policies will help set benchmarks for quality, security, and ethical use.

A vision of adaptability

Looking ahead, synthetic data has the potential to redefine how military AI evolves. Instead of waiting months or years for new datasets, defense teams can adapt AI systems on demand as adversaries develop new strategies. This adaptability could shift the balance of technological advantage, allowing militaries to innovate at the pace of conflict.

How DDD Can Help

At Digital Divide Data (DDD), we understand that synthetic data alone does not guarantee effective AI in defense tech. The true value comes from how it is generated, validated, and integrated into mission-ready systems. Our expertise lies in building high-quality data pipelines that make synthetic data usable and reliable for defense applications.

By combining technical expertise with operational scalability, DDD helps defense organizations unlock the full potential of synthetic data. Our role is to ensure that synthetic datasets are not just abundant but also trustworthy, secure, and mission-ready.

Conclusion

Synthetic data is rapidly becoming more than just a tool for supplementing military AI. It is emerging as a strategic accelerator that addresses some of the most pressing challenges in defense innovation. By enabling scalable data generation, reducing reliance on sensitive or classified material, and preparing systems for rare and unpredictable scenarios, synthetic data empowers defense organizations to build AI that is both adaptable and resilient.

As defense organizations continue to modernize, the integration of synthetic ecosystems will shape the future of military AI. Those who invest in secure, scalable, and high-quality synthetic data pipelines today will be better positioned to respond to tomorrow’s challenges.

Embracing synthetic data is not simply a matter of efficiency. It is a matter of ensuring that military AI systems are prepared to operate effectively in the environments where they are needed most.

Partner with DDD to build secure, scalable, and high-quality synthetic data pipelines that power next-generation military AI.

FAQs

Q1. How does synthetic data differ from classified training data in terms of security?

Synthetic data can be generated without exposing sensitive details, which makes it far easier to share across teams or with allied nations than classified datasets, which must remain restricted.

Q2. Can synthetic data replace live training exercises?

No. While it can supplement and accelerate AI training, live exercises remain essential for validation and for testing the human-machine interface in real operational conditions.

Q3. What role does synthetic data play in electronic warfare?

It can generate diverse and contested spectrum scenarios, helping AI systems learn to recognize and adapt to adversarial jamming or deceptive signal tactics.

Q4. Is synthetic data equally valuable for small defense contractors as it is for large programs?

Yes. Smaller contractors benefit from faster prototyping and reduced costs by using synthetic datasets to train AI systems before moving into costly field trials.

Q5. How quickly can synthetic datasets be updated to reflect evolving threats?

With the right tools, synthetic pipelines can generate new datasets in weeks or even days, ensuring that AI models remain relevant as adversary tactics change.
