3D LiDAR Data Annotation: What Precision Actually Demands

The consequences of getting LiDAR annotation wrong propagate directly into perception model failures. A bounding box that is too loose teaches the model an inflated estimate of object size. A box placed two frames late on a decelerating vehicle teaches the model incorrect velocity dynamics.

A pedestrian annotated as fully absent because occlusion made it difficult to label leaves the model with no training signal for one of the most safety-critical object categories. These are not edge cases in production LiDAR annotation programs. They are systematic failure modes that require specific annotation discipline and quality assurance infrastructure to prevent.

This blog examines what 3D LiDAR annotation precision actually demands, from the annotation task types and their quality requirements to the specific challenges of occlusion, sparsity, weather degradation, and temporal consistency. 3D LiDAR data annotation and multisensor fusion data services are the two annotation capabilities where Physical AI perception quality is most directly determined.

Key Takeaways

- 3D LiDAR annotation requires spatial precision in all three dimensions simultaneously; positional errors that would be tolerable in a 2D bounding box produce systematic model failures when they occur in point cloud annotations.

- Temporal consistency across frames is a distinct annotation requirement for LiDAR: frame-to-frame box size fluctuations and incorrect object tracking IDs teach models incorrect velocity and motion dynamics.

- Occluded and partially visible objects must be annotated with predicted geometry based on contextual inference, not simply omitted; omission produces models that miss objects whenever occlusion occurs.

- Weather conditions, including rain, fog, and snow, degrade point cloud quality and introduce false returns, requiring annotators with the expertise to distinguish genuine objects from environmental artifacts.

- Camera-LiDAR fusion annotation requires cross-modal consistency that single-modality QA does not check; an object correctly labeled in one modality but incorrectly in the other produces a conflicting training signal.

What LiDAR Produces and Why It Requires Different Annotation Skills

Point Clouds: Structure, Density, and the Annotator’s Challenge

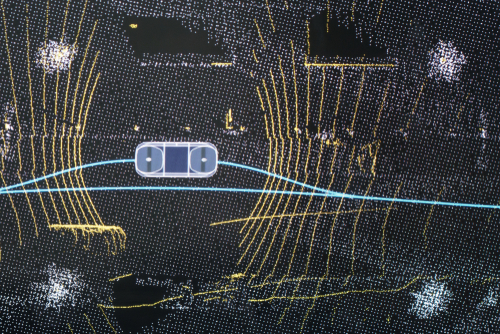

A LiDAR sensor emits laser pulses and measures the time each takes to return from a surface, building a three-dimensional map of the surrounding environment expressed as a set of x, y, z coordinates. Each point carries a position and typically a reflectance intensity value. The resulting point cloud has no inherent pixel grid, no colour information, and no fixed spatial resolution. Point density on an object varies with its distance from the sensor: objects close to the vehicle may be represented by thousands of points, while an object at 80 metres may be represented by only a handful.
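The time-of-flight principle described above can be sketched in a few lines. This is a minimal illustration, not any particular sensor's driver: the function names and the axis convention (x forward, y left, z up) are assumptions made for the example.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def tof_to_range(round_trip_s: float) -> float:
    """Range in metres from a round-trip time of flight.

    The pulse travels to the surface and back, so the distance is halved.
    """
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def polar_to_xyz(range_m: float, azimuth_rad: float, elevation_rad: float):
    """Convert one return to sensor-frame x, y, z.

    Assumed convention (hypothetical): x forward, y left, z up.
    """
    horizontal = range_m * math.cos(elevation_rad)
    x = horizontal * math.cos(azimuth_rad)
    y = horizontal * math.sin(azimuth_rad)
    z = range_m * math.sin(elevation_rad)
    return (x, y, z)


# A pulse that returns after roughly 534 nanoseconds hit something about 80 m away.
round_trip = 2 * 80.0 / SPEED_OF_LIGHT_M_S
print(tof_to_range(round_trip), polar_to_xyz(80.0, 0.0, 0.0))
```

Real sensors add per-beam calibration and motion compensation on top of this, which is why raw points are rarely annotated without preprocessing.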

Annotators working with point clouds must navigate a three-dimensional space using software tools that allow rotation and zoom through the data, typically combining top-down, front-facing, and side-facing views simultaneously. Identifying an object’s boundaries requires understanding its three-dimensional geometry, not its visual appearance. The skills required are closer to spatial reasoning under geometric constraints than to the visual pattern recognition that image annotation demands, and the onboarding time for LiDAR annotation teams reflects this difference.

Why Point Cloud Data Is Not Just Another Image Format

Image annotation tools and workflows are not transferable to point cloud annotation without significant modification. The quality dimensions that matter are different: in image annotation, boundary placement accuracy is measured in pixels. In LiDAR annotation, it is measured in centimetres across three spatial axes simultaneously, and errors in any axis affect the model’s learned representation of object size, position, and orientation.

The model architectures trained on LiDAR data, including voxel-based, pillar-based, and point-based processing networks, are sensitive to annotation precision in ways that differ from convolutional image models. The relationship between annotation quality and computer vision model performance is more direct and more spatially specific in LiDAR contexts than in standard image annotation.

Annotation Task Types and Their Precision Requirements

3D Bounding Boxes: The Core Task and Its Constraints

Three-dimensional bounding boxes, also called cuboids or 3D boxes, are the primary annotation type for object detection in LiDAR point clouds. A well-placed 3D bounding box encloses all points belonging to the object while excluding points from the surrounding environment, with the box oriented to match the object’s heading direction. The precision requirements are demanding: box dimensions should reflect the actual physical size of the object, not the extent of visible points, which means annotators must infer full geometry for partially visible or occluded objects.

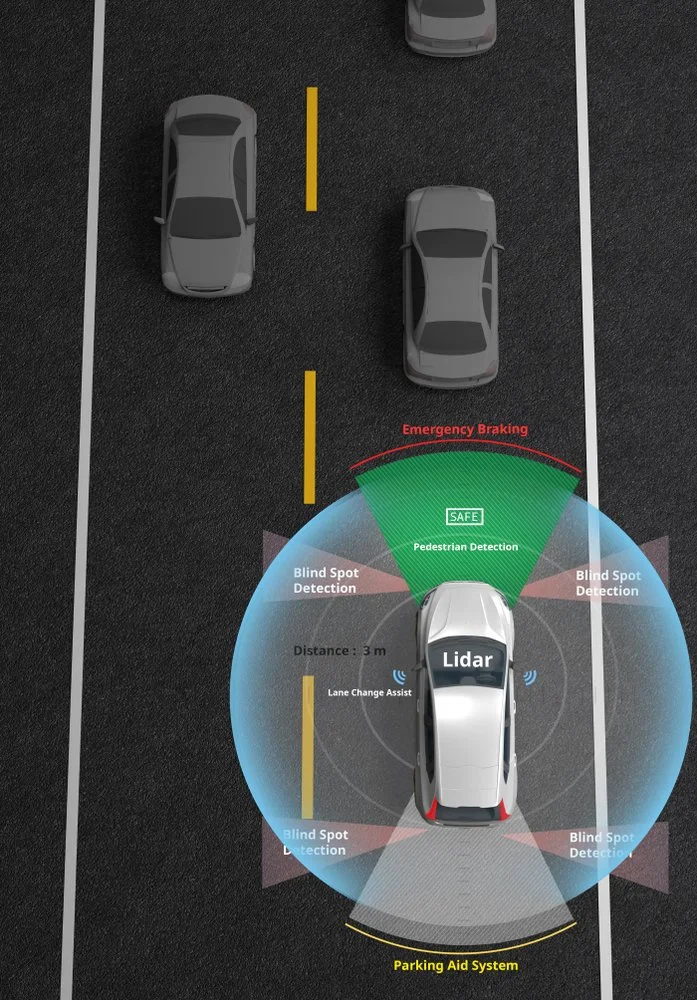

Orientation accuracy matters because the model uses heading direction for trajectory prediction and path planning. ADAS data services for safety-critical functions require 3D bounding box annotation at the precision standard set by the safety requirements of the specific perception function being trained, not a generic commercial annotation standard.
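The enclosure and heading requirements above can be made concrete with a small sketch. The `Box3D` fields and helper names are hypothetical, chosen for illustration; annotation tools and datasets each define their own box schema.

```python
import math
from dataclasses import dataclass


@dataclass
class Box3D:
    # Hypothetical schema: centre in metres, yaw in radians about the z axis.
    cx: float
    cy: float
    cz: float
    length: float
    width: float
    height: float
    yaw: float


def contains(box: Box3D, point) -> bool:
    """True if the point lies inside the oriented box."""
    dx, dy, dz = point[0] - box.cx, point[1] - box.cy, point[2] - box.cz
    cos_y, sin_y = math.cos(box.yaw), math.sin(box.yaw)
    # Rotate the offset by -yaw so it is expressed in the box's own frame.
    local_x = dx * cos_y + dy * sin_y
    local_y = -dx * sin_y + dy * cos_y
    return (
        abs(local_x) <= box.length / 2
        and abs(local_y) <= box.width / 2
        and abs(dz) <= box.height / 2
    )


def count_inside(box: Box3D, points) -> int:
    """Number of points the box encloses."""
    return sum(contains(box, p) for p in points)


# The same point is inside or outside depending only on the heading.
point = (0.0, 1.5, 0.0)
along_x = Box3D(0, 0, 0, length=4.0, width=2.0, height=1.5, yaw=0.0)
along_y = Box3D(0, 0, 0, length=4.0, width=2.0, height=1.5, yaw=math.pi / 2)
print(contains(along_x, point), contains(along_y, point))
```

A check like this is one way a QA step might flag boxes that leave object points outside or pull in ground points, though it cannot by itself tell whether the box matches the true physical size of an occluded object.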

Semantic Segmentation: Classifying Every Point

LiDAR semantic segmentation assigns a class label to every point in the cloud, distinguishing road surface from sidewalk, building from vegetation, and vehicle from pedestrian at the point level. The precision requirement is higher than for bounding box annotation because every point contributes to the model’s learned class boundaries. Boundary regions between classes, where a road surface meets a kerb or where a vehicle body meets its shadow on the ground, are the areas where annotator judgment is most consequential and where inter-annotator disagreement is most likely. Annotation guidelines for semantic segmentation need to be specific about boundary point treatment, not just about object class definitions.

Instance Segmentation and Object Tracking

Instance segmentation distinguishes between individual objects of the same class, assigning unique instance identifiers to each car, each pedestrian, and each cyclist in a scene. It is the annotation type required for multi-object tracking, where the model must maintain the identity of each object across successive frames as the vehicle moves. Tracking annotation requires that each object receive the same identifier across every frame in which it appears, and that the identifier is consistent even when the object is temporarily occluded and reappears.

Maintaining this consistency across large annotation datasets requires systematic quality assurance that checks identifier continuity, not just frame-level box accuracy. Sensor data annotation at the quality level required for tracking-capable perception models requires this cross-frame consistency checking as a structural component of the QA workflow.
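An identifier continuity check of the kind described above might look like the following sketch. The input layout (one dictionary of track identifier to class label per frame) and the issue names are assumptions for illustration, not a standard format.

```python
def find_identity_issues(frames, max_gap=0):
    """Flag suspicious identifier behaviour across a sequence.

    frames: list of dicts {track_id: class_label}, one per frame (assumed layout).
    max_gap: number of consecutive missing frames tolerated before a
             reappearing identifier is sent for review.
    """
    last_seen = {}  # track_id -> (frame_index, class_label)
    issues = []
    for index, frame in enumerate(frames):
        for track_id, label in frame.items():
            if track_id in last_seen:
                prev_index, prev_label = last_seen[track_id]
                if label != prev_label:
                    # Same identifier, different class: likely a reassignment error.
                    issues.append((index, track_id, "class_changed"))
                if index - prev_index - 1 > max_gap:
                    # Identifier vanished and came back: confirm it is the same object.
                    issues.append((index, track_id, "gap_needs_review"))
            last_seen[track_id] = (index, label)
    return issues


sequence = [
    {1: "car", 2: "pedestrian"},
    {1: "car"},
    {1: "car", 2: "cyclist"},
]
print(find_identity_issues(sequence))
```

A gap is not necessarily an error, since occluded objects legitimately disappear and return; the point of the flag is to route those cases to a reviewer rather than to reject them automatically.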

The Occlusion Problem: Annotating What Cannot Be Seen

Why Occlusion Cannot Simply Be Ignored

Occlusion is the most common source of annotation difficulty in LiDAR data. A pedestrian partially hidden behind a parked car, a cyclist whose lower body is obscured by road furniture, a truck whose rear is out of the sensor’s direct line of sight: these are not rare scenarios. They are the normal condition in dense urban traffic. Annotators who respond to occlusion by omitting the occluded object or reducing the bounding box to cover only visible points produce training data that teaches the model to be uncertain about or to miss objects whenever occlusion occurs. In a deployed autonomous driving system, this produces exactly the failure mode in dense traffic that is most dangerous.

Predictive Annotation for Occluded Objects

The correct annotation approach for occluded objects requires annotators to infer the full geometry of the object based on contextual information: the visible portion of the object, knowledge of typical object dimensions for that class, the object’s trajectory in preceding frames, and contextual cues from other sensors. A pedestrian whose body is 60 percent visible allows a trained annotator to infer full height, approximate width, and likely heading with reasonable accuracy.

Annotation guidelines must specify this inference requirement explicitly, with worked examples and decision rules for different occlusion levels. Annotators who are not trained in this inference discipline will default to visible-point-only annotation, which is faster but produces systematically degraded training data for occluded scenarios.

Occlusion State Labeling

Beyond annotating the geometry of occluded objects, many LiDAR annotation programs require that annotators record the occlusion state of each annotation explicitly, classifying objects as fully visible, partially occluded, or heavily occluded. This metadata allows model training pipelines to weight examples differently based on annotation confidence, to analyze model performance separately for different occlusion levels, and to identify where the training dataset is under-represented in high-occlusion scenarios. Edge case curation services specifically address the under-representation of high-occlusion scenarios in standard LiDAR training datasets, ensuring that the scenarios where annotation is most demanding and model failures are most consequential receive adequate coverage in the training corpus.
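As a rough sketch of how occlusion state metadata can expose under-representation, the snippet below computes the share of each occlusion level in a set of labels. The three states mirror the ones named above; the enum, function names, and the 10 percent threshold are illustrative assumptions.

```python
from collections import Counter
from enum import Enum


class Occlusion(Enum):
    FULLY_VISIBLE = "fully_visible"
    PARTIALLY_OCCLUDED = "partially_occluded"
    HEAVILY_OCCLUDED = "heavily_occluded"


def occlusion_share(labels):
    """Fraction of annotations at each occlusion level."""
    counts = Counter(labels)
    total = len(labels)
    if total == 0:
        return {}
    return {state: counts[state] / total for state in Occlusion}


def under_represented(labels, min_share):
    """Occlusion levels whose share falls below min_share (assumed threshold)."""
    shares = occlusion_share(labels)
    return [state for state in Occlusion if shares.get(state, 0.0) < min_share]


labels = [Occlusion.FULLY_VISIBLE] * 8 + [Occlusion.PARTIALLY_OCCLUDED] * 2
print(under_represented(labels, min_share=0.1))
```

The same per-level grouping could be reused to report model metrics separately for each occlusion state.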

Temporal Consistency in LiDAR

Why Frame-Level Accuracy Is Not Enough

LiDAR data for autonomous driving is collected as continuous sequences of frames, typically at 10 to 20 Hz, capturing the dynamic scene as the vehicle moves. A model trained on this data learns not only to detect objects in individual frames but to understand their motion, velocity, and trajectory across frames. This means annotation errors that are consistent across a sequence are less damaging than inconsistencies between frames, because a consistent error teaches a consistent but wrong pattern, while frame-to-frame inconsistency teaches no coherent pattern at all.

The most common temporal consistency failure is bounding box size fluctuation: annotators placing boxes of slightly different dimensions around the same object in successive frames because the point density and viewing angle change as the vehicle moves. A vehicle that appears to change physical size between consecutive frames is producing a training signal that will undermine the model’s size estimation accuracy. Annotation guidelines need to specify size consistency requirements across frames, and QA processes need to measure frame-to-frame size variance as an explicit quality metric.
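One way to turn frame-to-frame size variance into a number is sketched below: for each dimension of a tracked object, measure the largest relative deviation from the track's median. The function name and the choice of median as the reference are assumptions; a program could equally use a class-level size prior.

```python
from statistics import median


def size_fluctuation(dims_per_frame):
    """Largest relative deviation from the median, per dimension.

    dims_per_frame: list of (length, width, height) tuples in metres,
                    one per frame, all for the same tracked object.
    Returns a (length, width, height) tuple of fractions, e.g. 0.1 = 10%.
    """
    result = []
    for axis in range(3):
        values = [dims[axis] for dims in dims_per_frame]
        reference = median(values)
        result.append(max(abs(v - reference) for v in values) / reference)
    return tuple(result)


# A rigid vehicle should not shrink by 45 cm between frames.
track = [(4.5, 1.8, 1.5), (4.5, 1.8, 1.5), (4.05, 1.8, 1.5)]
print(size_fluctuation(track))
```

Tracks whose fluctuation exceeds a project-defined tolerance can then be sent back for correction, with rigid classes such as vehicles held to a tighter tolerance than articulated ones such as pedestrians.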

Object Identity Consistency Across Long Sequences

Maintaining consistent object identifiers across long annotation sequences is particularly challenging in three situations: when objects temporarily leave the sensor’s field of view and re-enter, when two objects of the same class pass close to each other and their point clouds briefly merge, and when an object is first obscured and then reappears from behind cover.

Annotation teams without systematic identity management protocols will produce sequences with identifier reassignment errors that teach the tracking model incorrect trajectory continuities. The temporal consistency discipline developed for conventional video annotation carries over to LiDAR sequence annotation, but the three-dimensional nature of the data and the absence of visual cues make LiDAR identity management a harder problem requiring more structured annotator training.

Weather, Distance, and Sensor Challenges in LiDAR

How Adverse Weather Degrades Point Cloud Quality

Rain, fog, snow, and dust all degrade LiDAR point cloud quality in ways that create annotation challenges with no equivalent in camera data. Water droplets and snowflakes reflect laser pulses and produce false returns in the point cloud, appearing as clusters of points that do not correspond to any physical object. These false returns can superficially resemble real objects of similar reflectance, and distinguishing them from genuine objects requires annotators who understand both the physics of the degradation and the characteristic patterns it produces in the point cloud.

Annotation guidelines for adverse weather conditions need to specify how annotators should handle ambiguous clusters that may be environmental artifacts, what contextual evidence is required before annotating a possible object, and how to record uncertainty levels when annotation confidence is reduced. Programs that apply the same annotation guidelines to clear-weather and adverse-weather data without differentiation will produce an inconsistent training signal for exactly the conditions where perception reliability matters most.

Sparsity at Range and Its Annotation Implications

Point density decreases with distance from the sensor as laser beams diverge and fewer pulses return from any given object. An object at 10 metres may be represented by hundreds of points; the same object class at 80 metres may be represented by only a dozen. The annotation challenge at long range is that sparse representations make it harder to determine object boundaries accurately, to distinguish one object class from another of similar geometry, and to identify the orientation of an object with limited point coverage.
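The falloff in point density can be approximated with simple geometry. The sketch below assumes a flat surface facing the sensor and hypothetical angular resolutions of 0.2 degrees horizontally and 0.4 degrees vertically; real sensors differ, and the count ignores dropouts from low reflectance or oblique surfaces.

```python
import math


def estimated_points_on_target(width_m, height_m, range_m,
                               h_res_deg=0.2, v_res_deg=0.4):
    """Rough count of returns from a flat, sensor-facing surface.

    Spacing between neighbouring beams grows linearly with range
    (small-angle approximation), so the count falls with range squared.
    The default resolutions are illustrative assumptions.
    """
    h_spacing = range_m * math.radians(h_res_deg)
    v_spacing = range_m * math.radians(v_res_deg)
    return (width_m / h_spacing) * (height_m / v_spacing)


near = estimated_points_on_target(1.8, 1.5, 10.0)
far = estimated_points_on_target(1.8, 1.5, 80.0)
print(f"10 m: ~{near:.0f} points, 80 m: ~{far:.0f} points, ratio {near / far:.0f}x")
```

Under this model an eightfold increase in range cuts the point count by a factor of 64, which is why boundary and orientation judgments become so much harder at distance.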

Operational design domain (ODD) analysis for autonomous systems is relevant here: the distance ranges that fall within the system’s ODD determine the annotation precision requirements that the training data must satisfy, and ODD-aware annotation programs specify different quality thresholds for different distance bands.

Sensor Fusion Annotation

Why LiDAR-Camera Fusion Annotation Is Not Two Separate Tasks

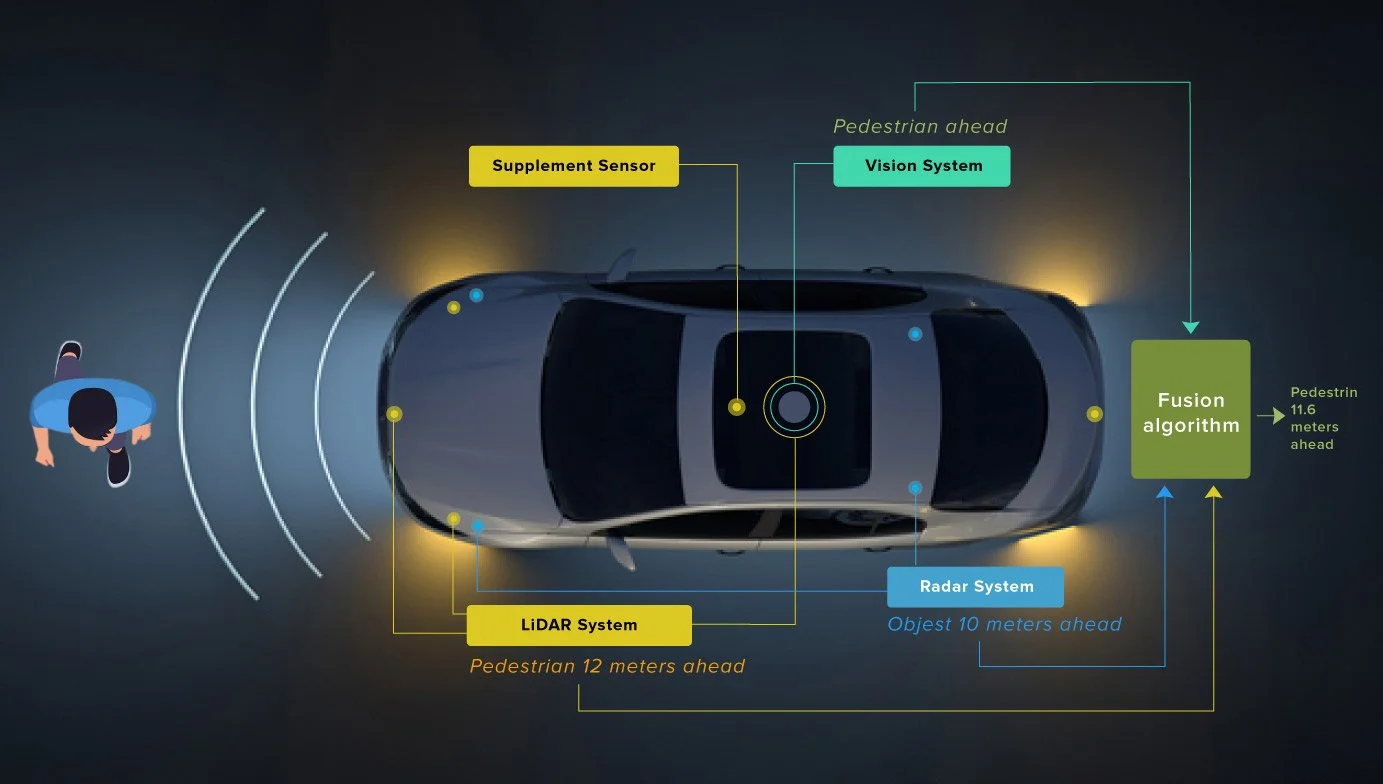

Autonomous driving perception systems increasingly fuse LiDAR point clouds with camera images to combine the spatial precision of LiDAR with the semantic richness of cameras. Training these fusion models requires annotation that is consistent across both modalities: an object labeled in the camera image must correspond exactly to the same object labeled in the point cloud, with matching identifiers, matching spatial extent, and temporally synchronized labels.

Inconsistency between modalities, where a pedestrian is correctly labeled in the camera frame but slightly offset in the point cloud or vice versa, produces a conflicting training signal that degrades fusion model performance. The role of multisensor fusion data in Physical AI covers the full scope of this cross-modal consistency requirement and its implications for annotation program design.

Calibration and Coordinate Alignment

Camera-LiDAR fusion annotation requires that the sensor calibration parameters are correct and that both annotation streams are operating in a consistent coordinate system. If the extrinsic calibration between the LiDAR and camera has drifted or was not precisely determined, points in the LiDAR coordinate frame will not project accurately onto the camera image plane.

Annotators working on both streams simultaneously may compensate for calibration errors by adjusting their annotations in ways that introduce systematic inconsistencies. Annotation programs that treat calibration validation as a prerequisite for annotation, rather than as a separate engineering concern, produce more consistent fusion training data.
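To see why calibration matters, consider a minimal pinhole projection of a LiDAR point into the image. Everything here is a sketch under stated assumptions: the extrinsic rotation and translation map LiDAR coordinates into a camera frame with z pointing forward, lens distortion is ignored, and the intrinsic values are made up for illustration.

```python
import math


def project_to_image(point_lidar, rotation, translation, fx, fy, cx, cy):
    """Project a LiDAR point to pixel coordinates with a pinhole model.

    rotation: 3x3 nested list, translation: length-3 list (extrinsics,
    LiDAR frame -> camera frame, camera z axis forward; assumed convention).
    Returns None for points behind the camera.
    """
    x_cam, y_cam, z_cam = (
        sum(rotation[r][c] * point_lidar[c] for c in range(3)) + translation[r]
        for r in range(3)
    )
    if z_cam <= 0.0:
        return None
    return (fx * x_cam / z_cam + cx, fy * y_cam / z_cam + cy)


def rotation_about_y(angle_rad):
    """Rotation matrix for a small angular error about the camera's vertical axis."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]


identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
intrinsics = dict(fx=1000.0, fy=1000.0, cx=640.0, cy=360.0)  # illustrative values
point = (0.0, 0.0, 20.0)

ideal = project_to_image(point, identity, [0.0, 0.0, 0.0], **intrinsics)
drifted = project_to_image(point, rotation_about_y(math.radians(0.5)),
                           [0.0, 0.0, 0.0], **intrinsics)
print(ideal, drifted, drifted[0] - ideal[0])
```

With these assumed intrinsics, a half-degree rotation error moves the projected point by roughly 8.7 pixels horizontally, enough to put a sparse distant pedestrian's points outside its camera bounding box.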

4D LiDAR and the Emerging Annotation Requirement

Newer LiDAR systems operating on frequency-modulated continuous wave principles add instantaneous velocity as a fourth dimension to each point, providing direct measurement of object radial velocity rather than requiring it to be inferred from position change across frames. Annotating 4D LiDAR data requires that velocity attributes are verified for consistency with observed object motion, adding a new quality dimension to the annotation task. As 4D LiDAR adoption increases in production autonomous driving programs, annotation services that can handle velocity attribute validation alongside spatial annotation will become a differentiating capability. Autonomous driving data services designed for next-generation sensor configurations need to accommodate this expanded annotation schema before 4D LiDAR becomes the production standard in new vehicle programs.
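A velocity consistency check of the kind mentioned above can be sketched as follows. It assumes positions and velocities are expressed relative to the sensor, estimates the track's velocity from two annotated positions, and compares its line-of-sight component with the measured radial velocity. The function names and the 0.5 m/s tolerance are hypothetical.

```python
import math


def track_velocity(prev_pos, curr_pos, dt_s):
    """Velocity implied by two annotated box centres dt_s seconds apart."""
    return tuple((c - p) / dt_s for p, c in zip(prev_pos, curr_pos))


def expected_radial_velocity(position, velocity):
    """Component of velocity along the line of sight from the sensor.

    Negative values mean the object is approaching.
    """
    distance = math.sqrt(sum(p * p for p in position))
    return sum(p * v for p, v in zip(position, velocity)) / distance


def velocity_consistent(measured_radial, position, velocity, tol_m_s=0.5):
    """True if the measured radial velocity agrees with the annotated motion."""
    return abs(measured_radial - expected_radial_velocity(position, velocity)) <= tol_m_s


prev_centre, curr_centre = (20.0, 0.0, 0.0), (19.5, 0.0, 0.0)
velocity = track_velocity(prev_centre, curr_centre, dt_s=0.1)
print(velocity_consistent(-4.8, curr_centre, velocity))
```

Only the radial component is observable per point, so an object moving purely across the line of sight should report near-zero radial velocity even when its annotated track shows fast motion.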

Quality Assurance for 3D LiDAR Annotation

Why Standard QA Metrics Are Insufficient

Annotation accuracy metrics for 2D image annotation, including bounding box IoU and per-class label accuracy, do not translate directly to LiDAR annotation quality assessment. A 3D bounding box that achieves an acceptable 2D IoU when projected onto the ground plane may still be incorrectly sized or positioned in the vertical dimension, and a box whose heading is reversed by 180 degrees covers exactly the same footprint. Metrics that measure accuracy in the bird’s-eye view projection alone miss annotation errors in the height dimension that are consequential for object classification and for applications requiring accurate height estimation. Full 3D IoU and explicit orientation (heading) angle error are the quality dimensions that LiDAR QA frameworks should measure.
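The gap between bird's-eye-view IoU and full 3D IoU is easy to demonstrate. The sketch below is deliberately simplified to axis-aligned boxes so the arithmetic stays readable; rotated boxes need a polygon intersection for the footprint, which is omitted here. The tuple layout and function names are assumptions.

```python
import math


def _overlap(a_min, a_max, b_min, b_max):
    """Length of the overlap between two 1D intervals."""
    return max(0.0, min(a_max, b_max) - max(a_min, b_min))


def bev_and_3d_iou(a, b):
    """BEV IoU and 3D IoU for axis-aligned boxes.

    Each box is (x_min, y_min, z_min, x_max, y_max, z_max) in metres.
    """
    ox = _overlap(a[0], a[3], b[0], b[3])
    oy = _overlap(a[1], a[4], b[1], b[4])
    oz = _overlap(a[2], a[5], b[2], b[5])
    area_a = (a[3] - a[0]) * (a[4] - a[1])
    area_b = (b[3] - b[0]) * (b[4] - b[1])
    vol_a = area_a * (a[5] - a[2])
    vol_b = area_b * (b[5] - b[2])
    bev = ox * oy / (area_a + area_b - ox * oy)
    full = ox * oy * oz / (vol_a + vol_b - ox * oy * oz)
    return bev, full


def heading_error(yaw_a, yaw_b):
    """Absolute heading difference in radians, wrapped to [0, pi]."""
    return abs((yaw_a - yaw_b + math.pi) % (2 * math.pi) - math.pi)


reference = (0.0, 0.0, 0.0, 4.0, 2.0, 1.5)
too_tall = (0.0, 0.0, 0.0, 4.0, 2.0, 3.0)  # same footprint, double the height
print(bev_and_3d_iou(reference, too_tall))
```

Here the footprint matches perfectly, so the BEV score is 1.0, while the 3D score drops to 0.5 because the annotated box is twice as tall as the reference.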

Gold Standard Design for LiDAR Annotation

Gold standard examples for LiDAR annotation QA present specific challenges that image annotation gold standards do not. A gold standard LiDAR scene needs to cover the full range of difficulty conditions: varying object distances, different occlusion levels, adverse weather representations, and the object classes that are most frequently annotated incorrectly.

Designing gold standard scenes that adequately represent the tail of the difficulty distribution, rather than the average of the annotation task, is what distinguishes gold standard sets that actually surface annotator quality gaps from those that measure performance on the easy cases. Human-in-the-loop computer vision for safety-critical systems describes the quality assurance architecture where human expert review is systematically applied to the most safety-consequential annotation categories.

Inter-Annotator Agreement in 3D Space

Inter-annotator agreement for 3D bounding boxes is harder to measure than for 2D annotations because agreement must be assessed across position, dimensions, and orientation simultaneously. Two annotators may agree perfectly on an object’s position and dimensions but disagree on its heading by 15 degrees, which produces a meaningful difference in the model’s learned orientation representation. Agreement measurement frameworks for LiDAR annotation need to decompose agreement into these separate spatial components, identify which components show the highest disagreement across annotator pairs, and target guideline refinements at the specific spatial dimensions where annotator interpretation diverges.
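Decomposing agreement into its spatial components might look like the sketch below. The box layout `(cx, cy, cz, length, width, height, yaw_rad)` and the output keys are assumptions for illustration.

```python
import math


def agreement_components(box_a, box_b):
    """Break disagreement between two annotators' boxes into components.

    Each box is (cx, cy, cz, length, width, height, yaw_rad); assumed layout.
    """
    centre_offset = math.dist(box_a[:3], box_b[:3])
    size_diff = tuple(abs(a - b) for a, b in zip(box_a[3:6], box_b[3:6]))
    # Wrap the yaw difference so that 359 degrees vs 1 degree counts as 2 degrees.
    raw = box_a[6] - box_b[6]
    heading_diff = abs((raw + math.pi) % (2 * math.pi) - math.pi)
    return {
        "centre_offset_m": centre_offset,
        "size_diff_m": size_diff,
        "heading_diff_deg": math.degrees(heading_diff),
    }


annotator_1 = (10.0, 2.0, 0.8, 4.5, 1.8, 1.5, 0.0)
annotator_2 = (10.0, 2.0, 0.8, 4.5, 1.8, 1.5, math.radians(15))
print(agreement_components(annotator_1, annotator_2))
```

Aggregating each component separately across annotator pairs shows whether guideline refinements should target placement, sizing, or heading, which a single combined score would hide.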

Applications Beyond Autonomous Driving

Robotics and Industrial Automation

LiDAR annotation requirements for robotics and industrial automation differ from automotive perception in ways that affect annotation standards. Industrial manipulation robots need highly precise 3D object pose annotation, including not just position and orientation but specific grasp point locations on object surfaces. Warehouse autonomous mobile robots need accurate annotation of dynamic obstacles at close range in environments with dense, reflective infrastructure.

The annotation standards developed for automotive LiDAR, which are optimized for road scene objects at driving speeds and distances, may not transfer directly to these contexts without domain-specific adaptation. Robotics data services address the specific annotation requirements of manipulation and mobile robot perception, including the close-range precision and object pose annotation that automotive-focused LiDAR annotation workflows do not typically prioritise.

Infrastructure, Mapping, and Geospatial Applications

LiDAR annotation for infrastructure inspection, corridor mapping, and smart city applications involves different object categories, different precision standards, and different temporal requirements from automotive perception annotation. Infrastructure LiDAR data needs annotation of linear features such as power lines and road markings, structural elements of varying scale, and vegetation that changes between survey passes.

The annotation challenge in these contexts is less about temporal consistency at high frame rates and more about spatial precision and category consistency across long survey corridors. Annotation teams calibrated for automotive LiDAR need specific domain training before working on infrastructure annotation tasks.

How Digital Divide Data Can Help

Digital Divide Data provides 3D LiDAR annotation services designed around the precision standards, temporal consistency requirements, and cross-modal fusion demands that production Physical AI programs require.

The 3D LiDAR data annotation capability covers all primary annotation types, including 3D bounding boxes with full orientation and dimension accuracy, semantic segmentation at the point level, instance segmentation with cross-frame identity consistency, and object tracking across long sequences. Annotation teams are trained to handle occluded objects with predictive geometry inference, not visible-point-only annotation, and occlusion state metadata is captured as a standard annotation attribute.

For programs requiring camera-LiDAR fusion training data, multisensor fusion data services provide cross-modal consistency checking as a structural component of the QA workflow, not a post-hoc audit. Calibration validation is treated as a prerequisite for annotation, and cross-modal annotation agreement is measured alongside single-modality accuracy metrics.

QA frameworks include full 3D IoU measurement, orientation angle error tracking, frame-to-frame size consistency metrics, and gold standard sampling stratified across distance bands, occlusion levels, and adverse weather conditions. Performance evaluation services connect annotation quality to downstream model performance, closing the loop between data quality investment and perception system reliability in the deployment environment.

Build LiDAR training datasets that meet the precision standards production perception demands. Talk to an expert!

Conclusion

3D LiDAR annotation is technically demanding in ways that standard image annotation experience does not prepare teams for. The spatial precision requirements, the temporal consistency obligations across dynamic sequences, the occlusion handling discipline, the weather artifact identification skills, and the cross-modal consistency demands of fusion annotation are all distinct competencies that require specific training, specific tooling, and specific quality assurance frameworks.

Programs that approach LiDAR annotation as a harder version of image annotation, and apply image annotation standards and QA methodologies to point cloud data, will produce training datasets with systematic error patterns that surface in production as perception failures in exactly the conditions that matter most: dense traffic, occlusion, adverse weather, and long range.

The investment required to build annotation programs that meet the precision standards LiDAR perception models need is substantially higher than for image annotation, and it is justified by the role that LiDAR plays in the perception stack of safety-critical Physical AI systems. A perception model trained on precisely annotated LiDAR data is more reliable across the full operational envelope of the system. A model trained on imprecisely annotated data will fail in the scenarios where annotation difficulty was highest, which are also the scenarios where perception reliability matters most.

Frequently Asked Questions

Q1. What is the difference between 3D bounding box annotation and semantic segmentation for LiDAR data?

3D bounding boxes place a cuboid around individual objects to define their position, dimensions, and orientation. Semantic segmentation assigns a class label to every individual point in the cloud, producing a complete spatial classification of the scene without object-level instance boundaries.

Q2. How should annotators handle occluded objects in LiDAR point clouds?

Occluded objects should be annotated with their full inferred geometry based on visible portions, object class size priors, and trajectory context from adjacent frames. They should not be reduced to cover only visible points or omitted, as either approach produces models that miss or underestimate objects under occlusion.

Q3. Why is frame-to-frame bounding box consistency important for LiDAR training data?

Models trained on LiDAR sequences learn velocity and motion dynamics across frames. Box size fluctuations between frames for the same object send conflicting signals about object dimensions, which leads to models with inaccurate size estimation and trajectory prediction.

Q4. What annotation challenges does adverse weather introduce for LiDAR data?

Rain, fog, and snow create false returns in the point cloud that can resemble real objects, requiring annotators with domain expertise to distinguish environmental artifacts from genuine objects and to record appropriate confidence levels when scan quality is degraded.

Umang architects and drives full-funnel content marketing strategies for AI training data solutions, spanning computer vision, data annotation, data labelling, and Physical and Generative AI services. He works closely with senior leadership to shape DDD’s market positioning, translating complex technical capabilities into compelling narratives that resonate with global AI innovators.
