Geospatial Intelligence and AI: Defense and Government Applications

The National Geospatial-Intelligence Agency describes geospatial AI as the integration of AI into GEOINT to automate imagery exploitation, detect change, classify objects, and extract patterns from spatial data at a scale that manual analysis cannot approach. For defense and government customers, this capability shift has operational consequences: the time between satellite collection and actionable intelligence can compress from days to minutes, and the coverage that was once limited by analyst capacity can expand to encompass entire theaters of operation continuously.

This blog examines where AI is being applied across defense and government geospatial use cases, what the annotation and data quality requirements are for each application, and where the critical gaps between current capability and mission-reliable performance remain. HD map annotation services and 3D LiDAR data annotation are the two annotation capabilities most directly relevant to government geospatial AI programs.

Key Takeaways

- The core data challenge in defense geospatial AI is not sensor capability, which has advanced dramatically, but annotation quality: models trained on poorly labeled satellite imagery produce false positives and missed detections that undermine the operational decisions they are meant to support.

- SAR imagery annotation requires domain expertise in radar physics that generic computer vision annotators do not possess, making specialist annotation capability a limiting factor for many defense programs.

- Change detection, the identification of differences between imagery of the same location at different times, requires temporally consistent annotation across multi-date datasets that standard single-image annotation workflows do not support.

- Government geospatial AI programs increasingly combine optical satellite imagery, SAR, LiDAR, and signals data; models trained on single-modality data fail at the fusion boundaries where most operationally interesting events occur.

- Humanitarian and emergency response applications of government geospatial AI share the same annotation requirements as defense intelligence programs, but operate under tighter time constraints and with less tolerance for model errors that affect aid distribution.

The Geospatial AI Landscape in Defense and Government

From Imagery Collection to Intelligence Production

The traditional geospatial intelligence workflow moves from satellite or aerial collection through manual imagery analysis to intelligence production. The bottleneck has always been the analysis step: a skilled imagery analyst can examine a limited number of images per day, and the volume of collected imagery has long exceeded what any analyst population can process. AI changes the economics of this step by automating the detection and classification tasks that consume most analyst time, allowing human analysts to focus on the complex interpretive judgments that remain beyond current model capability.

The operational shift this enables is significant. Rather than analyzing imagery of priority locations on a tasked collection schedule, AI-assisted GEOINT programs can monitor entire geographic areas continuously, flagging any change or anomaly for human review. The lessons from geospatial intelligence use in the Russia-Ukraine conflict have accelerated government investment in this capability: the conflict demonstrated that commercial satellite imagery combined with AI analysis can provide operationally relevant intelligence within hours of collection, compressing decision cycles in ways that traditional classified collection pipelines cannot match.

Government Use Cases Beyond Defense

Geospatial AI applications extend across the full scope of government operations beyond military intelligence. Border surveillance programs use AI to detect crossings and movement patterns across large perimeters that no physical patrol force could continuously monitor. Customs and trade enforcement use satellite imagery analysis to verify declared shipping activity against actual vessel movements.

Disaster response agencies use AI-processed imagery to assess damage and direct resources hours after an event. Critical infrastructure protection programs use change detection to identify construction or activity near sensitive installations. Each of these applications has distinct annotation requirements determined by the specific objects, events, and changes the model needs to detect.

Optical Satellite Imagery: Object Detection and Classification

What AI Needs to Detect in Satellite Imagery

Object detection in satellite imagery involves identifying specific targets within images that may cover hundreds of square kilometres. Target categories in defense applications include military vehicles, aircraft, vessels, weapons systems, and infrastructure. Target categories in government applications include buildings, road networks, agricultural land use, and economic activity indicators. The fundamental challenge in both contexts is that targets in satellite imagery are small relative to the image extent, may be partially obscured by shadows or clouds, and may be visually similar to background clutter that the model must not classify as a target.

Annotation for satellite object detection requires bounding boxes or polygon masks placed with spatial precision that accounts for the overhead viewing geometry. Unlike ground-level photography, where objects face a camera and present a familiar visual profile, satellite imagery shows objects from directly or near-directly above, where the visible surface may be a roof, a vehicle top, or a shadow rather than the identifying features an analyst would use in a ground-level view.

Annotators working on satellite imagery need specific training in overhead recognition that generic computer vision annotation experience does not provide. Why high-quality data annotation defines computer vision model performance examines how annotation precision requirements scale with the operational consequences of model errors, which in defense contexts are direct.

Resolution and Scale Dependencies

Satellite imagery is collected at varying spatial resolutions, from sub-meter commercial imagery capable of identifying individual vehicles to ten-meter government archives suited for land cover classification. A model trained on sub-meter imagery cannot be applied to ten-meter imagery without retraining, and vice versa.

This resolution dependency means that annotation programs must be designed around the specific imagery resolution that the deployed model will operate on, with separate annotation investments for each resolution band if the program needs to exploit multiple imagery sources. Recent research on AI in remote sensing confirms that deep learning models trained on one spatial resolution show significant accuracy degradation when applied to imagery at a different resolution, even when the same object categories are present.
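The resolution dependency can be made concrete with simple arithmetic: the number of pixels an object spans is roughly its ground length divided by the ground sample distance (GSD). The sketch below is illustrative only; the function name and the 5 m vehicle length are assumptions chosen for the example, not figures from any specific program.

```python
def pixels_spanned(object_length_m: float, gsd_m: float) -> float:
    """Approximate number of pixels an object covers along one axis.

    gsd_m is the ground sample distance: metres of ground per pixel.
    """
    if gsd_m <= 0:
        raise ValueError("gsd_m must be positive")
    return object_length_m / gsd_m


# Hypothetical 5 m vehicle viewed at two resolutions.
vehicle_m = 5.0
for gsd in (0.5, 10.0):
    print(f"GSD {gsd} m -> {pixels_spanned(vehicle_m, gsd):.1f} px")
```

At 0.5 m the vehicle spans about ten pixels and can be boxed by an annotator; at 10 m it occupies half a pixel and cannot be labeled as a discrete object at all, which is why labels and models do not carry over between resolution bands.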

SAR Imagery: The Specialist Annotation Challenge

Why SAR Is Operationally Critical and Annotation-Difficult

Synthetic Aperture Radar operates by emitting microwave pulses and measuring how they reflect from the Earth’s surface, producing imagery that is independent of daylight, cloud cover, and most weather conditions. This all-weather, day-and-night capability makes SAR indispensable for military and government programs that cannot wait for clear optical conditions before collection. Flood extent mapping, maritime vessel detection, ground deformation measurement, and damage assessment in obscured areas all rely on SAR data precisely because optical imagery is unavailable when these events occur.

The annotation challenge is that SAR imagery does not look like optical imagery. Objects appear as characteristic backscatter patterns that reflect the radar properties of their surfaces rather than their visual appearance. A metallic vehicle produces a bright return, often from corner-like surfaces that send energy straight back to the sensor. Calm water appears dark because its smooth surface reflects the radar pulse away from the sensor. Vegetation creates a diffuse, textured return. Annotators who understand radar physics can reliably interpret these signatures; annotators with only optical imagery experience cannot. This domain expertise gap is one of the most significant bottlenecks in defense geospatial AI programs, particularly as SAR becomes more central to operational workflows. The role of multisensor fusion data in Physical AI describes how radar and optical modalities are combined at the data level to leverage the complementary strengths of each.

The Scarcity of Labeled SAR Data

Labeled SAR datasets for defense applications are scarce relative to optical imagery datasets. Collection restrictions on military vehicle imagery, the sensitivity of SAR signatures as intelligence sources, and the specialist expertise required for annotation have all limited the size and accessibility of SAR training datasets. Programs building SAR-based AI capabilities typically find that their annotation investment needs to be substantially higher per labeled example than for optical imagery, because each labeled example requires more time from a specialist annotator working with more complex data. The scarcity of existing labeled data also means that transfer learning from publicly available models is less effective for SAR than for optical imagery, where large pretrained models provide a useful starting point.

Change Detection: The Temporal Annotation Problem

What Change Detection Requires and Why It Is Difficult

Change detection identifies differences between satellite or aerial imagery of the same location captured at different times, flagging construction, demolition, movement of equipment, changes in land use, or any other modification of the physical environment. It is among the most operationally valuable geospatial AI capabilities because it automatically directs analyst attention to locations where something has changed, rather than requiring analysts to review entire areas for possible changes.

The annotation challenge is temporal consistency. A change detection model needs training examples that show the same scene at two or more time points, with the areas of genuine change labeled separately from the areas of apparent change caused by differences in illumination angle, cloud shadow, seasonal vegetation, or sensor calibration differences between collection dates. An annotator labeling a pair of images without understanding these sources of apparent change will produce training data that teaches the model to flag imaging artifacts as meaningful events. Building temporally consistent annotation protocols and training annotators to apply them consistently across multi-date image pairs requires a workflow design that single-image annotation programs do not address.
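One way to encode this distinction in an annotation schema is to have annotators record a cause for every changed region, then build the training target only from causes classed as genuine. The following is a minimal sketch under stated assumptions: the cause categories, class names, and box format are hypothetical, and it assumes the two images are already co-registered to the same pixel grid.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Tuple


class ChangeCause(Enum):
    # Genuine physical change the model should learn to flag.
    CONSTRUCTION = "construction"
    DEMOLITION = "demolition"
    EQUIPMENT_MOVEMENT = "equipment_movement"
    # Apparent change caused by imaging conditions, not by the scene.
    ILLUMINATION = "illumination"
    CLOUD_SHADOW = "cloud_shadow"
    SEASONAL_VEGETATION = "seasonal_vegetation"
    SENSOR_CALIBRATION = "sensor_calibration"


GENUINE = {
    ChangeCause.CONSTRUCTION,
    ChangeCause.DEMOLITION,
    ChangeCause.EQUIPMENT_MOVEMENT,
}


@dataclass(frozen=True)
class ChangeRegion:
    # Pixel box in the co-registered pair: (row0, col0, row1, col1), end-exclusive.
    box: Tuple[int, int, int, int]
    cause: ChangeCause


def genuine_change_mask(height: int, width: int,
                        regions: List[ChangeRegion]) -> List[List[int]]:
    """Binary training target: 1 only where genuine change was annotated."""
    mask = [[0] * width for _ in range(height)]
    for region in regions:
        if region.cause not in GENUINE:
            continue  # artifact regions stay 0 so the model learns to ignore them
        r0, c0, r1, c1 = region.box
        for r in range(max(r0, 0), min(r1, height)):
            for c in range(max(c0, 0), min(c1, width)):
                mask[r][c] = 1
    return mask


regions = [
    ChangeRegion((0, 0, 2, 2), ChangeCause.CONSTRUCTION),
    ChangeRegion((2, 2, 4, 4), ChangeCause.CLOUD_SHADOW),
]
mask = genuine_change_mask(4, 4, regions)
```

Keeping the artifact regions in the annotation record, rather than simply not labeling them, also gives quality reviewers a way to audit whether annotators are separating the two categories consistently.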

Multi-Temporal Annotation at Scale

Government programs that monitor large geographic areas for change need annotation datasets that cover the range of change types and magnitudes the model will be asked to detect, across the range of seasonal and atmospheric conditions in which collection occurs. A change detection model trained only on summer imagery will produce unreliable results on winter imagery, where vegetation state, snow cover, and shadow geometry all differ.

The European Union’s Copernicus programme, which provides open satellite imagery for environmental and humanitarian monitoring, has generated extensive multi-temporal datasets that demonstrate both the operational value and the annotation complexity of change detection at a continental scale: ensuring consistent labeling across imagery captured under different conditions by different sensors requires annotation infrastructure that treats temporal consistency as a first-class quality requirement.

Maritime Domain Awareness and Vessel Tracking

The AI Monitoring Problem at Sea

Maritime domain awareness requires tracking vessel movements across ocean areas too vast for any physical surveillance presence to cover. AI applied to satellite imagery, including both optical and SAR data, can detect vessels, classify them by type and size, and compare their positions against Automatic Identification System transmissions to identify vessels that are operating without broadcasting their location. This dark vessel detection capability is directly relevant to counter-piracy, counter-smuggling, sanctions enforcement, and illegal fishing interdiction programs across multiple government agencies.
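The comparison against AIS can be sketched as a nearest-report check: any imagery detection with no AIS position report nearby becomes a candidate dark vessel. This is a simplified illustration, not an operational method. It assumes AIS positions have already been interpolated to the image acquisition time, and the function names and the 2 km matching radius are hypothetical choices for the example.

```python
import math

EARTH_RADIUS_KM = 6371.0


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two latitude/longitude points, in km."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def find_dark_candidates(detections, ais_reports, max_km=2.0):
    """Return detections with no AIS report within max_km.

    detections and ais_reports are lists of (lat, lon) tuples in degrees.
    max_km is an assumed matching radius, not an operational threshold.
    """
    return [
        det for det in detections
        if not any(
            haversine_km(det[0], det[1], ais[0], ais[1]) <= max_km
            for ais in ais_reports
        )
    ]


detections = [(10.0, 20.0), (10.5, 20.5)]
ais_reports = [(10.001, 20.001)]
dark = find_dark_candidates(detections, ais_reports)  # second detection is unmatched
```

A production system would also need to handle AIS latency, position spoofing, and geolocation error in the imagery, all of which affect how the matching radius should be set.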

Training a maritime AI system requires annotation of vessel detection across a wide range of sea states, vessel sizes, and imaging conditions. Small fishing vessels in high sea states present very different SAR signatures than large tankers in calm water, and a model trained predominantly on large vessel examples will have poor detection rates for the smaller vessels that often represent the highest-priority targets for enforcement programs. Integrating AI with geospatial data for autonomous defense systems examines the multi-sensor approach that combines satellite detection with signals intelligence to maintain vessel tracks through coverage gaps.
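One common mitigation for this imbalance, alongside collecting more small-vessel examples, is to weight classes inversely to their frequency during training. The sketch below uses the widely used "balanced" heuristic (total samples divided by the number of classes times the class count). The class names and counts are invented for illustration.

```python
def balanced_class_weights(counts):
    """Inverse-frequency weights: total / (n_classes * count) per class."""
    if not counts or any(c <= 0 for c in counts.values()):
        raise ValueError("every class needs a positive example count")
    total = sum(counts.values())
    n_classes = len(counts)
    return {name: total / (n_classes * c) for name, c in counts.items()}


# Hypothetical label counts in a vessel-detection training set.
label_counts = {"tanker": 900, "small_fishing_vessel": 100}
weights = balanced_class_weights(label_counts)
for name, w in weights.items():
    print(f"{name}: {w:.2f}")
```

Weighting only rescales the loss; it does not add the variety of sea states and imaging conditions that the rarer class needs, so it complements rather than replaces targeted annotation of small vessels.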

Port and Infrastructure Monitoring

Government programs monitoring port activity, airfield operations, and logistics infrastructure use AI to identify changes in vessel loading patterns, aircraft movements, and vehicle concentrations that indicate changes in operational status or activity levels. These applications require annotation of activity patterns rather than just object presence: the model needs to learn what normal port activity looks like to flag deviations that indicate something operationally significant. This behavioral pattern annotation is more demanding than static object detection because the training data needs to represent the full range of normal activity, not just the specific events to be detected.

Humanitarian and Disaster Response Applications

Where GEOINT Meets Crisis Response

Geospatial AI serves government programs beyond defense intelligence. Humanitarian organizations and government emergency management agencies use AI-processed satellite imagery to assess damage after earthquakes, floods, and conflicts, directing aid and response resources to the areas of greatest need. These applications face the same annotation requirements as defense programs, including the need for specialist annotators who understand overhead imagery and the challenges of working with SAR data in adverse weather conditions. They also carry an additional constraint of time: damage assessments for humanitarian response must be produced within hours of an event to be operationally useful.

Building damage assessment models need to be trained on imagery from multiple geographic regions and multiple disaster types, because the visual signature of earthquake damage in a concrete-construction urban environment differs substantially from flood damage in a wooden-construction agricultural area. A model trained only on one disaster type or one geographic context will produce unreliable assessments when deployed for a different disaster, and humanitarian programs need to deploy quickly to novel events rather than having time to retrain on locally relevant data.

This geographic and disaster-type generalization requirement is one of the strongest arguments for pre-building annotation-rich training datasets across diverse contexts before operational need arises. Data collection and curation services that build geographically diverse geospatial training datasets across disaster types enable rapid deployment of damage assessment models to novel events without a retraining cycle.
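A simple way to check whether a damage assessment model actually generalizes is to report its recall separately for each combination of disaster type and setting, rather than as a single aggregate number. The following is a minimal sketch; the stratum labels and sample values are made up for the example.

```python
from collections import defaultdict


def recall_by_stratum(samples):
    """Recall on damaged buildings, computed separately per stratum.

    samples: iterable of (stratum, is_damaged, predicted_damaged) tuples.
    Strata with no damaged examples are omitted from the result.
    """
    hits = defaultdict(int)
    positives = defaultdict(int)
    for stratum, is_damaged, predicted_damaged in samples:
        if is_damaged:
            positives[stratum] += 1
            if predicted_damaged:
                hits[stratum] += 1
    return {s: hits[s] / positives[s] for s in positives}


# Hypothetical evaluation records.
samples = (
    [("earthquake/urban", True, True)] * 3
    + [("earthquake/urban", True, False)]
    + [("flood/rural", True, True)]
    + [("flood/rural", True, False)] * 3
)
print(recall_by_stratum(samples))
```

An aggregate recall of 0.5 over these records would hide the fact that the model finds three in four damaged buildings in one setting and only one in four in the other.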

Dual-Use Geospatial Data and Its Governance Implications

Geospatial imagery of civilian infrastructure, population movement, and land use patterns serves both legitimate government purposes and potential misuse. Government programs handling this data operate under legal frameworks including privacy law, data sovereignty requirements, and, in some contexts, international humanitarian law. The annotation programs that label this imagery need to manage data access controls, annotator vetting, and documentation of data provenance to satisfy the governance requirements of the programs they serve. These governance requirements are more demanding than those for commercial computer vision programs, and annotation service providers working on government geospatial programs need to demonstrate compliance with the relevant security and governance frameworks.

The Fusion Challenge: Building Models That Combine Data Sources

Why Single-Modality Models Fall Short

The most operationally interesting events in defense and government geospatial contexts rarely manifest clearly in any single data source. A military movement may be visible in optical imagery under clear conditions and in SAR imagery under cloud, but neither alone provides the full picture. A vessel conducting illegal activity may appear in satellite imagery, but can only be identified as suspicious by comparing its position against AIS data showing where it claimed to be. Infrastructure under construction may be detectable through building footprint change in optical imagery and through ground deformation in SAR, with the combination providing higher confidence than either alone.

Training fusion models requires annotation that is consistent across modalities: an object labeled in the optical channel must be co-registered with the corresponding annotation in the SAR or LiDAR channel, so that the model learns to associate corresponding features across data types. This cross-modal annotation consistency is technically demanding and requires annotation workflows that handle the co-registration of data from different sensors and collection times. Multisensor fusion data services address the cross-modal consistency requirement that single-modality annotation programs do not support.
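At its simplest, carrying an annotation from one sensor's image to another means converting pixel coordinates to map coordinates and back. The sketch below uses the six-coefficient affine geotransform convention popularized by GDAL. It assumes both images are already orthorectified into the same projected coordinate system, which glosses over the SAR-specific geometry effects that make real co-registration harder. The function names and the example transforms are hypothetical.

```python
def pixel_to_geo(gt, col, row):
    """Map pixel (col, row) to map coordinates using a 6-term geotransform."""
    x = gt[0] + col * gt[1] + row * gt[2]
    y = gt[3] + col * gt[4] + row * gt[5]
    return x, y


def geo_to_pixel(gt, x, y):
    """Invert the affine geotransform to get fractional pixel coordinates."""
    det = gt[1] * gt[5] - gt[2] * gt[4]
    if det == 0:
        raise ValueError("geotransform is not invertible")
    dx, dy = x - gt[0], y - gt[3]
    col = (gt[5] * dx - gt[2] * dy) / det
    row = (-gt[4] * dx + gt[1] * dy) / det
    return col, row


def transfer_box(box, src_gt, dst_gt):
    """Re-express a (col_min, row_min, col_max, row_max) box on another grid."""
    col_min, row_min, col_max, row_max = box
    corners = [(col_min, row_min), (col_max, row_min),
               (col_min, row_max), (col_max, row_max)]
    moved = [geo_to_pixel(dst_gt, *pixel_to_geo(src_gt, c, r)) for c, r in corners]
    cols = [c for c, _ in moved]
    rows = [r for _, r in moved]
    return min(cols), min(rows), max(cols), max(rows)


# Hypothetical 0.5 m optical grid and 5 m SAR grid in the same projection.
optical_gt = (500000.0, 0.5, 0.0, 4000000.0, 0.0, -0.5)
sar_gt = (499990.0, 5.0, 0.0, 4000010.0, 0.0, -5.0)
sar_box = transfer_box((100, 200, 140, 240), optical_gt, sar_gt)
```

In this example a 40-pixel optical box shrinks to a 4-pixel SAR box, which illustrates why cross-modal label transfer usually needs human review: a box that is precise in one modality may be only a few pixels wide in the other.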

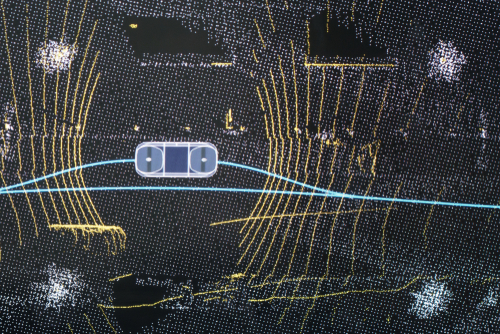

LiDAR Integration for Terrain and Structure Analysis

LiDAR data provides precise three-dimensional terrain models and building height information that two-dimensional satellite imagery does not directly provide. Government programs use LiDAR for terrain analysis, urban structure mapping, vegetation height mapping, and infrastructure assessment. Annotating LiDAR point clouds for government geospatial applications requires the same specialist skills and three-dimensional annotation precision as defense-oriented LiDAR annotation programs. 3D LiDAR data annotation at the precision levels that terrain analysis and structure assessment require uses the same annotation discipline that enables reliable perception in autonomous driving, applied to geospatial rather than road scene contexts.

Data Governance, Security, and Annotation in Classified Contexts

The Security Requirements That Shape Annotation Programs

Defense and intelligence geospatial AI programs operate under security requirements that fundamentally shape how annotation can be conducted. Classified imagery cannot be annotated on standard commercial annotation platforms. Annotators may require security clearances at specific levels depending on the classification of the imagery they are labeling. Annotation results may themselves be classified if they reveal sensitive analytical methods, target identities, or collection capabilities. These constraints mean that annotation programs for classified geospatial AI cannot simply engage commercial annotation services without first establishing the data handling infrastructure and personnel clearance frameworks that classified work requires.

Unclassified geospatial AI programs, including those using commercial satellite imagery for civilian government applications, still face data governance requirements related to data sovereignty, privacy, and the acceptable use of imagery that may capture civilian populations. Government programs in European Union jurisdictions face GDPR requirements when geospatial imagery captures identifiable individuals, and the EU AI Act’s provisions for high-risk AI systems apply to government AI used in consequential decisions about individuals.

The Shift Toward Commercial Data and Open-Source Intelligence

A significant development in defense geospatial AI is the increasing use of commercial satellite imagery and open-source intelligence alongside classified government collection. Commercial providers now offer sub-meter resolution imagery with daily revisit rates that rival or exceed classified systems for many applications. This commercial imagery can be annotated and used to train models on unclassified infrastructure, with the trained models then applied to classified imagery in classified environments.

This approach reduces the annotation burden on classified programs by allowing training data development to proceed on unclassified commercial imagery before deployment against classified collection. The National Geospatial-Intelligence Agency’s GEOINT AI program reflects this direction, emphasizing the integration of commercial capabilities and open-source data into government intelligence workflows.

How Digital Divide Data Can Help

Digital Divide Data provides geospatial annotation services tailored to the specialist requirements of defense and government applications, from optical satellite imagery annotation and SAR interpretation to multi-temporal change-detection labeling and LiDAR point-cloud annotation.

The image annotation services capability for geospatial programs covers overhead object detection with the spatial precision and overhead-geometry expertise that satellite imagery requires, building and infrastructure segmentation for government mapping applications, and vehicle and vessel classification across the resolution ranges and imaging conditions that operational programs encounter. Annotation workflows are designed to preserve geospatial coordinate metadata through the annotation process, producing labeled datasets that are directly usable in geospatial AI training pipelines.

For multi-temporal programs, data collection and curation services build temporally consistent annotation protocols that distinguish genuine change from imaging artifacts, covering the range of seasonal and atmospheric conditions that change detection models need to handle reliably. Multisensor fusion data services support cross-modal annotation consistency for programs combining optical, SAR, and LiDAR data sources.

For programs building toward mission deployment, model evaluation services provide geographically stratified performance assessment across the imaging conditions, target categories, and resolution ranges the deployed model will encounter. HD map annotation services and 3D LiDAR annotation extend these capabilities to terrain modeling and precision mapping applications across government programs.

Build geospatial AI training data that meets the precision and domain expertise requirements of defense and government applications. Talk to an expert!

Conclusion

The AI transformation of defense and government geospatial intelligence is well underway. What remains the binding constraint in most programs is not sensor capability, which has advanced to the point where continuous global monitoring is technically achievable, but training data quality. Models trained on poorly annotated satellite imagery, on SAR data labeled by annotators without radar domain expertise, on single-date datasets that cannot support change detection, or on single-modality data that cannot be fused with complementary sensors will fail to deliver the operational reliability that mission-critical applications demand. The annotation investment required to close these gaps is substantial, specialized, and ongoing.

Government programs that invest in annotation quality as a primary capability, rather than as a data preparation step before the interesting AI work begins, build systems with materially better operational performance and greater reliability under the changing conditions that deployed systems encounter. Image annotation, LiDAR annotation, and multisensor fusion annotation built to the domain expertise standards that geospatial AI requires are the foundation that separates programs that perform in deployment from those that perform only in demonstration.

References

Kazanskiy, N., Khabibullin, R., Nikonorov, A., & Khonina, S. (2025). A comprehensive review of remote sensing and artificial intelligence integration: Advances, applications, and challenges. Sensors, 25(19), 5965. https://doi.org/10.3390/s25195965

National Geospatial-Intelligence Agency. (2024). GEOINT artificial intelligence. NGA. https://www.nga.mil/news/GEOINT_Artificial_Intelligence_.html

United States Geospatial Intelligence Foundation. (2024). GEOINT lessons being learned from the Russian-Ukrainian war. USGIF. https://usgif.org/geoint-lessons-being-learned-from-the-russian-ukrainian-war/

Frequently Asked Questions

Q1. Why does SAR imagery annotation require specialist expertise that optical imagery annotation does not?

SAR imagery captures radar backscatter rather than visual appearance. Objects appear as characteristic backscatter patterns determined by their material properties and surface geometry rather than their colour or visual texture. Annotators need training in radar physics to reliably interpret these signatures, which are not legible to annotators with only optical imagery experience.

Q2. What is change detection in geospatial AI, and why is annotation for it challenging?

Change detection identifies genuine physical changes between satellite images of the same location at different times. Annotation is challenging because images captured at different times differ due to illumination angle, seasonal vegetation state, cloud shadow, and sensor calibration variation, all of which can appear as a change but are not operationally significant. Annotation protocols must be specifically designed to distinguish genuine change from these imaging artifacts.

Q3. How do government geospatial AI programs handle security constraints on annotation?

Classified imagery cannot be annotated on standard commercial platforms and may require annotators with appropriate security clearances. Many programs address this by developing training data on unclassified commercial imagery and then applying trained models in classified environments, separating the annotation workflow from the most sensitive collection.

Q4. Why do geospatial AI models trained on single-modality data fail at sensor fusion applications?

Single-modality models learn features specific to one sensor type. When applied to fused data, they cannot associate corresponding features across modalities, and the cross-modal relationships that provide the most operationally useful intelligence are not represented in their training data. Fusion model training requires cross-modal annotation where the same objects are consistently labeled across all data sources.

Q5. What annotation requirements are specific to humanitarian and disaster response geospatial AI?

Humanitarian damage assessment models need annotation datasets that cover multiple geographic regions, construction types, and disaster types to generalize reliably to novel events. They also need to be trained and ready for rapid deployment, which requires pre-built, diverse training datasets rather than post-event annotation when response time is critical.

Umang architects and drives full-funnel content marketing strategies for AI training data solutions, spanning computer vision, data annotation, data labelling, and Physical and Generative AI services. He works closely with senior leadership to shape DDD’s market positioning, translating complex technical capabilities into compelling narratives that resonate with global AI innovators.
