What Is Occupancy Grid Mapping and Why Autonomous Vehicles Need It

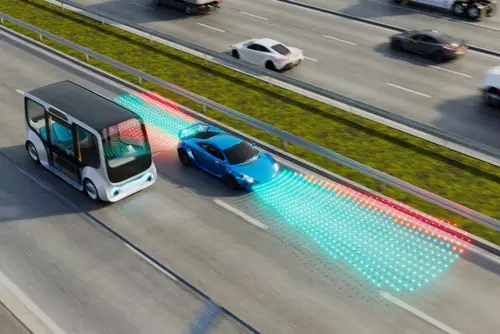

Object detection has been the dominant paradigm for autonomous vehicle perception. A model identifies a car, a pedestrian, and a traffic cone and assigns a bounding box to each. The approach works well for the objects it was trained to recognize. It fails on everything else. A cardboard box fallen from a truck, an unusually shaped barrier, a concrete block at the edge of a construction zone: anything outside the model’s defined object categories either gets misclassified or missed entirely. For a system where a missed detection can mean a collision, this limitation is not acceptable.

Consider an intersection scenario: a vehicle is approaching a blind corner where a pedestrian is about to step off the curb. The vehicle’s LiDAR and cameras see nothing until the pedestrian enters their field of view, leaving almost no time to react. An occupancy grid model registers occupied voxels as soon as any part of the pedestrian’s body comes into sensor view, before a classifier has enough evidence to label them as “pedestrian.” That fraction of a second of earlier detection can be what separates a near-miss from a collision. This is the safety argument for occupancy-first perception, and it is why the training data that builds these models carries such high operational stakes.

Occupancy grid mapping addresses this problem at the representation level rather than the detection level. Instead of asking what objects are present, it asks which portions of three-dimensional space are occupied and which are free to drive through. Every voxel in the grid around the vehicle is assigned an occupancy probability regardless of whether the thing occupying it has a name in the object taxonomy. A fallen ladder, an unmarked barrier, a pedestrian partially occluded behind a parked car: all of these register as occupied space. The vehicle’s planning system can avoid them without the perception system needing to classify them first.

This post explains what occupancy grid mapping is, how it differs from object-detection-based perception, what training data it requires, and where the annotation challenges lie for teams building occupancy-based perception systems. 3D LiDAR data annotation and multisensor fusion data services are the two annotation capabilities most directly required for occupancy grid training data programs.

Key Takeaways

- Occupancy grid mapping represents the environment as a probabilistic three-dimensional grid where each cell encodes whether that space is occupied, free, or unobserved, rather than classifying objects within fixed taxonomies.

- The key advantage over object-centric perception is that occupancy grids handle unknown and out-of-category objects by treating them as occupied space, rather than missing or misclassifying them.

- Generating accurate ground truth occupancy labels for training is one of the hardest data problems in autonomous driving. Dense voxel-level labels require significantly more effort than bounding box annotation.

- Occupancy grids integrate naturally with multi-sensor fusion, combining LiDAR point clouds, camera imagery, and radar returns into a single unified spatial representation that no individual sensor can produce alone.

- Semantic occupancy prediction extends the basic grid by assigning class labels to occupied voxels, enabling the vehicle to understand not just that space is blocked but what kind of object is blocking it.

From Objects to Space: The Core Idea

The Limits of Bounding Box Perception

Bounding box object detection treats the environment as a collection of known object types. The model is trained on labeled examples of those types and learns to find them in sensor data. This works reliably for the object categories that are well-represented in training data and appear in forms the model has seen before. It becomes unreliable in precisely the conditions where reliable perception matters most: novel object configurations, unusual obstacles, partially visible objects, and anything that does not fit a predefined category with enough training examples.

Occupancy grid mapping reframes the perception problem. Rather than detecting specific objects, the model estimates the probability that each unit of three-dimensional space around the vehicle is occupied by any physical matter. This geometry-first approach handles novel objects and unusual configurations without requiring them to be labeled as specific categories during training. The survey by Xu et al. on occupancy perception for autonomous driving describes this as a shift from object-centric to grid-centric perception, where the fundamental representation is spatial rather than categorical.

What an Occupancy Grid Actually Contains

A three-dimensional occupancy grid divides the space around the vehicle into small cubes called voxels. Each voxel is assigned a value that encodes the probability of that space being occupied by a physical object. Free space has a low occupancy probability. Space occupied by a solid surface has a high occupancy probability. Space that no sensor has observed is marked as unobserved rather than assumed to be free. In semantic occupancy prediction, occupied voxels are additionally assigned a semantic class: vehicle, pedestrian, road surface, vegetation, and so on. This allows the planning system to use not just the occupancy state but the type of obstacle when computing trajectories.
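
As a rough illustration of the three voxel states described above, the sketch below stores an occupancy probability per voxel plus a separate observation mask, then collapses them into discrete states. The grid extent, the 0.4 m voxel size, the 0.5 threshold, and the state codes are all assumptions chosen for the example, not values taken from any particular dataset.

```python
import numpy as np

# Hypothetical state codes; real datasets define their own conventions.
UNOBSERVED, FREE, OCCUPIED = -1, 0, 1

def make_grid(extent_m=(80.0, 80.0, 6.4), voxel_m=0.4):
    """Return an occupancy-probability grid and an observation mask."""
    shape = tuple(int(round(e / voxel_m)) for e in extent_m)
    prob = np.full(shape, 0.5, dtype=np.float32)  # 0.5 = no evidence either way
    observed = np.zeros(shape, dtype=bool)        # False = no sensor has seen this voxel
    return prob, observed

def to_states(prob, observed, threshold=0.5):
    """Collapse probabilities into occupied / free / unobserved."""
    states = np.full(prob.shape, UNOBSERVED, dtype=np.int8)
    states[observed & (prob < threshold)] = FREE
    states[observed & (prob >= threshold)] = OCCUPIED
    return states

prob, observed = make_grid()
prob[100, 100, 4], observed[100, 100, 4] = 0.95, True  # a solid surface
prob[100, 99, 4], observed[100, 99, 4] = 0.05, True    # observed empty space
states = to_states(prob, observed)
```

Keeping the observation mask separate from the probability means an unobserved voxel is never silently read as free space.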

How Occupancy Grids Are Generated from Sensor Data

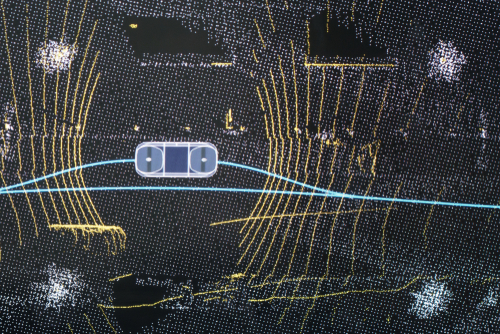

LiDAR as the Primary Input

LiDAR provides the most direct input for occupancy grid construction. Laser pulses measure the distance to surfaces in all directions, producing point clouds that represent where physical matter is present in three-dimensional space. Aggregating LiDAR returns over time accumulates a denser picture of the environment than any single scan can provide, and the resulting point cloud maps naturally onto the voxel grid structure of occupancy representation. LiDAR annotation for autonomous driving covers the annotation methods used to label point clouds, which are the same point clouds that occupancy grid training uses as both input and ground truth source.
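
The mapping from point cloud to voxel grid can be sketched in a few lines. This is a minimal example, assuming points are already expressed in the vehicle frame; the function name `voxelize`, the grid origin, and the voxel size are illustrative choices.

```python
import numpy as np

def voxelize(points, origin, voxel_m, shape):
    """Mark every voxel that contains at least one LiDAR return as occupied."""
    idx = np.floor((points - origin) / voxel_m).astype(np.int64)
    # Discard returns that fall outside the grid volume
    in_bounds = np.all((idx >= 0) & (idx < np.array(shape)), axis=1)
    idx = idx[in_bounds]
    occupied = np.zeros(shape, dtype=bool)
    occupied[idx[:, 0], idx[:, 1], idx[:, 2]] = True
    return occupied

origin = np.array([-40.0, -40.0, -1.0])   # grid corner in the vehicle frame (assumed)
points = np.array([
    [0.10, 0.10, 0.0],    # two returns that land in the same voxel
    [0.20, 0.15, 0.1],
    [100.0, 0.0, 0.0],    # a return beyond the grid extent
])
occupied = voxelize(points, origin, voxel_m=0.4, shape=(200, 200, 16))
```

Here the first two returns fall into one voxel and the third is dropped, so exactly one voxel ends up occupied.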

The limitation of LiDAR-only occupancy grids is that LiDAR only samples surfaces that laser pulses reach. Occluded regions behind an obstacle, the sides of a vehicle that do not face the sensor, and overhead objects outside the sensor’s field of view all produce sparse or missing data. Training an occupancy prediction model requires ground truth that includes these occluded regions, which is one reason occupancy annotation is harder than standard point cloud labeling.
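
The distinction between free and unobserved space can be made concrete with a simple ray-casting sketch. It samples each ray at fixed steps between the sensor and the return, which is an approximation of exact voxel traversal; the function name, state codes, and grid parameters are assumptions for illustration.

```python
import numpy as np

UNOBSERVED, FREE, OCCUPIED = -1, 0, 1  # hypothetical state codes

def carve_free_space(sensor, points, origin, voxel_m, shape):
    """Label voxels along each ray as free, the hit voxel as occupied,
    and leave everything behind the hit unobserved."""
    states = np.full(shape, UNOBSERVED, dtype=np.int8)
    bounds = np.array(shape)
    for p in points:
        length = np.linalg.norm(p - sensor)
        n_steps = max(int(length / (0.5 * voxel_m)), 1)
        # Sample positions from the sensor up to, but not including, the return
        ts = np.linspace(0.0, 1.0, n_steps, endpoint=False)
        samples = sensor + ts[:, None] * (p - sensor)
        idx = np.floor((samples - origin) / voxel_m).astype(np.int64)
        idx = idx[np.all((idx >= 0) & (idx < bounds), axis=1)]
        states[idx[:, 0], idx[:, 1], idx[:, 2]] = FREE
    # Write hits last so a return always overrides a pass-through
    hit = np.floor((points - origin) / voxel_m).astype(np.int64)
    hit = hit[np.all((hit >= 0) & (hit < bounds), axis=1)]
    states[hit[:, 0], hit[:, 1], hit[:, 2]] = OCCUPIED
    return states

sensor = np.array([0.0, 0.0, 0.0])
points = np.array([[4.0, 0.0, 0.0]])  # one return 4 m ahead
states = carve_free_space(sensor, points, origin=np.array([-10.0, -10.0, -2.0]),
                          voxel_m=0.5, shape=(40, 40, 8))
```

The voxel directly behind the return stays unobserved, which is exactly the region that occupancy ground truth has to fill in by other means.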

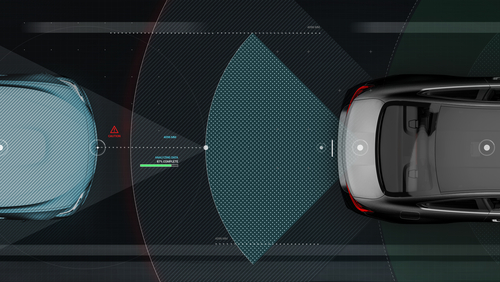

Camera and Radar Integration

Camera imagery provides semantic texture that LiDAR point clouds lack: color, surface appearance, lane markings, and the visual cues that allow objects to be classified rather than just located. Vision-centric occupancy prediction uses camera images as the primary input and lifts two-dimensional image features into three-dimensional voxel space. This approach is cheaper than LiDAR-centric systems at the hardware level but requires more sophisticated models and is more sensitive to lighting and weather conditions. The role of multisensor fusion data in Physical AI examines how radar returns, which penetrate adverse weather conditions that degrade camera and LiDAR performance, contribute to occupancy estimation in conditions where other sensors are unreliable.

Multi-modal occupancy models combine all three sensor types, using LiDAR for precise geometry, cameras for semantic information, and radar for all-weather robustness. The occupancy grid serves as the common spatial representation into which each sensor’s contribution is fused. This fusion architecture is more robust than any single-sensor approach but increases the complexity of the training data requirements, since ground truth needs to be consistent across all sensor modalities.
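
Modern multi-modal occupancy models learn their fusion inside a neural network, but the idea of a shared grid receiving evidence from several sensors can be illustrated with the classical log-odds update. The sketch below assumes each sensor has already produced a per-voxel occupancy probability and that their evidence is independent; both are simplifying assumptions, and the probability values are made up.

```python
import numpy as np

def logit(p):
    """Convert a probability to log-odds."""
    return np.log(p / (1.0 - p))

def fuse(prob_maps, prior=0.5, eps=1e-6):
    """Fuse per-sensor occupancy probabilities by summing log-odds evidence."""
    total = np.full(prob_maps[0].shape, logit(prior))
    for p in prob_maps:
        p = np.clip(p, eps, 1.0 - eps)   # avoid infinite log-odds
        total += logit(p) - logit(prior)
    return 1.0 / (1.0 + np.exp(-total))  # back to probability

# Three voxels as seen by three sensors (illustrative values)
lidar = np.array([0.9, 0.5, 0.1])
camera = np.array([0.7, 0.5, 0.4])
radar = np.array([0.6, 0.8, 0.5])
fused = fuse([lidar, camera, radar])
```

In the second voxel only radar carries information (the other two report 0.5), so the fused value equals the radar estimate; in the first, agreeing sensors reinforce each other.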

The Training Data Challenge

Why Occupancy Ground Truth Is Hard to Generate

Generating accurate ground truth for occupancy prediction is one of the most technically demanding problems in autonomous driving data. Standard bounding box labels identify the location and class of objects but do not specify the occupancy state of every voxel in three-dimensional space. An occupancy training dataset needs to specify, for every voxel in the grid around the vehicle, whether that voxel is occupied, free, or unobserved. Depending on range and voxel size, a single scene may contain anywhere from hundreds of thousands to tens of millions of voxels, making manual voxel-by-voxel annotation impractical.
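
A quick calculation shows how fast the voxel count grows as resolution increases. The extents and voxel sizes below are hypothetical configurations chosen for the arithmetic, not the specification of any particular dataset.

```python
def voxel_count(extent_m, voxel_m):
    """Number of voxels in a grid covering extent_m at the given voxel size."""
    n = 1
    for e in extent_m:
        n *= round(e / voxel_m)
    return n

coarse = voxel_count((80.0, 80.0, 6.4), 0.4)      # 200 x 200 x 16
medium = voxel_count((102.4, 102.4, 6.4), 0.2)    # 512 x 512 x 32
fine = voxel_count((102.4, 102.4, 6.4), 0.1)      # 1024 x 1024 x 64

for name, n in [("coarse", coarse), ("medium", medium), ("fine", fine)]:
    print(f"{name}: {n:,} voxels per scene")
```

Halving the voxel size multiplies the count by eight, which is why per-voxel manual labeling stops being feasible long before the grid is fine enough to resolve small obstacles.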

The dominant approach to occupancy ground truth generation is semi-automatic: aggregating LiDAR scans over time to densify the point cloud, then using the densified cloud to determine voxel occupancy. Post-processing fills in occluded regions using geometric reasoning, and manual annotation corrects errors in the automatically generated labels. Even with automation, creating high-quality occupancy ground truth requires more effort per scene than bounding box annotation.
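
The aggregation step can be sketched as transforming each scan into a shared world frame using the ego pose at capture time, then stacking the results. This minimal example assumes 4x4 sensor-to-world pose matrices are available and that the scene is static; moving objects would need separate handling. The helper `pose` is a hypothetical convenience for building planar poses.

```python
import numpy as np

def aggregate_scans(scans, poses):
    """Transform each scan from its sensor frame into the world frame and stack."""
    world = []
    for pts, T in zip(scans, poses):
        homo = np.hstack([pts, np.ones((len(pts), 1))])  # homogeneous coordinates
        world.append((homo @ T.T)[:, :3])
    return np.vstack(world)

def pose(tx, ty, yaw):
    """Build a 4x4 sensor-to-world transform from a planar pose (hypothetical helper)."""
    c, s = np.cos(yaw), np.sin(yaw)
    T = np.eye(4)
    T[:2, :2] = [[c, -s], [s, c]]
    T[:2, 3] = [tx, ty]
    return T

# The same wall point seen from two ego positions, 2 m apart
scans = [np.array([[5.0, 0.0, 0.0]]), np.array([[3.0, 0.0, 0.0]])]
poses = [pose(0.0, 0.0, 0.0), pose(2.0, 0.0, 0.0)]
cloud = aggregate_scans(scans, poses)
```

Both returns land on the same world coordinate, which is what lets repeated passes densify a static surface instead of smearing it.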

Sparse vs. Dense Occupancy Labels

A common quality problem in occupancy training data is sparsity. LiDAR-derived occupancy labels mark as occupied only those voxels where laser returns were observed, leaving the interior of solid objects unmarked. A car in the scene may have LiDAR returns on its visible surfaces while its interior voxels are incorrectly treated as unobserved. Training on sparse labels teaches the model to predict sparse occupancy, which underrepresents the true physical volume of obstacles and causes the planning system to underestimate the space they actually occupy. Densification pipelines address this by filling the interior of solid objects using geometric and semantic reasoning, but densification requires validation to ensure it does not introduce errors in complex scenes with overlapping or partially occluded objects.
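
One simple form of densification, shown here only as a stand-in for the more elaborate pipelines used in practice, fills every voxel whose center lies inside an annotated 3D box. The sketch assumes a box annotation (center, size, yaw) already exists for the object; the function name and grid parameters are illustrative.

```python
import numpy as np

def fill_box(occupied, center, size, yaw, origin, voxel_m):
    """Mark every voxel whose center lies inside a yaw-rotated 3D box as occupied."""
    nx, ny, nz = occupied.shape
    ii, jj, kk = np.meshgrid(np.arange(nx), np.arange(ny), np.arange(nz), indexing="ij")
    centers = origin + (np.stack([ii, jj, kk], axis=-1) + 0.5) * voxel_m
    d = centers - np.asarray(center)
    c, s = np.cos(yaw), np.sin(yaw)
    # Rotate offsets into the box frame (inverse of the box yaw)
    local_x = c * d[..., 0] + s * d[..., 1]
    local_y = -s * d[..., 0] + c * d[..., 1]
    half = np.asarray(size) / 2.0
    inside = ((np.abs(local_x) <= half[0])
              & (np.abs(local_y) <= half[1])
              & (np.abs(d[..., 2]) <= half[2]))
    return occupied | inside

# Sparse label: a single surface return on a 4 m x 2 m x 2 m object
sparse = np.zeros((20, 20, 4), dtype=bool)
sparse[6, 8, 1] = True
dense = fill_box(sparse, center=(5.0, 5.0, 1.0), size=(4.0, 2.0, 2.0), yaw=0.0,
                 origin=np.array([0.0, 0.0, 0.0]), voxel_m=0.5)
```

A single surface voxel becomes the object's full volume. The same operation applied to a wrong or overlapping box would fill space that is actually free, which is why densified labels need validation.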

DDD’s 3D LiDAR data annotation capability is built around exactly this challenge. Annotation teams are trained in point cloud geometry and the specific failure modes that arise when densification is applied to occluded or multi-object scenes. That specialist depth is what separates labeled datasets that train reliable occupancy models from datasets that look complete on paper but introduce systematic errors at the decision boundaries that matter most. For programs combining LiDAR with camera and radar inputs, multisensor fusion data services extend this capability across modalities, ensuring that semantic labels remain consistent across all sensor streams feeding the occupancy model.

Semantic Occupancy: Adding Meaning to Space

From Binary to Semantic

Basic occupancy grids encode only geometry: each observed voxel is occupied or free, with no indication of what occupies it. Semantic occupancy grids add a class label to each occupied voxel, encoding not just that something is there but what kind of thing it is. This matters for planning because the appropriate response to occupied space depends on what is occupying it. A pedestrian voxel requires a different planning response than a static barrier voxel, even if both are physically blocking the same trajectory. Semantic occupancy prediction thus combines the geometric completeness of occupancy representation with the categorical richness of semantic segmentation.

The annotation requirement for semantic occupancy is correspondingly more demanding. Each occupied voxel needs not just an occupancy state but a class label, and class labels need to be consistent across all the voxels representing the same physical object. The class taxonomy also needs to include an explicit general-object category for occupied space that does not fit any predefined class, since one of the key advantages of occupancy representation is handling novel objects without missing them entirely. Sensor data annotation at the voxel level, with consistent semantic labeling across the full three-dimensional grid, is the operational capability that semantic occupancy training data requires.
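
A consistency check of this kind might look like the sketch below. It assumes each occupied voxel carries both a class id and an instance id, assigns every instance its majority class, and falls back to a general-object class when the votes disagree too much. The class ids, the `-1` convention for unassigned voxels, and the 0.6 agreement threshold are hypothetical; a production workflow might route low-agreement instances to manual review instead.

```python
import numpy as np

GENERAL_OBJECT = 0  # hypothetical id for "occupied, but not in the taxonomy"

def harmonize_labels(semantic, instance, min_agreement=0.6):
    """Give all voxels of one instance the same class via majority vote."""
    out = semantic.copy()
    for inst_id in np.unique(instance):
        if inst_id < 0:          # -1 = voxel not assigned to any instance
            continue
        mask = instance == inst_id
        classes, counts = np.unique(semantic[mask], return_counts=True)
        winner = classes[np.argmax(counts)]
        agreement = counts.max() / counts.sum()
        # Low agreement: do not guess, fall back to the general-object class
        out[mask] = winner if agreement >= min_agreement else GENERAL_OBJECT
    return out

# Flattened list of occupied voxels: class id and instance id per voxel
semantic = np.array([1, 1, 2, 3, 4, 5])
instance = np.array([7, 7, 7, 8, 8, -1])
clean = harmonize_labels(semantic, instance)
```

Instance 7 is unified under its majority class, instance 8 has no clear majority and becomes a general object, and the unassigned voxel keeps its original label.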

Occupancy Grids and the Path to Full Scene Understanding

From Prediction to Forecasting

Current occupancy prediction models estimate the state of the grid at the current moment from recent sensor observations. Occupancy forecasting extends this to predict how the grid will change in the near future: how occupied voxels corresponding to moving vehicles will shift position, how pedestrian trajectories will evolve, and where free space will open or close over the next few seconds. This temporal extension is essential for planning, which needs to act on future scene states rather than just current ones. Forecasting models require training data that includes ground truth occupancy states across multiple consecutive time steps, annotated with the motion vectors that connect occupied voxels between frames.
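
The link between consecutive ground truth frames can be illustrated by moving each occupied voxel along its motion vector. This sketch assumes flow is stored as an integer voxel offset per cell, which is a simplification; real annotations typically carry metric velocities that must be scaled by the frame interval.

```python
import numpy as np

def advect(occupied, flow_vox):
    """Shift each occupied voxel by its per-voxel flow to get the next-frame grid."""
    nxt = np.zeros_like(occupied)
    src = np.argwhere(occupied)           # (N, 3) indices of occupied voxels
    dst = src + flow_vox[occupied]        # (N, 3) destination indices
    # Drop voxels that leave the grid volume
    ok = np.all((dst >= 0) & (dst < np.array(occupied.shape)), axis=1)
    dst = dst[ok]
    nxt[dst[:, 0], dst[:, 1], dst[:, 2]] = True
    return nxt

occupied = np.zeros((10, 10, 2), dtype=bool)
flow_vox = np.zeros((10, 10, 2, 3), dtype=np.int64)

occupied[2, 5, 0] = True            # a moving object
flow_vox[2, 5, 0] = [3, 0, 0]       # moves 3 voxels along x per frame
occupied[7, 1, 1] = True            # a static obstacle (zero flow)

next_frame = advect(occupied, flow_vox)
```

Comparing a grid advected this way with the annotated next frame is one way to check that motion vectors and occupancy labels agree across time steps.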

The shift toward occupancy-based scene representation also connects to end-to-end autonomous driving architectures, where a single model takes sensor inputs and produces vehicle control outputs without an explicit object detection step in between. Occupancy grids provide a compact and complete spatial representation that these end-to-end models can use as an intermediate representation between raw sensor data and control decisions. Vision-language-action models and their implications for autonomy examine how unified architectures are changing the data requirements for full-stack autonomous driving systems.

How Digital Divide Data Can Help

Digital Divide Data provides the annotation services that occupancy-based perception programs require, from LiDAR point cloud labeling and densification validation through multi-modal sensor fusion annotation and semantic voxel labeling.

For programs generating LiDAR-based occupancy ground truth, 3D LiDAR data annotation covers point cloud labeling at the precision levels occupancy training requires, including annotation of occluded regions and validation of automatic densification outputs. Annotation workflows are designed to maintain geometric consistency across the voxel grid rather than labeling individual objects in isolation.

For multi-modal occupancy programs combining LiDAR, camera, and radar, multisensor fusion data services and sensor data annotation provide cross-modal annotation consistency so that semantic labels are coherent across all sensor inputs feeding the occupancy model. HD map annotation services support the static scene understanding that occupancy models rely on for distinguishing drivable surface from occupied obstacle space.

For ADAS programs at earlier stages of perception development, ADAS data services and autonomous driving data services cover the full range of perception annotation from bounding box labeling through the transition to occupancy-based ground truth generation as programs advance toward more complete scene representation.

Connect with us to build the occupancy training data that gives your autonomous vehicle perception genuine spatial completeness.

Conclusion

Occupancy grid mapping represents a fundamental shift in how autonomous vehicles understand their environment. Moving from object-centric detection to geometry-first spatial representation closes the gap between what a perception system can handle and what the real world actually contains. Objects that fall outside a predefined taxonomy, partially occluded obstacles, and unusual configurations that bounding box detection would miss all register as occupied space, and the planning system can respond appropriately without needing to classify them first.

The training data requirements that come with this shift are more demanding than those for standard object detection. Voxel-level ground truth annotation, dense occupancy label generation, cross-modal consistency, and temporal consistency for forecasting models all require annotation infrastructure and expertise that bounding box workflows do not address. Programs that invest in occupancy annotation quality build perception systems that are genuinely more robust. The role of multisensor fusion data in Physical AI examines the broader data architecture that occupancy prediction sits within, as one component of the multi-layer perception stack that full autonomy requires.

References

Xu, H., Chen, J., Meng, S., Wang, Y., & Chau, L.-P. (2024). A survey on occupancy perception for autonomous driving: The information fusion perspective. Information Fusion, 102649. https://doi.org/10.1016/j.inffus.2024.102649

Frequently Asked Questions

Q1. What is the difference between an occupancy grid and a standard object detection output?

Object detection outputs bounding boxes around specific object categories. An occupancy grid encodes the probability that each unit of three-dimensional space is occupied, regardless of object category. Occupancy grids can represent objects outside the training taxonomy and handle partial occlusion more completely, since they model space rather than assuming that detected objects account for all obstacles.

Q2. Why is occupancy ground truth harder to generate than bounding box labels?

Bounding box labels identify the location and class of objects. Occupancy ground truth requires the occupancy state of every voxel in a three-dimensional grid, which, at typical resolutions, means millions of voxels per scene. Manual annotation at that granularity is impractical, so occupancy labels are generated semi-automatically from aggregated LiDAR scans with post-processing and manual correction, a process that requires more infrastructure and validation effort than standard bounding box annotation.

Q3. What is semantic occupancy prediction, and how does it differ from basic occupancy?

Basic occupancy prediction assigns each observed voxel an occupancy state, occupied or free, with no class information. Semantic occupancy prediction additionally assigns a class label to occupied voxels, indicating what kind of object is occupying that space. This allows the planning system to distinguish between different types of obstacles and respond appropriately, rather than treating all occupied space identically.

Q4. How do multiple sensors contribute to a single occupancy grid?

Different sensors provide complementary information. LiDAR provides precise geometry. Cameras provide semantic and texture information that enables class labeling. Radar provides occupancy estimation in weather conditions that degrade LiDAR and camera performance. Multi-modal occupancy models fuse these contributions into a single voxel grid, using each sensor’s strengths to fill the gaps left by the others.

Q5. What is occupancy forecasting, and why does it matter for autonomous vehicles?

Occupancy forecasting predicts how the occupancy grid will change over the next few seconds based on current observations. This is essential for planning, which needs to reason about future states of the environment rather than just the current state. A vehicle turning into an intersection, for example, needs to predict where other vehicles and pedestrians will be when it completes the turn, not just where they are now.
