Major Challenges in Scaling Autonomous Fleet Operations

The rapid emergence of autonomous fleet operations marks a transformative moment in the evolution of logistics and mobility.

From self-driving trucks navigating interstate highways to autonomous delivery robots operating in dense urban cores, the application of autonomy in fleet operations is shifting from experimental pilots to real-world commercial deployments.

Yet, while technical demonstrations have proven the feasibility of autonomy in controlled environments, scaling these systems across regions, cities, and industries presents far more complex challenges.

This blog explores the systemic, operational, and technological challenges in scaling autonomous fleet operations from limited pilots to full-scale deployment, and outlines the best practices and emerging solutions that can enable scalable, reliable, and safe autonomy in real-world environments.

Current State of Autonomous Fleet Deployment

The landscape of autonomous fleet deployment has shifted dramatically in the past few years. What were once isolated pilot programs limited to test tracks or short, well-mapped urban loops are now evolving into broader, more ambitious initiatives aimed at commercial viability.

In the United States, companies such as Aurora, Waymo, and Kodiak Robotics are conducting regular autonomous freight runs across major highways, often with minimal human intervention. These pilots are not merely technological experiments; they are live operational tests of how autonomy performs in the unpredictable conditions of real-world logistics.

Automation offers potential reductions in operating costs, improved asset utilization, and mitigation of persistent driver shortages. Particularly in logistics and delivery sectors, where margins are tight and demand for on-time performance is high, autonomy can unlock efficiencies that traditional fleets struggle to achieve.

As promising as these developments are, the path to scalable deployment is fraught with technical, regulatory, operational, and social challenges that must be addressed with equal urgency and depth.

Major Challenges in Scaling Autonomous Fleet Operations

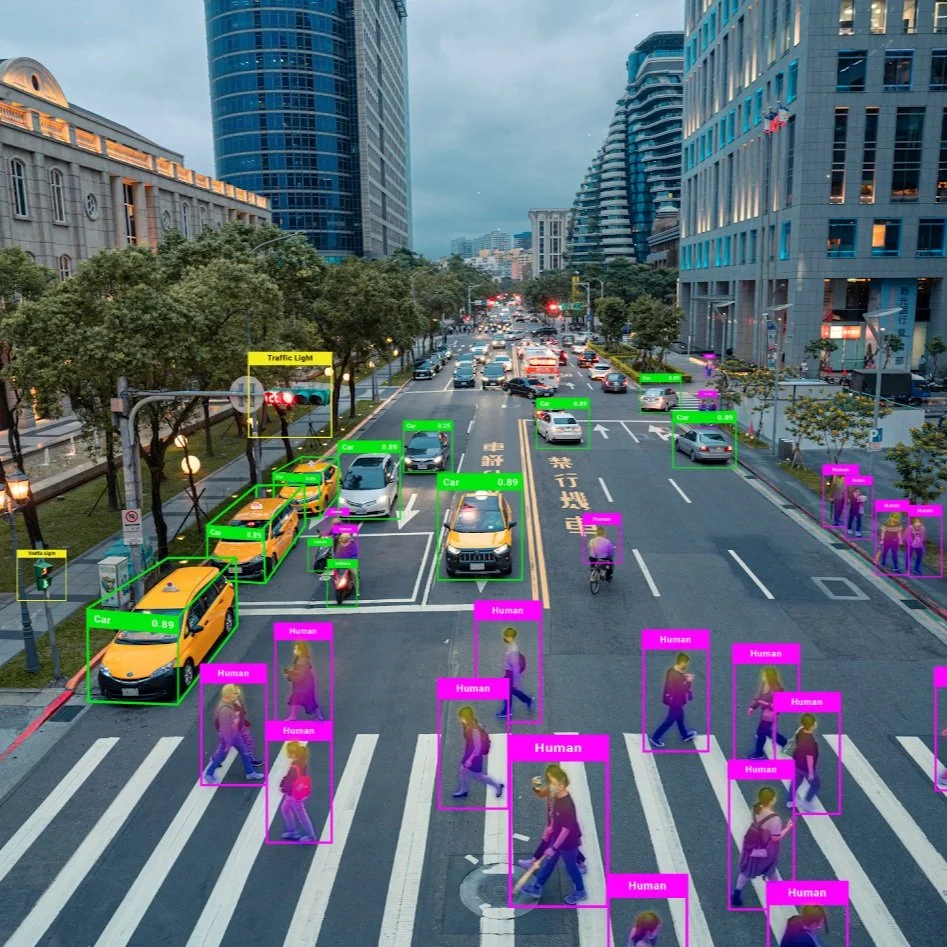

AI System Robustness and Testing

Despite the impressive progress in autonomous vehicle (AV) technology, ensuring consistent AI performance in unpredictable, real-world conditions remains a major barrier. AI models trained under constrained scenarios often struggle when exposed to novel edge cases, such as rare weather phenomena, complex pedestrian behavior, or unusual road geometry. The variability and complexity of mixed traffic environments, where human drivers, cyclists, and pedestrians coexist, further compound this issue.

Autonomous Driving Systems (ADS) and Advanced Driver Assistance Systems (ADAS) need to handle long-tail events without fail. This demands not just more training data, but smarter and more rigorous testing methodologies. Europe’s regulatory approach, including the AI Act, is pushing for transparent, auditable, and safety-verified AI systems. These legislative pressures are forcing developers to adopt explainability tools, synthetic data augmentation, and safety-case-based validation frameworks that go far beyond traditional software testing norms.

Data Management and Federated Learning

Autonomous fleets are only as smart as the data they consume, but scaling data collection and learning across regions introduces critical constraints. Instead of transmitting vast amounts of raw sensor data to central servers, federated learning enables vehicles to collaboratively train AI models while keeping data on the device, thus preserving privacy and reducing bandwidth consumption.

However, federated learning introduces new challenges of its own: maintaining consistency across heterogeneous data sources, handling asynchronous updates, and ensuring resilience to model drift. Privacy regulations such as GDPR in Europe, along with state-level privacy laws in parts of the U.S., complicate centralized approaches, making federated or hybrid solutions increasingly attractive but operationally complex.
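The aggregation step at the heart of federated learning can be illustrated with a minimal sketch of sample-weighted averaging (often called federated averaging). Everything here is an assumption made for illustration: the function name, the flat list-of-floats weight format, and the three example vehicles are hypothetical, and a production system would also need to handle the asynchronous updates and drift mentioned above.

```python
from typing import List, Tuple

Weights = List[float]


def federated_average(updates: List[Tuple[Weights, int]]) -> Weights:
    """Combine per-vehicle model weights, weighting each by its local sample count.

    Only the weights and sample counts leave the vehicle; raw sensor data stays on it.
    """
    if not updates:
        raise ValueError("at least one vehicle update is required")
    total_samples = sum(n for _, n in updates)
    if total_samples <= 0:
        raise ValueError("sample counts must sum to a positive number")
    dim = len(updates[0][0])
    averaged = [0.0] * dim
    for weights, n in updates:
        if len(weights) != dim:
            raise ValueError("all updates must have the same number of weights")
        for i, w in enumerate(weights):
            averaged[i] += w * n / total_samples
    return averaged


# Hypothetical updates from three vehicles: (local weights, local sample count).
vehicle_updates = [
    ([0.0, 1.0], 100),
    ([1.0, 1.0], 300),
    ([2.0, 3.0], 100),
]
global_weights = federated_average(vehicle_updates)
print(global_weights)
```

Weighting by sample count means a vehicle that has seen more data pulls the global model further toward its local result, which is one reason heterogeneous data sources need careful handling.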

Decentralized Coordination and Fleet Optimization

Scaling fleet operations across wide geographies and diverse environments demands more than centralized command-and-control systems. Decentralized coordination relies on multi-agent systems, in which each vehicle or node operates semi-independently while collaborating toward a common fleet objective. This approach supports dynamic task allocation, adaptive routing, and more flexible responses to real-time conditions such as traffic congestion, weather, or shifting customer demands.

Yet implementing decentralized architectures introduces integration and reliability challenges. Ensuring coordination without creating conflicting behaviors across autonomous agents is difficult, especially when fleet members vary in capability or software versioning. Additionally, dynamic rebalancing of resources in open fleet systems, where vehicles might join or leave at will, requires robust protocols and fault-tolerant planning algorithms that are still in active development.
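To make dynamic task allocation in an open fleet more concrete, here is a minimal sketch of a simple auction: each vehicle computes its own bid locally, and each task goes to the lowest available bidder. This is an illustrative stand-in, not the method of any cited paper or vendor; the `Vehicle` class, the distance-based bid, and the task names are all hypothetical.

```python
from dataclasses import dataclass
from math import hypot
from typing import Dict, List, Optional, Tuple

Point = Tuple[float, float]


@dataclass
class Vehicle:
    vehicle_id: str
    position: Point
    busy: bool = False

    def bid(self, task_location: Point) -> Optional[float]:
        """Local decision: bid the straight-line distance, or None if unavailable."""
        if self.busy:
            return None
        dx = self.position[0] - task_location[0]
        dy = self.position[1] - task_location[1]
        return hypot(dx, dy)


def allocate(tasks: Dict[str, Point], fleet: List[Vehicle]) -> Dict[str, Optional[str]]:
    """Give each task to the lowest bidder among vehicles currently in the fleet."""
    assignment: Dict[str, Optional[str]] = {}
    for task_id, location in tasks.items():
        bids = [(v.bid(location), v) for v in fleet]
        valid = [(b, v) for b, v in bids if b is not None]
        if not valid:
            assignment[task_id] = None  # no capacity; the task waits for the next round
            continue
        _, winner = min(valid, key=lambda pair: pair[0])
        winner.busy = True
        assignment[task_id] = winner.vehicle_id
    return assignment


fleet = [Vehicle("av-1", (0.0, 0.0)), Vehicle("av-2", (10.0, 0.0))]
tasks = {"pickup-a": (1.0, 0.0), "pickup-b": (2.0, 0.0), "pickup-c": (5.0, 5.0)}
first_round = allocate(tasks, fleet)

# A new vehicle joins the open fleet and picks up the task that was left over.
fleet.append(Vehicle("av-3", (5.0, 4.0)))
second_round = allocate({"pickup-c": (5.0, 5.0)}, fleet)
print(first_round, second_round)
```

Because bids are computed by each vehicle, a fleet member with different capabilities or software could use a different bidding rule without changing the allocation loop. A greedy auction like this is not globally optimal, which is part of why fault-tolerant planning for open fleets remains an active area.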

Infrastructure Readiness

For autonomous fleets to function reliably at scale, they must operate within a digitally responsive physical environment. Unfortunately, infrastructure readiness remains uneven, particularly across Europe’s urban and rural divides. Many regions still lack consistent roadside units, HD maps, and real-time connectivity such as V2X (Vehicle-to-Everything) networks.

This infrastructural gap limits operational design domains (ODDs) and forces fleet operators to restrict deployments to well-mapped, high-coverage areas. Moreover, discrepancies in infrastructure standards across countries and cities complicate fleet expansion. Without harmonization and public investment in smart infrastructure, the burden of compensating for environmental gaps falls entirely on the AV technology stack, raising costs and complexity.
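One way to picture how infrastructure gaps restrict deployments is an ODD gate that checks each road segment before a vehicle is allowed to operate on it. The sketch below is a simplified assumption, not a standard ODD schema: the field names, the road and weather categories, and the example segments are hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class OperationalDesignDomain:
    allowed_road_types: FrozenSet[str]
    allowed_weather: FrozenSet[str]
    requires_hd_map: bool
    requires_v2x: bool


@dataclass(frozen=True)
class RoadSegment:
    segment_id: str
    road_type: str
    weather: str
    has_hd_map: bool
    has_v2x: bool


def odd_violations(segment: RoadSegment, odd: OperationalDesignDomain) -> List[str]:
    """Return the reasons a segment falls outside the ODD (empty list means allowed)."""
    reasons: List[str] = []
    if segment.road_type not in odd.allowed_road_types:
        reasons.append(f"road type '{segment.road_type}' is outside the ODD")
    if segment.weather not in odd.allowed_weather:
        reasons.append(f"weather '{segment.weather}' is outside the ODD")
    if odd.requires_hd_map and not segment.has_hd_map:
        reasons.append("no HD map coverage")
    if odd.requires_v2x and not segment.has_v2x:
        reasons.append("no V2X connectivity")
    return reasons


# Hypothetical highway ODD that depends on HD maps but not on V2X.
highway_odd = OperationalDesignDomain(
    allowed_road_types=frozenset({"highway"}),
    allowed_weather=frozenset({"clear", "light_rain"}),
    requires_hd_map=True,
    requires_v2x=False,
)
mapped = RoadSegment("seg-1", "highway", "clear", has_hd_map=True, has_v2x=False)
unmapped = RoadSegment("seg-2", "rural", "clear", has_hd_map=False, has_v2x=False)

print(odd_violations(mapped, highway_odd))
print(odd_violations(unmapped, highway_odd))
```

Returning the list of reasons rather than a bare yes or no makes it easier to see which infrastructure gap is blocking expansion into a given area.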

Regulatory Fragmentation

While regulation is crucial for safety and accountability, inconsistent legal frameworks across jurisdictions create friction for scaling efforts. The European Union is moving toward cohesive AV legislation through the AI Act and mobility frameworks, but local interpretations and enforcement still vary. In the United States, autonomy laws are largely state-driven, leading to a patchwork of rules around testing, deployment, and liability.

This regulatory fragmentation is especially problematic for cross-border freight and intercity passenger services. Operators must customize their technology stacks and compliance protocols for each region, undermining economies of scale. Inconsistent liability regimes also leave uncertainty around insurance, legal responsibility in the event of a crash, and standards for remote or teleoperated oversight.

Cybersecurity and Safety Assurance

Connected fleets introduce new attack surfaces. From spoofed GPS signals to remote hijacking of control systems, cyber threats can undermine public trust and endanger lives. As fleet sizes grow, so do the risks of systemic vulnerabilities and cascading failures across shared software dependencies.

Safety assurance mechanisms must therefore go beyond redundancy. They must include real-time threat detection, hardened communication protocols, and robust incident response strategies. The absence of universally accepted safety-case frameworks makes it difficult for regulators and insurers to evaluate risk consistently. Industry consensus around standardized safety validation and transparent reporting mechanisms remains an urgent need.
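As one small example of what a hardened communication protocol can involve, the sketch below signs remote commands with an HMAC and rejects messages that are tampered with or stale. It uses only Python's standard `hmac`, `hashlib`, and `json` modules. The message fields, the five-second freshness window, and the demo key are assumptions for illustration; a real deployment would also need key management, transport encryption, and replay tracking.

```python
import hashlib
import hmac
import json


def sign_command(command: dict, key: bytes) -> str:
    """Return a hex HMAC-SHA256 signature over a canonical JSON encoding."""
    payload = json.dumps(command, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_command(command: dict, signature: str, key: bytes,
                   now: float, max_age_s: float = 5.0) -> bool:
    """Accept a command only if the signature matches and it is recent enough."""
    expected = sign_command(command, key)
    if not hmac.compare_digest(expected, signature):
        return False  # payload was altered or signed with a different key
    issued_at = command.get("issued_at")
    if not isinstance(issued_at, (int, float)):
        return False
    return 0 <= now - issued_at <= max_age_s  # reject stale or future-dated messages


# Hypothetical shared key and command; never hard-code real keys.
key = b"demo-key-not-for-production"
command = {"vehicle_id": "av-1", "action": "pull_over", "issued_at": 1000.0}
signature = sign_command(command, key)

fresh_ok = verify_command(command, signature, key, now=1002.0)
tampered_ok = verify_command(dict(command, action="accelerate"), signature, key, now=1002.0)
stale_ok = verify_command(command, signature, key, now=1100.0)
print(fresh_ok, tampered_ok, stale_ok)
```

The constant-time comparison via `hmac.compare_digest` avoids leaking information about the expected signature through timing differences.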

Read more: How to Conduct Robust ODD Analysis for Autonomous Systems

Best Practices and Emerging Solutions

While the challenges in scaling autonomous fleet operations are significant, the industry is rapidly converging on a set of best practices and solution pathways that can enable progress.

Simulation and Real-World Hybrid Testing

A core principle in developing scalable autonomous systems is the integration of simulation and real-world testing. Simulation environments allow for accelerated training and validation across a wide range of scenarios, including edge cases that are rare or unsafe to reproduce in physical trials. Companies are increasingly building high-fidelity digital twins of roads, vehicles, and traffic behaviors to conduct continuous testing and model refinement.

However, real-world validation remains indispensable. The most successful teams use a hybrid approach, where insights from on-road deployments are used to enrich simulation models, and simulation outputs inform updates to perception, prediction, and control algorithms. This iterative loop improves model robustness and accelerates the safe expansion of operational design domains.

Hybrid Coordination Models for Fleet Management

In response to the limitations of both centralized and fully decentralized fleet management, many organizations are adopting hybrid coordination models. These architectures combine centralized oversight, critical for compliance, safety monitoring, and strategic planning, with local autonomy at the vehicle or node level.

For example, in dynamic environments like last-mile delivery or urban mobility, vehicles may make routing or navigation decisions independently within a set of rules or constraints defined by a central system. This balance allows for responsiveness and scalability while preserving fleet-wide coherence and reliability.
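The split described above can be sketched in a few lines: a central system publishes constraints, and each vehicle chooses its own route within them. This is a minimal illustration under stated assumptions; `CentralPolicy`, `Route`, the segment names, and the time limit are all hypothetical rather than part of any particular fleet platform.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional, Tuple


@dataclass(frozen=True)
class CentralPolicy:
    """Constraints set centrally, for example for compliance or safety reasons."""
    blocked_segments: FrozenSet[str]
    max_route_minutes: float


@dataclass(frozen=True)
class Route:
    name: str
    segments: Tuple[str, ...]
    estimated_minutes: float


def choose_route(candidates: List[Route], policy: CentralPolicy) -> Optional[Route]:
    """Vehicle-level decision: pick the fastest route that satisfies the central policy."""
    allowed = [
        r for r in candidates
        if policy.blocked_segments.isdisjoint(r.segments)
        and r.estimated_minutes <= policy.max_route_minutes
    ]
    # None signals that the vehicle should escalate to central oversight.
    return min(allowed, key=lambda r: r.estimated_minutes, default=None)


policy = CentralPolicy(blocked_segments=frozenset({"school-zone-7"}), max_route_minutes=30.0)
candidates = [
    Route("direct", ("main-st", "school-zone-7"), 12.0),
    Route("bypass", ("main-st", "ring-road"), 18.0),
    Route("scenic", ("river-rd",), 45.0),
]
chosen = choose_route(candidates, policy)
print(chosen.name if chosen else "escalate to central oversight")
```

The vehicle never needs to ask the central system which route to take; it only needs the current policy, so routing stays responsive even when connectivity is intermittent.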

Modular and Standards-Based Software Architecture

To avoid vendor lock-in and ensure long-term flexibility, forward-looking operators are pushing for modular autonomy stacks and standards-based software integration. This includes open APIs for key services such as route planning, fleet diagnostics, and data exchange. It also involves participation in industry-wide efforts to standardize safety cases, logging formats, and cybersecurity protocols.

Modularity not only simplifies integration with existing IT systems but also facilitates component upgrades without requiring full system overhauls. It enables operators to adapt to technological innovation and evolving regulatory expectations without disrupting ongoing operations.

Collaborative Ecosystem Development

Scaling autonomy is not a task any single company can tackle alone. Partnerships between AV developers, fleet operators, infrastructure providers, city planners, and regulators are becoming central to successful deployment. These collaborations allow for coordinated rollout strategies, shared investment in infrastructure, and mutual learning across stakeholders.

In Europe, consortia such as those under the Horizon program are setting an example by bringing together cross-border players to test and refine interoperability standards. In the U.S., public-private partnerships are enabling autonomous freight corridors and pilot zones with shared data and governance models.

Read more: Semantic vs. Instance Segmentation for Autonomous Vehicles

How We Can Help

Digital Divide Data (DDD) enables autonomous fleet operation solutions to run more smoothly, safely, and efficiently with real-time support, expert monitoring, and actionable insights. Our AV expertise allows us to deliver secure, scalable, and high-quality operational services that adapt to the needs of autonomy at scale. Below is a brief overview of our use cases in fleet operations.

RVA UXR Studies: Enhance remote AV-human interactions by analyzing cognitive load, response times, and multi-vehicle control.

DMS / CMS UXR Studies: Improve driver and cabin safety systems with insights into attentiveness and in-cabin behavior for compliance and safety.

Remote Assistance: Provide real-time support via secure telemetry to help AVs navigate dynamic or unforeseen scenarios.

Remote Annotations: Deliver precise event tagging to support faster model training and reduce engineering workload.

Operating Conditions Classification: Track and label AV exposure to road, traffic, and weather conditions to improve model performance and readiness.

Video Snippet Tagging & Classification: Classify critical AV footage at scale to support training, compliance reviews, and incident analysis.

Operational Exposure Analysis: Analyze where and how AVs operate to inform better test strategies and ensure balanced real-world coverage.

Conclusion

Autonomous fleet operations are entering a critical phase. The field has evolved far beyond early proofs of concept, and real-world deployments are now demonstrating the tangible potential of autonomy to transform logistics, public transportation, and mobility services. However, scaling these systems is not a matter of simply deploying more vehicles or writing better code. It requires aligning an entire ecosystem: technical infrastructure, regulatory frameworks, business models, and public trust.

Autonomous fleets are not just vehicles; they are complex, intelligent agents operating within dynamic human systems. Scaling them responsibly is not a sprint, but a long-term endeavor that will reshape the way societies move, work, and connect. The time to solve these challenges is now, while the industry still has the opportunity to build the right systems with intention, foresight, and shared accountability.

Let’s talk about how we can support your fleet operations.

References:

Fernández Llorca, D., Talavera, E., Salinas, R. F., Garcia, F. G., Herguedas, A. L., & Arroyo, R. (2024). Testing autonomous vehicles and AI: Perspectives and challenges. arXiv. https://arxiv.org/abs/2403.14641

Lujak, M., Herrera, J. M., Amorim, P., Lima, F. C., Carrascosa, C., & Julián, V. (2024). Decentralizing coordination in open vehicle fleets for scalable and dynamic task allocation. arXiv. https://arxiv.org/abs/2401.10965

McKinsey & Company. (2024). Will autonomy usher in the future of truck freight transportation? https://www.mckinsey.com/industries/automotive-and-assembly/our-insights/will-autonomy-usher-in-the-future-of-truck-freight-transportation

Edge AI Vision. (2024, October). The global race for autonomous trucks: How the US, EU, and China transform transport. https://www.edge-ai-vision.com/2024/10/the-global-race-for-autonomous-trucks-how-the-us-eu-and-china-transform-transport

Frequently Asked Questions (FAQs)

1. What is an Operational Design Domain (ODD), and why does it matter for scaling fleets?

An Operational Design Domain defines the specific conditions under which an autonomous vehicle is allowed to operate, such as weather, road types, speed limits, and geographic areas. As fleets scale, expanding and validating ODDs across new cities, climates, and terrains becomes critical to ensure safety and performance consistency.

2. How do autonomous fleets handle edge cases like emergency vehicles or construction zones?

Handling edge cases remains one of the hardest challenges in autonomy. AVs use perception models trained on vast datasets and real-time sensor input to detect and respond to unusual scenarios. However, most systems still rely on remote assistance or cautious fallback maneuvers when encountering unfamiliar or ambiguous situations.

3. What role does teleoperation play in autonomous fleet deployments?

Teleoperation allows human operators to remotely intervene when an AV encounters a situation it cannot handle autonomously. This is especially useful in early deployments and mixed-traffic environments. As fleets scale, teleoperation support must be robust, low-latency, and integrated with real-time fleet monitoring systems.

4. How do companies assess ROI when deploying autonomous fleets?

Return on investment is evaluated based on several factors: reduction in labor costs, increased uptime, improved fuel efficiency or energy use, safety improvements, and operational scale. However, ROI must also account for the significant up-front investment in technology, infrastructure, and compliance.
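As a rough illustration of how these factors combine, the sketch below computes a simple payback period: up-front investment divided by net annual benefit. The function name and every number in the example are placeholders chosen for easy arithmetic, not figures from any real deployment, and the calculation ignores discounting, financing, and ramp-up time.

```python
def payback_period_years(upfront_investment: float,
                         annual_savings: float,
                         annual_operating_cost: float) -> float:
    """Years needed for net annual benefit to cover the up-front investment.

    Returns infinity when the added operating cost cancels out the savings.
    """
    net_annual_benefit = annual_savings - annual_operating_cost
    if net_annual_benefit <= 0:
        return float("inf")
    return upfront_investment / net_annual_benefit


# Placeholder inputs for illustration only (arbitrary currency units).
years = payback_period_years(
    upfront_investment=1_000_000,   # technology, infrastructure, compliance
    annual_savings=400_000,         # labor, uptime, and energy savings combined
    annual_operating_cost=150_000,  # remote assistance, maintenance, connectivity
)
print(years)
```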

Umang architects and drives full-funnel content marketing strategies for AI training data solutions, spanning computer vision, data annotation, data labelling, and Physical and Generative AI services. He works closely with senior leadership to shape DDD’s market positioning, translating complex technical capabilities into compelling narratives that resonate with global AI innovators.
