Enhancing Image Categorization with the Quantized Object Detection Model in Surveillance Systems

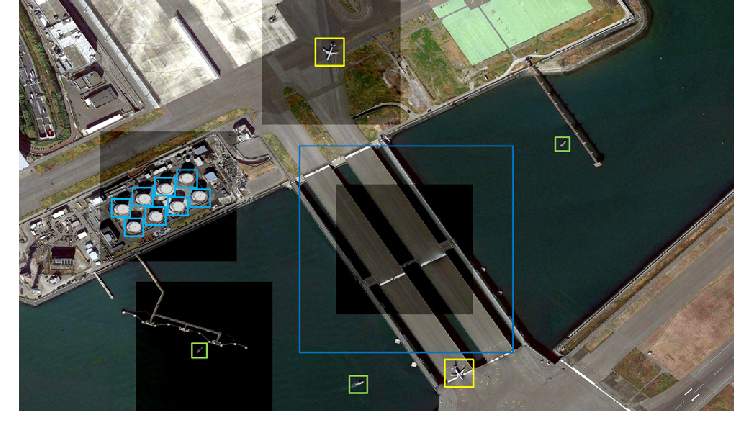

As surveillance technologies continue to evolve, their role in maintaining public safety, enforcing law and order, and monitoring critical infrastructure becomes increasingly indispensable. Central to the efficacy of these systems is the ability to process visual information rapidly and accurately. Image categorization is at the core of this capability, classifying visual data into predefined categories such as humans, vehicles, or suspicious objects.

With the rising deployment of surveillance systems across smart cities, airports, borders, and industrial zones, there’s a growing need to make these systems more intelligent and efficient. One promising approach that addresses both performance and resource constraints is the use of quantized object detection models. These models offer a compelling balance between computational speed and categorization accuracy, making them ideal for modern surveillance deployments.

In this blog, we will discuss object detection in surveillance systems and how quantized object detection models are reshaping image categorization. We’ll explore the challenges of categorizing visual data in real-world surveillance environments, define what quantized models are and how they work, and examine the specific advantages they bring to the table.

Image Categorization in Surveillance and Associated Challenges

Image categorization, at its core, involves assigning labels to objects or scenes captured in visual data. In general computer vision, this can seem like a straightforward process. But once real-world surveillance environments enter the equation, the complexity rises dramatically.

Surveillance systems aren’t operating in controlled lab conditions; they’re monitoring busy streets, crowded public transport terminals, remote borders, industrial facilities, and more. These environments are unpredictable, fast-paced, and often noisy, both visually and audibly.

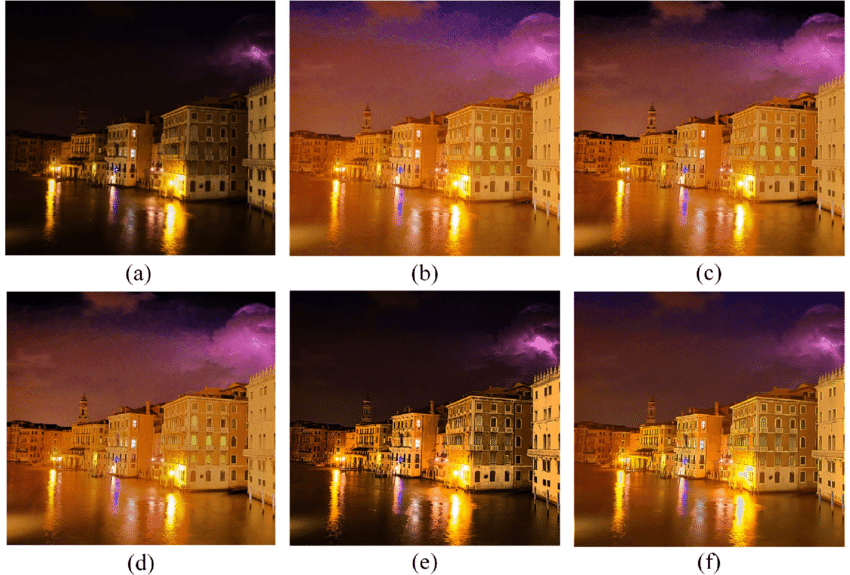

One of the biggest hurdles is the sheer variability in the data. Unlike curated datasets used to train traditional models, surveillance footage often includes obstructions, varying light conditions (nighttime, glare from headlights, heavy shadows), different angles, and partial views of people or objects. An object might be partially hidden by another or captured at a resolution that makes it hard to distinguish. For example, identifying a person wearing a hood in a shadowed alley or detecting a small object on a cluttered sidewalk is far more difficult than recognizing clearly labeled items in a dataset.

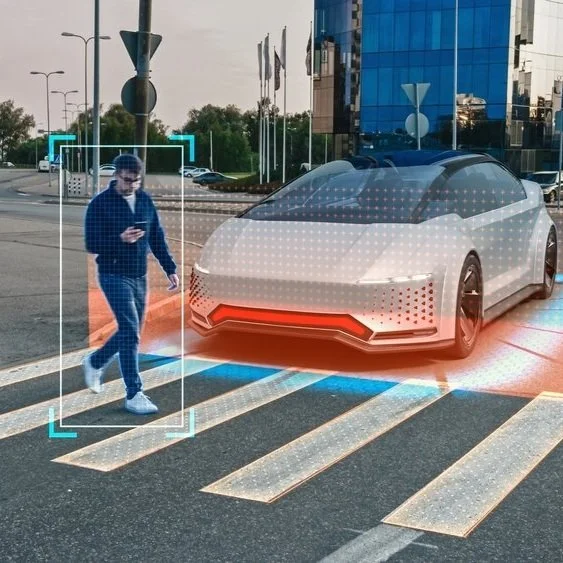

Another layer of complexity comes from the real-time performance expectations. Surveillance isn’t just about recording; it’s about actively analyzing and reacting. Whether it’s a city-wide camera network or a drone patrolling a perimeter, the system needs to process data continuously and make decisions.

The volume of data generated by surveillance systems is enormous. A single high-definition camera running 24/7 can produce anywhere from hundreds of gigabytes to terabytes of video data per week, depending on resolution and bitrate. Multiply that by dozens, hundreds, or thousands of cameras in a city or facility, and you’re dealing with an overwhelming amount of visual information. It’s not feasible, either technically or financially, to send all this data to the cloud for analysis. The processing has to happen closer to the source, which introduces another challenge: resource constraints.
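To make the scale concrete, the back-of-the-envelope sketch below estimates weekly video volume from a stream's bitrate. The function name and the example bitrates (8 Mbps for a compressed HD stream, 25 Mbps for a higher-quality one) are illustrative assumptions, not measurements from any particular camera.

```python
def weekly_video_gigabytes(bitrate_mbps: float, cameras: int = 1) -> float:
    """Approximate storage for one week of continuous video, in decimal GB."""
    seconds_per_week = 7 * 24 * 60 * 60                      # 604,800 seconds
    total_bits = bitrate_mbps * 1_000_000 * seconds_per_week * cameras
    return total_bits / 8 / 1_000_000_000                    # bits -> bytes -> GB


if __name__ == "__main__":
    # Assumed bitrates; real streams vary with codec, resolution, and scene motion.
    print(f"{weekly_video_gigabytes(8):.1f} GB")    # one camera at 8 Mbps -> 604.8 GB
    print(f"{weekly_video_gigabytes(25):.1f} GB")   # one camera at 25 Mbps -> 1890.0 GB
    print(f"{weekly_video_gigabytes(8, cameras=1000) / 1000:.1f} TB")  # 1000 cameras -> 604.8 TB
```

Under these assumed bitrates, a single stream lands somewhere between several hundred gigabytes and a couple of terabytes per week, and the total grows linearly with the number of cameras.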

Edge devices like cameras, drones, or embedded sensors typically don’t have the luxury of high-end GPUs or abundant memory. They’re designed to be lightweight and energy-efficient. Running large, traditional deep learning models on these devices is impractical. These models can be too slow, too power-hungry, and too demanding in terms of memory and thermal management. As a result, there’s a growing demand for models that are compact, efficient, and still capable of handling the nuanced demands of surveillance categorization.

In short, image categorization in surveillance is not just a technical problem; it’s an operational and logistical challenge that sits at the intersection of AI, hardware constraints, and real-world complexity. And this is precisely where innovations like quantized object recognition models come in, offering the potential to bridge the gap between what’s technically possible and what’s practically deployable.

What is a Quantized Object Recognition Model?

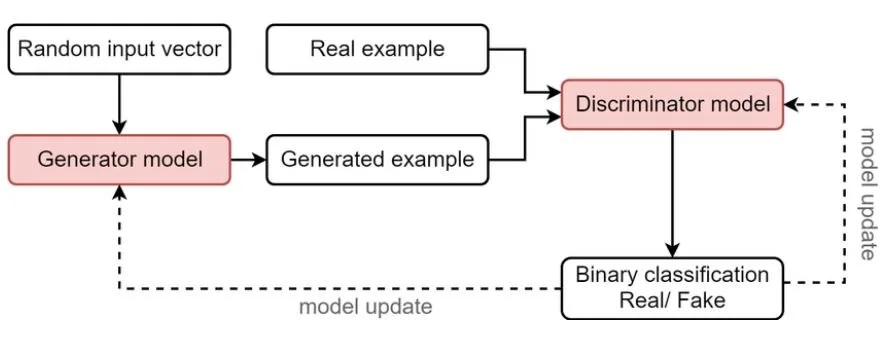

In the realm of machine learning, especially deep learning, models are traditionally built using high-precision numbers, specifically, 32-bit floating point (FP32) values. These numbers are used to represent everything from the weights of neural networks to the activation values calculated during inference.

While this level of precision ensures accuracy, it also comes with a significant computational cost. Large models can be slow to run, require a lot of memory, and consume substantial energy, which is especially problematic when deploying to edge devices like security cameras, drones, or embedded systems in surveillance environments.

This is where quantization enters the picture. Quantization is the process of reducing the precision of a model’s parameters and computations. Instead of using 32-bit floats, quantized models use lower-precision formats such as 16-bit floating point or 8-bit and even 4-bit integers. This seemingly simple reduction can lead to significant benefits: smaller model sizes, faster inference times, and lower power consumption. It allows developers to compress large neural networks into lightweight versions that can run efficiently on limited hardware, without having to fundamentally redesign the model architecture.
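As a rough illustration of what "reducing precision" means, the sketch below maps a list of floating-point values to 8-bit integer codes with a single scale and zero point, then maps them back. This is a minimal, pure-Python sketch of one common scheme (affine, per-tensor quantization); the function names are hypothetical, and production toolchains add calibration, per-channel scales, and integer kernels that are not shown here.

```python
def quantize_int8(values):
    """Map floats to int8 codes using one scale and zero point for the whole list."""
    lo = min(min(values), 0.0)                # the range must include 0.0
    hi = max(max(values), 0.0)
    scale = (hi - lo) / 255 or 1.0            # 256 int8 levels span 255 steps
    zero_point = round(-128 - lo / scale)     # int8 code that represents 0.0
    codes = [max(-128, min(127, round(v / scale) + zero_point)) for v in values]
    return codes, scale, zero_point


def dequantize(codes, scale, zero_point):
    """Recover approximate float values from int8 codes."""
    return [(c - zero_point) * scale for c in codes]


if __name__ == "__main__":
    weights = [-1.0, 0.0, 0.5, 1.0]           # made-up example weights
    codes, scale, zero_point = quantize_int8(weights)
    print(codes)
    print(dequantize(codes, scale, zero_point))
```

Each code occupies one byte instead of the four bytes of an FP32 value, which is where the roughly fourfold reduction in weight storage comes from. The price is a small rounding error per value, bounded by about one quantization step.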

A quantized object recognition model is exactly what it sounds like: an object detection model, such as YOLO (You Only Look Once) or SSD (Single Shot MultiBox Detector), often built on a lightweight backbone such as MobileNet, that has been quantized to operate more efficiently. These models are trained to detect and classify objects (like people, vehicles, or bags) in an image or video feed, and quantization makes them more suitable for real-time use in edge-based surveillance systems.

There are two main types of quantization methods:

- Post-Training Quantization – This is applied after the model is trained. It’s fast and easy but may result in slight drops in accuracy, especially if the original model is sensitive to precision loss.

- Quantization-Aware Training (QAT) – In this approach, the model is trained with quantization in mind from the beginning. It simulates lower-precision operations during training, helping the model learn to adapt. This generally results in better performance after quantization, especially in complex tasks like object detection.
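The idea of simulating lower-precision operations during training can be sketched with a toy, single-weight example. The forward pass uses a "fake-quantized" weight (rounded to the integer grid, then scaled back), while the gradient is applied to a full-precision copy as if the rounding were not there, a trick commonly called the straight-through estimator. Everything here (function names, the grid step of 0.05, the learning rate, the data) is an illustrative assumption; real frameworks apply the same idea per layer with learned or calibrated scales.

```python
def fake_quant(w, scale):
    """Quantize then dequantize: the value an int8 model would actually use."""
    q = max(-128, min(127, round(w / scale)))
    return q * scale


def train_qat(xs, ys, scale=0.05, lr=0.01, steps=200):
    """Fit y = w * x while the forward pass only ever sees the quantized weight."""
    w = 0.0                                   # latent full-precision weight
    for _ in range(steps):
        wq = fake_quant(w, scale)             # forward pass uses the quantized weight
        # Gradient of mean squared error with respect to wq. The straight-through
        # estimator treats d(wq)/d(w) as 1, so the same gradient updates w.
        grad = sum(2 * (wq * x - y) * x for x, y in zip(xs, ys)) / len(xs)
        w -= lr * grad
    return w, fake_quant(w, scale)


if __name__ == "__main__":
    xs = [1.0, 2.0, 3.0, 4.0]
    ys = [0.73 * x for x in xs]               # made-up data with true weight 0.73
    latent, deployed = train_qat(xs, ys)
    print(latent, deployed)
```

Post-training quantization, by contrast, would train `w` in full precision and call `fake_quant` only once at the end. With a single weight the two approaches land on nearly the same value; the benefit of training with quantization in the loop shows up in deep networks, where rounding errors from many layers interact.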

How a Quantized Object Recognition Model Improves Image Categorization

Quantized models are reshaping how we approach image categorization in surveillance systems, primarily by making intelligent analysis possible on devices that were previously too resource-constrained to run modern deep learning models. Their impact is felt not only in technical efficiency but also in the way they influence operational workflows and real-time decision-making in high-stakes security environments. Let’s discuss how these models improve image categorization:

Real-Time Processing on Edge Devices

With quantized models, the image categorization task can happen locally on the device itself. A security camera equipped with a quantized model can identify vehicles, detect weapons, or differentiate between authorized and unauthorized personnel, right at the source, without the need to send video data to a data center. This dramatically shortens response time and also alleviates bandwidth demands, which is crucial for large-scale deployments where hundreds of devices are simultaneously streaming video.

Scalability and Cost Efficiency

Quantized models enable surveillance systems to scale more cost-effectively. When models require fewer resources, organizations can deploy them across a wider range of hardware: older devices, smaller drones, portable surveillance kits, and low-power embedded processors. This is particularly valuable in large-scale deployments like smart cities or airport security networks, where infrastructure costs can increase rapidly.

The cost savings go beyond just hardware. Quantized models reduce energy consumption, which extends the operational time of battery-powered devices and lowers overall energy costs. In military or remote applications where power sources are limited, this added efficiency means longer missions and fewer interruptions.

Improved Data Privacy and Security

Performing categorization tasks locally with quantized models also enhances privacy and data security. Instead of transmitting raw video footage, which may contain sensitive personal or strategic information, only metadata or categorization results (e.g., “suspicious vehicle detected in zone 3”) need to be sent back to a central system. This approach aligns with modern privacy protocols and regulatory requirements, especially in public surveillance scenarios where personal data protection is a concern.
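A metadata-only report of this kind can be sketched as follows. The `Detection` fields, the `build_event` helper, the camera identifier, and the confidence threshold are all hypothetical names chosen for illustration; an actual deployment would follow whatever schema its central system expects.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class Detection:
    label: str         # category assigned by the on-device model
    confidence: float  # model score between 0 and 1
    zone: str          # site-specific area name


def build_event(camera_id, detections, min_confidence=0.5):
    """Serialize confident detections as JSON; no pixel data leaves the device."""
    kept = [asdict(d) for d in detections if d.confidence >= min_confidence]
    return json.dumps({"camera_id": camera_id, "detections": kept})


if __name__ == "__main__":
    detections = [
        Detection("vehicle", 0.91, "zone 3"),
        Detection("person", 0.32, "zone 1"),   # below threshold, dropped
    ]
    payload = build_event("cam-17", detections)
    raw_frame_bytes = 1920 * 1080 * 3           # one uncompressed 1080p RGB frame
    print(payload)
    print(len(payload.encode("utf-8")), "bytes vs", raw_frame_bytes, "bytes")
```

The payload is a few hundred bytes at most, compared with roughly 6 MB for a single uncompressed 1080p frame, and it contains labels and zones rather than imagery of identifiable people.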

Maintaining Accuracy in Resource-Limited Conditions

Quantized models can be fine-tuned on surveillance-specific datasets. This domain adaptation helps the model keep performing well across the varied lighting, weather, and background conditions that are hallmarks of real-world surveillance environments. A well-tuned quantized model can come close to the accuracy of a bulkier, full-precision model, and on in-domain footage it may even outperform a general-purpose model that was only validated in idealized lab settings.

Enables Continuous Operation and Edge Learning

With lower processing demands, quantized models contribute to more stable and sustained system operation. Surveillance devices can remain active longer without overheating or needing to offload tasks. And as adaptive learning technologies mature, it’s becoming possible to retrain or fine-tune quantized models on-device using small amounts of new data, a concept known as edge learning. This allows surveillance systems to improve over time, adapting to new threats, behavioral patterns, or environmental changes without requiring a complete retraining cycle.

Application Scenarios

In border security applications, quantized models deployed on UAVs or thermal cameras help detect unauthorized crossings or movement patterns that deviate from the norm. Their efficiency allows them to process high-definition video feeds on the fly, delivering actionable intelligence directly to security personnel.

Another compelling use case is in public event monitoring. During large gatherings or protests, security forces use surveillance systems to detect anomalies such as sudden crowd dispersals, aggressive behavior, or the presence of weapons. With quantized models, such capabilities can be extended to mobile devices, allowing law enforcement teams to analyze video streams from body-worn cameras or drones in real time.

Future Outlook

Looking ahead, the use of quantized models in surveillance is expected to expand significantly. As edge computing becomes more powerful and widespread, we can anticipate a shift toward fully decentralized AI surveillance systems capable of operating autonomously and securely.

The convergence of quantized models with other technologies, such as multi-modal learning, sensor fusion, and federated learning, will open new possibilities. For instance, future systems might combine audio, thermal, and visual data in quantized form to deliver holistic situational awareness. Furthermore, emerging standards around secure AI deployment will make it easier to validate and certify quantized models for use in sensitive applications.

Conclusion

Quantized object recognition models represent a pivotal advancement in the field of AI-powered surveillance. By enabling efficient and accurate image categorization on edge devices, they solve one of the biggest challenges in scaling smart surveillance systems. These models are not just tools of convenience; they are strategic enablers that allow security systems to operate faster, smarter, and more autonomously. As technology continues to evolve, their role will only grow more central in the effort to build safe and resilient public and private spaces.

At DDD, we help organizations deploy and scale AI-powered object detection and categorization in real-world surveillance environments. Have questions about integrating advanced object recognition into your security systems? Talk to our experts today.

Umang architects and drives full-funnel content marketing strategies for AI training data solutions, spanning computer vision, data annotation, data labelling, and Physical and Generative AI services. He works closely with senior leadership to shape DDD’s market positioning, translating complex technical capabilities into compelling narratives that resonate with global AI innovators.