Annotation for Night Driving: What AI Perception Models Need to See in the Dark

A perception model trained on daytime data does not automatically extend to nighttime conditions. The visual characteristics of the scene change fundamentally after dark: ambient illumination drops, headlight glare introduces high-contrast hotspots, pedestrians appear as fragmented silhouettes at the edge of headlight range, and objects that are clearly distinguishable in daylight become ambiguous overlapping shapes. Camera-based systems that perform reliably in daylight can degrade substantially in low-light conditions, and that degradation often shows up most severely in exactly the scenarios where detection failures are most dangerous.

Nighttime driving accounts for a disproportionate share of fatal road accidents. This blog examines what annotation programs need to account for when building training data for night driving perception. Video annotation services, image annotation services, and sensor data annotation are the three capabilities most directly involved in building the training data these models depend on.

Key Takeaways

- Models trained on daytime annotation data do not transfer reliably to nighttime conditions. Night driving perception requires annotation programs specifically designed for low-light visual characteristics.

- Camera-based perception degrades significantly in low-light conditions. Night driving annotation programs need to include thermal and infrared sensor data alongside camera data to give models light-independent perception inputs.

- Headlight glare, partial illumination, and object occlusion in low-light scenes create annotation challenges with no daytime equivalent. Annotators need specific training and guidelines for low-light visual interpretation.

- Temporal consistency across frames is more critical at night than in daytime annotation. Objects that are intermittently visible in low-light conditions must carry consistent labels across frames even when they temporarily fall below the illumination threshold for clear visual identification.

- Synthetic and augmented night driving data can supplement real-world nighttime datasets but cannot replace them. Annotation programs need to account for the different annotation requirements of synthetic versus real low-light data.

Why Daytime Training Data Does Not Transfer to Night

What Changes After Dark

The fundamental challenge of night driving perception is not simply reduced image brightness. It is a qualitative change in the visual characteristics of the scene that makes the training distribution of daytime models a poor match for nighttime inputs.

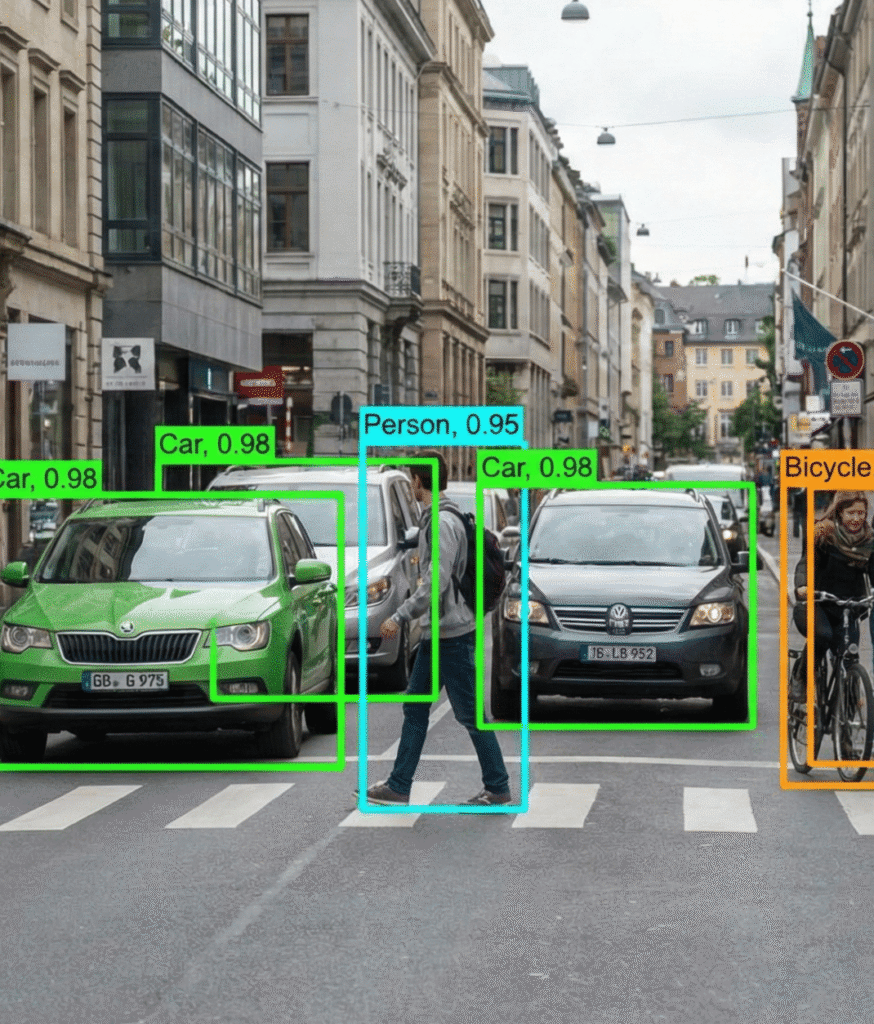

In daylight, objects have consistent surface texture, color information, and defined edges. A pedestrian at 40 meters is clearly distinguishable from the background in terms of shape, color, and texture. At night, the same pedestrian may be visible only as a partial silhouette at the edge of headlight range, with no color information, limited texture, and edges that blend into the surrounding darkness. The model needs to have been trained on examples of this specific visual presentation to recognize it reliably.

Research on perception algorithms for ADAS systems has identified that vision-centric autonomous systems that perform well in good lighting face severe challenges in low-light conditions. Camera sensors that deliver reliable performance above a minimum illumination level yield few usable image features below that threshold, and CNN-based object detection models show degraded performance in dark scenarios. The implication for annotation programs is direct: a model that has not been trained on annotated low-light examples cannot reliably detect objects in those conditions. ADAS data services that treat night driving as a distinct annotation category, rather than as a subset of general driving data, produce models with genuine nighttime robustness.

The Dataset Coverage Gap

Most publicly available autonomous driving datasets are heavily skewed toward daytime conditions. Nighttime frames are underrepresented relative to their importance for safety-critical perception. A model trained on a standard dataset will have seen thousands of daytime pedestrian examples but only a fraction of that number at night, so it is least capable in exactly the condition where the safety stakes are higher.

Building night driving annotation programs specifically to address this coverage gap requires deliberate data collection in low-light conditions across a range of scenarios: urban night driving with streetlight coverage, rural night driving with no ambient illumination beyond headlights, dusk and dawn transitions where lighting is variable, and tunnels where the transition between illuminated and dark zones creates specific perception challenges.
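A coverage gap of this kind can be measured before collection planning begins. The sketch below counts frames and pedestrian instances per lighting condition and lists the conditions that fall under a target. The metadata fields (`lighting`, `pedestrians`), the condition names, and the threshold are hypothetical and chosen only for illustration; a real dataset will have its own schema.

```python
from collections import Counter

# Hypothetical per-frame metadata. The field names and lighting categories
# are assumptions for illustration, not a real dataset schema.
FRAMES = [
    {"id": "f001", "lighting": "day", "pedestrians": 4},
    {"id": "f002", "lighting": "day", "pedestrians": 2},
    {"id": "f003", "lighting": "day", "pedestrians": 3},
    {"id": "f004", "lighting": "dusk", "pedestrians": 1},
    {"id": "f005", "lighting": "night_urban", "pedestrians": 1},
    {"id": "f006", "lighting": "night_rural", "pedestrians": 0},
]


def coverage_by_lighting(frames):
    """Count frames and pedestrian instances for each lighting condition."""
    frame_counts = Counter()
    pedestrian_counts = Counter()
    for frame in frames:
        frame_counts[frame["lighting"]] += 1
        pedestrian_counts[frame["lighting"]] += frame["pedestrians"]
    return {
        lighting: {
            "frames": frame_counts[lighting],
            "pedestrians": pedestrian_counts[lighting],
        }
        for lighting in frame_counts
    }


def underrepresented(coverage, min_pedestrians):
    """Return lighting conditions with fewer pedestrian instances than the target."""
    return sorted(
        lighting
        for lighting, counts in coverage.items()
        if counts["pedestrians"] < min_pedestrians
    )


if __name__ == "__main__":
    coverage = coverage_by_lighting(FRAMES)
    print(coverage)
    print(underrepresented(coverage, min_pedestrians=2))
```

Counting object instances rather than frames is a deliberate choice here: a dataset can contain many night frames and still hold very few night pedestrians.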

Sensor Considerations for Night Driving Annotation

Where Camera-Based Systems Fall Short

Standard RGB cameras rely on ambient and reflected light to produce images. Below a minimum illumination threshold, image quality degrades in ways that affect downstream object detection. Noise increases. Dynamic range suffers when bright light sources such as headlights and streetlamps coexist with dark surroundings. Motion blur worsens because longer exposure times are needed in low light. Objects at the edge of headlight range may be barely visible for a fraction of a second before disappearing again.

These limitations are not surmountable purely through model improvements on camera data. The visual signal is genuinely degraded. The practical response in production ADAS systems is sensor fusion: combining camera data with thermal imaging, infrared sensors, radar, and LiDAR to provide light-independent perception inputs that maintain reliability when camera performance degrades.

Thermal and Infrared Annotation

Thermal cameras detect heat signatures rather than reflected light. They are not affected by ambient illumination levels, which makes them particularly valuable for pedestrian and cyclist detection at night, where a human body’s heat signature is clearly distinguishable from the environment regardless of lighting conditions. Far infrared sensors have been specifically evaluated for pedestrian detection in poor lighting and have demonstrated strong performance precisely in the conditions where camera systems degrade most.

Annotating thermal data requires different annotation approaches than visible-spectrum camera data: the visual characteristics are different, the object signatures are different, and the ambiguities are different. Sensor data annotation programs that include thermal modality annotation as a distinct workflow, rather than applying camera annotation guidelines to thermal data, produce annotations that reflect the specific visual logic of thermal imaging.

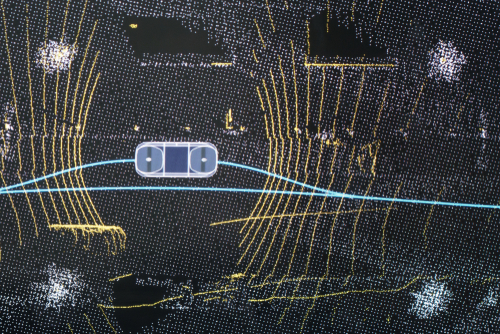

LiDAR and Radar in Low-Light Conditions

LiDAR operates by emitting laser pulses and measuring return times, which makes it largely independent of ambient illumination. A LiDAR scan at night produces essentially the same spatial information as a daytime scan of the same scene. This light independence makes LiDAR annotation for night driving less challenging than camera annotation: the point cloud quality does not degrade with illumination, and bounding box placement can follow the same geometric logic as in daytime annotation.

Radar is similarly light-independent and has the additional advantage of providing Doppler velocity information. In nighttime scenarios where a camera may fail to detect a pedestrian moving across the headlight beam, radar may detect the velocity signature of that movement even without a clear spatial return. For fusion architectures that combine camera, LiDAR, and radar, nighttime conditions shift the relative weighting of each sensor: the camera contributes a less reliable signal, LiDAR and radar contribute more.
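The shift in relative sensor weighting can be illustrated with a toy calculation. This is a deliberately simplified sketch, not how any production fusion stack works: real architectures typically learn or estimate sensor confidence rather than apply a fixed rule. The `fusion_weights` function, the base weights, and the normalized `illumination` input are all assumptions made for the example.

```python
# Toy illustration only: base weights and the scaling rule are assumed values.
BASE_WEIGHTS = {"camera": 0.5, "lidar": 0.3, "radar": 0.2}


def fusion_weights(illumination, base=BASE_WEIGHTS):
    """Scale the camera weight by illumination and renormalize.

    illumination is an assumed normalized value in [0, 1], where 0 is
    complete darkness and 1 is full daylight. LiDAR and radar keep their
    base weights because they do not depend on ambient light.
    """
    illumination = min(max(illumination, 0.0), 1.0)
    raw = dict(base)
    raw["camera"] = base["camera"] * illumination
    total = sum(raw.values())
    return {sensor: weight / total for sensor, weight in raw.items()}


if __name__ == "__main__":
    print("day:  ", fusion_weights(1.0))
    print("night:", fusion_weights(0.2))
```

For annotation, the practical reading is that when camera and LiDAR labels disagree in a night frame, the review guideline may reasonably favor the light-independent modality.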

Annotation programs for night driving fusion data need to account for this shifting sensor reliability in the cross-modal consistency requirements they enforce. Multisensor fusion data services that treat nighttime as a distinct fusion scenario with its own annotation requirements produce fusion datasets that support robust nighttime perception rather than daytime fusion architectures applied to night conditions.

Annotation Challenges Specific to Night Driving

Headlight Glare and Partial Illumination

Headlight glare creates specific annotation challenges with no daytime equivalent. Oncoming headlights can saturate the camera sensor, creating bright regions that obscure objects immediately surrounding them. The headlights of the ego vehicle (the vehicle collecting the data) illuminate a cone in front of it, leaving everything outside that cone in near-complete darkness. Objects at the edge of the illuminated zone are partially visible, requiring annotators to make inference-based judgments about object boundaries that are not fully visible in the frame.
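One way to route frames to glare-specific guidelines is a simple saturation check before annotation starts. The sketch below works on a grayscale frame represented as nested lists of 0 to 255 intensities. The function names and both thresholds are assumptions for illustration and would need tuning against real camera data.

```python
def saturated_fraction(gray, threshold=250):
    """Fraction of pixels at or above the saturation threshold.

    gray is a list of rows, each a list of 0-255 intensities.
    """
    total = sum(len(row) for row in gray)
    if total == 0:
        return 0.0
    hot = sum(1 for row in gray for pixel in row if pixel >= threshold)
    return hot / total


def needs_glare_guidelines(gray, max_fraction=0.05, threshold=250):
    """Flag a frame for glare-specific review (assumed 5% default cutoff)."""
    return saturated_fraction(gray, threshold) > max_fraction


if __name__ == "__main__":
    # Tiny synthetic frame: dark scene with a saturated patch on the right.
    frame = [
        [0, 0, 255, 255],
        [0, 0, 255, 10],
    ]
    print(saturated_fraction(frame))
    print(needs_glare_guidelines(frame))
```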

Annotation guidelines for partial illumination need to address how to handle objects that are partially in the headlight beam and partially outside it. Bounding boxes that capture only the illuminated portion of an object produce models that learn a truncated object representation. Boxes that extend to the estimated full object boundary based on context require annotators to make inferences that go beyond direct visual observation, which introduces consistency challenges that standard annotation protocols do not address.
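One possible way to keep both options available is to record the visible box and the estimated full box side by side, so the inferred portion is explicit in the label. The schema below is a hypothetical sketch, not a standard annotation format; the class and field names are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned box in pixel coordinates (x1, y1) to (x2, y2)."""
    x1: float
    y1: float
    x2: float
    y2: float

    def area(self):
        return max(0.0, self.x2 - self.x1) * max(0.0, self.y2 - self.y1)


@dataclass
class NightBoxLabel:
    """Hypothetical label storing what was seen and what was inferred."""
    category: str
    visible: Box  # portion actually lit in the frame
    full: Box     # annotator's estimate of the full object extent

    def visible_fraction(self):
        full_area = self.full.area()
        return self.visible.area() / full_area if full_area else 0.0

    def extent_inferred(self):
        return self.visible != self.full


if __name__ == "__main__":
    # Pedestrian whose upper half is inside the headlight cone.
    label = NightBoxLabel(
        category="pedestrian",
        visible=Box(100, 50, 140, 110),
        full=Box(100, 50, 140, 170),
    )
    print(label.visible_fraction(), label.extent_inferred())
```

Storing the visible fraction also gives quality reviewers a handle for sampling: labels with a low visible fraction are the ones where annotator agreement is most worth checking.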

Temporal Consistency for Intermittently Visible Objects

In nighttime video annotation, objects frequently move in and out of visibility as they pass through illuminated and dark zones. A pedestrian crossing a street at night may be clearly visible as they cross through a streetlight beam, partially visible in the shadow between light sources, and invisible in the intervening darkness. Temporal consistency in annotation requires that the object carries a consistent label across the sequence, including the frames where it is not clearly visible, because models need to learn that objects persist through periods of low visibility rather than appearing and disappearing. Video annotation services that include multi-frame review and temporal consistency validation as part of the annotation workflow produce the sequence-level labels that nighttime perception models depend on for reliable tracking.
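A temporal consistency check of this kind can be sketched as a search for short gaps in each object track. The function below assumes a hypothetical input mapping track IDs to the frame indices where the object was labeled, and returns the missing ranges that a reviewer should revisit. The `max_gap` value is an assumed parameter, not a recommended setting.

```python
def find_track_gaps(track_frames, max_gap=30):
    """Find short unlabeled stretches inside each track.

    track_frames maps a track ID to the frame indices where that object
    carries a label. A gap is a run of missing frames between two labeled
    frames. Gaps longer than max_gap are ignored, on the assumption that
    the object genuinely left the scene.

    Returns {track_id: [(first_missing, last_missing), ...]}.
    """
    gaps = {}
    for track_id, frames in track_frames.items():
        ordered = sorted(set(frames))
        missing = []
        for previous, current in zip(ordered, ordered[1:]):
            gap_length = current - previous - 1
            if 1 <= gap_length <= max_gap:
                missing.append((previous + 1, current - 1))
        if missing:
            gaps[track_id] = missing
    return gaps


if __name__ == "__main__":
    # Pedestrian labeled under one streetlight, lost in the dark, then
    # labeled again under the next streetlight.
    tracks = {"ped_7": [10, 11, 12, 16, 17], "car_2": [1, 2, 3]}
    print(find_track_gaps(tracks))
```

Frames returned by the check would then be labeled with the same track ID and, where the tooling supports it, a low-visibility attribute, so the sequence stays continuous without claiming the object was clearly seen.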

Annotator Training for Low-Light Visual Interpretation

Night driving annotation is a cognitively demanding task that requires annotators to make inference-based judgments that daytime annotation rarely requires. Identifying a pedestrian in a daytime image is primarily an observation task: the annotator sees the pedestrian and draws the box. Identifying a partially illuminated pedestrian at the edge of headlight range in a dark frame requires the annotator to integrate partial visual evidence with knowledge of typical pedestrian appearance, movement patterns, and the scene geometry.

Annotators working on night driving data need specific training in low-light visual interpretation. They need to understand how different object categories appear under different illumination conditions, how to reason about partially occluded or partially illuminated objects, and how to apply temporal context from adjacent frames when a single frame is insufficient for confident labeling. Programs that apply standard annotation onboarding to night driving tasks without modifying the training for low-light conditions consistently produce lower-quality annotations than programs that treat nighttime annotation as a distinct skill requiring specific preparation.

Synthetic and Augmented Night Driving Data

What Synthetic Night Data Can and Cannot Do

Generating synthetic night driving data through simulation or image-to-image translation is a common approach for supplementing real-world nighttime datasets, which are expensive and time-consuming to collect in sufficient volume. Synthetic approaches can generate large volumes of diverse nighttime scenarios, including rare or dangerous conditions that would be difficult to collect safely in real-world night driving.

The limitation of synthetic night data is the domain gap. Simulated illumination, headlight physics, and noise models do not perfectly replicate the characteristics of real nighttime camera data. Models trained heavily on synthetic night data and then deployed on real night driving imagery encounter a mismatch between their training distribution and the real-world visual characteristics they need to handle. Synthetic data is most valuable when used to supplement real nighttime data rather than replace it, particularly for augmenting coverage of rare scenarios that are underrepresented in real-world collections.

Annotation Requirements for Synthetic Night Data

Synthetic night driving data still requires annotation. The generation process produces images or sensor data, not labeled training examples. For simulation-generated data, annotations may be partially automated because the simulator knows the position and class of every object in the scene. But those auto-generated labels need human validation to catch cases where the rendering has produced visually ambiguous results that do not match the simulator’s ground truth. For image-to-image translated night data, where daytime images are transformed to simulate nighttime appearance, the original daytime annotations need to be reviewed and corrected for any cases where the transformation has changed the visual boundary or appearance of labeled objects. Image annotation services that include validation workflows for synthetic and augmented data treat annotation verification as a distinct quality step rather than assuming that automated labels from simulation are production-ready without human review.
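As a rough sketch of how auto-generated labels might be triaged for human validation, the function below flags a simulator box when the rendered pixels inside it barely differ from the rest of the frame, which suggests the object is effectively invisible in the image even though the simulator knows it is there. The contrast measure and the `min_contrast` threshold are assumptions for illustration; a real pipeline would likely use a more robust visibility metric.

```python
def needs_human_review(gray, box, min_contrast=10.0):
    """Flag an auto-generated box whose contents are hard to distinguish.

    gray is a list of rows of 0-255 intensities. box is (x1, y1, x2, y2)
    in pixel coordinates, with x2 and y2 exclusive. Contrast is the
    absolute difference between mean intensity inside and outside the box.
    """
    x1, y1, x2, y2 = box
    inside, outside = [], []
    for y, row in enumerate(gray):
        for x, pixel in enumerate(row):
            if x1 <= x < x2 and y1 <= y < y2:
                inside.append(pixel)
            else:
                outside.append(pixel)
    if not inside or not outside:
        # Box is empty or covers the whole frame: always send to a reviewer.
        return True
    contrast = abs(sum(inside) / len(inside) - sum(outside) / len(outside))
    return contrast < min_contrast


if __name__ == "__main__":
    dark = [[5] * 4 for _ in range(4)]
    lit = [row[:] for row in dark]
    for y in (1, 2):
        for x in (1, 2):
            lit[y][x] = 80  # object rendered inside the headlight cone
    print(needs_human_review(dark, (1, 1, 3, 3)))
    print(needs_human_review(lit, (1, 1, 3, 3)))
```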

How Digital Divide Data Can Help

Digital Divide Data supports ADAS and autonomous driving programs that are building night driving training data across all relevant sensor modalities and annotation workflows.

For programs building camera-based night driving datasets, image annotation services and video annotation services include annotator training for low-light visual interpretation, guidelines for partial illumination and object occlusion, and temporal consistency validation across multi-frame sequences.

For programs building thermal and infrared annotation workflows, sensor data annotation covers thermal modality annotation as a distinct workflow with guidelines calibrated to the visual characteristics of thermal imaging rather than adapted from visible-spectrum camera annotation.

For programs building fusion datasets for nighttime perception, multisensor fusion data services maintain cross-modal label consistency across camera, LiDAR, radar, and thermal modalities, accounting for the shifted sensor reliability weights that characterize nighttime fusion scenarios.

Build night driving annotation programs that give your perception models what they actually need to see in the dark. Talk to an expert.

Conclusion

Night driving is one of the highest-stakes perception scenarios for autonomous and assisted driving systems, and one of the most systematically underserved by standard annotation programs. The visual characteristics of low-light scenes are different enough from daytime conditions that daytime training data does not extend to them reliably. Models need to be trained on annotated nighttime examples to perform in nighttime conditions.

Building that training data requires annotation programs designed specifically for low-light conditions: sensor coverage that includes thermal and infrared alongside camera and LiDAR, annotator training calibrated to low-light visual interpretation, temporal consistency requirements that handle intermittent object visibility, and validation workflows for synthetic night data. Physical AI programs that treat night driving annotation as a distinct discipline rather than as daytime annotation applied after dark are the ones that produce perception models with the nighttime robustness that safe deployment requires.

References

IntechOpen. (2023). Latest advancements in perception algorithms for ADAS and AV systems using infrared images and deep learning. IntechOpen. https://www.intechopen.com/chapters/1169631

Huang, B., Allebosch, G., Veelaert, P., Willems, T., Philips, W., & Aelterman, J. (2025). Low-latency pedestrian detection based on dynamic vision sensor and RGB camera fusion. Journal of Intelligent and Robotic Systems. https://doi.org/10.1007/s10846-026-02361-5

Frequently Asked Questions

Q1. Why do models trained on daytime data underperform in nighttime driving conditions?

Because the visual characteristics of nighttime scenes are qualitatively different from daytime scenes, not just darker. Nighttime camera images have no color information in low-light areas, degraded texture, high-contrast glare from headlights and streetlamps, and object edges that blend into dark backgrounds. These characteristics mean that the feature patterns a model learns from daytime examples do not reliably match what it encounters in nighttime inputs. Models need to be trained on annotated nighttime examples to develop robust nighttime detection.

Q2. What sensors are most important for night driving perception, and how do their annotation requirements differ?

The key sensors for nighttime perception are RGB cameras, thermal cameras, infrared sensors, LiDAR, and radar. Camera annotation for night driving requires guidelines for partial illumination, headlight glare, and low-visibility edge cases that have no daytime equivalent. Thermal annotation requires different guidelines calibrated to heat signature interpretation rather than visible-spectrum visual interpretation. LiDAR and radar annotation is less affected by illumination conditions because those sensors are light-independent, but they carry more weight in night fusion architectures, and the cross-modal consistency requirements enforced during annotation need to reflect that shift.

Q3. What is temporal consistency annotation, and why is it especially important at night?

Temporal consistency means that an object carries a consistent label across consecutive video frames even when it is not clearly visible in every frame. At night, objects frequently move in and out of the illuminated zone, making them intermittently visible or invisible. If annotators only label objects in frames where they are clearly visible, the model learns that objects appear and disappear rather than that they persist through low-visibility periods. Consistent labeling across frames, supported by multi-frame review tools and explicit annotation guidelines for low-visibility frames, produces training data that teaches the model to maintain object tracks through nighttime visibility fluctuations.

Q4. Can synthetic night driving data replace real nighttime annotation programs?

No. Synthetic night data is a useful supplement, particularly for rare scenarios that are difficult to collect in real-world conditions, but it cannot replace real nighttime data. The domain gap between simulated and real low-light imagery means that models trained primarily on synthetic night data encounter a distribution mismatch in deployment. Real nighttime datasets provide the authentic visual characteristics that synthetic approaches approximate but do not fully replicate. The practical approach is using synthetic data to augment real nighttime collections and improve coverage of underrepresented scenarios, not to substitute for real-world collections.
