Cuboid Annotation for Depth Perception: Enabling Safer Robots and Autonomous Systems

Autonomous vehicles today are equipped with a variety of sensors, from monocular and stereo cameras to LiDAR and RADAR. These sensors generate vast amounts of raw data, but without interpretation, that data has limited value. Machine learning models rely on annotated datasets to translate pixels and points into a structured understanding. The quality and type of data annotation directly determine how effectively a model can learn to perceive depth, identify objects, and make real-time decisions.

Cuboid annotation plays a critical role in this process. By enclosing objects in three-dimensional bounding boxes, cuboids provide not only positional information but also orientation and scale. Unlike 2D annotations, which capture only height and width on a flat image, cuboids reflect the real-world volume of an object and its relationship to the surrounding environment.

In this post, we explore what cuboid annotation is, why it matters for depth perception, the challenges it presents, the future directions of the field, and how we help organizations implement it at scale.

What is Cuboid Annotation?

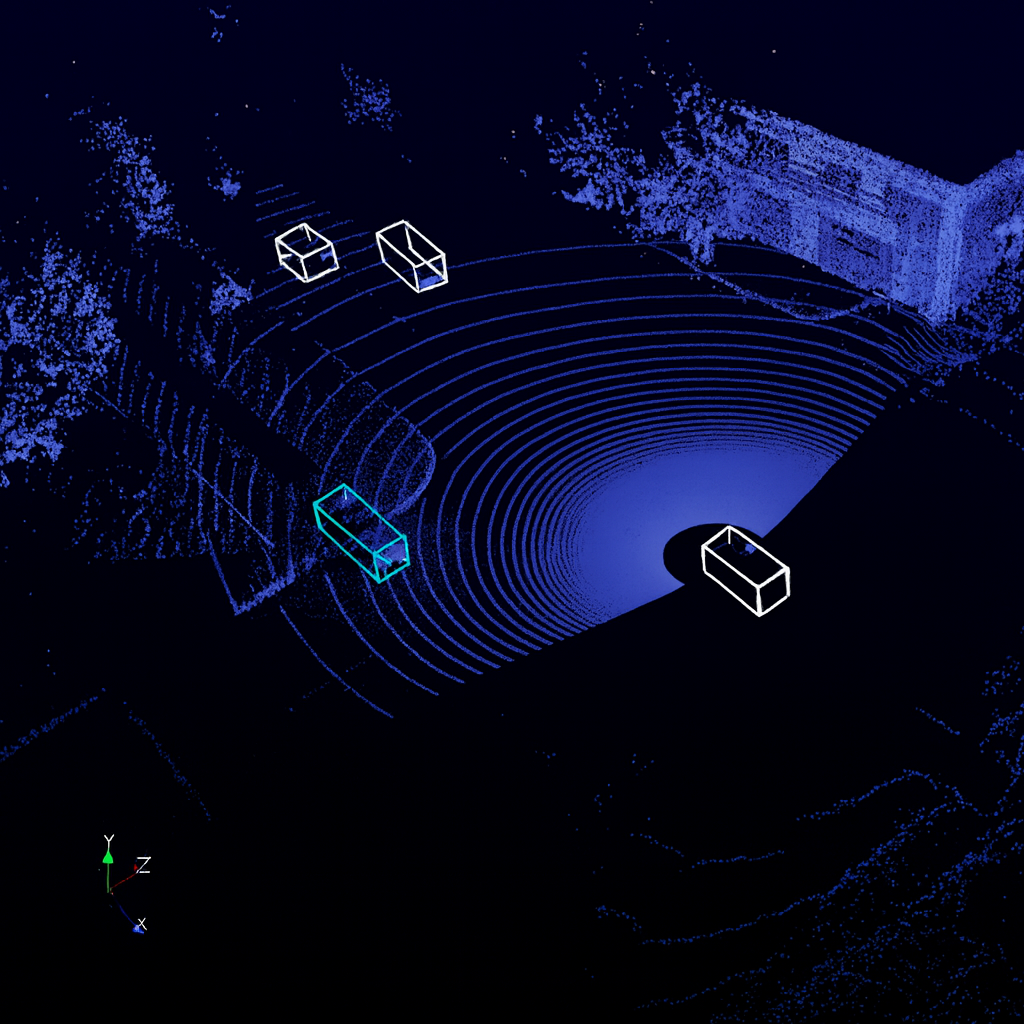

Cuboid annotation is the process of enclosing objects in three-dimensional bounding boxes within an image or point cloud. Each cuboid defines an object’s height, width, depth, orientation, and position in space, giving machine learning models the information they need to understand not only what an object is but also where it is and how it is aligned.
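To make those fields concrete, here is a minimal sketch of how a single cuboid label might be stored and expanded into its eight corners. The `Cuboid` class and its field names are hypothetical, not the schema of any particular tool or dataset; `length` here plays the role of the "depth" dimension mentioned above, and orientation is simplified to a single yaw angle about the vertical axis.

```python
import math
from dataclasses import dataclass


@dataclass
class Cuboid:
    """Hypothetical cuboid label: center, size, and heading (yaw only)."""
    cx: float      # center x, meters
    cy: float      # center y, meters
    cz: float      # center z, meters
    length: float  # extent along the object's heading, meters
    width: float   # extent across the heading, meters
    height: float  # vertical extent, meters
    yaw: float     # rotation about the vertical (z) axis, radians

    def corners(self):
        """Return the 8 corners as (x, y, z) tuples in the sensor frame."""
        c, s = math.cos(self.yaw), math.sin(self.yaw)
        points = []
        for dx in (-0.5, 0.5):
            for dy in (-0.5, 0.5):
                for dz in (-0.5, 0.5):
                    # Offset in the object's own frame, then rotate by yaw.
                    lx, ly, lz = dx * self.length, dy * self.width, dz * self.height
                    points.append((self.cx + c * lx - s * ly,
                                   self.cy + s * lx + c * ly,
                                   self.cz + lz))
        return points

    def volume(self):
        return self.length * self.width * self.height

    def ground_distance(self):
        """Horizontal distance from the sensor origin to the cuboid center."""
        return math.hypot(self.cx, self.cy)


car = Cuboid(cx=10.0, cy=2.0, cz=0.8, length=4.5, width=1.8, height=1.6, yaw=0.0)
print(len(car.corners()), car.volume(), car.ground_distance())
```

Seven numbers are enough to recover the object's footprint, volume, and distance, which is part of why cuboids are a compact representation compared with per-pixel or per-point labels.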

This approach goes beyond traditional two-dimensional annotations. A 2D bounding box can identify that a car exists in a frame and mark its visible outline, but it cannot tell the system whether the car is angled toward an intersection or parked along the curb. Polygons and segmentation masks improve boundary accuracy in 2D but still lack volumetric depth. Cuboids, by contrast, describe objects in a way that reflects the real world, making them indispensable for depth perception tasks.

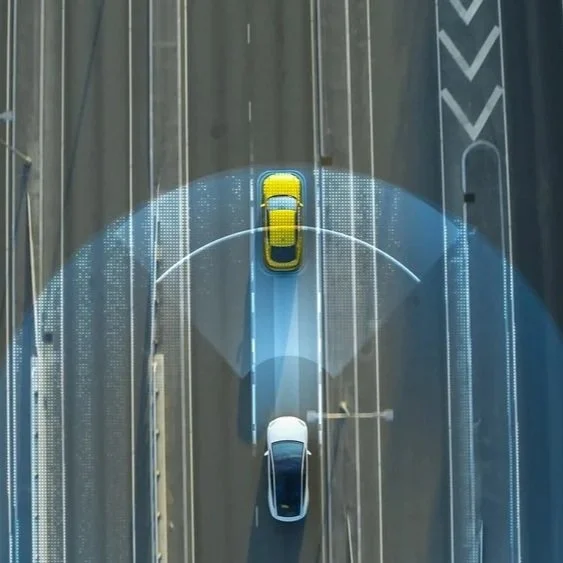

In autonomous vehicle datasets, a cuboid drawn around another car helps the system estimate its size, direction of travel, and distance from the ego vehicle. For warehouse robots, cuboid annotation of shelves and packages provides precise information for safe navigation through narrow aisles and accurate placement or retrieval of items. In both cases, the cuboid acts as a simplified yet powerful representation of reality that can be processed efficiently by AI models.

By capturing orientation, scale, and occlusion, cuboid annotation creates a richer understanding of the environment than 2D methods can achieve. This makes it one of the most critical annotation types for building systems that must operate reliably in complex, safety-critical settings.

Why Cuboid Annotation Matters for Depth Perception

Depth estimation is one of the most difficult challenges in computer vision for autonomous systems. These systems rely on a range of inputs to approximate distance and spatial layout. Monocular cameras are cost-effective and widely used, but a single image is inherently ambiguous about depth because it carries no direct measurement of distance. Stereo cameras improve on this by mimicking human binocular vision, but their accuracy depends heavily on calibration and environmental conditions. RGB-D sensors add a dedicated depth channel that can yield precise results, yet they can be costly and are less practical in outdoor or large-scale environments.

Cuboid annotations help address these challenges by acting as geometric priors for machine learning models. A cuboid encodes an object’s volume and orientation, giving the system a reference for understanding its position in three-dimensional space. This additional structure helps stabilize depth estimation, particularly in monocular setups where spatial ambiguity is common. In practice, cuboids help the model learn not just to recognize objects but also to reason about where those objects sit in depth relative to the observer.
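One way to see why a size prior helps in the monocular case is the standard pinhole camera relation: if an object's real height is known, its apparent height in pixels fixes its distance. The sketch below is only an illustration of that relation, not a description of how any particular model consumes cuboid labels; the function name and example values are assumptions.

```python
def depth_from_height(focal_px, real_height_m, pixel_height):
    """Pinhole estimate of distance: Z = f * H / h.

    focal_px      focal length in pixels
    real_height_m real-world object height in meters (e.g. from a cuboid)
    pixel_height  apparent object height in the image, in pixels
    """
    if pixel_height <= 0:
        raise ValueError("pixel_height must be positive")
    return focal_px * real_height_m / pixel_height


# Illustrative values: a 1.5 m tall car spanning 50 px with a 1000 px focal length.
print(depth_from_height(1000.0, 1.5, 50.0))  # 30.0
```

Without the height prior, the same 50-pixel silhouette could be a small object nearby or a large one far away, which is exactly the ambiguity described above.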

The importance of this capability becomes clear in safety-critical applications. In autonomous driving, cuboids allow vehicles to gauge the distance and orientation of other cars, cyclists, and pedestrians with greater confidence, supporting collision avoidance and safe lane merging. In warehouse automation, cuboid annotations help robots detect shelving units and moving packages at the right scale, allowing them to navigate efficiently in crowded, constrained spaces. In defense and security robotics, accurate cuboid-based perception reduces the risk of misidentification in complex, high-stakes environments where errors could have serious consequences.

By providing explicit three-dimensional information, cuboid annotation grounds depth perception systems in structured representations of the real world rather than leaving them to rely on inference alone. This makes it an essential component of building reliable and safe autonomous systems.

Challenges in Cuboid Annotation

Despite the clear benefits of cuboid annotation for depth perception, several challenges limit its scalability and effectiveness in real-world applications.

Scalability

Annotating cuboids across millions of frames in autonomous driving or robotics datasets is resource-intensive and time-consuming. Even with semi-automated tools, the need for human oversight in edge cases means costs rise quickly as projects scale. For companies building safety-critical systems, this creates a tension between the need for large, diverse datasets and the expense of producing them.

Ambiguity in labeling

Objects that are only partially visible, heavily occluded, or deformable are notoriously hard to annotate accurately with cuboids. A car that is half-hidden behind a truck or a package wrapped in uneven material can produce inconsistencies in annotation, which later translate into unreliable predictions during deployment.
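A common way to quantify this kind of inconsistency is to compare two annotators' boxes for the same object using intersection over union (IoU). The sketch below is a simplified version that assumes axis-aligned boxes and therefore ignores yaw; production tooling typically uses rotated-box IoU. The function name and box layout are hypothetical.

```python
def axis_aligned_iou_3d(box_a, box_b):
    """3D IoU of two axis-aligned boxes.

    Each box is (xmin, ymin, zmin, xmax, ymax, zmax) in meters.
    Simplifying assumption: rotation is ignored.
    """
    intersection = 1.0
    for axis in range(3):
        low = max(box_a[axis], box_b[axis])
        high = min(box_a[axis + 3], box_b[axis + 3])
        if high <= low:
            return 0.0  # no overlap along this axis
        intersection *= high - low

    def volume(box):
        return (box[3] - box[0]) * (box[4] - box[1]) * (box[5] - box[2])

    union = volume(box_a) + volume(box_b) - intersection
    return intersection / union


# Two annotators disagree on where a half-hidden car ends.
annotator_1 = (0.0, 0.0, 0.0, 2.0, 2.0, 2.0)
annotator_2 = (1.0, 0.0, 0.0, 3.0, 2.0, 2.0)
print(axis_aligned_iou_3d(annotator_1, annotator_2))
```

Objects whose agreement score falls below a project-specific threshold can then be routed to a reviewer instead of going straight into the training set.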

Sensor fusion complexity

In modern robotics and AV systems, cuboids must align across multiple data sources such as LiDAR, RADAR, and RGB cameras. Any misalignment between these inputs can cause errors in cuboid placement, undermining the reliability of multi-sensor perception pipelines.
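To illustrate how sensitive this alignment is, the sketch below projects a cuboid corner from the LiDAR frame into a camera image and then repeats the projection with a small calibration error. It assumes a pinhole camera with axes x right, y down, z forward; the function name, the identity rotation, and the intrinsic values are illustrative assumptions rather than real calibration data.

```python
def project_point(point, rotation, translation, fx, fy, cx, cy):
    """Project a 3D LiDAR-frame point into camera pixel coordinates.

    rotation (3x3, row-major) and translation (3-vector) map LiDAR
    coordinates into the camera frame. Returns None if the point lies
    behind the camera.
    """
    cam = [
        sum(rotation[row][k] * point[k] for k in range(3)) + translation[row]
        for row in range(3)
    ]
    if cam[2] <= 0:
        return None
    return (fx * cam[0] / cam[2] + cx, fy * cam[1] / cam[2] + cy)


IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
corner = (1.0, 0.5, 10.0)  # a cuboid corner 10 m ahead of the camera

aligned = project_point(corner, IDENTITY, (0.0, 0.0, 0.0), 1000.0, 1000.0, 640.0, 360.0)
# Same corner, but the extrinsic translation is off by 10 cm sideways.
shifted = project_point(corner, IDENTITY, (0.1, 0.0, 0.0), 1000.0, 1000.0, 640.0, 360.0)
pixel_error = shifted[0] - aligned[0]
print(aligned, shifted, pixel_error)
```

With these example numbers, a 10 cm extrinsic error moves the projected corner by about 10 pixels at 10 m, enough for a cuboid that fits the point cloud to look visibly misplaced in the image.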

Standardization gap

While some datasets enforce strict annotation policies, many others lack detailed guidelines. This makes it difficult to transfer models trained on one dataset to another or to integrate annotations from multiple sources. The absence of unified standards slows down progress and creates inefficiencies for developers who need their models to perform reliably across domains and geographies.

Future Directions for Cuboid Annotation

The future of cuboid annotation lies in making the process faster, more accurate, and more aligned with the safety requirements of autonomous systems.

Automation

Advances in AI-assisted labeling are enabling semi-automatic cuboid generation, where algorithms propose initial annotations and human annotators verify or refine them. This hybrid approach significantly reduces manual effort while maintaining the accuracy required for safety-critical datasets.
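As a rough idea of what an "initial proposal" can look like, the sketch below fits a cuboid to a cluster of LiDAR points using a simple geometric heuristic: the heading comes from the principal axis of the points in the ground plane, and the size from their extents along that axis. This is a stand-in for illustration, not the learned proposal models that modern tools typically use, and the function name and output keys are hypothetical.

```python
import math


def propose_cuboid(points):
    """Fit a yaw-oriented cuboid to a list of (x, y, z) points.

    Heuristic sketch: heading is the principal axis of the x-y scatter,
    so it is ambiguous by 180 degrees and unreliable for partial views.
    """
    n = len(points)
    mean_x = sum(p[0] for p in points) / n
    mean_y = sum(p[1] for p in points) / n
    sxx = sum((p[0] - mean_x) ** 2 for p in points)
    syy = sum((p[1] - mean_y) ** 2 for p in points)
    sxy = sum((p[0] - mean_x) * (p[1] - mean_y) for p in points)
    yaw = 0.5 * math.atan2(2.0 * sxy, sxx - syy)

    # Express the points in the object's own frame (u along heading, v across).
    c, s = math.cos(yaw), math.sin(yaw)
    us = [c * (p[0] - mean_x) + s * (p[1] - mean_y) for p in points]
    vs = [-s * (p[0] - mean_x) + c * (p[1] - mean_y) for p in points]
    zs = [p[2] for p in points]

    # Box center is the midpoint of the extents, mapped back to the sensor frame.
    u_mid = (max(us) + min(us)) / 2.0
    v_mid = (max(vs) + min(vs)) / 2.0
    return {
        "center": (mean_x + c * u_mid - s * v_mid,
                   mean_y + s * u_mid + c * v_mid,
                   (max(zs) + min(zs)) / 2.0),
        "length": max(us) - min(us),
        "width": max(vs) - min(vs),
        "height": max(zs) - min(zs),
        "yaw": yaw,
    }


cluster = [(x, y, z) for x in (0.0, 4.0) for y in (0.0, 2.0) for z in (0.0, 1.5)]
proposal = propose_cuboid(cluster)
print(proposal)
```

A proposal like this is only a starting point: when an object is partly occluded, the visible points cover just a fraction of its true extent, which is where the human verification step earns its keep.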

Synthetic data generation

Using simulation environments and digital twins, developers can create annotated cuboids for rare or hazardous scenarios that would be difficult or unsafe to capture in reality. This approach not only enriches datasets but also ensures that autonomous systems are trained on edge cases that are crucial for robustness.

Hybrid supervision methods

By combining cuboids with other forms of annotation, such as segmentation masks and point-cloud labels, systems gain a richer, multi-layered understanding of objects. This helps bridge the gap between efficient geometric representations and high-fidelity object boundaries, resulting in improved depth perception across modalities.

Safety pipelines

Cuboids, with their clear geometric structure, are well-suited to serve as interpretable primitives in explainable AI frameworks. By using cuboids as a foundation for safety audits and system certification, developers can provide regulators and stakeholders with transparent evidence of how autonomous systems perceive and react to their environment.

How We Can Help

At Digital Divide Data (DDD), we understand that the quality of annotations directly shapes the safety and reliability of autonomous systems. Our teams specialize in delivering high-quality, scalable 3D annotation services, including cuboid labeling for complex multi-sensor environments. By combining the precision of skilled annotators with AI-assisted workflows, we ensure that every cuboid is accurate, consistent, and aligned with industry standards.

We work with organizations across automotive, humanoid robotics, and defense technology to tackle the core challenges of cuboid annotation: scalability, consistency, and cost-effectiveness. Our quality assurance frameworks are designed to minimize ambiguity and misalignment across LiDAR, RADAR, and camera inputs, which helps models trained on DDD-annotated datasets perform reliably in the field.

By partnering with us, organizations can accelerate development cycles, reduce labeling overhead, and focus on building safer, more capable autonomous systems.

Conclusion

Cuboid annotation has emerged as one of the most effective ways to translate raw sensor data into structured understanding for autonomous systems. By capturing not just the presence of objects but also their orientation, scale, and depth, cuboids provide the geometric foundation that makes reliable perception possible. This capability is essential in safety-critical domains such as autonomous driving, warehouse automation, and defense robotics, where even small errors in depth estimation can have serious consequences.

Ultimately, safer robots and autonomous systems begin with better data. Cuboid annotation represents a practical and interpretable solution for translating complex environments into actionable intelligence. As tools, datasets, and methodologies mature, it will continue to be a critical enabler of trust and reliability in autonomy.

Partner with DDD to power your autonomous systems with precise and scalable cuboid annotation. Safer autonomy starts with better data.

FAQs

Q1. How do cuboid annotations compare with mesh or voxel-based annotations?

Cuboid annotations provide a lightweight and interpretable geometric representation that is efficient for real-time applications such as autonomous driving. Meshes and voxels capture finer detail and shape fidelity but are computationally heavier, making them less practical for systems where speed is critical.

Q2. Can cuboid annotation support real-time training or only offline datasets?

While cuboid annotation is primarily used for offline dataset preparation, advances in active learning and AI-assisted labeling are enabling near real-time annotation for continuous model improvement. This is particularly useful in simulation environments and testing pipelines.

Q3. What role does human oversight play in cuboid annotation?

Human oversight remains essential, especially for ambiguous cases such as occluded objects or irregular shapes. Automated tools can generate cuboids quickly, but human review ensures accuracy and consistency that are critical for safety.

Q4. Are there specific industries beyond robotics and automotive that benefit from cuboid annotation?

Yes. Healthcare uses cuboids in medical imaging to annotate organs or anatomical structures in 3D scans. Retail and logistics apply cuboids to track package volumes and optimize warehouse operations. Augmented and virtual reality systems also rely on cuboids to align virtual objects with real-world environments.

Q5. How do annotation errors affect downstream models?

Errors in cuboid placement, orientation, or scale can mislead models into misjudging depth or object size, resulting in unsafe behaviors such as delayed braking in vehicles or misalignment in robotic manipulation. Rigorous quality control is therefore essential.
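A quick geometric check shows how a seemingly small orientation error turns into a position error. Rotating a cuboid about its center by an angle moves any point at radius r along a chord of length 2·r·sin(angle/2); for the front-center of a vehicle, r is half its length. The sketch below is illustrative only, and the function name and example dimensions are assumptions.

```python
import math


def front_displacement(length_m, yaw_error_rad):
    """Distance the front-center of a cuboid moves under a yaw error.

    The front-center sits at radius length/2 from the cuboid center, so the
    chord length is 2 * (length / 2) * sin(error / 2).
    """
    return length_m * abs(math.sin(yaw_error_rad / 2.0))


# Illustrative: a 4.5 m car labeled with a 5 degree heading error.
print(front_displacement(4.5, math.radians(5.0)))
```

For this example the front of the car is displaced by roughly 0.2 m, a meaningful fraction of a lane margin, which is why orientation is usually reviewed as carefully as position and size.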

Umang architects and drives full-funnel content marketing strategies for AI training data solutions, spanning computer vision, data annotation, data labelling, and Physical and Generative AI services. He works closely with senior leadership to shape DDD’s market positioning, translating complex technical capabilities into compelling narratives that resonate with global AI innovators.
