Geospatial Data for Physical AI: Challenges, Solutions, and Real-World Applications

Autonomy is inseparable from geography. A robot cannot plan a path without understanding where it is. A drone cannot avoid a restricted zone if it does not know the boundary. An autonomous vehicle cannot merge safely unless it understands lanes, curvature, elevation, and the behavior of nearby agents. Spatial intelligence is not a feature layered on top. It is foundational.

Physical AI systems operate in dynamic environments where roads change overnight, construction zones appear without notice, and terrain conditions shift with the weather. Static GIS is no longer enough. What we need now is real-time spatial intelligence that evolves alongside the physical world.

This detailed guide explores the challenges, emerging solutions, and real-world applications shaping geospatial data services for Physical AI.

What Are Geospatial Data Services for Physical AI?

Geospatial data services for Physical AI extend beyond traditional mapping. They encompass the collection, processing, validation, and continuous updating of spatial datasets that autonomous systems depend on for decision-making.

Core Components in Physical AI Geospatial Services

Data Acquisition

Satellite imagery provides broad coverage. It captures cities, coastlines, agricultural zones, and infrastructure networks. For disaster response or large-scale monitoring, satellites often provide the first signal that something has changed. Aerial and drone imaging offer higher resolution and flexibility. A utility company might deploy drones to inspect transmission lines after a storm. A municipality could capture updated imagery for an expanding suburban area.

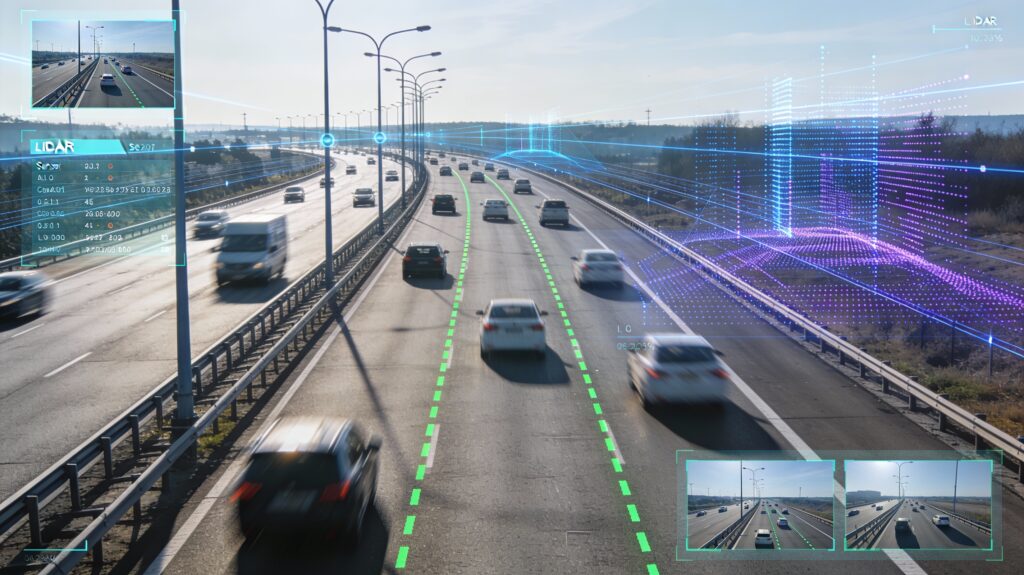

LiDAR point clouds add depth. They reveal elevation, object geometry, and fine-grained surface detail. In dense urban corridors, LiDAR helps distinguish between overlapping structures such as overpasses and adjacent buildings. Ground vehicle sensors, including cameras and depth sensors, collect street-level perspectives. These are particularly critical for lane-level mapping and object detection.

GNSS, combined with inertial measurement units, provides positioning and orientation. Radar contributes to perception in rain, fog, and low visibility conditions. Each source offers a partial view. Together, they create a composite understanding of the environment.

Data Processing and Fusion

Raw data is rarely usable in isolation. Sensor alignment is necessary to ensure that LiDAR points correspond to camera frames and that GNSS coordinates match physical landmarks. Multi-modal fusion integrates vision, LiDAR, GNSS, and radar streams. The goal is to produce a coherent spatial model that compensates for the weaknesses of individual sensors. A camera might misinterpret shadows. LiDAR might struggle with reflective surfaces. GNSS signals can degrade in urban canyons. Fusion helps mitigate these vulnerabilities.
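
One simple way to see how fusion compensates for a weak sensor is inverse-variance weighting, sketched below. This is an illustrative technique rather than a method the article prescribes; the function name `fuse_estimates` and the sample readings are hypothetical, and the sketch assumes the sensors' errors are independent.

```python
def fuse_estimates(estimates):
    """Fuse independent (value, variance) pairs by inverse-variance weighting."""
    weights = [1.0 / var for _, var in estimates]
    total = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, estimates)) / total
    fused_variance = 1.0 / total
    return fused_value, fused_variance


# Hypothetical along-track position readings in meters: (value, variance).
gnss = (105.0, 4.0)   # degraded, e.g. in an urban canyon
lidar = (100.0, 1.0)  # tighter estimate, e.g. from map matching
position, variance = fuse_estimates([gnss, lidar])
```

The fused position sits closer to the lower-variance reading, and the fused variance is smaller than either input, which is the sense in which fusion mitigates individual sensor weaknesses.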

Temporal synchronization is equally important. Data captured at different times can create inconsistencies if not properly aligned. For high-speed vehicles, even small timing discrepancies may lead to misjudgments. Cross-view alignment connects satellite or aerial imagery with ground-level observations. This enables systems to reconcile top-down perspectives with street-level realities. Noise filtering and anomaly detection remove spurious readings and flag sensor irregularities. Without this step, small errors accumulate quickly.
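
As a rough illustration of temporal synchronization, the sketch below pairs each LiDAR sweep with the nearest camera frame and discards pairs that are too far apart in time. The function name `match_nearest`, the 20 ms tolerance, and the timestamps are assumptions made for the example.

```python
from bisect import bisect_left


def match_nearest(lidar_stamps, camera_stamps, max_gap_s=0.02):
    """Pair each LiDAR timestamp with the closest camera timestamp.

    camera_stamps must be sorted ascending. Pairs farther apart than
    max_gap_s are dropped rather than forced together.
    """
    pairs = []
    for t in lidar_stamps:
        i = bisect_left(camera_stamps, t)
        # Only the neighbors on either side of the insertion point can be nearest.
        candidates = camera_stamps[max(i - 1, 0):i + 1]
        if not candidates:
            continue
        nearest = min(candidates, key=lambda c: abs(c - t))
        if abs(nearest - t) <= max_gap_s:
            pairs.append((t, nearest))
    return pairs


# Hypothetical timestamps in seconds.
lidar = [0.00, 0.10, 0.20, 0.30]
camera = [0.005, 0.095, 0.260]
pairs = match_nearest(lidar, camera)
```

The tolerance matters because timing error translates directly into position error: at 30 m/s, a 20 ms offset corresponds to 0.6 m of travel.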

Spatial Representation

Once processed, spatial data must be represented in formats that AI systems can reason over. High-definition maps include vectorized lanes, traffic signals, boundaries, and objects. These maps are far more detailed than consumer navigation maps. They encode curvature, slope, and semantic labels. Three-dimensional terrain models capture elevation and surface variation. In off-road or military scenarios, this information may determine whether a vehicle can traverse a given path.

Semantic segmentation layers categorize regions such as road, sidewalk, vegetation, or building facade. These labels support object detection and scene understanding. Occupancy grids represent the environment as discrete cells marked as free or occupied. They are useful for path planning in robotics. Digital twins integrate multiple layers into a unified model of a city, facility, or region. They aim to reflect both geometry and dynamic state.
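
The occupancy grid idea can be sketched in a few lines. This is a minimal binary version under simplifying assumptions (2D points, a fixed grid origin at the lower-left corner, no probabilistic updates); `build_occupancy_grid` and the sample points are hypothetical.

```python
def build_occupancy_grid(points, width_m, height_m, cell_m):
    """Mark grid cells that contain at least one obstacle point.

    points: iterable of (x, y) in meters, origin at the grid's lower-left corner.
    Returns a list of rows; 1 means occupied, 0 means free.
    """
    cols = round(width_m / cell_m)
    rows = round(height_m / cell_m)
    grid = [[0] * cols for _ in range(rows)]
    for x, y in points:
        col, row = int(x // cell_m), int(y // cell_m)
        # Points outside the mapped area are ignored.
        if 0 <= col < cols and 0 <= row < rows:
            grid[row][col] = 1
    return grid


# Hypothetical obstacle returns; the last one falls outside the 4 m x 3 m area.
grid = build_occupancy_grid([(0.5, 0.5), (3.2, 2.9), (9.0, 9.0)], 4.0, 3.0, 1.0)
```

A path planner can then treat cells marked 0 as traversable. Practical systems often store an occupancy probability per cell instead of a hard 0 or 1.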

Continuous Updating and Validation

Spatial data ages quickly. A new roundabout appears. A bridge closes for maintenance. A temporary barrier blocks a lane. Systems must detect and incorporate these changes. Online map construction allows vehicles or drones to contribute updates continuously. Real-time change detection algorithms compare new observations with existing maps.
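
A minimal sketch of change detection, assuming map features carry stable identifiers and 2D positions in a shared frame (both are simplifications; real pipelines usually have to associate unlabeled observations first). The names `detect_changes`, `tolerance_m`, and the feature ids are hypothetical.

```python
import math


def detect_changes(map_features, observed, tolerance_m=0.5):
    """Compare observed feature positions against the stored map.

    Both inputs map a feature id to an (x, y) position in meters.
    Returns ids that are new, missing, or moved beyond tolerance_m.
    """
    added = sorted(set(observed) - set(map_features))
    missing = sorted(set(map_features) - set(observed))
    moved = sorted(
        fid for fid in set(map_features) & set(observed)
        if math.dist(map_features[fid], observed[fid]) > tolerance_m
    )
    return {"added": added, "missing": missing, "moved": moved}


stored = {"sign_12": (10.0, 5.0), "barrier_3": (40.0, 2.0), "lane_edge_7": (20.0, 0.0)}
seen = {"sign_12": (10.1, 5.0), "lane_edge_7": (20.0, 1.2), "barrier_9": (55.0, 2.0)}
changes = detect_changes(stored, seen)
```

A feature reported as missing may simply have been occluded during one pass, so flagged changes are typically treated as candidates for confirmation rather than applied immediately.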

Edge deployment ensures that critical updates reach devices with minimal latency. Human-in-the-loop quality assurance reviews ambiguous cases and validates complex annotations. Version control for spatial datasets tracks modifications and enables rollback if errors are introduced. In many ways, geospatial data management begins to resemble software engineering.
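
To make the version-control analogy concrete, here is a toy, in-memory sketch of a map store with append-only history and rollback. The class `VersionedMap` and its methods are hypothetical; a production system would persist snapshots or diffs rather than copying dictionaries.

```python
class VersionedMap:
    """Keeps every committed snapshot so a bad update can be rolled back."""

    def __init__(self, initial):
        self._history = [dict(initial)]

    @property
    def current(self):
        return dict(self._history[-1])

    def commit(self, changes, removed=()):
        """Apply changes on top of the latest snapshot; return the new version number."""
        snapshot = dict(self._history[-1])
        snapshot.update(changes)
        for fid in removed:
            snapshot.pop(fid, None)
        self._history.append(snapshot)
        return len(self._history) - 1

    def rollback(self, version):
        """Restore an earlier version by recording it as a new one (history stays append-only)."""
        self._history.append(dict(self._history[version]))
        return len(self._history) - 1


road_map = VersionedMap({"lane_1": "open"})
v1 = road_map.commit({"lane_1": "closed", "barrier_9": "present"})
v2 = road_map.rollback(0)  # the update turned out to be wrong
```

Recording the rollback as a new version, rather than deleting history, keeps an audit trail of what was deployed and when.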

Core Challenges in Geospatial Data for Physical AI

While the architecture appears straightforward, implementation is anything but simple.

Data Volume and Velocity

Petabytes of sensor data accumulate rapidly. A single autonomous vehicle can generate terabytes in a day. Multiply that across fleets, and the storage and processing demands escalate quickly. Continuous streaming requirements add complexity. Data must be ingested, processed, and distributed without introducing unacceptable delays. Cloud infrastructure offers scalability, but transmitting everything to centralized servers is not always practical.

Edge versus cloud trade-offs become critical. Processing at the edge reduces latency but constrains computational resources. Centralized processing offers scale but may introduce bottlenecks. Cost and scalability constraints loom in the background. High-resolution LiDAR and imagery are expensive to collect and store. Organizations must balance coverage, precision, and financial sustainability. The impact is tangible. Delays in map refresh can lead to unsafe navigation decisions. An outdated lane marking or a missing construction barrier might result in misaligned path planning.

Sensor Fusion Complexity

Aligning LiDAR, cameras, GNSS, and IMU data is mathematically demanding. Drift accumulates over time. Small calibration errors compound. Synchronization errors may cause mismatches between perceived and actual object positions. Calibration instability can arise from temperature changes or mechanical vibrations.
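
The way drift compounds can be illustrated with a small dead-reckoning simulation. The sketch assumes a vehicle driving straight at constant speed with a constant, uncorrected gyro bias; `dead_reckon` and the chosen speed and bias values are hypothetical.

```python
import math


def dead_reckon(speed_mps, gyro_bias_dps, duration_s, dt=0.01):
    """Integrate a straight-line drive with an uncorrected gyro bias.

    The true path runs along +x; the returned value is the lateral (y)
    error in meters that the estimate accumulates from heading drift alone.
    """
    heading = 0.0
    y = 0.0
    bias_rad = math.radians(gyro_bias_dps)
    for _ in range(round(duration_s / dt)):
        heading += bias_rad * dt                 # heading error grows linearly
        y += speed_mps * math.sin(heading) * dt  # lateral error grows faster
    return y


error_10s = dead_reckon(15.0, 0.1, 10.0)
error_60s = dead_reckon(15.0, 0.1, 60.0)
```

With these assumed values, the lateral error is roughly 1.3 m after 10 seconds and grows approximately with the square of elapsed time, which is why periodic correction against GNSS or map landmarks is needed.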

GNSS-denied environments present particular challenges. Urban canyons, tunnels, or hostile interference can degrade signals. Systems must then rely on alternative localization methods, which may be less precise. Localization errors directly affect autonomy performance. If a vehicle's position estimate is off by ten centimeters, that may be manageable. If the error grows to half a meter, lane keeping and obstacle avoidance degrade noticeably.

HD Map Lifecycle Management

Map staleness is a persistent risk. Road geometry changes due to construction. Temporary lane shifts occur during maintenance, and regulatory updates modify traffic rules. Urban areas often receive frequent updates, but rural regions may lag. Coverage gaps create uneven reliability.

A tension emerges between offline map generation and real-time updating. Offline methods allow thorough validation but lack immediacy. Real-time approaches adapt quickly but may introduce inconsistencies if not carefully managed.

Spatial Reasoning Limitations in AI Models

Even advanced AI models sometimes struggle with spatial reasoning. Understanding distances, routes, and relationships between objects in three-dimensional space is not trivial. Cross-view reasoning, such as aligning satellite imagery with ground-level observations, can be error-prone. Models trained primarily on textual or image data may lack explicit spatial grounding.

Dynamic environments complicate matters further. A static map may not capture a moving pedestrian or a temporary road closure. Systems must interpret context continuously. The implication is subtle but important. Foundation models are not inherently spatially grounded. They require explicit integration with geospatial data layers and reasoning mechanisms.

Data Quality and Annotation Challenges

Three-dimensional point cloud labeling is complex. Annotators must interpret dense clusters of points and assign semantic categories accurately. Vectorized lane annotation demands precision. A slight misalignment in curvature can propagate into navigation errors.

Multilingual geospatial metadata introduces additional complexity, especially in cross-border contexts. Legal boundaries, infrastructure labels, and regulatory terms may vary by jurisdiction. Boundary definitions in defense or critical infrastructure settings can be sensitive. Mislabeling restricted zones is not a trivial mistake. Maintaining consistency at scale is an operational challenge. As datasets grow, ensuring uniform labeling standards becomes harder.

Interoperability and Standardization

Different coordinate systems and projections complicate integration. Format incompatibilities require conversion pipelines. Data governance constraints differ between regions. Compliance requirements may restrict how and where data is stored. Cross-border data restrictions can limit collaboration. Interoperability is not glamorous work, but without it, spatial systems fragment into silos.
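
As one concrete example of a conversion step, the sketch below converts WGS84 longitude and latitude to Web Mercator (EPSG:3857) and back using the standard spherical formulas. The function names and the sample coordinate are illustrative; production pipelines would more likely rely on an established projection library such as pyproj.

```python
import math

EARTH_RADIUS_M = 6378137.0  # WGS84 semi-major axis, used as the Web Mercator sphere radius


def wgs84_to_web_mercator(lon_deg, lat_deg):
    """Project longitude/latitude in degrees to Web Mercator meters."""
    x = EARTH_RADIUS_M * math.radians(lon_deg)
    y = EARTH_RADIUS_M * math.log(math.tan(math.pi / 4 + math.radians(lat_deg) / 2))
    return x, y


def web_mercator_to_wgs84(x, y):
    """Inverse projection back to longitude/latitude in degrees."""
    lon = math.degrees(x / EARTH_RADIUS_M)
    lat = math.degrees(2 * math.atan(math.exp(y / EARTH_RADIUS_M)) - math.pi / 2)
    return lon, lat


# Hypothetical point; the round trip should recover the input.
x, y = wgs84_to_web_mercator(-122.4194, 37.7749)
lon, lat = web_mercator_to_wgs84(x, y)
```

Web Mercator distorts distances increasingly toward the poles, so mixing it with a local metric frame without conversion is a typical source of integration errors.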

Real-Time and Edge Constraints

Latency sensitivity is acute in autonomy. A delayed update could mean reacting too late to an obstacle. Energy constraints affect UAVs and mobile robots. Heavy processing drains batteries quickly. Bandwidth limitations restrict how much data can be transmitted in real time. On-device inference becomes necessary in many cases. Designing systems that balance performance, energy consumption, and communication efficiency is a constant exercise in compromise.

Emerging Solutions in Geospatial Data

Despite the challenges, progress continues steadily.

Online and Incremental HD Map Construction

Continuous map updating reduces staleness. Temporal fusion techniques aggregate observations over time, smoothing out anomalies. Change detection systems compare new sensor inputs against existing maps and flag discrepancies. Fleet-based collaborative mapping distributes the workload across multiple vehicles or drones.
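
One simple form of temporal fusion is to take the per-axis median of repeated sightings of the same feature, which suppresses a single bad observation. This is a sketch under assumed conditions (observations already associated with one feature, positions in a shared frame); `fuse_observations` and `min_passes` are hypothetical names.

```python
from statistics import median


def fuse_observations(observations, min_passes=3):
    """Combine repeated (x, y) sightings of one map feature.

    Returns the per-axis median, or None until enough passes have been seen.
    """
    if len(observations) < min_passes:
        return None
    xs, ys = zip(*observations)
    return median(xs), median(ys)


# Five fleet passes over the same sign; the fourth is an obvious outlier in x.
passes = [(10.0, 5.0), (10.2, 5.1), (9.9, 4.9), (14.0, 5.0), (10.1, 5.0)]
fused = fuse_observations(passes)
```

Requiring a minimum number of passes trades update speed for stability, which mirrors the tension between real-time and validated map updates.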

Advanced Multi-Sensor Fusion Architectures

Tightly coupled fusion pipelines integrate sensors at a deeper level rather than combining outputs at the end. Sensor anomaly detection identifies failing components. Drift correction systems recalibrate continuously. Cross-view geo-localization techniques improve positioning in GNSS-degraded environments. Localization accuracy improves in complex settings, such as dense cities or mountainous terrain.
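
Sensor anomaly detection can take many forms. A minimal sketch, using the modified z-score based on the median absolute deviation as a stand-in for whatever detector a real pipeline uses, is shown below. `flag_outliers` and the sample range readings are hypothetical; 0.6745 and 3.5 are the constants conventionally used with this score.

```python
from statistics import median


def flag_outliers(readings, threshold=3.5):
    """Return indices of readings whose modified z-score exceeds threshold."""
    center = median(readings)
    mad = median(abs(r - center) for r in readings)
    if mad == 0:
        # Simplification: with no spread, the score is undefined, so flag nothing.
        return []
    return [
        i for i, r in enumerate(readings)
        if 0.6745 * abs(r - center) / mad > threshold
    ]


# Hypothetical range readings in meters to a static landmark; one is corrupted.
ranges = [12.1, 12.0, 12.2, 11.9, 30.5, 12.1]
suspect = flag_outliers(ranges)
```

Because the median and MAD are barely affected by a single extreme value, the corrupted reading stands out without skewing the baseline it is compared against.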

Geospatial Digital Twins

Three-dimensional representations of cities and infrastructure allow stakeholders to visualize and simulate scenarios. Real-time synchronization integrates IoT streams, traffic data, and environmental sensors. Simulation-to-reality validation tests scenarios before deployment. Use cases range from infrastructure monitoring to defense simulations and smart city planning.

Foundation Models for Geospatial Reasoning

Pre-trained models adapted to spatial tasks can assist with scene interpretation and anomaly detection. Map-aware reasoning layers incorporate structured spatial data into decision processes. Geo-grounded language models enable natural language queries over maps.

Multi-modal spatial embeddings combine imagery, text, and structured geospatial data. Decision-making in disaster response, logistics, and defense may benefit from these integrations. Still, caution is warranted. Overreliance on generalized models without domain adaptation may introduce subtle errors.

Human-in-the-Loop Geospatial Workflows

AI-assisted annotation accelerates labeling, but human reviewers validate edge cases. Automated pre-labeling reduces repetitive tasks. Active learning loops prioritize uncertain samples for review. Quality validation checkpoints maintain standards. Automation reduces cost. Humans ensure safety and precision. The balance matters.
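
An active learning loop of this kind is often built on uncertainty sampling. The sketch below ranks samples by the entropy of the model's predicted class probabilities and sends the most uncertain ones to human review. The names `select_for_review` and `budget`, the tile ids, and the probabilities are hypothetical.

```python
import math


def entropy(probs):
    """Shannon entropy of a probability distribution (natural log)."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_review(predictions, budget):
    """Return the ids of the `budget` samples with the most uncertain predictions.

    predictions: dict of sample id -> list of class probabilities.
    """
    ranked = sorted(predictions, key=lambda sid: entropy(predictions[sid]), reverse=True)
    return ranked[:budget]


# Hypothetical pre-labeling output for three map tiles (road, sidewalk, vegetation).
model_output = {
    "tile_a": [0.98, 0.01, 0.01],
    "tile_b": [0.40, 0.35, 0.25],
    "tile_c": [0.70, 0.20, 0.10],
}
review_queue = select_for_review(model_output, budget=2)
```

Confident predictions such as `tile_a` are accepted automatically, while reviewer time goes to the ambiguous tiles where a human decision adds the most value.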

Synthetic and Simulation-Based Geospatial Data

Scenario generation creates rare events such as extreme weather or unexpected obstacles. Terrain modeling supports off-road testing. Weather augmentation simulates fog, rain, or snow conditions. Stress testing autonomous systems before deployment reveals weaknesses that might otherwise remain hidden.
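
Weather augmentation can be sketched as a transformation applied to recorded sensor data. The example below thins a LiDAR point cloud so that distant returns are more likely to be lost, loosely imitating fog. The exponential keep probability is an illustrative assumption rather than a validated attenuation model, and `simulate_fog_dropout` and `visibility_m` are hypothetical names.

```python
import math
import random


def simulate_fog_dropout(points, visibility_m, seed=0):
    """Randomly drop LiDAR returns, with farther points more likely to be lost.

    Each point is kept with probability exp(-range / visibility_m).
    """
    rng = random.Random(seed)  # seeded so augmented scenes are reproducible
    kept = []
    for x, y, z in points:
        range_m = math.sqrt(x * x + y * y + z * z)
        if rng.random() < math.exp(-range_m / visibility_m):
            kept.append((x, y, z))
    return kept


# Hypothetical scan: returns at increasing distance along the x axis.
scan = [(float(d), 0.0, 1.5) for d in range(5, 200, 5)]
foggy_scan = simulate_fog_dropout(scan, visibility_m=50.0)
```

Running a perception stack on both the original and the thinned scan gives a rough indication of how detection range degrades before any real-world fog testing.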

Real-World Applications of Geospatial Data Services in Physical AI

Autonomous Vehicles and Mobility

High-definition, map-driven localization supports lane-level navigation. Vehicles reference vectorized lanes and traffic rules. Construction zone updates are integrated through fleet-based map refinement. A single vehicle detecting a new barrier can propagate that information to others. Continuous, high-precision spatial datasets are essential. Without them, autonomy degrades quickly.

UAVs and Aerial Robotics

GNSS-denied navigation requires alternative localization methods. Cross-view geo-localization aligns aerial imagery with stored maps. Terrain-aware route planning reduces collision risk. In agriculture, drones map crop health and irrigation patterns with centimeter-level accuracy. Precision matters, as a few meters of error could mean misidentifying crop stress zones.

Defense and Security Systems

Autonomous ground vehicles rely on terrain intelligence. ISR data fusion integrates imagery, radar, and signals data. Edge-based spatial reasoning supports real-time situational awareness in contested environments. Strategic value lies in the timely, accurate interpretation of spatial information.

Smart Cities and Infrastructure Monitoring

Traffic optimization uses real-time spatial data to adjust signal timing. Digital twins of urban systems support planning. Energy grid mapping identifies faults and monitors asset health. Infrastructure anomaly detection flags structural issues early. Spatial awareness becomes an operational asset.

Climate and Environmental Monitoring

Satellite-based change detection identifies deforestation or urban expansion. Flood mapping supports emergency response. Wildfire spread modeling predicts risk zones. Coastal monitoring tracks erosion and sea level changes. In these contexts, spatial intelligence informs policy and action.

How DDD Can Help

Building and maintaining geospatial data infrastructure requires more than technical tools. It demands operational discipline, scalable annotation workflows, and continuous quality oversight.

Digital Divide Data supports Physical AI programs through end-to-end geospatial services. This includes high-precision 2D and 3D annotation, LiDAR point cloud labeling, vector map creation, and semantic segmentation. Teams are trained to handle complex spatial datasets across mobility, robotics, and defense contexts.

DDD also integrates human-in-the-loop validation frameworks that reduce error propagation. Active learning strategies help prioritize ambiguous cases. Structured QA pipelines ensure consistency across large-scale datasets. For organizations struggling with HD map updates, digital twin maintenance, or multi-sensor dataset management, DDD provides structured workflows designed to scale without sacrificing precision.

Talk to our experts and build spatial intelligence that scales with DDD’s geospatial data services.

Conclusion

Physical AI requires spatial awareness. That statement may sound straightforward, but its implications are profound. Autonomous systems cannot function safely without accurate, current, and structured geospatial data. Geospatial data services are becoming core AI infrastructure. They encompass acquisition, fusion, representation, validation, and continuous updating. Each layer introduces challenges, from data volume and sensor drift to interoperability and edge constraints.

Success depends on data quality, fusion architecture, lifecycle management, and human oversight. Automation accelerates workflows, yet human expertise remains indispensable. Competitive advantage will likely lie in scalable, continuously validated spatial pipelines. Organizations that treat geospatial data as a living system rather than a static asset are better positioned to deploy reliable Physical AI solutions.

The future of autonomy is not only about smarter algorithms. It is about better maps, maintained with discipline and care.

FAQs

How often should HD maps be updated for autonomous vehicles?

Update frequency depends on the deployment context. Dense urban areas may require near real-time updates, while rural highways can tolerate longer intervals. The key is implementing mechanisms for detecting and propagating changes quickly.

Can Physical AI systems operate without HD maps?

Some systems rely more heavily on real-time perception than pre-built maps. However, operating entirely without structured spatial data increases uncertainty and may reduce safety margins.

What role does edge computing play in geospatial AI?

Edge computing enables low-latency processing close to the sensor. It reduces dependence on continuous connectivity and supports faster decision-making.

Are digital twins necessary for all Physical AI deployments?

Not always. Digital twins are particularly useful for complex infrastructure, defense simulations, and smart city applications. Simpler deployments may rely on lighter-weight spatial models.

How do organizations balance data privacy with geospatial collection?

Compliance frameworks, anonymization techniques, and region-specific storage policies help manage privacy concerns while maintaining operational effectiveness.

Umang architects and drives full-funnel content marketing strategies for AI training data solutions, spanning computer vision, data annotation, data labelling, and Physical and Generative AI services. He works closely with senior leadership to shape DDD’s market positioning, translating complex technical capabilities into compelling narratives that resonate with global AI innovators.
