How to Prepare Enterprise Knowledge for Runtime Access by AI Agents?

Agent-ready data is not the same as training data for AI agents. Training data shapes how an agent reasons; agent-ready data determines what that agent can actually find and use at runtime. Most enterprise knowledge, stored across file servers, CRMs, wikis, and legacy document repositories, is structurally inaccessible to AI agents without deliberate preparation. That preparation is what AI data operations services are increasingly being designed to solve.

Estimates from IBM suggest roughly 90% of enterprise data is in a state that agents cannot reliably use. The failure is rarely about data volume; it is about structure, discoverability, and permission-aware indexing. Enterprises that deploy agents on top of raw, unprepared knowledge bases consistently find that retrieval quality degrades faster than model quality improves. The gap between what agents are capable of and what they can actually access is a data collection and curation problem as much as it is a model problem.

Key Takeaways

- Training data shapes how an agent reasons, while agent-ready data determines what it can actually find and use when executing a task.

- Roughly 90% of enterprise data is currently unusable by AI agents because it lacks the structure, semantic indexing, and permission metadata that agents need to retrieve it reliably.

- An agent operating on a poorly prepared knowledge base will underperform regardless of how capable its underlying model is.

- Semantic chunking, metadata enrichment, and permission mapping are non-negotiable preparation steps for any enterprise knowledge layer that agents will depend on.

- A knowledge layer that works at launch will degrade without active maintenance. Freshness management, retrieval validation, and ongoing human review need to be built into the operational pipeline from the start.

- The runtime knowledge layer and the model should be managed separately, with independent update cycles, so agents can access new information immediately without requiring retraining.

What Is Agent-Ready Data and How Does It Differ from Training Data?

Agent-ready data is the structured, semantically indexed, and permission-aware layer of enterprise knowledge that AI agents query at runtime to complete tasks. It is distinct from training data, which shapes the model’s parameters, reasoning style, and general capabilities during fine-tuning or pre-training. Training data is consumed once and baked into weights. Agent-ready data is consumed continuously, on demand, every time an agent executes a task.

A language model trained on general enterprise corpora may still fail at task execution if the knowledge it needs to retrieve (for example, a specific contract clause, a current pricing tier, or an access-controlled policy document) is not findable, correctly chunked, or linked to the right permissions. Agent performance is bounded not just by what the model knows but by what it can retrieve reliably.

Agent-ready data has three defining properties. First, it is structured so that agents can parse and chunk it predictably. Second, it is semantically indexed so that retrieval systems can surface contextually correct results, not just keyword matches. Third, it is permission-aware, meaning the agent’s access to a given piece of knowledge is governed by the same access controls that govern human access. Without all three, agents make decisions on incomplete or unauthorized information.

Why Do AI Agents Need a Dedicated Runtime Knowledge Layer?

AI agents operating in enterprise environments do not work from memory alone. They execute multi-step tasks, such as summarizing contracts, routing support tickets, and generating compliance reports, by pulling relevant knowledge from external sources mid-task. That retrieval needs to be fast, accurate, and contextually bound. A retrieval system built for search-engine-style queries tends to underperform when agents need to compose answers from multiple documents across different access tiers.

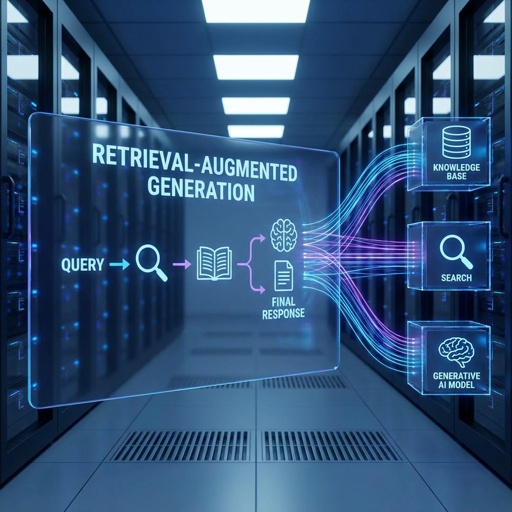

Retrieval-augmented generation (RAG) is currently the dominant architecture for giving agents runtime access to enterprise knowledge. But RAG systems are only as reliable as the knowledge base. Retrieval quality degrades when source documents are poorly chunked, inconsistently formatted, or missing metadata. The same failure modes apply to agent knowledge layers, often with higher stakes because agents act on retrieved content rather than just presenting it.

A dedicated runtime knowledge layer also enables agents to stay current without retraining. When new policies, product updates, or regulatory changes are added to the knowledge base with proper indexing, agents can access them immediately. Without this layer, teams are forced to retrain or fine-tune models each time domain knowledge changes.

What Makes Enterprise Data Structurally Inaccessible to AI Agents?

The 90% figure IBM cites is a structural indictment. Most enterprise data is rich with useful information. The problem is that it exists in formats, silos, and access structures that agents cannot navigate reliably.

The most common failure modes are:

- Unstructured formats: PDFs, scanned documents, slide decks, and email threads contain useful knowledge but are not chunked or indexed in ways that support semantic retrieval. Agents querying these sources tend to retrieve fragments rather than complete, contextually coherent answers.

- Implicit context: Enterprise documents often rely on organizational context that is not written down, such as acronyms, internal product names, and team-specific jargon. Without explicit metadata and entity linking, retrieval systems cannot resolve these references correctly.

- Permission fragmentation: Access controls in enterprise systems vary by document, folder, system, and user role. Agents that ignore these controls retrieve content that users should not see. Agents designed to enforce these controls often fail because the permission metadata is not captured in the knowledge layer.

- Stale content: Documents that are outdated, superseded, or archived are indistinguishable from current ones unless the knowledge layer explicitly tags version and validity status. Agents act on whichever version they retrieve.

The importance of data pipelines for AI systems becomes especially clear here. Agent-ready data does not emerge from existing repositories on its own. It requires active transformation: format normalization, semantic chunking, metadata enrichment, permission mapping, and ongoing freshness management.
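The stale-content failure mode can be made concrete with a small sketch. It assumes each record carries a hypothetical `family` (which document it is a version of), a numeric `version`, and a `status` tag; none of these field names come from a specific product, and a real repository may track supersession differently.

```python
def current_versions(records):
    """Keep, per document family, the highest-numbered version tagged 'current'."""
    latest = {}
    for rec in records:
        if rec["status"] != "current":
            continue  # archived or under-review versions are never served to agents
        best = latest.get(rec["family"])
        if best is None or rec["version"] > best["version"]:
            latest[rec["family"]] = rec
    return latest

# Illustrative records only; the names are made up for this sketch.
records = [
    {"family": "travel-policy", "version": 1, "status": "archived"},
    {"family": "travel-policy", "version": 2, "status": "current"},
    {"family": "travel-policy", "version": 3, "status": "under_review"},
    {"family": "pricing-sheet", "version": 1, "status": "current"},
]
servable = current_versions(records)
```

Without the `status` tag, version 3 of the travel policy would look like the newest document even though it has not been approved, which is exactly the ambiguity described above.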

How Do You Build a Semantically Indexed, Permission-Aware Knowledge Layer for AI Agents?

Building an agent-ready knowledge layer is sequenced data engineering. The sequence matters because each stage creates the conditions for the next one to work correctly.

Step 1: Inventory and format normalization

Start with a full inventory of enterprise knowledge sources: wikis, CRMs, document management systems, ticketing platforms, and policy repositories. Map each source to its format, update frequency, and access control model. Then normalize documents to a consistent format that supports reliable parsing and chunking. This is more than file conversion, because each format needs its own handling: scanned PDFs require OCR, slide decks require structured extraction of content by slide rather than bulk text, and tables require column header preservation.
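One way to picture the inventory is as a small table of sources, each mapped to the normalization it needs. The sketch below is illustrative only: the `KnowledgeSource` fields mirror the three attributes named above, while the format labels and step names in `NORMALIZATION_STEPS` are hypothetical placeholders rather than references to any particular tool.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class KnowledgeSource:
    name: str
    fmt: str               # e.g. "scanned_pdf", "slides", "table"
    update_frequency: str  # e.g. "daily", "monthly"
    access_model: str      # e.g. "role_based", "folder_acl"

# Hypothetical mapping from format to normalization steps (assumed labels).
NORMALIZATION_STEPS = {
    "scanned_pdf": ["ocr"],
    "slides": ["extract_per_slide"],
    "table": ["preserve_column_headers"],
}

def plan_normalization(sources):
    """Map each source name to its steps; unknown formats are routed to manual review."""
    return {s.name: NORMALIZATION_STEPS.get(s.fmt, ["manual_review"]) for s in sources}

inventory = [
    KnowledgeSource("policy_archive", "scanned_pdf", "monthly", "folder_acl"),
    KnowledgeSource("sales_decks", "slides", "weekly", "role_based"),
    KnowledgeSource("legacy_exports", "unknown_binary", "never", "unknown"),
]
plan = plan_normalization(inventory)
```

Routing unrecognized formats to review rather than skipping them keeps gaps in the inventory visible instead of silently dropping content.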

Step 2: Semantic chunking and entity linking

Chunking is the most consequential technical decision in knowledge layer design. Chunks that are too large dilute retrieval precision. Chunks that are too small lose context and produce incoherent completions. The right chunk size is domain-specific and depends on how agents will use the retrieved content. Entity linking, which maps mentions of products, people, policies, and locations to canonical identifiers, is what allows agents to resolve cross-document references correctly.
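As a minimal sketch of both ideas, the code below packs whole paragraphs into size-bounded chunks and then links known aliases to canonical identifiers. Paragraph packing is a deliberately simple stand-in for semantic chunking (production systems often split on headings or embedding similarity instead), and the `ALIASES` table, its identifiers, and the `max_chars` default are all assumptions made for illustration.

```python
import re

def chunk_by_paragraph(text, max_chars=500):
    """Pack whole paragraphs into chunks of at most max_chars.

    A single paragraph longer than max_chars is kept whole rather than cut mid-sentence.
    """
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    chunks, current = [], ""
    for para in paragraphs:
        candidate = f"{current}\n\n{para}" if current else para
        if current and len(candidate) > max_chars:
            chunks.append(current)
            current = para
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks

# Hypothetical alias table: surface form (lowercase) -> canonical entity ID.
ALIASES = {"acme pro": "product:acme-pro", "hr-12": "policy:hr-12"}

def link_entities(chunk):
    """Return sorted canonical IDs whose aliases appear as whole words in the chunk."""
    found = set()
    for alias, canonical in ALIASES.items():
        if re.search(rf"\b{re.escape(alias)}\b", chunk, flags=re.IGNORECASE):
            found.add(canonical)
    return sorted(found)

doc = "Acme Pro pricing starts at tier one.\n\nSee policy HR-12 for discounts."
chunks = chunk_by_paragraph(doc, max_chars=60)
entities = [link_entities(c) for c in chunks]
```

In practice the size limit would be tuned against real agent queries, as the paragraph above notes, rather than fixed up front.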

Step 3: Metadata enrichment

Every chunk in the knowledge layer needs structured metadata: document type, date, author, department, access tier, version status, and relevant topic tags. This metadata serves two functions. It powers filtered retrieval, narrowing the search space before semantic similarity scoring. It also carries permission information, so agents inherit the correct access controls from the source document. This kind of structured data layer can be built at scale, including for legacy content that was never systematically tagged.
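The metadata fields listed above could be captured in a record like the following. This is a sketch under assumptions: the class name, the attribute names, and the rule that a chunk needs at least one topic tag are illustrative choices, not a prescribed schema.

```python
from dataclasses import dataclass, field
from datetime import date

ACCESS_TIERS = ("public", "internal", "restricted", "confidential")
VERSION_STATUSES = ("current", "archived", "under_review")

@dataclass
class ChunkMetadata:
    doc_type: str
    doc_date: date
    author: str
    department: str
    access_tier: str
    version_status: str
    topic_tags: list = field(default_factory=list)

    def problems(self):
        """Return validation problems as strings; an empty list means the record is usable."""
        issues = []
        if self.access_tier not in ACCESS_TIERS:
            issues.append(f"unknown access tier: {self.access_tier!r}")
        if self.version_status not in VERSION_STATUSES:
            issues.append(f"unknown version status: {self.version_status!r}")
        if not self.topic_tags:
            issues.append("no topic tags")
        return issues

good = ChunkMetadata("policy", date(2024, 5, 1), "J. Doe", "HR",
                     "internal", "current", ["leave"])
bad = ChunkMetadata("contract", date(2019, 1, 15), "unknown", "Legal",
                    "secret", "current")
```

Running a check like `problems()` at ingestion gives a place to route incomplete records to human review before they reach the index.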

Step 4: Indexing and retrieval validation

Once content is chunked and enriched, it needs to be embedded and indexed in a vector store or hybrid search system. Indexing is not a one-time operation. It requires ongoing validation: checking retrieval precision and recall against representative agent queries, identifying content gaps, and monitoring for retrieval drift as the knowledge base grows. A reliable knowledge base for RAG-powered agents follows exactly this pattern.
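Precision and recall for a single query can be computed with a few lines. The sketch below assumes a hand-labeled set of relevant chunk IDs per query and that retrieved IDs are unique; the IDs shown are made up.

```python
def precision_recall_at_k(retrieved_ids, relevant_ids, k):
    """Precision@k and recall@k for one query, assuming retrieved IDs are unique."""
    top_k = retrieved_ids[:k]
    relevant = set(relevant_ids)
    hits = sum(1 for cid in top_k if cid in relevant)
    precision = hits / len(top_k) if top_k else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall

# Illustrative query result and labels.
retrieved = ["c1", "c7", "c3", "c9"]
relevant = {"c1", "c3", "c4"}
precision, recall = precision_recall_at_k(retrieved, relevant, k=3)
```

Tracking these two numbers over a fixed set of representative queries, re-run after each re-index, is one simple way to notice retrieval drift.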

What Role Does Metadata Play in Making Enterprise Knowledge Agent-Ready?

Metadata is the mechanism by which enterprise knowledge becomes navigable for agents. A document without metadata is a chunk of text. A document with structured metadata is a retrievable asset with defined scope, provenance, and access rules.

The specific metadata fields that matter most for agent-ready data are: document type (policy, contract, FAQ, technical spec), validity period (current, archived, under review), access tier (public, internal, restricted, confidential), owning team or department, and topic or domain tags. When retrieval is done against a metadata-filtered index, agents retrieve content from the right scope before semantic similarity scoring narrows to the best match. This two-stage retrieval (filter then rank) tends to outperform pure semantic search on enterprise knowledge tasks.
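The filter-then-rank pattern can be sketched without any vector database. The example below uses toy two-dimensional embeddings and plain dictionaries; the field names (`access_tier`, `validity`, `embedding`) and the function name are assumptions, and a real system would delegate both stages to its search backend.

```python
import math

def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def filter_then_rank(chunks, query_vec, allowed_tiers, validity="current", top_k=3):
    """Stage 1: metadata filter. Stage 2: rank the survivors by cosine similarity."""
    candidates = [c for c in chunks
                  if c["access_tier"] in allowed_tiers and c["validity"] == validity]
    ranked = sorted(candidates,
                    key=lambda c: cosine(c["embedding"], query_vec), reverse=True)
    return [c["id"] for c in ranked[:top_k]]

# Toy index; embeddings are illustrative, not produced by a real model.
index = [
    {"id": "a", "access_tier": "internal", "validity": "current", "embedding": [1.0, 0.0]},
    {"id": "b", "access_tier": "confidential", "validity": "current", "embedding": [0.9, 0.1]},
    {"id": "c", "access_tier": "internal", "validity": "archived", "embedding": [1.0, 0.0]},
    {"id": "d", "access_tier": "public", "validity": "current", "embedding": [0.0, 1.0]},
]
results = filter_then_rank(index, [1.0, 0.1], allowed_tiers={"public", "internal"})
```

Chunks `b` and `c` are close semantic matches but never reach the ranking stage, because the filter removes them on access tier and validity first.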

Permission metadata deserves particular attention. In most enterprise environments, access controls are stored in identity and access management systems that are separate from document repositories. Building a knowledge layer that accurately reflects these controls requires joining permission data with document metadata at ingestion time. This is an engineering problem with significant organizational complexity, but it is non-negotiable for any agent deployment that operates across information with different sensitivity levels.
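A simplified version of that ingestion-time join is sketched below. It assumes permissions are exported as a mapping from path prefix to allowed groups and that the most specific (longest) prefix wins; both are illustrative assumptions, since real identity and access management systems expose permissions in very different shapes.

```python
def attach_permissions(documents, acl_by_prefix):
    """Join documents with ACLs by longest matching path prefix.

    Documents with no matching ACL are returned separately and left out of the
    knowledge layer (fail closed) instead of being indexed without permissions.
    """
    enriched, unresolved = [], []
    for doc in documents:
        matches = [p for p in acl_by_prefix if doc["path"].startswith(p)]
        if not matches:
            unresolved.append(doc["id"])
            continue
        best = max(matches, key=len)  # assumed rule: most specific prefix wins
        enriched.append({**doc, "allowed_groups": sorted(acl_by_prefix[best])})
    return enriched, unresolved

# Hypothetical paths and group names.
docs = [
    {"id": "d1", "path": "/hr/policies/leave.pdf"},
    {"id": "d2", "path": "/hr/payroll/2024.xlsx"},
    {"id": "d3", "path": "/tmp/notes.txt"},
]
acl = {"/hr/": {"all-staff"}, "/hr/payroll/": {"payroll-admins"}}
enriched, unresolved = attach_permissions(docs, acl)
```

Holding back unresolved documents trades coverage for safety, which fits deployments that span several sensitivity levels.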

How Digital Divide Data Can Help

DDD works with enterprise AI teams that are past the proof-of-concept stage and dealing with the real-world problem of knowledge accessibility at scale. The work typically starts with end-to-end data collection and curation, inventorying the knowledge sources an agent program depends on, normalizing formats, and building the chunking and indexing pipelines that make retrieval reliable. DDD’s teams have worked across document types that tend to cause the most problems in enterprise deployments, specifically scanned legacy documents, multi-format policy repositories, and CRM knowledge bases with inconsistent field usage.

Where metadata is the limiting factor, DDD’s metadata enrichment and classification services apply structured human review to content that automated classifiers handle poorly. This includes ambiguous document types, documents that span multiple topic domains, and content where access tier classification requires domain judgment rather than rule-based logic. The output is a knowledge layer that agents can retrieve from with precision, not just with recall.


Conclusion

Agent-ready data is a distinct class of data preparation work that sits between training-time data and the model deployment layer. Agents that cannot reliably retrieve accurate, current, and permission-appropriate knowledge from enterprise repositories will underperform regardless of their reasoning capabilities. The preparation work (normalization, semantic chunking, metadata enrichment, permission mapping, and retrieval validation) determines how much of the model’s capability actually reaches production tasks.

Organizations that treat knowledge layer preparation as a one-time infrastructure task tend to find their agent programs degrading within the first operating year. Organizations that build ongoing data operations into their agent programs, with structured validation, freshness monitoring, and human review for edge cases, consistently achieve better retrieval precision over time. The difference is data discipline.

Frequently Asked Questions

What is agent-ready data and how is it different from training data for AI agents?

Agent-ready data is enterprise knowledge that has been structured, semantically indexed, and tagged with permission controls so AI agents can retrieve it accurately at runtime. Training data, by contrast, shapes the agent’s model weights during training and is consumed once. Agent-ready data is consulted continuously, every time the agent executes a task.

Why can AI agents not just use existing enterprise data repositories directly?

Most enterprise repositories were designed for human navigation: search boxes, folder structures, and access portals. AI agents need content that is chunked into predictable units, embedded in a vector index, tagged with structured metadata, and linked to the correct access controls. Raw repositories lack all of these properties, which is why IBM estimates roughly 90% of enterprise data is currently unusable by AI agents without transformation.

What is semantic chunking and why does it matter for AI agent performance?

Semantic chunking is the process of dividing documents into units that preserve contextual meaning rather than splitting arbitrarily by character count or page boundary. Getting chunking right is domain-specific and tends to require iteration against real agent queries. When chunks are too large, retrieval becomes imprecise and agents receive more context than they need. When chunks are too small, agents receive fragments that lack enough context to generate coherent answers.

How often does an agent-ready knowledge layer need to be updated?

Update frequency depends on how quickly the underlying enterprise knowledge changes. Policy repositories and regulatory content may change monthly; product databases and CRM knowledge can change daily. The knowledge layer needs to match the update cadence of its source content, with validation built into each update cycle to catch freshness, metadata quality, and retrieval precision issues before they affect agent performance.
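A cadence check along these lines can be sketched in a few lines. The cadence labels, their durations, and the source names below are assumptions for illustration; the idea is simply to flag any source whose last indexing run is older than its expected update interval.

```python
from datetime import datetime, timedelta

# Assumed cadence labels and their maximum acceptable index age.
CADENCE = {
    "daily": timedelta(days=1),
    "weekly": timedelta(days=7),
    "monthly": timedelta(days=30),
}

def stale_sources(last_indexed, cadence_by_source, now):
    """Return names of sources whose index is older than their update cadence allows."""
    return sorted(
        name for name, indexed_at in last_indexed.items()
        if now - indexed_at > CADENCE[cadence_by_source[name]]
    )

now = datetime(2025, 1, 31)
last_indexed = {"crm": datetime(2025, 1, 29), "policies": datetime(2025, 1, 10)}
cadence_by_source = {"crm": "daily", "policies": "monthly"}
overdue = stale_sources(last_indexed, cadence_by_source, now)
```

Here the CRM index is two days old against a daily cadence and gets flagged, while the policy index at three weeks old is still within its monthly window.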

Udit Khanna leads the delivery of scalable AI and data solutions at Digital Divide Data, with a deep specialization in Physical AI. With a background in presales, solutioning, and customer success, he brings a mix of technical depth and business fluency, helping global enterprises move their AI projects from prototype to real-world deployment without losing momentum.
