Digital Twin Validation for ADAS: How Simulation Is Replacing Miles on the Road

The argument for extensive real-world testing in ADAS development is intuitive. Drive enough miles, encounter enough situations, and the system will have seen the breadth of conditions it needs to handle. The problem is that the arithmetic does not support the strategy.

Demonstrating safety at a statistically meaningful confidence level for a full-autonomy system would require hundreds of millions, possibly billions, of real-world miles, run at a pace no single development program can sustain within any reasonable timeline.
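The scale of that arithmetic can be sketched with a standard zero-failure bound. Assuming failures arrive as a Poisson process, the failure-free miles needed to show with confidence C that the true failure rate is below a target rate r is n = -ln(1 - C) / r. The benchmark rate used below (about 1.09 fatalities per 100 million miles) is an illustrative assumption, not a figure taken from this article.

```python
import math

def miles_for_confidence(target_rate_per_mile: float, confidence: float) -> float:
    """Failure-free miles needed to claim, at the given confidence, that the
    true failure rate is below target_rate_per_mile.

    Assumes failures follow a Poisson process (zero-failure bound).
    """
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be strictly between 0 and 1")
    if target_rate_per_mile <= 0.0:
        raise ValueError("target rate must be positive")
    return -math.log(1.0 - confidence) / target_rate_per_mile

# Illustrative assumption: a benchmark of ~1.09 fatalities per 100 million miles.
BENCHMARK_RATE = 1.09 / 100_000_000

if __name__ == "__main__":
    miles = miles_for_confidence(BENCHMARK_RATE, 0.95)
    print(f"{miles / 1e6:.0f} million failure-free miles")
```

Under these assumptions the bound comes out at roughly 275 million failure-free miles, and that is only to match the benchmark. Showing that a system is meaningfully better than the benchmark pushes the number far higher.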

The events that determine whether an automatic emergency braking system fires correctly when a cyclist cuts across at night, or whether a lane-keeping system handles an unmarked temporary lane on a construction approach, are not the events that accumulate steadily during normal testing. They surface occasionally, in conditions that make systematic analysis difficult, and often in circumstances where no one is watching carefully enough to capture what happened. The rarest events are precisely the ones that most need to be tested deliberately and repeatedly.

This post examines what digital twin validation actually involves for ADAS programs, how sensor simulation fidelity determines whether results transfer to real-world performance, and what data and annotation workflows underpin an effective digital twin program.

What a Digital Twin for ADAS Validation Actually Is

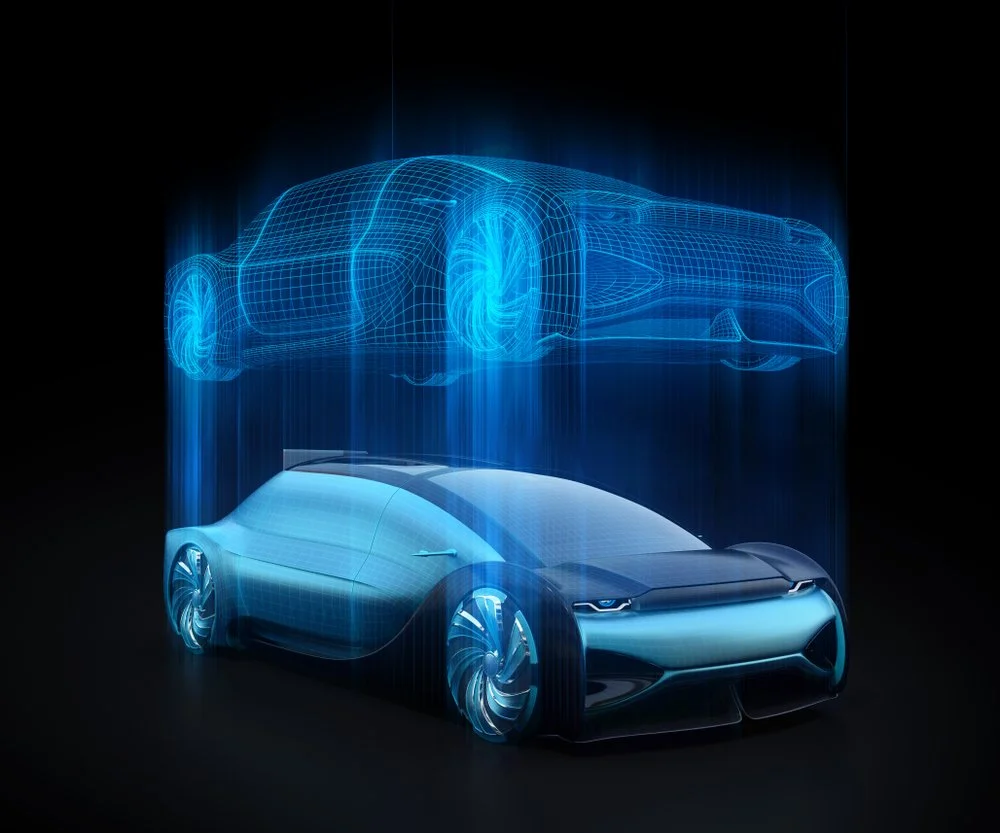

The term digital twin has accumulated enough promotional weight that it now covers a wide range of things, some genuinely sophisticated and some that amount to a conventional simulator with better graphics. In the specific context of ADAS validation, a digital twin has a reasonably precise meaning: a virtual environment that models the vehicle under test, the sensor suite on that vehicle, the road infrastructure the vehicle operates within, and the other road users it interacts with, at a fidelity level sufficient to produce sensor outputs that a real ADAS perception and control stack would respond to in the same way it would respond to the real-world equivalents.

The test of a digital twin’s validity is not whether it looks realistic to a human observer. It is whether the system being tested behaves in the digital twin as it would in the corresponding real scenario. A twin that produces beautiful photorealistic renders but whose simulated LiDAR point clouds have noise characteristics that differ from those of a real sensor will produce test results that do not transfer. A system that passes in simulation may fail in the field, not because the scenario was wrongly constructed but because the sensor simulation was insufficiently faithful to the physics of the hardware it was supposed to represent.

The components that define simulation fidelity

A production-grade digital twin for ADAS validation has several interdependent components. The vehicle dynamics model must replicate how the test vehicle responds to control inputs under realistic conditions, including stress scenarios like emergency braking on reduced-friction surfaces.

The environment model must replicate road geometry, surface material properties, and surrounding road user behavior in physically grounded ways. And the sensor simulation layer, where most of the difficulty lives, must replicate how each sensor in the multisensor fusion stack responds to the simulated environment, including the degradation modes that matter most for safety testing: LiDAR scatter in precipitation, camera behavior under low light, and radar multipath behavior near metallic infrastructure. Sensor simulation fidelity is the component that most frequently limits the usefulness of digital twin validation in practice, and it is the one most directly dependent on the quality of underlying real-world annotation data.

Sensor Simulation Fidelity: The Core Technical Challenge

LiDAR simulation and why physics matters

LiDAR is among the most demanding sensors to simulate accurately. Real sensors fire discrete laser pulses and measure the time of flight of reflected light. The returned point cloud is shaped by scene geometry, surface reflectivity, and atmospheric conditions affecting pulse propagation. Rain, fog, and airborne particulates all introduce scatter that modifies the point cloud in ways that directly affect the perception algorithms operating on it and the 3D LiDAR annotation used to build ground-truth training data for those algorithms.

A high-fidelity LiDAR simulator must model the angular resolution and range characteristics of the specific sensor being tested, apply realistic reflectivity based on material properties of every surface in the scene, and introduce atmospheric degradation that varies with simulated weather conditions.
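To make the interaction between reflectivity, range, and atmosphere concrete, here is a deliberately simplified sketch. It assumes a single return per beam, diffuse surfaces, 1/r^2 spreading, and two-way Beer-Lambert attenuation. The names `Surface`, `return_power`, and `simulate_returns`, along with the threshold and extinction values, are hypothetical. A production simulator would also model beam divergence, multiple returns, and backscatter from the fog itself.

```python
import math
from dataclasses import dataclass

@dataclass
class Surface:
    range_m: float       # distance from the sensor to the surface along the beam
    reflectivity: float  # diffuse reflectivity in [0, 1]

def return_power(surface: Surface, extinction_per_m: float) -> float:
    """Relative received power for one pulse: reflectivity scaled by two-way
    atmospheric attenuation (Beer-Lambert) and 1/r^2 geometric spreading."""
    two_way_loss = math.exp(-2.0 * extinction_per_m * surface.range_m)
    return surface.reflectivity * two_way_loss / surface.range_m ** 2

def simulate_returns(surfaces, extinction_per_m: float, detection_threshold: float):
    """Keep only the surfaces whose return reaches the detection threshold."""
    return [s for s in surfaces
            if return_power(s, extinction_per_m) >= detection_threshold]

if __name__ == "__main__":
    scene = [Surface(20.0, 0.8), Surface(80.0, 0.1)]  # near bright, far dark
    for label, extinction in [("clear", 0.0), ("fog", 0.02)]:
        kept = simulate_returns(scene, extinction, detection_threshold=1e-5)
        print(label, [s.range_m for s in kept])
```

Even in this toy model the distant, low-reflectivity surface is detected in clear air and lost in fog, which is the kind of behavior a perception stack must be tested against.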

One reported high-fidelity digital twin framework, incorporating real-world background geometry, sensor-specific specifications, and lane-level road topology, produced LiDAR training data good enough that a 3D object detector trained on it outperformed an equivalent model trained on real collected data by nearly five percentage points on the same evaluation set. That result illustrates what high-fidelity simulation can achieve at its best. It also illustrates why fidelity is non-negotiable: a simulator that misrepresents surface reflectivity or atmospheric scatter will generate a training-validation gap that no amount of hyperparameter tuning will fully close.

Camera simulation and the domain adaptation problem

Camera simulation presents a structurally different set of challenges. Real automotive cameras are complex electro-optical systems with specific spectral sensitivities, noise floors, lens distortions, rolling shutter effects, and dynamic range limits. A simulation that renders scenes using a game engine’s default camera model produces images that differ from real sensor output in precisely the conditions where safety matters most: low light, the edges of dynamic range, and environments where lens flare or bloom are factors.
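A minimal sketch shows why low light and the edges of dynamic range are where a default camera model falls short. It assumes a simplified pixel model with photon shot noise (approximated as Gaussian), Gaussian read noise, full-well clipping, and ADC quantization. The function names and default parameter values are hypothetical and not taken from any particular sensor.

```python
import random
from typing import Optional

def sensor_response(photo_electrons: float, *, read_noise_e: float = 2.0,
                    full_well_e: float = 10_000.0, bits: int = 12,
                    rng: Optional[random.Random] = None) -> int:
    """Digital code for one pixel exposed to a mean photo-electron count."""
    rng = rng or random.Random()
    signal = max(photo_electrons, 0.0)
    shot = rng.gauss(0.0, signal ** 0.5)   # shot noise: variance equals the signal
    read = rng.gauss(0.0, read_noise_e)    # readout electronics noise
    electrons = min(max(signal + shot + read, 0.0), full_well_e)  # clip to full well
    max_code = (1 << bits) - 1
    return round(electrons / full_well_e * max_code)  # ADC quantization

def snr(photo_electrons: float, read_noise_e: float = 2.0) -> float:
    """Signal-to-noise ratio under the same shot-plus-read noise model."""
    return photo_electrons / (photo_electrons + read_noise_e ** 2) ** 0.5

if __name__ == "__main__":
    rng = random.Random(0)
    print("dim pixel codes:", [sensor_response(50.0, rng=rng) for _ in range(5)])
    print("SNR at 100 e-:", round(snr(100.0), 1), "at 10000 e-:", round(snr(10_000.0), 1))
```

A renderer that outputs clean, unclipped pixel values skips every step in this chain, and the missing steps matter most in dim or saturated regions of the image.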

Two main approaches have emerged for closing this gap. Physics-based camera models, which simulate light propagation, surface material interactions, lens optics, and sensor electronics explicitly, produce high-fidelity outputs but are computationally intensive. Data-driven approaches using neural rendering techniques, including neural radiance fields and Gaussian splatting, can reconstruct real-world scenes with high realism at lower computational cost for captured environments, but they lack the flexibility to generate novel scenarios that differ substantially from the captured training distribution. Most mature programs use a combination, applying physics-based modeling for safety-critical validation scenarios where fidelity is paramount and data-driven rendering for large-scale scenario sweeps where throughput is the priority.

Radar simulation

Radar simulation is arguably harder than LiDAR or camera simulation because the electromagnetic phenomena involved are more complex and less amenable to the ray-tracing approximations that work reasonably well for optical sensors. Physically accurate radar simulation must model multipath reflections, Doppler frequency shifts from moving objects, polarimetric properties of target surfaces, and the interference patterns that arise in dense traffic.
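Two of those effects can be illustrated with first-order formulas. The sketch below assumes a monostatic radar with a 77 GHz carrier (an assumed value typical of automotive radar), the standard Doppler relation f_d = 2 v f_c / c, and a single-bounce multipath model in 2D. The function names are hypothetical, and none of this substitutes for a full electromagnetic simulation.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0

def doppler_shift_hz(radial_velocity_m_s: float, carrier_hz: float = 77e9) -> float:
    """Doppler shift seen by a monostatic radar for a target closing at the
    given radial velocity (positive means approaching)."""
    return 2.0 * radial_velocity_m_s * carrier_hz / SPEED_OF_LIGHT_M_S

def apparent_range_m(sensor, target, reflector) -> float:
    """Range reported when the signal travels out directly but returns via one
    bounce off a reflector (for example a guardrail). Points are (x, y) in metres."""
    direct = math.dist(sensor, target)
    bounced = math.dist(target, reflector) + math.dist(reflector, sensor)
    return (direct + bounced) / 2.0

if __name__ == "__main__":
    print(f"Doppler at 30 m/s: {doppler_shift_hz(30.0):.0f} Hz")
    ghost = apparent_range_m((0.0, 0.0), (30.0, 0.0), (15.0, 4.0))
    print(f"True range 30.0 m, multipath ghost at {ghost:.2f} m")
```

The ghost target sits slightly beyond the true one, which is why multipath near metallic infrastructure can produce detections that do not correspond to any real object.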

Physics-based radar simulation embedded in an Unreal Engine environment represents one of the more mature approaches to this problem, generating detailed radar returns including tens of thousands of reflection points with accurate signal amplitudes within a photorealistic simulation environment. For ADAS programs increasingly moving toward raw-data sensor fusion rather than object-list fusion, this level of radar simulation fidelity becomes necessary for meaningful validation rather than an optional enhancement.

The Data Infrastructure Behind a Reliable Digital Twin

Real-world data as the foundation

A digital twin does not materialize from scratch. The environment models, sensor calibration parameters, traffic behavior distributions, and road geometry that populate a production-grade digital twin all derive from real-world data collection and annotation. Building a digital twin of a specific urban intersection requires photogrammetric capture of the intersection’s three-dimensional geometry, material property data for each road surface element, and empirical traffic behavior data characterizing how vehicles and pedestrians actually move through the space. All of that data requires annotation before it becomes usable. DDD’s simulation operations services are built around exactly this dependency, ensuring that data feeding a simulation environment meets the standards the environment needs to produce trustworthy test results.

The quality chain is direct and unforgiving. An environment model built from inaccurately annotated photogrammetric data misrepresents road geometry in ways that propagate through every test run conducted in that environment. Surface material properties that are incorrectly labeled produce incorrect sensor outputs, which produce incorrect model responses, none of which will transfer to real hardware. The annotation quality of the underlying real-world data is not a secondary consideration in digital twin validation. It is the foundation on which everything else depends.

Scenario libraries and how they are constructed

The value of a digital twin validation program is proportional to the breadth and coverage quality of the scenario library it tests against. A scenario library is a structured collection of test cases, each specifying the environment type, initial vehicle state, behavior of surrounding road users, any infrastructure conditions relevant to the test, and the expected system response. Building a comprehensive library requires systematic analysis of the operational design domain, identification of safety-relevant scenario categories within that domain, and construction of specific annotated instances of each category in a format the simulation environment can execute.
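The structure described above maps naturally onto a small data model. The sketch below is one possible shape, not a standard schema: the class names, field names, and example values are all hypothetical, and real programs typically store scenarios in whatever format their simulation environment executes.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple

@dataclass(frozen=True)
class Actor:
    kind: str      # e.g. "cyclist", "pedestrian", "vehicle"
    behavior: str  # e.g. "crosses_from_right"

@dataclass(frozen=True)
class Scenario:
    scenario_id: str
    odd_category: str               # safety-relevant category within the ODD
    environment: str                # environment type, e.g. "urban_junction"
    ego_initial_speed_kph: float    # initial vehicle state
    actors: Tuple[Actor, ...]       # surrounding road users and their behavior
    infrastructure: Tuple[str, ...] = ()  # relevant infrastructure conditions
    expected_response: str = ""     # what the system under test should do

def group_by_category(library: List[Scenario]) -> Dict[str, List[Scenario]]:
    """Index the library by ODD category so coverage can be inspected."""
    grouped: Dict[str, List[Scenario]] = defaultdict(list)
    for scenario in library:
        grouped[scenario.odd_category].append(scenario)
    return dict(grouped)

if __name__ == "__main__":
    library = [
        Scenario("aeb-001", "vulnerable_road_user", "urban_street", 40.0,
                 (Actor("cyclist", "crosses_from_right"),),
                 expected_response="brake_to_stop"),
        Scenario("lka-001", "construction_zone", "motorway_approach", 80.0,
                 (), ("temporary_lane_unmarked",), "hold_lane_or_hand_over"),
    ]
    for category, cases in group_by_category(library).items():
        print(category, [c.scenario_id for c in cases])
```

Keeping the expected response in the same record as the scenario definition means every test case carries its own pass criterion, which simplifies automated evaluation.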

This is where ODD analysis and edge case curation connect directly to the digital twin workflow. ODD analysis defines the boundaries of the operational domain the system is designed for, determining which scenario categories belong in the test library. Edge case curation identifies the rare, safety-critical scenarios that most need simulation coverage precisely because they cannot be reliably encountered in real-world fleet testing. Together, they determine what the digital twin program actually validates, and gaps in either translate directly into gaps in the safety case.

Annotation for sensor simulation validation

Validating sensor simulation fidelity requires annotated real-world data collected under conditions corresponding to the simulated scenarios. If the digital twin needs to simulate a junction at dusk in moderate rain, the validation process requires real sensor data from a comparable junction under comparable conditions, with relevant objects annotated to ground truth, so simulated sensor outputs can be quantitatively compared against what real hardware produces.
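One way to make that comparison quantitative for LiDAR is a point-cloud distance such as the symmetric Chamfer distance. This is an assumed choice of metric for illustration, one of several a program might use, and the brute-force implementation below is only suitable for small clouds.

```python
import math

def chamfer_distance(cloud_a, cloud_b) -> float:
    """Symmetric Chamfer distance between two point clouds.

    Each cloud is a non-empty sequence of (x, y, z) tuples in metres. The
    result is the average of the two mean nearest-neighbour distances.
    Brute force, O(len(a) * len(b)).
    """
    if not cloud_a or not cloud_b:
        raise ValueError("both point clouds must be non-empty")

    def mean_nearest(src, dst) -> float:
        return sum(min(math.dist(p, q) for q in dst) for p in src) / len(src)

    return 0.5 * (mean_nearest(cloud_a, cloud_b) + mean_nearest(cloud_b, cloud_a))

if __name__ == "__main__":
    real = [(10.0, 0.0, 0.5), (10.0, 0.5, 0.5), (10.0, 1.0, 0.5)]
    simulated = [(10.1, 0.0, 0.5), (10.1, 0.5, 0.5), (10.1, 1.0, 0.5)]
    print(f"Chamfer distance: {chamfer_distance(real, simulated):.3f} m")
```

Computed per annotated object rather than per frame, a metric like this shows which object classes and conditions the simulator reproduces well and which it does not.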

This is a specialized annotation task sitting at the intersection of ML data annotation and sensor physics. It requires annotators who understand multi-modal sensor data structures and the physical processes that determine whether a simulated output is genuinely faithful to real hardware behavior. Teams that treat this as a commodity annotation task tend to discover the inadequacy of that assumption when their simulation results diverge from real-world performance at an inopportune moment.

What Simulation Can Reach That Physical Testing Cannot

The categories simulation was designed for

The strongest argument for digital twin validation is the coverage it provides in scenario categories where physical testing is genuinely impractical. Dangerous scenarios top that list. A test of how an AEB system responds when a child runs from behind a parked car directly into the vehicle’s path cannot be safely conducted with a real child. In a digital twin, that scenario can be executed thousands of times, with systematic variation of the child’s speed, trajectory, starting distance, the vehicle’s initial speed, road surface friction, and ambient light. Each variation is reproducible on demand, producing runs that physical testing cannot replicate under controlled conditions.
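The systematic variation described above amounts to a parameter sweep. A minimal sketch, assuming a full-factorial grid, follows. The parameter names and values are hypothetical placeholders, and real programs often use smarter sampling than an exhaustive grid.

```python
import itertools

# Hypothetical parameter grid for the child-runs-out AEB scenario.
SWEEP = {
    "child_speed_m_s": [1.5, 2.5, 3.5],
    "child_heading_deg": [60, 90, 120],
    "start_distance_m": [10.0, 20.0, 30.0],
    "ego_speed_kph": [30, 40, 50],
    "surface_friction": [0.4, 0.7, 1.0],
    "ambient_light": ["day", "dusk", "night"],
}

def expand_sweep(sweep):
    """Yield one parameter dict per combination (full-factorial expansion)."""
    keys = list(sweep)
    for values in itertools.product(*(sweep[k] for k in keys)):
        yield dict(zip(keys, values))

if __name__ == "__main__":
    cases = list(expand_sweep(SWEEP))
    print(len(cases), "concrete test cases")
    print(cases[0])
```

Six parameters with three values each already yield 729 reproducible cases, each of which can be re-run unchanged after a software update.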

Weather extremes offer another category where simulation provides coverage that physical testing cannot schedule reliably. Dense fog at sunrise over wet asphalt, heavy snowfall on a motorway approach, direct sun glare at a westward-facing junction in the late afternoon: all can be parameterized in a high-fidelity digital twin and tested systematically. A physical program that wanted equivalent weather coverage would need to wait for the right meteorological conditions, mobilize quickly when they appeared, and accept that exact conditions could not be reproduced for follow-up runs after a system change. The reproducibility advantage of simulation alone, independent of scale, provides meaningful validation depth that physical testing cannot match.

The domain gap as a structural limit

The domain gap between simulation and reality remains the fundamental constraint on how far digital twin evidence can be pushed without physical corroboration. No matter how high the fidelity, there will be aspects of the real world that the simulation does not capture with full accuracy. The question is not whether the gap exists but how large it is for each relevant scenario category, which performance dimensions it affects, and whether the scenarios that produce passing results in simulation are the same scenarios that would produce passing results on a test track.

Quantifying the domain gap requires a systematic comparison of system behavior in matched simulation and real-world scenarios. This is expensive to do comprehensively, so most programs use it selectively, validating the twin’s fidelity for specific scenario categories and calibrating confidence in simulation evidence accordingly. Programs that skip this calibration, treating simulation results as equivalent to physical test results without establishing the fidelity basis, build a safety case on a foundation they have not verified.
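A simple form of that comparison is a per-category agreement report over matched runs. The sketch below assumes each matched pair is reduced to a pass/fail outcome in simulation and on the track. The function name, record layout, and category labels are hypothetical.

```python
from collections import defaultdict

def domain_gap_report(matched_runs):
    """Summarize sim-versus-real agreement per scenario category.

    matched_runs: iterable of (category, sim_passed, real_passed) tuples, one
    per scenario executed both in simulation and physically.
    """
    stats = defaultdict(lambda: {"runs": 0, "agree": 0, "false_pass": 0})
    for category, sim_passed, real_passed in matched_runs:
        entry = stats[category]
        entry["runs"] += 1
        entry["agree"] += int(sim_passed == real_passed)
        # The dangerous case: simulation says pass, the track says fail.
        entry["false_pass"] += int(sim_passed and not real_passed)
    return {
        category: {
            "runs": s["runs"],
            "agreement": s["agree"] / s["runs"],
            "false_pass_rate": s["false_pass"] / s["runs"],
        }
        for category, s in stats.items()
    }

if __name__ == "__main__":
    runs = [
        ("night_cyclist", True, True),
        ("night_cyclist", True, False),
        ("rain_junction", False, False),
        ("rain_junction", True, True),
    ]
    for category, summary in domain_gap_report(runs).items():
        print(category, summary)
```

Separating false passes from overall agreement matters because a simulator that is pessimistic costs engineering time, while one that is optimistic undermines the safety case.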

Hardware-in-the-loop as a bridge

Hardware-in-the-loop testing, where real ADAS hardware connects to a virtual environment that provides synthetic sensor inputs in real time, occupies a useful middle ground between pure software simulation and track testing. HIL setups allow actual ADAS ECUs and perception stacks to process synthetic sensor data under real timing constraints, catching failure modes that arise from hardware-software interaction but would not surface in a purely software simulation. The sensor injection systems required for HIL testing, which convert simulated sensor outputs into the electrical signals a real ECU expects, are themselves complex engineering systems whose fidelity contributes to the overall validity of the results they produce.

What a Mature Digital Twin Validation Program Actually Looks Like

The validation pyramid

Mature digital twin validation programs organize their testing across a layered architecture. At the base are large-scale automated simulation runs testing individual functions across broad scenario spaces, potentially covering millions of test cases. In the middle layer are hardware-in-the-loop tests validating software-hardware integration for critical scenarios. At the top are track evaluations and limited real-world testing that calibrate confidence in simulation results and satisfy regulatory physical test requirements. Performance evaluation against a stable, versioned scenario library in simulation provides a consistent regression benchmark that physical testing cannot replicate, since track conditions and ambient environment vary unavoidably between test sessions.

The ratio of simulation to physical testing has been shifting steadily toward simulation as digital twin fidelity improves and regulatory acceptance grows. Programs that were running most of their validation miles on physical roads five years ago may now be running the majority of their scenario coverage in simulation, with physical testing focused on calibration runs, regulatory demonstrations, and specific scenario categories where the domain gap is known to be larger and where physical corroboration carries more weight.

Continuous integration and the speed advantage

One structural advantage of digital twin validation over physical testing is its natural compatibility with continuous integration development workflows. A software update that would take weeks to validate through track testing can be run against a full scenario library in simulation overnight. Development teams can catch regressions quickly and maintain a higher release cadence without sacrificing validation coverage.

Autonomous driving programs increasingly use simulation-based regression testing as a gating requirement for software changes, ensuring that every modification is validated against the full scenario library before being promoted to the next development stage. The economics of this approach favor programs that invest early in building a well-maintained, high-coverage scenario library that grows with the program.
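The gating logic itself can be very small. The sketch below assumes each simulation run is reduced to a mapping from scenario ID to pass/fail, and it treats a scenario missing from the candidate run as a regression. Both the function names and that policy are assumptions for illustration.

```python
def find_regressions(baseline: dict, candidate: dict) -> list:
    """Scenario IDs that passed on the baseline build but do not pass on the
    candidate build. A scenario absent from the candidate counts as failing."""
    return sorted(
        scenario_id
        for scenario_id, passed in baseline.items()
        if passed and not candidate.get(scenario_id, False)
    )

def gate(baseline: dict, candidate: dict) -> bool:
    """True if the candidate build may be promoted (no regressions found)."""
    return not find_regressions(baseline, candidate)

if __name__ == "__main__":
    baseline = {"aeb-001": True, "aeb-002": True, "lka-001": False}
    candidate = {"aeb-001": True, "aeb-002": False, "lka-001": True}
    print("regressions:", find_regressions(baseline, candidate))
    print("promote:", gate(baseline, candidate))
```

Note that a newly fixed scenario does not offset a newly broken one. The gate blocks on any regression, which is what makes it useful as a promotion requirement.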

The feedback loop from deployment

Digital twin environments are most valuable when they remain connected to real-world operational experience. Incidents from deployed vehicles, near-miss events flagged by safety operators, low-confidence detection events, and novel scenario types identified through ODD monitoring should all feed back into the digital twin scenario library, generating new test cases that directly address the failure modes operational deployment has revealed. This feedback loop transforms a digital twin from a static artifact built at program initiation into a living development tool that improves as the program matures. Programs that treat their scenario library as fixed after initial validation are leaving most of the long-term value of digital twin validation on the table.

Common Failure Modes in Digital Twin Validation Programs

Overconfidence in simulation results

The failure mode that most frequently undermines digital twin programs in practice is treating simulation results as equivalent to physical test results without establishing the fidelity basis that would justify that equivalence. A team that runs hundreds of thousands of simulation test cases and reports a high pass rate has produced meaningful evidence only if the simulation environment has been validated against real-world data for the tested scenario categories. Without that validation, high simulation pass rates can provide a false sense of security. The scenarios that fail in the real world may be precisely the scenarios for which the simulation was least faithful to actual physics.

Scenario library gaps

Another common failure mode is scenario library gaps, where the set of test cases run in simulation does not reflect the actual breadth of the operational design domain. Teams sometimes build libraries around the scenarios that are easiest to generate rather than the ones that are most safety-relevant. The edge case curation process is specifically designed to address this problem, identifying rare but high-consequence scenarios that must be covered regardless of the difficulty of constructing them in simulation. A digital twin program whose scenario library has not been systematically reviewed for ODD coverage gaps is likely to have tested the easy scenarios comprehensively and the important ones insufficiently.
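A coverage review of this kind can start with a mechanical check. The sketch below assumes the ODD analysis has produced a minimum number of test cases per scenario category. The function name, category labels, and thresholds are hypothetical.

```python
from collections import Counter

def coverage_gaps(required_minimums: dict, library_categories) -> dict:
    """Return {category: shortfall} for every ODD category whose number of
    test cases in the library is below the required minimum."""
    counts = Counter(library_categories)
    return {
        category: minimum - counts[category]
        for category, minimum in required_minimums.items()
        if counts[category] < minimum
    }

if __name__ == "__main__":
    required = {"highway_cut_in": 2, "night_cyclist": 3, "construction_zone": 1}
    library = ["highway_cut_in"] * 5 + ["night_cyclist"]
    print(coverage_gaps(required, library))
```

In this example the easy-to-generate category is over-covered while two safety-relevant categories fall short. A count-based check catches only missing volume, so it complements rather than replaces expert review of what each category should contain.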

Annotation quality in the simulation foundation

A third major failure mode is annotation quality problems in the underlying real-world data used to build or calibrate the simulation environment. Environmental geometry that is inaccurately captured, material properties that are mislabeled, or traffic behavior data that is unrepresentative of the actual deployment environment all degrade simulation fidelity in ways that are often invisible until real-world performance diverges from simulation predictions.

Teams that invest heavily in simulation tooling but treat the underlying data annotation as a commodity task typically discover this mismatch at the worst possible moment. High-quality annotation in the simulation foundation data is not optional. It is among the most cost-effective investments in overall simulation program quality available.

How DDD Can Help

Digital Divide Data provides dedicated digital twin validation services for ADAS and autonomous driving programs, supporting the data and annotation workflows that underpin effective simulation-based testing. DDD’s approach starts from the recognition that a digital twin is only as reliable as the data that builds and validates it, and that annotation quality in the underlying real-world data determines whether simulation results actually transfer to real-world performance.

On the simulation foundation side, DDD’s simulation operations capabilities support scenario library development, simulation environment data annotation, and systematic validation of sensor simulation fidelity against annotated real-world reference datasets. DDD annotation teams trained in multisensor fusion data produce the high-quality labeled datasets needed to validate whether simulated LiDAR, camera, and radar outputs match real-world sensor behavior under the conditions that matter most for safety testing.

For programs preparing regulatory submissions that include simulation-based evidence, DDD’s safety case analysis and performance evaluation services support the documentation and evidence generation required to demonstrate that the digital twin validation program meets the credibility standards regulators and certification bodies expect.

Talk to our experts to accelerate your ADAS validation program with a simulation-backed data infrastructure built to production quality.

Conclusion

Digital twin validation is not a shortcut around the hard work of ADAS development. It is a way of doing that work more thoroughly than physical testing can reach on its own. The scenarios that matter most for safety are precisely the ones physical testing cannot encounter efficiently: the rare, the dangerous, and the meteorologically specific.

A well-built digital twin, grounded in high-quality annotated data and systematically validated against real sensor outputs, makes it possible to test those scenarios deliberately, repeatedly, and at a scale that produces evidence meaningful enough to support both internal safety decisions and regulatory submissions. The teams that build this capability well, treating sensor simulation fidelity and annotation quality as foundational requirements rather than implementation details, will validate more completely, iterate more quickly, and produce safety cases that hold up under scrutiny from regulators who are themselves becoming more sophisticated about what credible simulation evidence actually looks like.

Regulators are not accepting all simulation results: they are accepting results from environments that have been demonstrated to be fit for purpose. That demonstration requires the same careful attention to data quality, annotation standards, and systematic validation that governs the rest of the Physical AI development pipeline. Digital twin validation does not reduce the importance of getting data right. If anything, it raises the stakes, because the credibility of every test result that flows through the simulation depends on the quality of the real-world data the simulation was built from and calibrated against.

References

Alirezaei, M., Singh, T., Gali, A., Ploeg, J., & van Hassel, E. (2024). Virtual verification and validation of autonomous vehicles: Toolchain and workflow. IntechOpen. https://www.intechopen.com/chapters/1206671

Volvo Autonomous Solutions. (2025, June). Digital twins: The ultimate virtual proving ground. Volvo Group. https://www.volvoautonomoussolutions.com/en-en/news-and-insights/insights/articles/2025/jun/digital-twins–the-ultimate-virtual-proving-ground.html

Siemens Digital Industries Software. (2025, August). Unlocking high fidelity radar simulation: Siemens and AnteMotion join forces. Simcenter Blog. https://blogs.sw.siemens.com/simcenter/siemens-antemotion-join-forces/

United Nations Economic Commission for Europe. (2024). Guidelines and recommendations for ADS safety requirements, assessments, and test methods. UNECE WP.29. https://unece.org/transport/publications/guidelines-and-recommendations-ads-safety-requirements-assessments-and-test

Frequently Asked Questions

How is a digital twin different from a conventional ADAS simulator?

A digital twin is continuously calibrated against real-world sensor data and validated to ensure its outputs match real hardware behavior, whereas a conventional simulator approximates reality without that ongoing fidelity verification and calibration loop.

What sensor is hardest to simulate accurately in a digital twin?

Radar is generally the most difficult to simulate with full physical accuracy because electromagnetic phenomena such as multipath reflection and Doppler effects require computationally expensive full-wave modeling, whereas LiDAR and camera simulation can be approximated more tractably with existing methods.

How often should a digital twin scenario library be updated?

Scenario libraries should be updated continuously as operational data reveals new edge cases, ODD boundaries shift, or system changes introduce new failure modes, rather than being treated as static artifacts constructed once at program initiation.

Umang architects and drives full-funnel content marketing strategies for AI training data solutions, spanning computer vision, data annotation, data labelling, and Physical and Generative AI services. He works closely with senior leadership to shape DDD’s market positioning, translating complex technical capabilities into compelling narratives that resonate with global AI innovators.
