Why Accurate Vulnerable Road User (VRU) Detection Is Critical for Autonomous Vehicle Safety

DDD Solutions Engineering Team

10 October, 2025

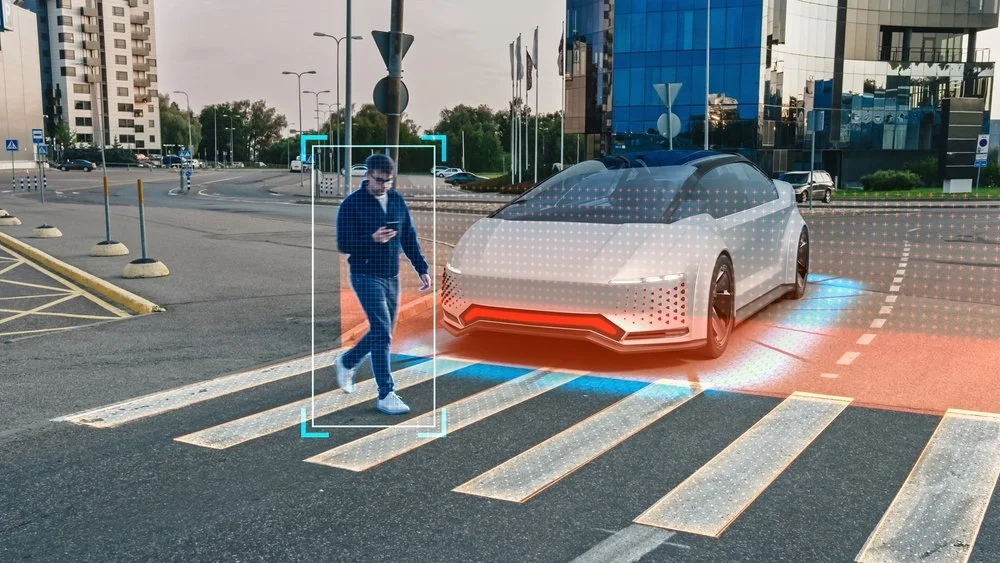

Even with the most advanced LiDAR arrays, radar systems, and AI vision models, autonomous vehicles still struggle with a fundamental challenge: human interaction. Pedestrians who dart across the street, cyclists weaving between lanes, or motorcyclists accelerating at unpredictable moments: these are the real stress tests for any self-driving system. Collectively known as Vulnerable Road Users, or VRUs, they represent the edge cases that determine whether autonomy can be called truly safe.

The sensors and models that govern AV behavior are improving rapidly, yet identifying and interpreting human movement, especially when it breaks expected patterns, remains the hardest task in the stack.

The idea that accurate VRU detection is merely a technical challenge misses the point. It is just as much about ethics and trust as it is about computer vision. A misread pedestrian gesture or a split-second delay in recognizing a cyclist is not an abstract algorithmic error; it’s a moment with real-world consequences.

This blog examines how detection precision, data diversity, and shared situational awareness are becoming the foundation of VRU safety in autonomous driving.

VRU Detection in AV Safety

VRU detection is about teaching machines to recognize and respond to the most unpredictable elements on the road: us. Autonomous vehicles rely on a layered perception system, comprising LiDAR for spatial mapping, cameras for color and context, radar for range and velocity, and increasingly, V2X (Vehicle-to-Everything) signals for cooperative awareness. Together, these sensors attempt to identify pedestrians, cyclists, and motorcyclists who might cross paths with the vehicle’s trajectory.

The challenge, though, is not just technical range or resolution. It lies in behavioral complexity. A pedestrian looking at their phone behaves differently from one who makes eye contact with a driver. Cyclists may switch lanes abruptly to avoid a pothole, or ride close to the curb where they blend into background clutter. Motorcyclists appear and vanish from radar frames faster than most models can track. The variability in human movement, combined with lighting changes, partial occlusion, or reflections, makes consistent detection extraordinarily difficult.

Even the best models trained on large datasets can falter in real-world situations. A paper bag floating across a crosswalk may be flagged as a pedestrian. A child emerging from behind a parked SUV might not be detected at all until the last possible moment. These aren’t rare occurrences; they represent the kind of “edge cases” that engineers lose sleep over. The problem isn’t reaction time or braking performance; it’s perceptual precision. A fraction of a second spent misclassifying or failing to track a human figure can turn a routine encounter into a crash scenario.
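The two failure modes above, the paper bag flagged as a pedestrian and the child who is missed, can be counted separately as precision and recall. The following is a minimal sketch, assuming hypothetical counts from a labeled evaluation set; the function name `detection_metrics` is illustrative and not taken from any particular toolkit.

```python
def detection_metrics(true_positives: int, false_positives: int, false_negatives: int) -> dict:
    """Compute precision and recall for a VRU detector from raw counts.

    precision: of everything flagged as a VRU, how much really was one
               (the paper bag lowers this).
    recall:    of all real VRUs, how many were detected
               (the occluded child lowers this).
    """
    flagged = true_positives + false_positives
    actual = true_positives + false_negatives
    precision = true_positives / flagged if flagged else 0.0
    recall = true_positives / actual if actual else 0.0
    return {"precision": precision, "recall": recall}


# Hypothetical counts, for illustration only.
metrics = detection_metrics(true_positives=90, false_positives=10, false_negatives=30)
print(f"precision={metrics['precision']:.2f} recall={metrics['recall']:.2f}")
```

For safety-critical detection, recall on VRUs is usually the number to watch most closely, since a missed person is costlier than an unnecessary slowdown.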

VRU detection, then, is not just about seeing. It’s about interpreting movement in context, deciding whether that figure on the sidewalk might step forward, or whether a cyclist wobbling near the curb is likely to merge into traffic. The success of AV safety will depend less on how far a sensor can see and more on how deeply a system can understand the intent behind what it sees.

Foundations of Reliable Vulnerable Road User (VRU) Detection

If perception is the brain of an autonomous vehicle, then data is its memory, and right now, that memory is uneven. Most public datasets used to train VRU detection models are heavily skewed toward controlled conditions: clear daylight, adult pedestrians, predictable crosswalks. Real cities are far messier. They have fog, cluttered signage, reflective puddles, and kids darting between parked cars. When models trained on pristine data are deployed in that chaos, errors multiply in ways that seem obvious only in hindsight.

Capturing these scenarios is complicated, sometimes ethically questionable, and occasionally dangerous. No one can stage thousands of near-collision events just to enrich a dataset. This is where simulation begins to fill the gap. Synthetic data, when generated with realistic physics and textures, can introduce the rare edge cases that real-world collection tends to avoid.

In 2024, Waymo and Nexar published a large-scale VRU injury dataset that helped researchers understand the circumstances of real incidents. Their findings fed directly into simulation frameworks designed to reproduce those conditions safely. Similarly, the DECICE project in Europe used synthetic augmentation pipelines to expose detection models to low-visibility and high-occlusion environments, situations that traditional datasets underrepresent. The results suggested that even limited synthetic training can significantly improve generalization, especially in urban intersections.

Simulation also plays a critical role in testing. A recent initiative from Carnegie Mellon University’s Safety21 Center (2025) introduced “Vehicle-in-Virtual-Environment” (VVE) testing, which allows an autonomous car to operate in a blended reality: real sensors and hardware responding to virtual VRUs projected into the system. This setup makes it possible to evaluate how perception and decision-making interact during near-miss moments that would be too risky to replicate physically.

Still, there’s a balance to strike. Synthetic data can’t perfectly capture the unpredictability of human motion: the uneven gait of a pedestrian in a hurry, or the hesitation before a cyclist commits to a turn. Overreliance on simulation risks training models that look statistically impressive but lack behavioral nuance. The most promising work appears to blend both worlds: real-world data for grounding, synthetic data for coverage. Reliable detection doesn’t come from more data alone, but from the right mix of data that reflects how humans actually behave on the street.
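As a rough illustration of blending the two sources, the sketch below draws a training set with a fixed synthetic share. It is a sketch under stated assumptions: the function name, the 30% ratio, and the sample identifiers are all hypothetical, and the right ratio for a given model would have to be found empirically.

```python
import random


def build_training_mix(real_samples, synthetic_samples, synthetic_fraction, total, seed=0):
    """Draw `total` samples, with roughly `synthetic_fraction` of them synthetic.

    Sampling is done with replacement, so small pools can still fill the quota.
    """
    if not 0.0 <= synthetic_fraction <= 1.0:
        raise ValueError("synthetic_fraction must be between 0 and 1")
    rng = random.Random(seed)  # fixed seed keeps the mix reproducible
    n_synthetic = round(total * synthetic_fraction)
    n_real = total - n_synthetic
    mix = rng.choices(real_samples, k=n_real) + rng.choices(synthetic_samples, k=n_synthetic)
    rng.shuffle(mix)
    return mix


# Hypothetical sample identifiers, for illustration only.
real = ["real_day_crosswalk", "real_dusk_cyclist"]
synthetic = ["synth_fog_child_occluded", "synth_night_motorcycle"]
training_set = build_training_mix(real, synthetic, synthetic_fraction=0.3, total=10)
print(training_set)
```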

Cooperative VRU Safety for Autonomy

For years, most VRU detection research focused on what an individual vehicle could see. The thinking was simple: give the car more sensors, better models, faster processors, and it would become safer. That assumption is starting to look incomplete. True safety may depend less on what one vehicle perceives and more on what the entire environment can sense and share.

Cooperative systems, often built on C-V2X (Cellular Vehicle-to-Everything) communication, are changing that narrative. By allowing vehicles, traffic lights, and roadside sensors to exchange information in real time, they let AVs detect VRUs that their own cameras or LiDAR might miss. A cyclist hidden behind a truck, for instance, might still be detected by a nearby camera-equipped intersection node and broadcast to approaching vehicles within milliseconds.
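To make the hidden-cyclist example concrete, here is a minimal sketch of how a vehicle might decide whether a broadcast alert is worth acting on. The `VruAlert` structure, its fields, and the range and freshness thresholds are all hypothetical; this is not the format of any standardized V2X message.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class VruAlert:
    """Hypothetical alert broadcast by an intersection node (not a standard format)."""
    vru_type: str      # e.g. "cyclist"
    x_m: float         # position in a shared local frame, metres
    y_m: float
    sent_at_s: float   # sender timestamp, seconds


def is_actionable(alert, vehicle_x_m, vehicle_y_m, now_s, max_range_m=80.0, max_age_s=0.5):
    """Keep alerts that are both close enough and fresh enough to matter."""
    distance_m = hypot(alert.x_m - vehicle_x_m, alert.y_m - vehicle_y_m)
    age_s = now_s - alert.sent_at_s
    return distance_m <= max_range_m and 0.0 <= age_s <= max_age_s


# A cyclist reported 50 m away, 100 ms ago: still relevant to this vehicle.
alert = VruAlert(vru_type="cyclist", x_m=30.0, y_m=40.0, sent_at_s=10.0)
print(is_actionable(alert, vehicle_x_m=0.0, vehicle_y_m=0.0, now_s=10.1))
```

The freshness check matters as much as the range check: a stale position for a moving cyclist can be worse than no position at all.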

The idea isn’t just about redundancy; it’s about foresight. If one system spots a potential risk early, others can react faster. Edge computing makes this possible. Rather than being sent to distant servers for processing, sensor data is analyzed locally, close to where the event occurs. European pilots like DECICE (2025) have demonstrated this approach at urban intersections, where localized compute units identify and track VRUs, then relay warnings directly to nearby vehicles. The reduction in communication lag translates to faster braking decisions and smoother avoidance maneuvers.
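The link between communication lag and braking can be worked through with simple arithmetic: a vehicle keeps travelling at its current speed until the warning arrives. The sketch below assumes illustrative latencies of 100 ms for a remote round trip and 20 ms for local edge processing; these figures are assumptions for the example, not measurements from any pilot.

```python
def distance_during_latency(speed_kmh: float, latency_ms: float) -> float:
    """Metres travelled at constant speed before a warning can be acted on."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s * latency_ms / 1000


# Illustrative latencies only; real values depend on the network and hardware.
speed_kmh = 36.0  # 10 m/s, a typical urban speed
remote_m = distance_during_latency(speed_kmh, latency_ms=100)
edge_m = distance_during_latency(speed_kmh, latency_ms=20)
print(f"remote: {remote_m:.1f} m, edge: {edge_m:.1f} m, saved: {remote_m - edge_m:.1f} m")
```

At 36 km/h the difference is about 0.8 m of travel before braking even begins, which is a meaningful margin when the VRU is a few metres away.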

There’s also a behavioral layer to this evolution. Some AV prototypes now adjust their behavior based on the predicted intent of nearby humans. If a pedestrian’s trajectory suggests hesitation, the car may ease acceleration to signal awareness. If a cyclist’s head movement hints at a lane change, the vehicle can create additional buffer space. These micro-adjustments, though still experimental, make AVs feel less robotic and more socially attuned, a subtle but important shift in public trust.

Cooperative safety is moving toward something more ecosystemic: a shared web of awareness connecting humans, infrastructure, and machines. The vision isn’t just that every car becomes smarter, but that every intersection, streetlight, and roadside sensor contributes to collective understanding. It’s a future where vehicles don’t operate as isolated agents but as participants in a city-wide dialogue about safety, a conversation where even the most vulnerable voices are finally heard.

Recommendations for Vulnerable Road User (VRU) Detection

Recognizing a person on the road is one thing; understanding what that person intends to do is another. Will that pedestrian at the curb actually cross, or are they just waiting for a rideshare? Will the cyclist glance over their shoulder before turning, or veer suddenly into the lane? These small contextual cues can mean the difference between a safe stop and a near-miss.

One notable example is VRU-CIPI (CVPRW 2025), a project focused on crossing-intention prediction at intersections. Rather than relying solely on bounding boxes and trajectories, it incorporates motion patterns, posture analysis, and even subtle environmental context, like nearby traffic lights or pedestrian signals, to forecast likely actions.

Another approach, PointGAN (IEEE VTC 2024), improves how LiDAR systems interpret sparse or noisy point clouds, a problem that often leads to missed detections in crowded or visually complex areas. By generating synthetic but physically consistent data, the model helps fill in those blind spots where traditional sensors fall short.

Still, the technology isn’t flawless. Intent-prediction networks can overfit to certain gestures or fail to generalize across cultures. People in Paris, for instance, cross differently than those in Phoenix. Lighting, weather, and even local driving etiquette can shift how “intention” manifests visually. The risk is that a system trained in one region might misread human behavior in another, an issue that global AV developers are still grappling with.

Engineers are leaning on multimodal sensor fusion, combining LiDAR depth accuracy with camera semantics, radar motion cues, and V2X infrastructure data. This hybrid approach appears to reduce false negatives and helps AVs “see” around corners by sharing signals from nearby vehicles or roadside units.
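One simple way to see why fusion reduces false negatives is a noisy-OR combination: if each sensor has some chance of detecting a VRU, the chance that all of them miss shrinks as sensors are added. The sketch below assumes sensors fail independently, which is a strong simplification (fog can degrade camera and LiDAR together), and the per-sensor probabilities are hypothetical. Production systems typically use more elaborate tracking or learned fusion.

```python
from math import prod


def fused_detection_probability(sensor_probs: dict) -> float:
    """Noisy-OR fusion: probability that at least one sensor detects the VRU.

    Assumes independent sensor failures, which real conditions often violate.
    """
    miss_probability = prod(1.0 - p for p in sensor_probs.values())
    return 1.0 - miss_probability


# Hypothetical per-sensor detection probabilities for one partially occluded cyclist.
probs = {"camera": 0.8, "lidar": 0.7, "radar": 0.5}
print(f"fused: {fused_detection_probability(probs):.2f}")  # roughly 0.97
```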

Despite the progress, the question remains open: can machines ever truly read human intent with enough subtlety to match the instincts of an experienced driver? The current trajectory suggests we’re getting closer, but understanding motion is not the same as understanding behavior. Bridging that gap will likely define the next decade of AV perception research.

Future Outlook of VRU Detection

The next five years are likely to redefine what “safe autonomy” means. Instead of pushing for faster reaction times or higher detection accuracy in isolation, researchers are starting to design systems that learn collectively and think contextually. The lines between perception, prediction, and policy are blurring, giving rise to a more connected ecosystem of safety.

One direction gaining momentum is the integration of digital twins, virtual replicas of real streets and intersections that evolve in real time. These environments simulate how pedestrians and vehicles interact, allowing engineers to test new safety algorithms across thousands of what-if scenarios before a single wheel turns.

Another trend that’s emerging is federated learning across fleets. Rather than pooling all raw sensor data, which would raise privacy and bandwidth issues, vehicles share only the learned model updates from their experiences. This way, a near-miss event in Los Angeles might quietly improve a vehicle’s decision-making model in Amsterdam within days. It’s a small but meaningful shift toward collective intelligence that doesn’t rely on massive centralized data storage.
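A common aggregation scheme for this kind of sharing is federated averaging, where a coordinator combines each vehicle's model weights in proportion to how much data that vehicle trained on. The text does not say which scheme fleets actually use, so treat the following as a minimal sketch of one option, with toy weight vectors and hypothetical sample counts.

```python
def federated_average(updates):
    """Weighted average of model weights from several vehicles.

    `updates` is a list of (weights, num_samples) pairs, where `weights`
    is a list of floats of equal length. No raw sensor data is involved.
    """
    total_samples = sum(num_samples for _, num_samples in updates)
    if total_samples == 0:
        raise ValueError("at least one update must contain samples")
    dim = len(updates[0][0])
    return [
        sum(weights[i] * num_samples for weights, num_samples in updates) / total_samples
        for i in range(dim)
    ]


# Toy two-parameter models from two hypothetical vehicles.
vehicle_updates = [
    ([1.0, 2.0], 1),  # vehicle A trained on 1 local sample
    ([3.0, 4.0], 3),  # vehicle B trained on 3 local samples
]
print(federated_average(vehicle_updates))  # [2.5, 3.5]
```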

Technologically, the move is toward end-to-end perception models that not only detect but also understand motion dynamics. Instead of separate modules for object detection, tracking, and path prediction, these architectures unify the process, reducing latency and improving decision consistency. Some teams are even developing explainable AI frameworks to trace why an AV acted a certain way in a given situation, critical for regulatory transparency and public confidence.

What’s emerging isn’t just a smarter car, but a smarter environment: a cooperative mesh of vehicles, infrastructure, and AI systems that share responsibility for keeping people safe.

How We Can Help

Building safer autonomous systems isn’t just about algorithms; it begins with data that mirrors reality. At Digital Divide Data (DDD), our role often starts where most models struggle, in the nuance of annotation, the quality of simulation inputs, and the interpretation of behaviors that machines don’t yet fully grasp.

Our teams work across multimodal datasets that include synchronized LiDAR, radar, and camera feeds, capturing the world from multiple vantage points. It’s tedious work, but precision here is what allows perception models to tell a stroller apart from a cyclist, or to recognize when a person standing on the curb is more than just a static object. We annotate not only who is present in a scene but also what they might be doing: walking, hesitating, turning, or looking toward a vehicle. These micro-labels are often what transform an average model into one capable of predicting intent, not just position.

We also help clients align synthetic and real-world data. Simulation is powerful, but only if the digital pedestrians behave like real ones. Our teams validate and calibrate simulated VRU behavior against real datasets to ensure the resulting models don’t inherit artificial bias. This process has become increasingly important for clients building digital twins and training reinforcement-learning-based planners.

Conclusion

Autonomous vehicles’ success will ultimately depend on how well they understand human behavior. Among all the technical challenges, accurate detection of vulnerable road users remains the most consequential. The progress made in the past two years, across datasets, cooperative systems, and predictive modeling, shows that this is no longer a peripheral research topic. It sits at the very center of what it means to make autonomy safe, ethical, and socially acceptable.

Vehicles must interpret context, predict intent, and act with a level of caution that mirrors human empathy. Getting there will require more than incremental improvements in sensor fidelity or algorithmic accuracy. It will demand deeper collaboration between engineers, policymakers, ethicists, and the data specialists who ensure the world inside the model looks like the world outside the windshield.

As these systems evolve, one truth becomes clearer: autonomy is not achieved when the vehicle can drive itself, but when it can share the road responsibly with those who cannot protect themselves. Accurate VRU detection is where that responsibility begins, and where the future of safe, human-centered mobility will be decided.

Learn how DDD can strengthen your VRU detection pipelines and help your systems understand what really matters in human movement and the intent behind it.

References

Aurora Innovation. (2024). Prioritizing pedestrian safety. Aurora Tech Blog. Retrieved from https://aurora.tech/blog

Carnegie Mellon University, Safety21 Center for Connected and Automated Transportation. (2025, July). Vehicle-in-Virtual-Environment (VVE) testing for VRU safety of connected and automated vehicles. U.S. Department of Transportation University Transportation Centers Program.

Computer Networks. (2024). Modeling and evaluation of cooperative VRU protection with VAM (C-V2X). Elsevier.

Conference on Computer Vision and Pattern Recognition Workshops (CVPRW). (2025). VRU-CIPI: Crossing Intention Prediction at Intersections. IEEE.

DECICE Project. (2025). Intelligent edge computing for cooperative VRU detection: Project summary and findings. European Commission CORDIS Reports.

European New Car Assessment Programme (Euro NCAP). (2024, February). VRU protection protocols update 2024. Retrieved from https://www.euroncap.com/en

McKinsey & Company. (2025, June). The road to safer AVs in Europe: Managing urban mobility risk. McKinsey Mobility Insights.

Nexar & Waymo. (2024, November). Vulnerable Road User injury dataset and insights for autonomous perception systems. Waymo Research Publications.

Vehicular Technology Conference (VTC). (2024). PointGAN: Enhanced VRU detection in point clouds. IEEE.

FAQs

What kinds of sensors are most reliable for detecting VRUs in poor visibility conditions?

No single sensor performs best across all conditions. LiDAR handles depth and structure well, but can struggle in heavy rain or fog. Cameras offer rich color and texture but fail in low light. Increasingly, manufacturers combine thermal imaging and millimeter-wave radar with existing systems to maintain consistent detection at night or in adverse weather. The trade-off is cost and calibration complexity, which are still major barriers to large-scale deployment.

How does intent prediction differ from trajectory prediction in AV systems?

Trajectory prediction models where a VRU will move based on its current motion. Intent prediction goes a step deeper; it tries to infer why they might move, or if they plan to move at all. For example, a person standing near a crosswalk may be detected as stationary, but their posture or gaze direction might reveal an intention to step forward. This subtle shift from physics-based to behavior-aware modeling is what separates traditional perception from proactive safety.
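The contrast can be sketched in a few lines. Below, a constant-velocity model extrapolates position from current motion, while a toy intent score looks at behavioral cues instead. The cue names and the equal weighting are hypothetical placeholders; real intent models learn these relationships from annotated data.

```python
def predict_position(position, velocity, horizon_s):
    """Physics-based trajectory prediction: constant-velocity extrapolation."""
    x, y = position
    vx, vy = velocity
    return (x + vx * horizon_s, y + vy * horizon_s)


def crossing_cue_fraction(cues: dict) -> float:
    """Toy intent score: the fraction of observed cues that suggest crossing.

    Equal weighting is an assumption made for illustration only.
    """
    return sum(1 for present in cues.values() if present) / len(cues)


# A pedestrian standing still at the curb.
future = predict_position(position=(2.0, 0.0), velocity=(0.0, 0.0), horizon_s=2.0)
print(future)  # (2.0, 0.0): the trajectory model sees no movement at all

# Hypothetical cues an intent model might consider for the same pedestrian.
cues = {"facing_road": True, "gaze_on_traffic": True, "near_curb_edge": True, "using_phone": False}
print(crossing_cue_fraction(cues))  # 0.75: cues suggest a likely crossing
```

The stationary pedestrian is invisible to the first function and clearly flagged by the second, which is the gap intent prediction is meant to close.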

What’s the next frontier after detection and prediction?

The next major step is explainability: understanding why an AV interpreted a situation the way it did. As regulators demand post-incident transparency, manufacturers are developing interpretable AI pipelines that can reconstruct decision logic in human-readable terms. This isn’t just for accountability; it’s also how the public begins to trust that these systems see and understand the world in ways compatible with human judgment.