Major Techniques for Digitizing Cultural Heritage Archives

Digitization is no longer only about storing digital copies. It increasingly supports discovery, reuse, and analysis. Researchers search across collections rather than within a single archive. Images become data. Text becomes searchable at scale. The archive, once bounded by walls and reading rooms, becomes part of a broader digital ecosystem.

This blog examines the key techniques for digitizing cultural heritage archives. We will move from foundational capture methods to advanced text extraction, interoperability, metadata systems, and AI-assisted enrichment.

Foundations of Cultural Heritage Digitization

Digitizing cultural heritage is unlike digitizing modern business records or born-digital content. The materials themselves are deeply varied. A single collection might include handwritten letters, printed books, maps larger than a dining table, oil paintings, fragile photographs, audio recordings on obsolete media, and physical artifacts with complex textures.

Each category introduces its own constraints. Manuscripts may exhibit uneven ink density or marginal notes written at different times. Maps often combine fine detail with large formats that challenge standard scanning equipment. Artworks require careful lighting to avoid glare or color distortion. Artifacts introduce depth, texture, and geometry that flat imaging cannot capture.

Fragility is another defining factor. Many items cannot tolerate repeated handling or exposure to light. Some are unique, with no duplicates anywhere in the world. A torn page or a cracked binding is not just damage to an object but a loss of historical information. Digitization workflows must account for conservation needs as much as technical requirements.

There is also an ethical dimension. Cultural heritage materials are often tied to specific communities, histories, or identities. Decisions about how items are digitized, described, and shared carry implications for ownership, representation, and access. Digitization is not a neutral technical act. It reflects institutional values and priorities, whether consciously or not.

High-Quality 2D Imaging and Preservation Capture

Imaging Techniques for Flat and Bound Materials

Two-dimensional imaging remains the backbone of most cultural heritage digitization efforts. For flat materials such as loose documents, photographs, and prints, overhead scanners or camera-based setups are common. These systems allow materials to lie flat, minimizing stress.

Bound materials introduce additional complexity. Planetary scanners, which capture pages from above without flattening the spine, are often preferred for books and manuscripts. Cradles support bindings at gentle angles, reducing strain. Operators turn pages slowly, sometimes using tools to lift fragile paper without direct contact.

Camera-based capture systems offer flexibility, especially for irregular or oversized materials. Large maps, foldouts, or posters may exceed scanner dimensions. In these cases, controlled photographic setups allow multiple images to be stitched together. The process is slower and requires careful alignment, but it avoids folding or trimming materials to fit equipment.
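To make the stitching workflow concrete, here is a minimal sketch of how a capture grid for an oversized map might be planned. The function name, the millimetre units, and the 25% default overlap are illustrative assumptions, not a standard; real setups would tune the overlap to whatever their stitching software needs.

```python
import math


def plan_capture_grid(item_w_mm, item_h_mm, frame_w_mm, frame_h_mm, overlap=0.25):
    """Return (columns, rows, step_x_mm, step_y_mm) for overlapping shots.

    `overlap` is the fraction of each frame shared with its neighbour,
    which gives stitching software common detail to align on.
    """
    if not 0 <= overlap < 1:
        raise ValueError("overlap must be in the range [0, 1)")
    # Distance the camera (or the item) moves between neighbouring shots.
    step_x = frame_w_mm * (1 - overlap)
    step_y = frame_h_mm * (1 - overlap)
    # The first frame covers one full frame width; each extra frame adds one step.
    cols = max(1, math.ceil((item_w_mm - frame_w_mm) / step_x) + 1)
    rows = max(1, math.ceil((item_h_mm - frame_h_mm) / step_y) + 1)
    return cols, rows, step_x, step_y


# Hypothetical example: a 1200 x 900 mm map, with each shot covering 400 x 300 mm.
cols, rows, step_x, step_y = plan_capture_grid(1200, 900, 400, 300)
print(cols, rows)  # prints "4 4", i.e. 16 shots
```

Planning the grid up front lets operators position the item once per shot instead of improvising, which keeps handling to a minimum.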

Every handling decision reflects a balance between efficiency and care. Faster workflows may increase throughput but raise the risk of damage. Slower workflows protect materials but limit scale. Institutions often find themselves adjusting approaches item by item rather than applying a single rule.

Image Quality and Preservation Requirements

Image quality is not just a technical specification. It determines how useful a digital surrogate will be over time. Resolution affects legibility and analysis. Color accuracy matters for artworks, photographs, and even documents where ink tone conveys information. Consistent lighting prevents shadows or highlights from obscuring detail.
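Resolution choices have direct storage consequences, and a quick calculation shows how fast they add up. The sketch below assumes resolution is expressed in pixels per inch and that files are stored uncompressed; the function names are illustrative.

```python
def pixel_dimensions(width_in, height_in, ppi):
    """Pixel width and height of an item scanned at `ppi` pixels per inch."""
    return round(width_in * ppi), round(height_in * ppi)


def uncompressed_bytes(width_px, height_px, channels=3, bits_per_channel=8):
    """Raw image size in bytes, ignoring file headers and compression."""
    return width_px * height_px * channels * bits_per_channel // 8


# Hypothetical example: an 8.5 x 11 inch page in RGB.
w, h = pixel_dimensions(8.5, 11, 400)            # (3400, 4400)
size_8bit = uncompressed_bytes(w, h)             # 44,880,000 bytes
size_16bit = uncompressed_bytes(w, h, bits_per_channel=16)  # 89,760,000 bytes
print(w, h, size_8bit, size_16bit)
```

Doubling the bit depth doubles the file size, and doubling the resolution quadruples it, so these decisions are worth making deliberately per material type.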

Calibration plays a quiet but essential role. Color targets, gray scales, and focus charts help ensure that images remain consistent across sessions and operators. Quality control workflows catch issues early, before thousands of files are produced with the same flaw.
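One way a quality control step might check a captured color target is to compare measured patch values against reference values using the CIE76 color difference, which is simply the Euclidean distance in CIELAB space. The patch names, Lab values, and the tolerance of 5.0 below are placeholders for illustration; institutions set their own tolerances, and many use newer difference formulas.

```python
import math


def delta_e_76(lab1, lab2):
    """CIE76 colour difference: Euclidean distance between two (L*, a*, b*) triples."""
    return math.dist(lab1, lab2)


def check_target(measured, reference, tolerance=5.0):
    """Return the patches whose colour difference exceeds `tolerance`."""
    failures = {}
    for name, ref_lab in reference.items():
        diff = delta_e_76(measured[name], ref_lab)
        if diff > tolerance:
            failures[name] = round(diff, 2)
    return failures


# Hypothetical reference and measured values for two patches.
reference = {"white": (95, 0, 0), "red": (40, 60, 30)}
measured = {"white": (94, 1, 0), "red": (40, 66, 38)}
print(check_target(measured, reference))  # prints {'red': 10.0}
```

Running a check like this at the start of each session flags drift in lighting or camera settings before a full batch is captured.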

A common practice is to separate preservation masters from access derivatives. Preservation files are created at high resolution with minimal compression and stored securely. Access versions are optimized for online delivery, faster loading, and broader compatibility. This separation allows institutions to balance long-term preservation with practical access needs.

File Formats, Storage, and Versioning

File format decisions often seem mundane, but they shape the future usability of digitized collections. Archival formats, such as uncompressed TIFF, prioritize stability, documentation, and wide support. Delivery formats, such as JPEG, prioritize speed and compatibility with web platforms.

Equally important is how files are organized and named. Clear naming conventions and structured storage make collections manageable. They reduce the risk of loss and simplify migration when systems change. Versioning becomes essential as files are reprocessed, corrected, or enriched. Without clear version control, it becomes difficult to know which file is the most accurate or complete representation of an object.
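As an illustration, a naming convention can encode the collection, item, page, and version so that the current file is always recoverable by rule. The pattern below (`collection_item_page_vNN.ext`) is a made-up convention for this sketch, not a recommended standard.

```python
import re

# Hypothetical convention: <collection>_<5-digit item>_<4-digit page>_v<2-digit version>.<ext>
NAME_PATTERN = re.compile(
    r"^(?P<collection>[a-z0-9]+)_(?P<item>\d{5})_(?P<page>\d{4})"
    r"_v(?P<version>\d{2})\.(?P<ext>[a-z0-9]+)$"
)


def build_name(collection, item, page, version, ext):
    """Build a filename that follows the convention above."""
    return f"{collection}_{item:05d}_{page:04d}_v{version:02d}.{ext}"


def latest_versions(filenames):
    """Map each (collection, item, page, ext) to its highest-version filename."""
    latest = {}
    for name in filenames:
        match = NAME_PATTERN.match(name)
        if match is None:
            continue  # ignore files that do not follow the convention
        key = (match["collection"], match["item"], match["page"], match["ext"])
        version = int(match["version"])
        if key not in latest or version > latest[key][0]:
            latest[key] = (version, name)
    return {key: name for key, (_, name) in latest.items()}


print(build_name("maps", 12, 3, 1, "tif"))  # prints maps_00012_0003_v01.tif
```

Zero-padded numbers keep files in the right order when sorted alphabetically, and an explicit version suffix avoids ambiguous names like "final" or "new".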

Text Digitization: OCR to Advanced Text Extraction

Optical Character Recognition for Printed Materials

Optical Character Recognition, or OCR, has long been a cornerstone of text digitization. It transforms scanned images of printed text into machine-readable words. For newspapers, books, and reports, OCR enables full-text search and large-scale analysis.

Despite its maturity, OCR is far from trivial in cultural heritage contexts. Historical print often uses fonts, layouts, and spellings that differ from modern standards. Pages may be stained, torn, or faded. Columns, footnotes, and illustrations confuse layout detection. Multilingual collections introduce additional complexity.

Post-processing becomes critical. Spellchecking, layout correction, and confidence scoring help improve usability. Quality evaluation, often based on sampling rather than full review, informs whether OCR output is fit for purpose. Perfection is rarely achievable, but transparency about limitations helps manage expectations.
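A common way to put a number on OCR quality is the character error rate: the edit distance between the OCR output and a hand-checked transcription, divided by the length of that transcription. The sketch below pairs it with a reproducible random sample of pages. The function names and the fixed seed are illustrative choices.

```python
import random


def edit_distance(a, b):
    """Levenshtein distance: minimum insertions, deletions, and substitutions."""
    prev = list(range(len(b) + 1))
    for i, ch_a in enumerate(a, start=1):
        curr = [i]
        for j, ch_b in enumerate(b, start=1):
            cost = 0 if ch_a == ch_b else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def character_error_rate(ocr_text, reference_text):
    """Edit distance divided by the length of the trusted reference text."""
    if not reference_text:
        raise ValueError("reference_text must not be empty")
    return edit_distance(ocr_text, reference_text) / len(reference_text)


def sample_pages(page_ids, k, seed=0):
    """Pick up to k pages for manual review; the seed makes the sample repeatable."""
    pages = list(page_ids)
    return random.Random(seed).sample(pages, min(k, len(pages)))


print(edit_distance("kitten", "sitting"))  # prints 3
```

Reviewing only the sampled pages gives an estimate of collection-wide quality without proofreading everything, which is usually the only affordable option at scale.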

Handwritten Text Recognition for Manuscripts and Archival Records

Handwritten Text Recognition, or HTR, addresses materials that OCR cannot handle effectively. Manuscripts, letters, diaries, and administrative records often contain handwriting that varies widely between writers and across time.

HTR systems rely on trained models rather than fixed rules. They learn patterns from labeled examples. Historical handwriting poses challenges because scripts evolve, ink fades, and spelling lacks standardization. Training effective models often requires curated samples and iterative refinement.

Automation alone is rarely sufficient. Human review remains essential, especially for names, dates, and ambiguous passages. Many institutions adopt a hybrid approach where automated recognition accelerates transcription, and humans validate or correct the output. The balance depends on accuracy requirements and available resources.

Human-in-the-Loop Text Enrichment

Human involvement does not end with correction. Crowdsourcing initiatives invite volunteers to transcribe, tag, or review content. Expert validation ensures accuracy for scholarly use. Assisted transcription tools suggest text while allowing users to intervene easily.

Well-designed workflows respect both human effort and machine efficiency. Interfaces that highlight low-confidence areas help reviewers focus their time. Clear guidelines reduce inconsistency. The result is text that supports richer search, analysis, and engagement than raw images alone ever could.
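Highlighting low-confidence areas can be as simple as filtering recognized words by the confidence score the engine reports. This sketch assumes the recognition output is available as (text, confidence) pairs with confidence between 0 and 1; the 0.80 threshold is an arbitrary starting point that a project would tune.

```python
def flag_for_review(words, threshold=0.80):
    """Return (position, text, confidence) for words below the threshold."""
    return [
        (index, text, confidence)
        for index, (text, confidence) in enumerate(words)
        if confidence < threshold
    ]


# Hypothetical recognition output for one line of a letter.
line = [("Dear", 0.98), ("Mr", 0.91), ("Hathwy", 0.42), ("1847", 0.65)]
for index, text, confidence in flag_for_review(line):
    print(index, text, confidence)
```

In this made-up example the name and the date are the words flagged, which matches where human review tends to matter most.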

Interoperability and Access Through Standardized Delivery

The Need for Interoperability in Digital Heritage

Digitized collections often live on separate platforms, developed independently by institutions with different priorities. While each platform may function well on its own, fragmentation limits discovery and reuse. Researchers searching across collections face inconsistent interfaces and incompatible formats.

Isolated digital silos also create long-term risks. When systems are retired or funding ends, content may become inaccessible even if files still exist. Interoperability offers a way to decouple content from presentation, allowing materials to be reused and recontextualized without constant duplication.

Image and Media Interoperability Frameworks

Standardized delivery frameworks define how images and media are served, requested, and displayed. They enable features such as deep zoom, precise cropping, and annotation without requiring custom integrations for each collection.
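The IIIF Image API is one widely used framework of this kind, although this post does not tie itself to a specific standard. In IIIF, a single URL pattern expresses the region, size, rotation, quality, and format of the requested image. The sketch below builds such a URL; the server address and identifier are placeholders, and the `max` size keyword follows version 3 of the API (version 2 uses `full`).

```python
from urllib.parse import quote


def iiif_image_url(base_url, identifier, region="full", size="max",
                   rotation="0", quality="default", fmt="jpg"):
    """Build a URL of the form {base}/{identifier}/{region}/{size}/{rotation}/{quality}.{format}."""
    # Identifiers may contain slashes, which must be percent-encoded in the path.
    encoded_id = quote(identifier, safe="")
    return f"{base_url.rstrip('/')}/{encoded_id}/{region}/{size}/{rotation}/{quality}.{fmt}"


# Hypothetical server and identifier: crop a 512 x 512 pixel detail, scaled to 256 px wide.
url = iiif_image_url("https://example.org/iiif", "ms-001/f12",
                     region="1000,2000,512,512", size="256,")
print(url)
# prints https://example.org/iiif/ms-001%2Ff12/1000,2000,512,512/256,/0/default.jpg
```

Because the crop and scale live in the URL, any compatible viewer can request exactly the detail it needs without the institution building a custom integration.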

These frameworks support comparison across institutions. A scholar can view manuscripts from different libraries side by side, zooming into details at the same scale. Annotations created in one environment can travel with the object into another.

The same concepts increasingly extend to three-dimensional objects and complex media. While challenges remain, especially around performance and consistency, interoperability offers a foundation for collaborative access rather than isolated presentation.

Enhancing User Experience and Scholarly Reuse

For users, interoperability translates into smoother experiences. Images load predictably. Tools behave consistently. Annotations persist. For scholars, it enables new forms of inquiry. Objects can be compared across time, geography, or collection boundaries.

Public engagement benefits as well. Educators embed high-quality images into teaching materials. Curators create virtual exhibitions that draw from multiple sources. Access becomes less about where an object is held and more about how it can be explored.

Metadata and Knowledge Representation

Descriptive, Technical, and Administrative Metadata

Metadata gives digitized objects meaning. Descriptive metadata explains what an object is, who created it, and when. Technical metadata records how it was digitized. Administrative metadata governs rights, restrictions, and responsibilities. Consistency matters. Controlled vocabularies and shared schemas reduce ambiguity. They allow collections to be searched and aggregated reliably. Without consistent metadata, even the best digitized content remains difficult to find or understand.
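A record that keeps the three kinds of metadata separate, and checks a few fields against controlled vocabularies, might look like the sketch below. The field names and vocabulary terms are invented for illustration; real projects would draw on an established schema and shared vocabularies.

```python
from dataclasses import dataclass, asdict

# Hypothetical controlled vocabularies.
OBJECT_TYPES = {"manuscript", "map", "photograph", "printed book"}
RIGHTS_STATEMENTS = {"public domain", "in copyright", "undetermined"}


@dataclass
class Descriptive:
    title: str
    creator: str
    date_created: str
    object_type: str


@dataclass
class Technical:
    capture_device: str
    resolution_ppi: int
    file_format: str


@dataclass
class Administrative:
    rights_statement: str
    access_restrictions: str = "none"


@dataclass
class ObjectRecord:
    identifier: str
    descriptive: Descriptive
    technical: Technical
    administrative: Administrative

    def validation_errors(self):
        """List fields whose values fall outside the controlled vocabularies."""
        errors = []
        if self.descriptive.object_type not in OBJECT_TYPES:
            errors.append(f"unknown object_type: {self.descriptive.object_type!r}")
        if self.administrative.rights_statement not in RIGHTS_STATEMENTS:
            errors.append(f"unknown rights_statement: {self.administrative.rights_statement!r}")
        return errors


record = ObjectRecord(
    identifier="ms-001",
    descriptive=Descriptive("Letter to a colleague", "Unknown", "1847", "manuscript"),
    technical=Technical("overhead camera", 400, "tiff"),
    administrative=Administrative("public domain"),
)
print(record.validation_errors())  # prints []
print(asdict(record)["technical"]["resolution_ppi"])  # prints 400
```

Rejecting free-text values at entry time is cheaper than reconciling inconsistent terms later, when records from several collections are aggregated.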

Digitization Paradata and Provenance

Beyond describing the object itself, paradata documents the digitization process. It records equipment, settings, workflows, and decisions. This information supports transparency and trust. It helps future users assess the reliability of digital surrogates.

Paradata also aids preservation. When files are migrated or reprocessed, knowing how they were created informs decisions. What might seem excessive at first often proves valuable years later when institutional memory fades.
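Paradata can be kept as a simple append-only log, with one entry per processing step. The sketch below shows one possible shape for an entry, serialized as a line of JSON; the field names and example values are assumptions, not an established paradata schema.

```python
import json
from datetime import datetime, timezone


def paradata_event(object_id, step, operator, settings, note="", when=None):
    """Describe one processing step applied to one object."""
    if when is None:
        when = datetime.now(timezone.utc)
    return {
        "object_id": object_id,
        "step": step,
        "operator": operator,
        "settings": dict(settings),
        "note": note,
        "timestamp": when.isoformat(),
    }


def to_json_line(event):
    """Serialize an event as one line, suitable for appending to a log file."""
    return json.dumps(event, sort_keys=True)


# Hypothetical capture event with a recorded handling decision.
event = paradata_event(
    "ms-001", "capture", "operator-07",
    {"resolution_ppi": 400, "lighting": "diffuse"},
    note="Page opened to 100 degrees only; binding is fragile.",
)
print(to_json_line(event))
```

The free-text note is where decisions live. Settings explain what was done, while notes explain why.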

Knowledge Graphs and Semantic Linking

Knowledge graphs connect objects to people, places, events, and concepts. They move beyond flat records toward networks of meaning. A letter links to its author, recipient, location, and historical context. An artifact links to similar objects across collections.

Semantic linking supports richer discovery. Users follow relationships rather than isolated records. For institutions, it opens possibilities for collaboration and shared interpretation without merging databases.
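At its simplest, a knowledge graph is a set of subject, predicate, object statements that can be followed in either direction. The toy in-memory store below illustrates the idea with invented identifiers; production systems would typically use RDF and a dedicated graph database rather than a Python class.

```python
from collections import defaultdict


class TripleStore:
    """A minimal in-memory store of (subject, predicate, object) statements."""

    def __init__(self):
        self._by_subject = defaultdict(list)

    def add(self, subject, predicate, obj):
        self._by_subject[subject].append((predicate, obj))

    def objects(self, subject, predicate):
        """Follow one kind of relationship outward from a subject."""
        return [o for p, o in self._by_subject.get(subject, []) if p == predicate]

    def subjects_with(self, predicate, obj):
        """Find everything that points at `obj` through `predicate`."""
        return sorted(
            s for s, pairs in self._by_subject.items() if (predicate, obj) in pairs
        )


# Hypothetical identifiers for two letters held in different collections.
graph = TripleStore()
graph.add("letter:42", "author", "person:ada")
graph.add("letter:42", "sent_from", "place:turin")
graph.add("letter:77", "author", "person:ada")

print(graph.objects("letter:42", "sent_from"))       # prints ['place:turin']
print(graph.subjects_with("author", "person:ada"))   # prints ['letter:42', 'letter:77']
```

The second query is the interesting one: it finds both letters by the same author even though nothing in either record mentions the other.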

AI-Driven Enrichment of Digitized Archives

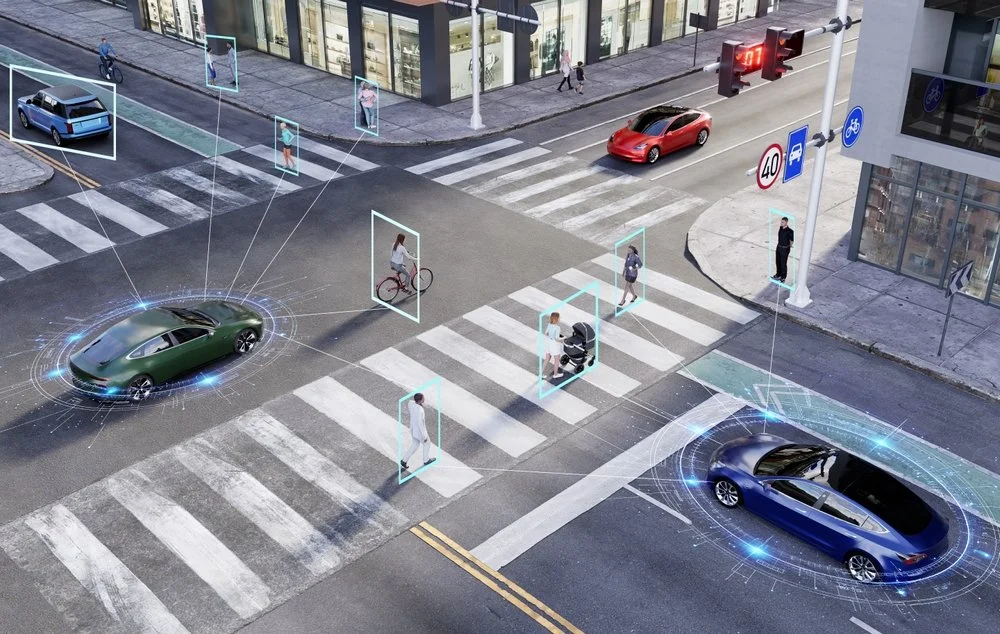

Automated Classification and Tagging

As collections grow, manual cataloging struggles to keep pace. Automated classification offers assistance. Image recognition identifies objects, scenes, or visual features. Text analysis extracts names, places, and themes. These systems reduce repetitive work, but they are not infallible. They reflect the data they were trained on and may struggle with underrepresented materials. Used carefully, they augment human expertise rather than replace it.

Multimodal Analysis Across Text, Image, and 3D Data

Increasingly, digitized archives include multiple data types. Multimodal analysis links text descriptions to images and three-dimensional models. A user searching for a location may retrieve maps, photographs, letters, and artifacts together. Cross-searching media types changes how collections are explored. It encourages connections that were previously difficult to see, especially across large or distributed archives.
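Cross-searching media types depends on records of every kind sharing some common entity, such as a place name. The sketch below builds a small index keyed by place and grouped by media type. The record structure is hypothetical, and the exact-match lookup stands in for the far more sophisticated matching that real multimodal systems perform.

```python
from collections import defaultdict


def build_place_index(records):
    """Index record ids by place name, then by media type."""
    index = defaultdict(lambda: defaultdict(list))
    for record in records:
        for place in record["places"]:
            index[place.casefold()][record["media_type"]].append(record["id"])
    return index


def search_place(index, place):
    """Return matching record ids grouped by media type."""
    hits = index.get(place.casefold(), {})
    return {media: sorted(ids) for media, ids in hits.items()}


# Hypothetical records of three different media types.
records = [
    {"id": "map-003", "media_type": "map", "places": ["Turin"]},
    {"id": "photo-118", "media_type": "photograph", "places": ["Turin", "Milan"]},
    {"id": "letter-42", "media_type": "letter", "places": ["turin"]},
]
index = build_place_index(records)
print(search_place(index, "Turin"))
```

A single query for one place returns the map, the photograph, and the letter together, which is the behavior described above.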

Ethical and Quality Considerations

AI introduces ethical questions. Bias in training data may distort representation. Automated tags may oversimplify complex histories. Context can be lost if outputs are treated as authoritative. Human oversight remains essential. Review processes, transparency about limitations, and ongoing evaluation help ensure that AI supports rather than undermines cultural understanding.

How Digital Divide Data Can Help

Digitizing cultural heritage archives demands more than technology. It requires skilled people, carefully designed workflows, and sustained quality management. Digital Divide Data supports institutions across this spectrum.

From high-volume 2D imaging and text digitization to complex OCR and handwritten text recognition workflows, DDD combines operational scale with attention to detail. Human-in-the-loop processes ensure accuracy where automation alone falls short. Metadata creation, quality assurance, and enrichment workflows are designed to integrate smoothly with existing systems.

DDD also brings experience working with diverse materials and multilingual collections. This helps institutions move beyond pilot projects toward sustainable digitization programs that support long-term access and reuse.

Partner with Digital Divide Data to turn cultural heritage collections into accessible, high-quality digital archives.

FAQs

How do institutions decide which materials to digitize first?

Prioritization often considers fragility, demand, historical significance, and funding constraints rather than aiming for comprehensive coverage at once.

Is higher resolution always better for digitization?

Not necessarily. Higher resolution increases storage and processing costs. The optimal choice depends on intended use, material type, and long-term goals.

Can digitization replace physical preservation?

Digitization complements but does not replace physical preservation. Digital surrogates reduce handling but cannot fully substitute for original materials.

How long does a digitization project typically take?

Timelines vary widely based on material condition, complexity, and scale. Planning and quality control often take as much time as capture itself.

What skills are most critical for successful digitization programs?

Technical expertise matters, but project management, quality assurance, and domain knowledge are equally important.

