By Aaron Bianchi

November 22, 2023

When it comes to artificial intelligence, computer vision is quickly gaining ground. The computer vision market is estimated to grow from $9.03 billion in 2021 to $95.08 billion in 2027.

If you run a business and want to take advantage of an AI-powered vision system in the near future, there are specific trends to keep in mind. We cover some of them below.

1. Edge Computing and Computer Vision

Definition: Edge computing typically refers to use cases where computing and data processing happen on a local device instead of in the cloud or on some other remote server. This means that you don’t need to be connected to the cloud to complete your computation.

Advantages: Computer vision requires enormous computing time and bandwidth. Complex models and large volumes of data heavily impact the overall computational power requirements.

Also, in many cases, computer vision data must be processed almost instantaneously, for example when logging a user into their phone using facial recognition.

This is where edge computing can help computer vision by reducing bandwidth, improving response times, and keeping personally identifiable information (PII) locally contained.

Examples: Facial recognition on smartphones is a major application of edge computing and computer vision. Another is analyzing products on the assembly line to detect defective items.

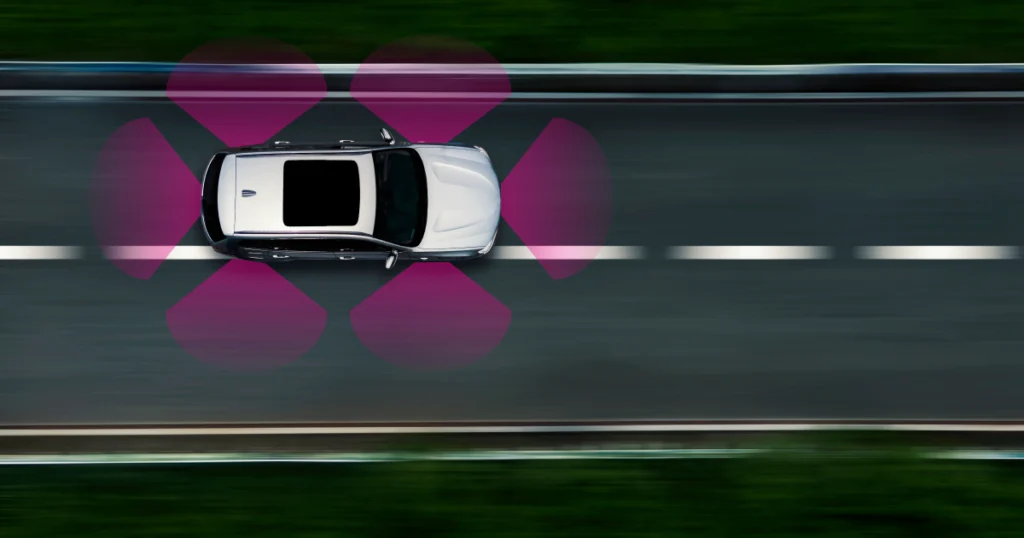

2. 3D Computer Vision

Definition: When trying to recognize objects using depth and geometry, 3D computer vision comes into play. It involves the construction of 3D objects within a machine, such as a computer.

Advantages: It provides much richer information than the typically used 2D computer vision. It also allows for manipulating said 3D object models in many ways for various purposes.

Examples: The most prominent use of 3D computer vision is in self-driving cars. AR/VR headsets, which are becoming very popular, also use 3D computer vision.

3. Natural Language Processing and Computer Vision

Definition: Natural Language Processing (NLP) enables the understanding of spoken or written language. The software learns how to string together words to communicate a prescribed message, just like humans do every single day.

Advantages: Computers are well-suited to repeatedly detect objects, recognize patterns, and communicate back what they see. They can perform these tasks consistently over time. Combined with NLP, they can then start creating accurate descriptions of pictures.

Examples: Medical images such as CT, PET, MRI, and X-ray scans are used to diagnose patients and determine the best treatment options. With computer vision and NLP, these images can be analyzed and an initial report of the findings can be generated.

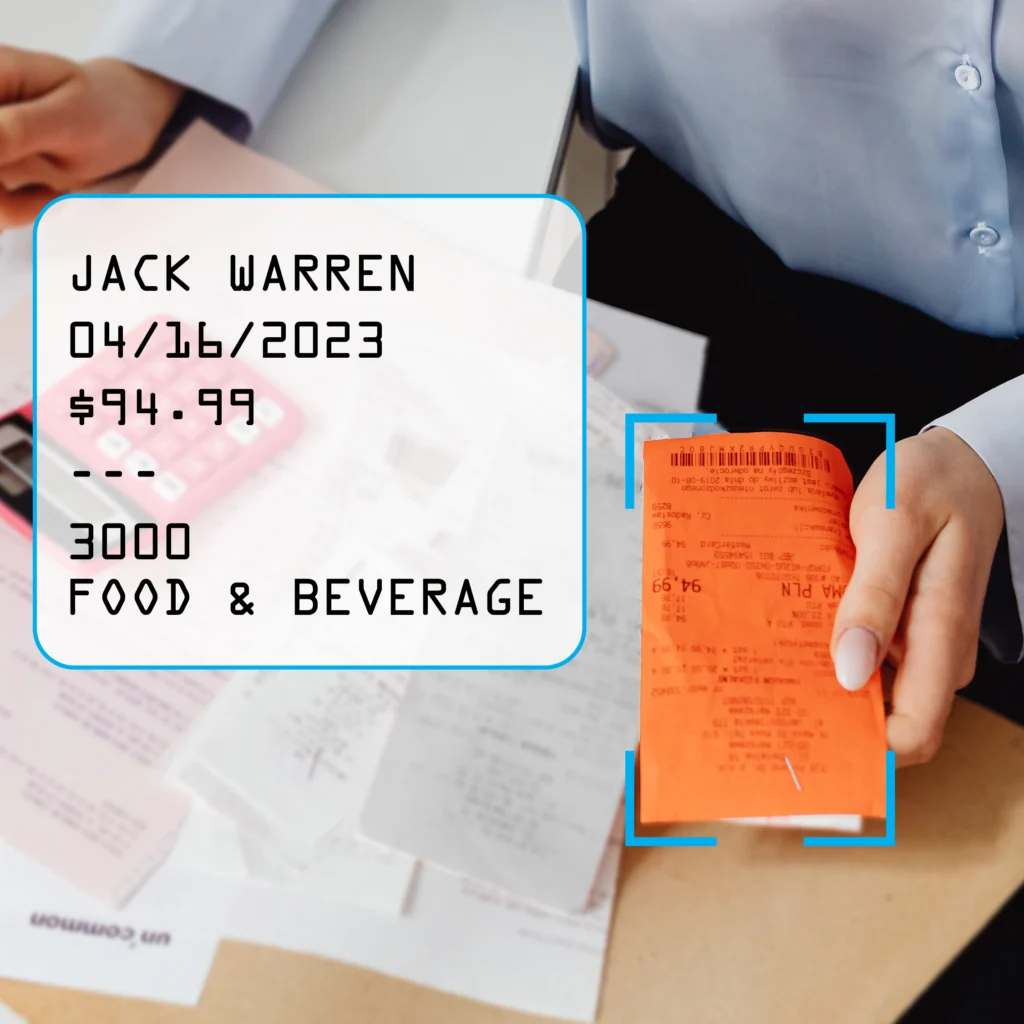

4. Image Recognition and Computer Vision

Definition: A machine can “see” images using algorithms and other techniques. It labels and categorizes the content of the picture. This is also known as image classification or image labeling.

Advantages: The machine can identify objects, people, entities, and other variables in images. This data can then be used to segment the images or filter them for various purposes.

Examples: This machine learning method is used in manufacturing to check whether labels were attached properly to items and whether items were packed correctly into boxes. This relieves pressure on customer service and the Quality Assurance team.

It is similarly applied in the pharmaceutical industry to ensure that the correct number of pills is packed and that the pills have the right color, length, and width. This way, patients don’t run out of their medication in the middle of their treatment, and medical errors involving prescription medications are reduced.

5. Object Detection and Computer Vision

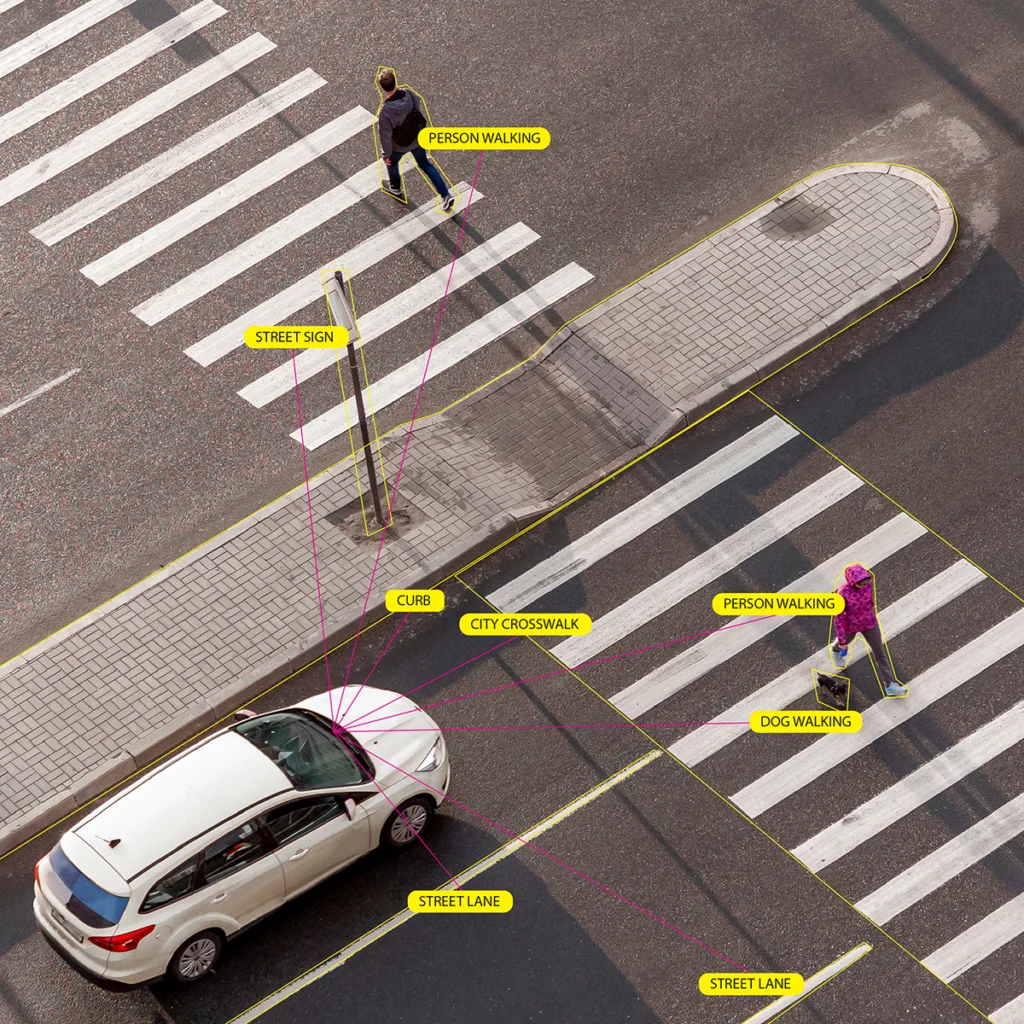

Definition: Object identification or detection is used to identify and count objects in a scene, determine and track their precise locations, and accurately label them. This can be done in an image or a video.

Advantages: It can act as an artificial extension of human perception. It can also help identify, detect, and recognize objects in our surroundings for various purposes.

Examples: You can improve security in the private sector using object detection. Businesses can monitor their premises and check for any uninvited guests at night. Combined with identification technologies, object detection can also help determine the identity of a detected person.

Parking lots also use object detection to determine parking lot occupancy and thus inform drivers which lot has more space available for them. This way, drivers aren’t driving around looking for a space in a packed lot.
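The parking example can be sketched in a few lines. This is only an illustration, not how any particular parking system works: it assumes an object detector has already returned bounding boxes for cars, and the space layout, the box coordinates, and the 0.5 overlap threshold are all made-up values. It uses intersection over union (IoU), a standard overlap measure, to decide whether a marked space is occupied.

```python
from typing import List, Tuple

# A box is (x_min, y_min, x_max, y_max) in pixel coordinates.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix_min, iy_min = max(a[0], b[0]), max(a[1], b[1])
    ix_max, iy_max = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix_max - ix_min) * max(0.0, iy_max - iy_min)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def count_free_spaces(spaces: List[Box], detected_cars: List[Box],
                      threshold: float = 0.5) -> int:
    """A space counts as occupied if any detected car overlaps it enough."""
    occupied = sum(
        1 for space in spaces
        if any(iou(space, car) >= threshold for car in detected_cars)
    )
    return len(spaces) - occupied


# Hypothetical layout: three marked spaces and two cars reported by a detector.
spaces = [(0, 0, 10, 20), (10, 0, 20, 20), (20, 0, 30, 20)]
cars = [(1, 1, 10, 19), (21, 2, 29, 20)]
free = count_free_spaces(spaces, cars)
print(f"{free} of {len(spaces)} spaces free")
```

A real deployment would also have to deal with camera angle, occlusion, and missed detections, which this sketch ignores.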

Cancer detection is another real-world application of object detection and computer vision.

6. Facial Recognition and Computer Vision

Definition: This technology is used by computers and machines to match images containing people’s faces with their identities. They do this by detecting facial features in images and then comparing them against various databases.

Advantages: Facial recognition has become one of the most widely used computer vision applications.

Examples: Google Photos and Facebook use facial recognition to determine who’s in a photo and then label them with the person’s name in just one click.

This application is also used by customs at country borders to identify people and match them with their passports.

Google Maps uses face detection for privacy purposes, blurring out any faces in Street View images.
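One common way to implement the comparison step is to reduce each face to a numeric feature vector (often called an embedding) and look for the closest stored vector. The sketch below assumes such vectors already exist. The three-number vectors, the names, and the 0.9 threshold are invented for illustration, and real systems use much longer vectors produced by a trained model.

```python
import math
from typing import Dict, List, Optional


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def identify(query: List[float], database: Dict[str, List[float]],
             threshold: float = 0.9) -> Optional[str]:
    """Return the best-matching name, or None if nothing is similar enough."""
    best_name, best_score = None, threshold
    for name, embedding in database.items():
        score = cosine_similarity(query, embedding)
        if score >= best_score:
            best_name, best_score = name, score
    return best_name


# Hypothetical database of known faces (toy 3-dimensional feature vectors).
database = {"alice": [0.9, 0.1, 0.3], "bob": [0.1, 0.8, 0.5]}

match = identify([0.88, 0.12, 0.31], database)   # close to alice's vector
unknown = identify([-0.5, 0.2, -0.7], database)  # unlike anyone stored
print(match, unknown)
```

Returning `None` below the threshold matters in practice, because otherwise every stranger would be matched to whichever stored face happens to be nearest.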

7. Data Labeling and Computer Vision

Definition: This is when you add tags to raw data, such as images and videos. Each tag is associated with predetermined object classes in the data. Thus, unclassified data can soon have a semblance of organization and categorization using data labeling.

Remember that most of the world’s data is unlabeled. So, AI and machines would have no idea what these images contain without computer vision and data labeling.
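To make the idea of tags and predetermined object classes concrete, here is a minimal sketch of what labeled image data might look like in code. The class list, file names, and box coordinates are hypothetical, and real labeling tools each have their own formats.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List, Tuple

# Predetermined object classes (hypothetical for this example).
CLASSES = ("car", "pedestrian", "bicycle")


@dataclass
class BoxLabel:
    class_name: str
    box: Tuple[int, int, int, int]  # (x_min, y_min, x_max, y_max) in pixels

    def __post_init__(self) -> None:
        # Reject tags that are not one of the predetermined classes.
        if self.class_name not in CLASSES:
            raise ValueError(f"unknown class: {self.class_name}")


@dataclass
class LabeledImage:
    filename: str
    labels: List[BoxLabel] = field(default_factory=list)


def class_counts(dataset: List[LabeledImage]) -> Counter:
    """Count how many tagged objects of each class the dataset contains."""
    return Counter(
        label.class_name for image in dataset for label in image.labels
    )


dataset = [
    LabeledImage("street_001.jpg", [
        BoxLabel("car", (34, 50, 120, 110)),
        BoxLabel("pedestrian", (200, 40, 230, 130)),
    ]),
    LabeledImage("street_002.jpg", [BoxLabel("car", (10, 60, 90, 115))]),
    LabeledImage("street_003.jpg"),  # raw image that has not been tagged yet
]
counts = class_counts(dataset)
print(counts)
```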

Advantages: With automated data labeling, you can segment and tag images or videos in seconds, rather than the hours it traditionally takes humans. This makes the whole process cheaper and more lucrative in general.

Examples: These labeled images are used to train AI and machine learning models, which then become better at labeling and identifying objects within photos and videos. Over time, such models can recognize objects on their own with little or no help from humans.

8. Semi-supervised Learning and Computer Vision

Definition: This machine learning technique uses both labeled and unlabeled data for learning, hence the term “semi-supervised learning.” In one common approach, a model trained on the labeled data generates pseudo-labels for the unlabeled examples, which lets it benefit from a large amount of unlabeled data.

In many computer vision techniques (object detection is one), machines use supervised learning algorithms to learn how to identify objects in images. But in semi-supervised learning, a predictive model is created using some labeled data and lots of unlabeled data.
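Pseudo-labeling can be illustrated with a toy self-training loop. This is a simplified sketch rather than a production method: the "images" are just 2D points, the classifier is a nearest-centroid rule, and the confidence margin of 1.0 is an arbitrary choice. The loop trains on the labeled data, assigns pseudo-labels to unlabeled points it is confident about, and retrains with those points included.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def centroids(labeled: List[Tuple[Point, str]]) -> Dict[str, Point]:
    """Average position of the points belonging to each class."""
    sums: Dict[str, Tuple[float, float, int]] = {}
    for (x, y), label in labeled:
        sx, sy, n = sums.get(label, (0.0, 0.0, 0))
        sums[label] = (sx + x, sy + y, n + 1)
    return {label: (sx / n, sy / n) for label, (sx, sy, n) in sums.items()}


def predict(point: Point, cents: Dict[str, Point]) -> Tuple[str, float]:
    """Return (label, margin). Needs at least two classes.

    margin = distance to the second-closest centroid minus the closest one,
    so a larger margin means a more confident prediction.
    """
    dists = sorted((math.dist(point, c), label) for label, c in cents.items())
    (d1, label), (d2, _) = dists[0], dists[1]
    return label, d2 - d1


def self_train(labeled, unlabeled, min_margin=1.0, rounds=3):
    labeled, remaining = list(labeled), list(unlabeled)
    for _ in range(rounds):
        cents = centroids(labeled)
        confident, uncertain = [], []
        for p in remaining:
            label, margin = predict(p, cents)
            (confident if margin >= min_margin else uncertain).append((p, label))
        if not confident:
            break  # nothing new to learn from
        labeled.extend(confident)  # pseudo-labels join the training set
        remaining = [p for p, _ in uncertain]
    return centroids(labeled), remaining


# Two labeled examples and five unlabeled ones (made-up data).
labeled = [((0, 0), "cat"), ((10, 10), "dog")]
unlabeled = [(1, 0), (0, 2), (9, 11), (11, 9), (5, 5)]
model, still_unlabeled = self_train(labeled, unlabeled)
print(model, still_unlabeled)
```

The point at (5, 5) sits between the two classes, so it never clears the confidence margin and stays unlabeled. Leaving ambiguous examples out is what keeps wrong pseudo-labels from polluting the training set.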

Advantages: Semi-supervised learning can improve the generalization and performance of the model over time. In many scenarios, fully labeled data isn’t available.

In such cases, semi-supervised learning can achieve strong results even with only a fraction of the data labeled. Labeling is expensive, so semi-supervised learning can also help businesses save on costs when dealing with unlabeled data.

Examples: Google uses semi-supervised learning to rank and label web pages in search results. Image and video analysis is also done using semi-supervised learning, as much of this data is unlabeled.

9. Transfer Learning and Computer Vision

Definition: This is a machine learning method where you reuse a pre-trained model as the starting point for a model on a new task. A model trained on one task will be repurposed and reused for a second task. The second task has to be related to the first one, as that allows for optimization and rapid progress on the second task.

Advantages: Significant progress can be made on related tasks using only a pre-trained model and a small amount of data. This saves not only time but also the resources allocated to these models.

The machines don’t require training from scratch, which is computationally expensive. You don’t need large amounts of data with transfer learning, either. You can achieve better results with a small data set.
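The idea can be shown with a deliberately tiny sketch. It is not a real vision model: `PRETRAINED_WEIGHTS` is a hypothetical stand-in for a layer learned on an earlier, larger task, and the four training examples are made up. The pre-trained layer is kept frozen, and only a small new output layer (the "head") is trained on the new task, which is one common form of transfer learning.

```python
import math
from typing import List, Tuple

# HYPOTHETICAL weights standing in for a layer trained on a previous task.
PRETRAINED_WEIGHTS = [[1.0, -1.0], [0.5, 0.5]]


def frozen_features(x: List[float]) -> List[float]:
    """Reused pre-trained layer (linear map + ReLU). Never updated here."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x)))
            for row in PRETRAINED_WEIGHTS]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_head(data: List[Tuple[List[float], int]],
               epochs: int = 500, lr: float = 0.5):
    """Train only the new head (logistic regression) on the frozen features."""
    head, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, y in data:
            f = frozen_features(x)
            p = sigmoid(sum(h * fi for h, fi in zip(head, f)) + bias)
            err = p - y  # gradient of the log loss with respect to the logit
            head = [h - lr * err * fi for h, fi in zip(head, f)]
            bias -= lr * err
    return head, bias


def predict(x: List[float], head: List[float], bias: float) -> bool:
    f = frozen_features(x)
    return sigmoid(sum(h * fi for h, fi in zip(head, f)) + bias) >= 0.5


# Small data set for the new task: label 1 when the first value is larger.
small_dataset = [([2.0, 0.0], 1), ([3.0, 1.0], 1),
                 ([0.0, 2.0], 0), ([1.0, 3.0], 0)]
head, bias = train_head(small_dataset)
print(predict([4.0, 1.0], head, bias), predict([1.0, 4.0], head, bias))
```

Only three numbers (two head weights and a bias) are learned here, which is why four examples are enough. In a real project the frozen part would be a large network pre-trained on millions of images.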

Examples: Tech companies like Microsoft, IBM, Nvidia, and AWS use transfer learning toolkits. This helps eliminate the need to build models from scratch every single time. It saves them time and money in the long run.

Noise removal from images is another application of transfer learning. A model that has already learned the patterns found in familiar images can be reused to tell image content apart from noise.

10. Synthetic Data in Computer Vision

Definition: In the realm of computer vision, synthetic data refers to artificially generated visual information that replicates real-world scenarios. It involves creating images or videos through algorithms and simulations to train and improve computer vision models.

Advantages: Synthetic data plays a pivotal role in enhancing the performance of computer vision systems. One key advantage lies in the augmentation of training datasets. By generating diverse synthetic images, models can be exposed to a broader range of scenarios, leading to improved generalization when applied to real-world situations.

Moreover, synthetic data helps overcome limitations associated with the availability of labeled datasets. Annotated real-world data for specific tasks may be scarce, but synthetic data allows for the creation of labeled examples, facilitating more robust model training.
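A short sketch shows why synthetic examples come with labels for free. The generator below is a toy assumption, far simpler than the simulators used in practice: it draws one bright rectangle at a random position on a small noisy grayscale grid. Because the program placed the rectangle itself, it knows the exact bounding box and no human annotation is needed.

```python
import random
from typing import List, Tuple

Image = List[List[int]]          # grayscale pixels, 0 to 255
Box = Tuple[int, int, int, int]  # (x_min, y_min, x_max, y_max), max exclusive


def make_sample(rng: random.Random, size: int = 16) -> Tuple[Image, Box]:
    """Generate one synthetic image and its bounding-box label."""
    width, height = rng.randint(3, 6), rng.randint(3, 6)
    x, y = rng.randint(0, size - width), rng.randint(0, size - height)
    brightness = rng.randint(128, 255)

    # Dim random background noise, so the images are not all identical.
    image = [[rng.randint(0, 40) for _ in range(size)] for _ in range(size)]

    # Draw the "object" as a bright filled rectangle.
    for row in range(y, y + height):
        for col in range(x, x + width):
            image[row][col] = brightness

    # The label is known exactly because we chose where to draw.
    return image, (x, y, x + width, y + height)


rng = random.Random(0)  # fixed seed so the data set is reproducible
dataset = [make_sample(rng) for _ in range(100)]

image, box = dataset[0]
print("first label:", box)
```

Varying the position, size, brightness, and background is a simple stand-in for the diverse scenarios that a real simulator would render.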

The cost-effectiveness of synthetic data generation is another notable advantage. Acquiring and annotating large datasets can be resource-intensive, while synthetic data offers a more economical solution without compromising the quality of model training.

Examples: In autonomous vehicle development, synthetic data is extensively used to simulate various driving conditions. This enables training computer vision models to recognize and respond to diverse scenarios such as adverse weather, complex traffic situations, and rare events, contributing to the safety and reliability of autonomous systems.

For facial recognition technology, synthetic data aids in training models to recognize faces across different demographics and under varying lighting conditions. This ensures that the algorithm performs effectively in real-world scenarios, minimizing biases and improving overall accuracy.

In essence, synthetic data emerges as a valuable asset in the evolution of computer vision, propelling advancements in technology by broadening the scope of training datasets and addressing challenges associated with real-world data limitations.

11. Generative AI in Computer Vision: Transforming Visual Understanding

Definition: Generative AI in computer vision refers to the utilization of algorithms that can create and enhance visual content. These algorithms go beyond recognizing existing patterns and instead generate new images or modify existing ones. This dynamic approach enhances the capabilities of computer vision systems, allowing them to adapt to a broader range of scenarios.

Advantages: The integration of generative AI into computer vision brings forth several advantages. One notable benefit is the ability to generate synthetic data for training models. By creating diverse visual scenarios, generative AI aids in building robust computer vision models that can effectively handle a variety of real-world situations.

Another advantage lies in image synthesis and enhancement. Generative AI algorithms can transform low-resolution images into high-resolution counterparts, improve image quality, and even fill in missing visual information. This proves invaluable in applications such as medical imaging, where enhanced visuals contribute to more accurate diagnoses.

Examples: In autonomous vehicles, generative AI is employed to simulate and augment visual data. This includes creating realistic scenarios such as different weather conditions, diverse landscapes, and challenging road situations. This synthetic data enhances the training of computer vision models, ensuring they can navigate effectively in the complexities of the real world.

For facial recognition systems, generative AI contributes to the generation of facial images across various demographics and expressions. This broadens the scope of training datasets, leading to more inclusive and accurate algorithms capable of recognizing faces in diverse contexts.

Generative AI in computer vision exemplifies the fusion of artificial intelligence and visual understanding, pushing the boundaries of what these systems can achieve and adapt to in an ever-evolving technological landscape.

12. Detecting Deepfakes for Computer Vision: Safeguarding Businesses

Definition: Detecting deepfakes in computer vision involves the use of advanced algorithms and techniques to identify manipulated or synthetic visual content. Deepfakes are digitally altered images or videos that can deceive viewers by realistically depicting events that never occurred or people doing things they never did. Businesses utilize detection methods to ensure the authenticity of visual content in various applications.

Advantages: The ability to detect deepfakes is paramount for businesses in preserving trust, credibility, and security. In sectors like media, finance, and e-commerce, where visual content plays a crucial role, ensuring the authenticity of images and videos is essential. By implementing deepfake detection in computer vision systems, businesses can mitigate the risks associated with misinformation, fraud, and reputational damage.

Moreover, industries relying on video conferencing and online communication platforms benefit from deepfake detection to prevent malicious activities. This safeguards sensitive information, maintains the integrity of communications, and protects against potential threats to organizational security.

Examples: In the entertainment industry, where the use of celebrities in advertisements is prevalent, deepfake detection is vital. Businesses can employ computer vision algorithms to verify the authenticity of celebrity endorsements and promotional content, preventing the spread of misleading information.

Financial institutions leverage deepfake detection to secure transactions and prevent fraudulent activities. By ensuring the legitimacy of visual data in identity verification processes, businesses can enhance the overall security of their operations and protect both clients and the organization itself.

Detecting deepfakes in computer vision is an indispensable tool for businesses, offering a proactive approach to maintaining trust, security, and the reliability of visual content in an increasingly digital and interconnected world.

13. Ethical Computer Vision for Businesses: Navigating the Digital Landscape Responsibly

Definition: Ethical computer vision for businesses entails the responsible development, deployment, and use of computer vision technologies. It involves ensuring that these systems adhere to ethical principles, respect privacy, avoid biases, and contribute positively to society.

Advantages: Embracing ethical considerations in computer vision provides businesses with several advantages. Firstly, it fosters trust among users and customers. By prioritizing privacy and transparency, businesses can build stronger relationships with their clientele, assuring them that their data and interactions are handled with integrity.

Ethical computer vision also mitigates the risk of bias in algorithms, ensuring fair and unbiased decision-making processes. This is particularly crucial in sectors like hiring and finance, where biased algorithms can perpetuate societal inequalities. By prioritizing ethical practices, businesses contribute to a more inclusive and just technological landscape.

Examples: In recruitment, businesses can use ethical computer vision to ensure fairness and impartiality. By removing demographic identifiers from resumes and employing algorithms that focus solely on skills and qualifications, companies can avoid perpetuating biases and promote diversity in hiring processes.

Retail businesses can implement ethical computer vision in surveillance systems by being transparent about data collection and usage. This includes informing customers about the presence of security cameras and clearly outlining how their data is handled, fostering a sense of security without compromising privacy.

In healthcare, businesses can use ethical computer vision to ensure patient confidentiality. By implementing robust security measures and anonymizing patient data, healthcare organizations can harness the benefits of computer vision for diagnostics and treatment planning without compromising sensitive information.

Embracing ethical considerations in computer vision is not just a moral imperative but a strategic move for businesses, fostering trust, fairness, and societal well-being in an increasingly digitized world.

14. Satellite Computer Vision for Businesses: Gaining Insights from Above

Definition: Satellite computer vision for businesses involves the utilization of advanced imaging and analysis techniques applied to satellite imagery. This technology enables businesses to extract valuable insights, monitor environmental changes, and make informed decisions based on high-resolution satellite data.

Advantages: The integration of satellite computer vision offers businesses a plethora of advantages. One primary benefit is the ability to gather geospatial information on a large scale. Industries such as agriculture, urban planning, and environmental monitoring can leverage this data to optimize resource allocation, plan infrastructure development, and track changes in land use over time.

Cost-effectiveness is another key advantage. Instead of relying on ground-based surveys or physical reconnaissance, businesses can utilize satellite computer vision to obtain real-time data and insights without the need for extensive fieldwork. This streamlined approach enhances efficiency and reduces operational costs.

Examples: In agriculture, businesses leverage satellite computer vision to monitor crop health, assess soil conditions, and optimize irrigation. This data-driven approach enhances precision farming practices, leading to increased yields and sustainable agricultural practices.

Urban planning and development benefit from satellite computer vision, which provides detailed information on infrastructure, population density, and land use. This data aids businesses and city planners in making informed decisions regarding zoning, transportation, and sustainable development.

The energy sector utilizes satellite computer vision for monitoring pipelines, assessing the environmental impact of energy projects, and identifying potential risks. This proactive approach enhances safety measures and contributes to responsible and sustainable energy practices.

Satellite computer vision empowers businesses with a bird’s-eye view, enabling them to make strategic decisions, enhance operational efficiency, and contribute to environmentally conscious practices in an ever-evolving global landscape.