By Aaron Bianchi

Sep 20, 2022

“Check all the images that contain traffic lights.”

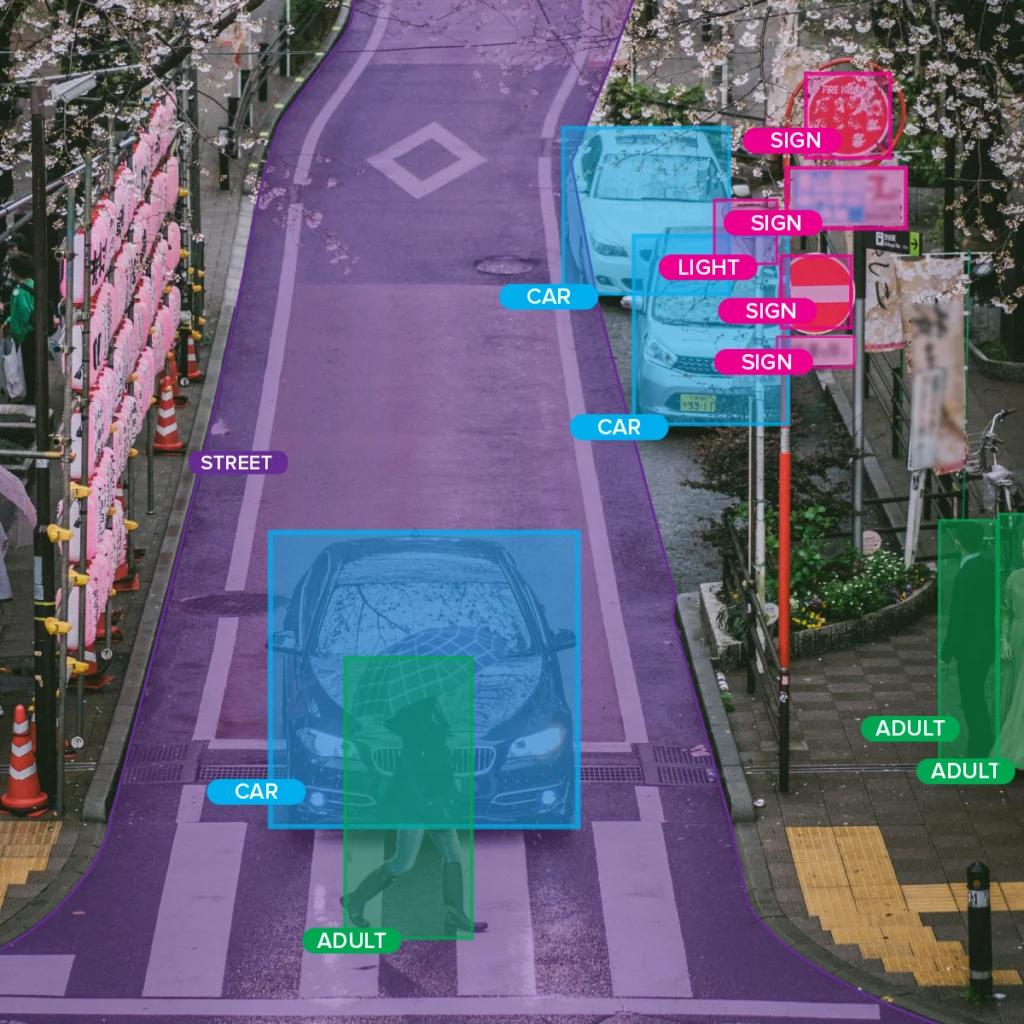

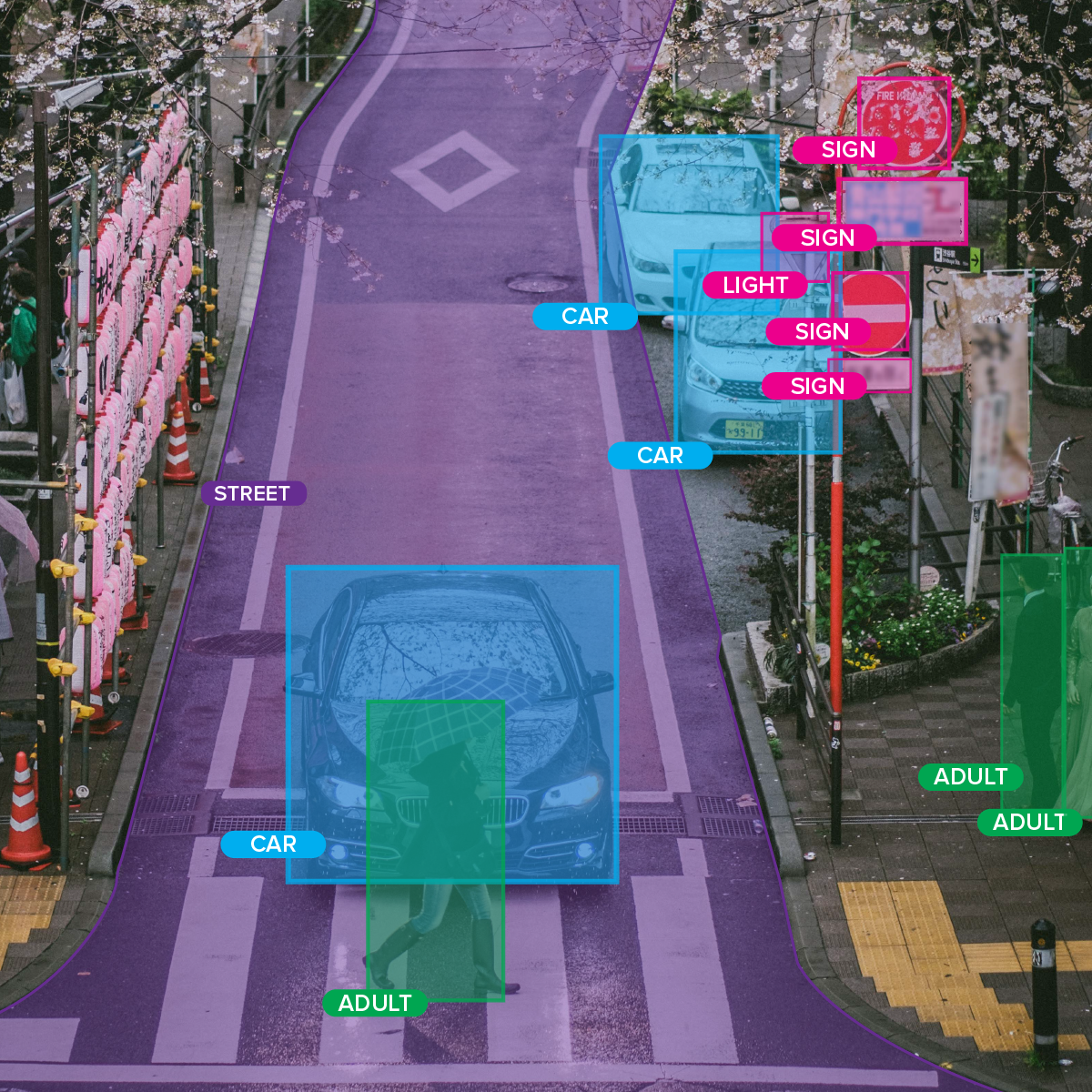

For some, these increasingly difficult CAPTCHAs are a source of endless frustration. But they give us something interesting to consider. If we prove that we are human by correctly identifying objects, how can a computer check our work? The answer lies in a domain of artificial intelligence called machine learning (ML).

Before CAPTCHA pictures get to you, data scientists train computers to recognize objects by providing lots of labeled examples (training sets). If you’re wondering where those training sets come from, you’re asking the right question: they come from a process called data annotation, also known as data labeling.

Then, a model is developed to recognize specific objects. If the model is good, the computer can use it to identify the same objects in new pictures.

Artificial intelligence can’t create working models without well-labeled training data sets. “Garbage in, garbage out” has always been the rule of thumb.

1. We Get the Big Picture

Imagine that you could talk to a computer to teach it new things. If you wanted to teach this computer to recognize a pest that is disrupting your crop yield, how might you approach this?

Chances are, you’d show it some pictures of the pests you are interested in spotting and say, “Hey computer, look for these!”

Machine learning works in the same way. Data annotation is like gathering the pictures you would like to show the computer and circling the important parts.

Unlike the computer, we understand the end goal of the model. We’ve likely defined its use case, or at least understand it. As humans, understanding how the entire process works gives us an advantage when developing a data annotation strategy.

For instance, you can use your judgment to pick out a picture that wouldn’t be the best to include in the set. In this way, you’re telling the computer, “This isn’t a great example; let’s move on to a different one.”

This type of human judgment is something artificial intelligence cannot yet replicate. Because people understand what the data means, they bring a flexibility that produces stronger outcomes than automated training set preparation alone.

2. We Are Natural Language Processors

Natural Language Processing, or NLP, is the branch of artificial intelligence working to make computers understand human language, both spoken and written. We interact with NLP almost every day through “smart” devices.

“Hey Alexa, tell me more about Natural Language Processing.”

Like other areas of machine learning, NLP requires large training data sets. One type of data set consists of transcribed audio to train AI to turn speech into text. Another data set contains large amounts of text with annotations to highlight specific areas.

Both need humans to curate and pre-process the data before moving forward. As humans, we have an obvious advantage: we create and use language constantly. Human-powered data annotation for NLP is a great way to optimize model development.
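The annotated-text data set described above can be pictured as plain text plus a list of labeled character spans. The following is only a minimal sketch: the `annotate` helper, the `PEST` and `CROP` labels, and the example sentence are all made up for illustration, and real annotation tools use their own formats.

```python
def annotate(text, phrase, label):
    """Return a span annotation marking where `phrase` first occurs in `text`."""
    start = text.find(phrase)
    if start == -1:
        raise ValueError(f"{phrase!r} not found in text")
    # Store character offsets so the highlighted area can be recovered later.
    return {"start": start, "end": start + len(phrase), "label": label}


# Hypothetical example sentence and labels, chosen only for illustration.
text = "Aphids were spotted on the tomato plants near the north fence."
spans = [
    annotate(text, "Aphids", "PEST"),
    annotate(text, "tomato plants", "CROP"),
]

for span in spans:
    # Slice the text with the stored offsets to show what was highlighted.
    print(span["label"], "->", text[span["start"]:span["end"]])
```

In practice a person, not a string search, decides which phrases to mark; the code only shows how that human decision might be recorded.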

The applications of NLP are endless. Sentiment analysis helps companies mine affective states or moods from customer messages and feedback. NLP can also break down language barriers in unprecedented ways. This means people can communicate about weather patterns or pest attacks in real time using different languages!

3. The Promise of Innovation

With so many advances in artificial intelligence and machine learning, we can be sure that our work is only getting started. AI won’t innovate itself, and researchers in computer science are the ones moving the field forward.

Of course, thinking about the importance of humans in the data preparation process does not diminish the role of technology. New software solutions for machine learning enter the market daily, and human innovation is needed to translate theoretical advances into practice.

An essential part of assembling a data annotation strategy is determining which tools to use and when to use them. Seasoned professionals draw on their experience to select the right tools for specific situations.

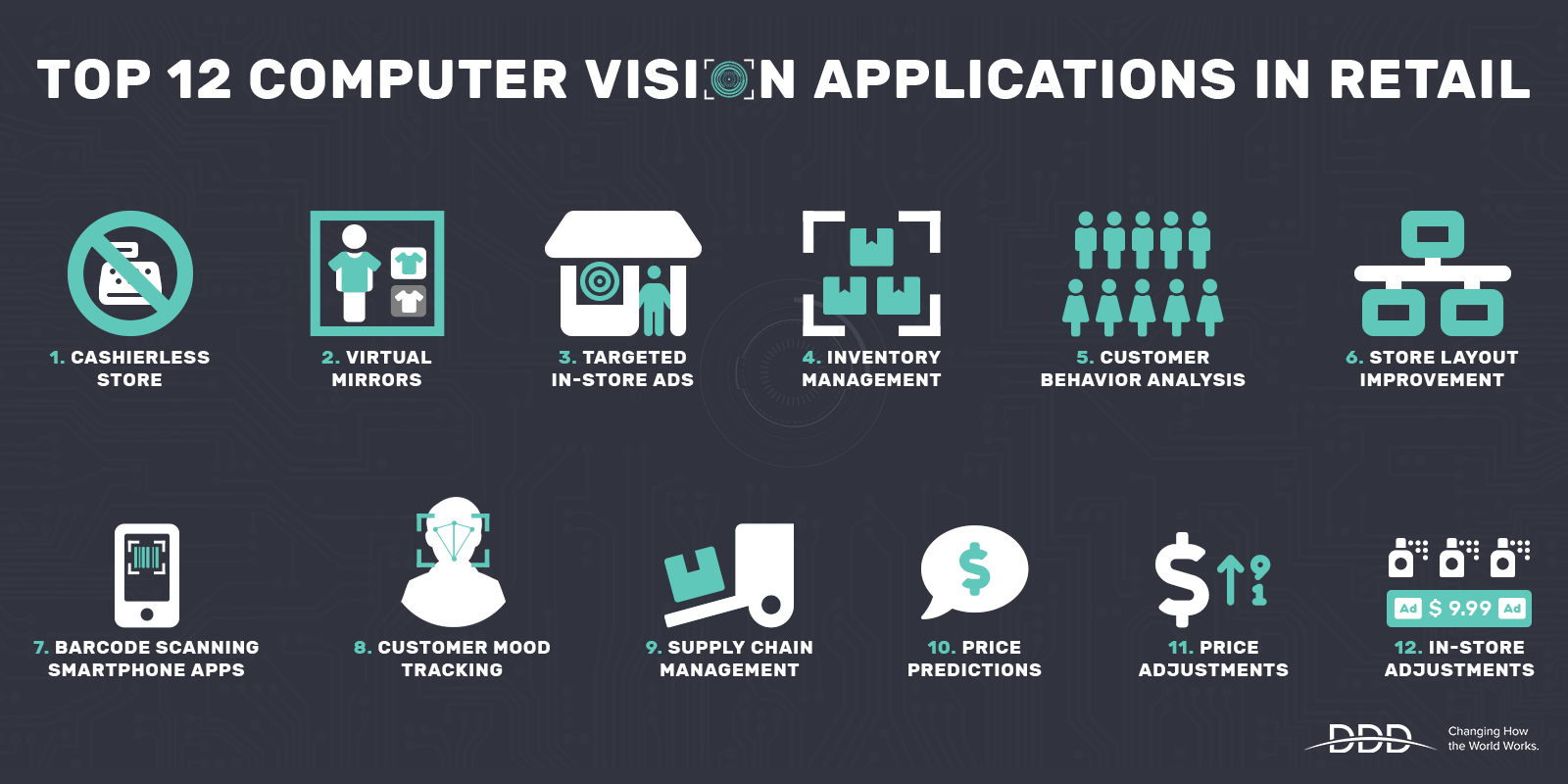

With so much raw data available in the agricultural tech industry, companies realize that the best solution is often a combination of software tools. Check out how machine learning has use cases across industries.

4. Data Annotation Professionals See the Process Through

Data can be messy. And let’s be honest: humans can be messy too! In the case of machine learning, this shared characteristic works to our advantage.

We need workers to clean data, address inconsistencies, and format data in a way that works for training AI. We use the term “data wrangling” to describe this process. Although “wrangling” may seem like a harsh term, it captures the actual amount of effort needed to prep data before use.
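To make “data wrangling” a little more concrete, here is a minimal sketch of the three steps named above: cleaning, addressing inconsistencies, and formatting. The record layout, the file names, and the label mapping are assumptions invented for this example, not a description of any particular provider’s pipeline.

```python
# Hypothetical mapping from inconsistent label spellings to one canonical label.
CANONICAL_LABELS = {
    "aphid": "aphid",
    "aphids": "aphid",
    "healthy": "healthy",
    "ok": "healthy",
}


def wrangle(records):
    """Normalize labels and drop incomplete, unknown, or duplicate records."""
    seen_images = set()
    cleaned = []
    for record in records:
        image = (record.get("image") or "").strip()
        label = (record.get("label") or "").strip().lower()
        if not image or label not in CANONICAL_LABELS:
            continue  # missing image name or unrecognized label
        if image in seen_images:
            continue  # keep only the first record for each image
        seen_images.add(image)
        cleaned.append({"image": image, "label": CANONICAL_LABELS[label]})
    return cleaned


# Made-up raw records with typical problems: stray spaces, casing, duplicates.
raw = [
    {"image": "field_001.jpg", "label": " Aphids "},
    {"image": "field_001.jpg", "label": "aphid"},
    {"image": "field_002.jpg", "label": "OK"},
    {"image": "", "label": "aphid"},
    {"image": "field_003.jpg", "label": "???"},
]

cleaned = wrangle(raw)
for row in cleaned:
    print(row)
```

Records that are silently dropped here would, in a real project, more likely be sent back to a person for review.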

Part of the benefit of using a data annotation provider is that they can help you through the entire process. This includes:

- data creation or collection
- data cleaning and curation
- data labeling or annotation

Consider using artificial intelligence to detect potential disease in a large field of crops by periodically analyzing photos of those crops. This is likely a massive undertaking for an organization. First, you need enough data to compile a training data set.

Once you’ve created a clean training data set for supervised learning, the story isn’t over.

Human intervention is needed to assess how well the AI can correctly identify diseased crops in the future. In situations where the machine cannot perform accurately, people need to determine the parameters of a new training set. Then, the process repeats, once again under human supervision.
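One way to picture this review loop is to compare the model’s predictions against labels assigned by people and flag the categories where the model falls short. This is only a sketch under stated assumptions: the function name, the 0.8 accuracy threshold, and the sample labels are hypothetical, and a real evaluation would use far more data and richer metrics.

```python
from collections import defaultdict


def classes_needing_review(human_labels, model_predictions, min_accuracy=0.8):
    """Return labels whose per-class accuracy falls below `min_accuracy`."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, predicted in zip(human_labels, model_predictions):
        total[truth] += 1
        if truth == predicted:
            correct[truth] += 1
    # Classes below the threshold are candidates for a new training set.
    return sorted(
        label for label in total if correct[label] / total[label] < min_accuracy
    )


# Made-up labels: what people saw in six photos vs. what the model predicted.
human = ["diseased", "diseased", "healthy", "healthy", "healthy", "diseased"]
model = ["diseased", "healthy", "healthy", "healthy", "healthy", "healthy"]

flagged = classes_needing_review(human, model)
print(flagged)
```

Deciding what goes into the next training set for a flagged class remains a human call; the code only points to where attention is needed.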

Harness the Power of Data Annotation

With machine learning driving global industries forward, organizations need access to high-quality training sets. Organizations might not have in-house resources to handle data annotation at scale.

Fortunately, Digital Divide Data offers across-the-board support to get companies to the finish line, no matter where they start. As a non-profit organization, DDD is challenging the industry’s status quo with impact sourcing, youth outreach, and more.

To get started, see how DDD’s suite of fully managed services (CV, NLP, Data and Content) can exceed your expectations.

Copyright © 2022 • DDD • All Rights Reserved