How Image Segmentation and AI is Revolutionizing Traffic Management

By Umang Dayal

July 26, 2024

As cities grow larger and more crowded, managing their traffic has become increasingly difficult. Traffic accidents, air pollution, and inefficient transportation systems are driving up economic and social costs.

To address these concerns, many cities have established traffic surveillance systems built on Intelligent Transportation System (ITS) technologies.

For example, the United States operates advanced traffic control systems in most urban areas. Its Traffic Management Centers (TMCs) use digital video cameras to monitor traffic conditions, along with systems that control freeway ramp meters and keep lanes vacant for emergencies.

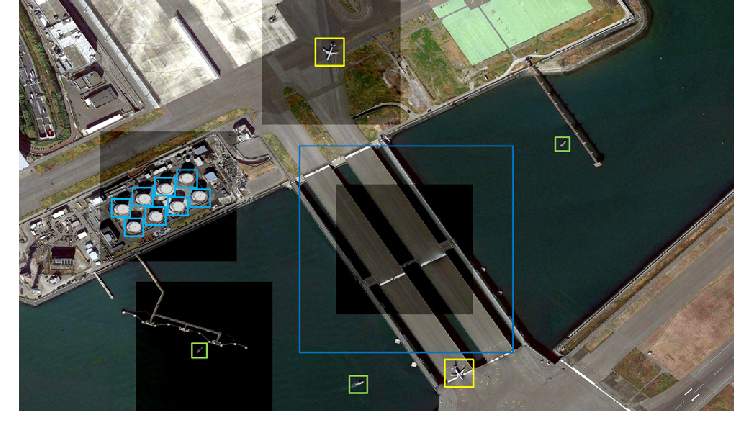

Various AI vision-based ITS solutions have been developed to automate the detection, tracking, and classification of road users and traffic events.

Understanding Image Segmentation in Traffic Management

The challenges of traffic management cannot all be solved simply by building a better object detection system. The vast majority of the vehicles that need to be tracked in real time are stationary ones that have just parked at the roadside. In addition, most object detection systems carry no information about the spatial occupancy of parking positions.
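To make the point about spatial occupancy concrete, here is a minimal sketch of how a pixel-level segmentation mask could be turned into an occupancy figure for a parking position. The function names, the rectangular slot format, and the 0.5 threshold are all assumptions made for illustration; they are not taken from any particular system.

```python
from typing import Dict, List, Tuple

Mask = List[List[int]]           # 1 = pixel labelled "vehicle", 0 = anything else
Slot = Tuple[int, int, int, int]  # hypothetical format: top, left, bottom, right (end-exclusive)


def slot_occupancy(mask: Mask, slot: Slot) -> float:
    """Fraction of a rectangular parking slot covered by vehicle pixels."""
    top, left, bottom, right = slot
    area = (bottom - top) * (right - left)
    if area <= 0:
        raise ValueError("slot must have positive area")
    covered = sum(mask[r][c] for r in range(top, bottom) for c in range(left, right))
    return covered / area


def occupied_slots(mask: Mask, slots: Dict[str, Slot], threshold: float = 0.5) -> List[str]:
    """Names of slots whose vehicle coverage reaches the (assumed) threshold."""
    return [name for name, slot in slots.items() if slot_occupancy(mask, slot) >= threshold]


# Toy 4x8 mask: one parked vehicle on the left, one stray pixel on the right.
mask = [
    [0, 0, 0, 0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0, 0, 0, 0],
    [0, 1, 1, 1, 0, 0, 1, 0],
    [0, 0, 0, 0, 0, 0, 0, 0],
]
slots = {"A": (1, 1, 3, 4), "B": (1, 5, 3, 8)}
print(occupied_slots(mask, slots))  # ['A']
```

A bounding box alone would only say that a vehicle is somewhere near slot A; the mask gives the per-pixel coverage that the occupancy ratio needs.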

Successful vehicle tracking is essential for intelligent traffic scheduling, safe autonomous driving, and essentially all other traffic management advancements. The effort of developing a real-time and accurate system that achieves this goal has always involved extensive research and resource allocation.

Applications of AI in Traffic Management

While much of the work of planning and implementing changes to improve traffic flow rests with traffic and local government agencies, AI has a wide range of applications that can alleviate traffic-related problems.

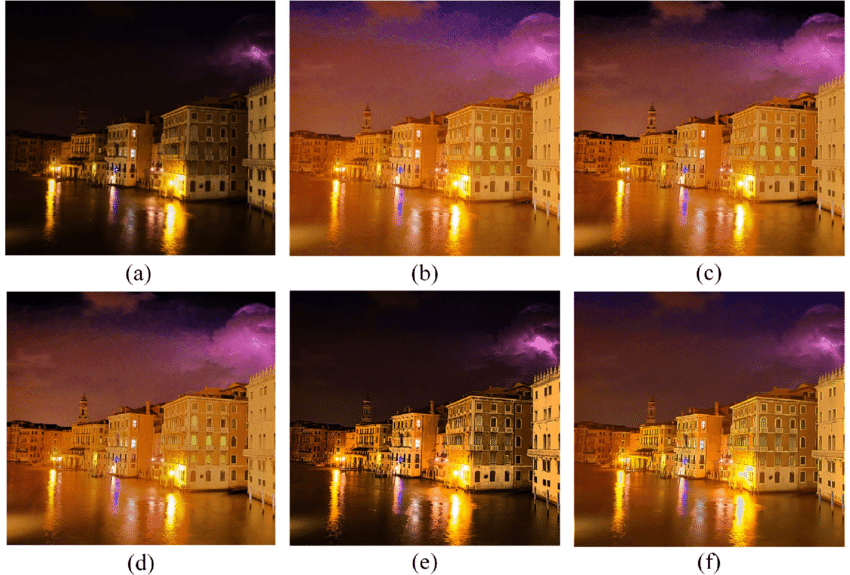

One of these applications is image segmentation. High-performance machines and cloud resources make it possible to segment static images at scale, and therefore to analyze historical images of roadways over time. Traffic managers can then discern patterns, collect data, and plan changes that have a lasting impact on traffic management.

Alongside this vital role, researchers are also investigating methods to analyze the image sequences derived from time-lapse video from roadway cameras in real time. This enables the development and implementation of new smart traffic signal management systems that can substantially improve traffic flow on our roadway networks.

Traffic Flow Prediction

Traffic congestion has become a major problem in both developing and developed countries. Most major cities around the world are suffering, or will soon suffer, from severe traffic congestion. This problem is not only related to the amount of traffic in the city but also to the inappropriate management of city roads and inadequate traffic management systems.

A traffic management system spans multidisciplinary activities, such as setting traffic signals at intersections and providing real-time information from various sensors. A system that combines these is called an intelligent traffic management system.

Traffic flow prediction is the forecasting of traffic state in the future, such as speed, volume, or occupancy. Traffic flow prediction is an important part of the intelligent traffic management system. Along with the development of machine learning and deep learning, the accuracy of traffic flow prediction has greatly improved.

Accurate traffic flow prediction can, in turn, be used to optimize the operation of intelligent traffic management systems. The significant increase in available data poses both opportunities and challenges. Traffic flow prediction is in fact a multistep time series forecasting problem: the model predicts several future time windows from past observations, and the gap between its predictions and the observed values can vary widely across those windows.
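The multistep framing can be sketched in a few lines. The example below is only an illustration under stated assumptions: the traffic volumes are made up, the window sizes are arbitrary, and the "model" is a naive persistence baseline (repeat the last observed value) and not a learned predictor. It shows how a series is cut into past and future windows and how the error can be measured separately for each forecast step.

```python
from typing import List, Sequence, Tuple

Pair = Tuple[Sequence[float], Sequence[float]]


def make_windows(series: Sequence[float], n_in: int, n_out: int) -> List[Pair]:
    """Cut a series into (past window, future window) pairs for multistep forecasting."""
    pairs = []
    for start in range(len(series) - n_in - n_out + 1):
        past = series[start:start + n_in]
        future = series[start + n_in:start + n_in + n_out]
        pairs.append((past, future))
    return pairs


def persistence_forecast(past: Sequence[float], n_out: int) -> List[float]:
    """Naive baseline: repeat the last observed value for every future step."""
    return [past[-1]] * n_out


def mae_per_step(pairs: List[Pair], n_out: int) -> List[float]:
    """Mean absolute error of the baseline at each forecast step."""
    if not pairs:
        raise ValueError("series is too short for the chosen window sizes")
    totals = [0.0] * n_out
    for past, future in pairs:
        prediction = persistence_forecast(past, n_out)
        for step in range(n_out):
            totals[step] += abs(prediction[step] - future[step])
    return [total / len(pairs) for total in totals]


# Made-up vehicle counts per interval during a build-up towards rush hour.
volumes = [10, 12, 15, 19, 24, 30, 37]
pairs = make_windows(volumes, n_in=3, n_out=2)
print(mae_per_step(pairs, n_out=2))  # [5.0, 11.0]
```

On this toy series the error at the second step is more than double the error at the first, which is the usual reason multistep results are reported per horizon instead of as a single number.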

Anomaly Detection

Anomaly detection is a very popular application of AI that automatically finds events of particular interest in traffic images. The purpose of traffic monitoring is to detect possible traffic jams, unusual vehicle flow, accidents, and so on. Automating this detection can greatly benefit administrators' decision-making. The most recent approaches draw on ADAS techniques and often on deep learning models, which outperform classical models at object detection.

The main problem with object detection methods is that the learned features can recognize predefined behaviors or objects but not abnormal behavior, which is what traffic analysis needs. A wide range of methods rely on features extracted from digital images for representation and recognition: the scale-invariant feature transform (SIFT), which describes the image at different scales; color SIFT (CSIFT), which adds the color information of the detected feature; and Speeded-Up Robust Features (SURF). Reinforcement learning is another option, with a reported figure of about 86% on flow time series when compared with competing models.
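As a simple illustration of flagging an unusual event, the sketch below applies a rolling z-score to per-interval vehicle counts. This is a plain statistical stand-in chosen for brevity, not one of the feature-based or learning-based methods mentioned above, and the counts, window length, and threshold are assumptions.

```python
from statistics import mean, stdev
from typing import List, Sequence


def flag_anomalies(counts: Sequence[float], window: int = 5, z_threshold: float = 3.0) -> List[int]:
    """Return indices whose count deviates strongly from the preceding window."""
    if window < 2:
        raise ValueError("window must contain at least two observations")
    flagged = []
    for i in range(window, len(counts)):
        history = counts[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Perfectly flat history: the z-score is undefined, so flag any change at all.
            if counts[i] != mu:
                flagged.append(i)
            continue
        if abs(counts[i] - mu) / sigma > z_threshold:
            flagged.append(i)
    return flagged


# Made-up counts from one camera; the sudden drop at index 6 could be a blocked lane.
counts = [20, 22, 21, 19, 20, 21, 3, 20, 22]
print(flag_anomalies(counts))  # [6]
```

Note that the intervals right after the drop are not flagged, because the outlier inflates the spread of the window that follows it. A production system would need a more robust estimate than this sketch uses.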

Traffic Sign Detection

Traffic sign detection is usually the first stage of traffic sign recognition or classification. Each sign-specific algorithm uses different techniques such as color segmentation, pattern matching, and template or model matching. The motivation for using template matching is that traffic signs are both large and relatively rigid.
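Color segmentation, the first technique listed above, can be sketched as a threshold in HSV space followed by a bounding box around the surviving pixels. The thresholds and the tiny test image below are assumptions for illustration; real detectors tune these values per camera and lighting condition.

```python
import colorsys
from typing import List, Optional, Tuple

Pixel = Tuple[int, int, int]  # 8-bit RGB


def red_mask(image: List[List[Pixel]], hue_tol: float = 0.05,
             min_sat: float = 0.5, min_val: float = 0.3) -> List[List[int]]:
    """Mark pixels whose hue is close to red and that are saturated and bright enough."""
    mask = []
    for row in image:
        mask_row = []
        for r, g, b in row:
            h, s, v = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
            # Red sits at both ends of the hue circle (near 0.0 and near 1.0).
            is_red = (h <= hue_tol or h >= 1 - hue_tol) and s >= min_sat and v >= min_val
            mask_row.append(1 if is_red else 0)
        mask.append(mask_row)
    return mask


def bounding_box(mask: List[List[int]]) -> Optional[Tuple[int, int, int, int]]:
    """Smallest inclusive (top, left, bottom, right) box around marked pixels, or None."""
    coords = [(r, c) for r, row in enumerate(mask) for c, value in enumerate(row) if value]
    if not coords:
        return None
    rows = [r for r, _ in coords]
    cols = [c for _, c in coords]
    return (min(rows), min(cols), max(rows), max(cols))


GRAY, RED, GREEN = (128, 128, 128), (220, 20, 30), (30, 200, 40)
image = [
    [GRAY, GRAY, GRAY, GREEN],
    [GRAY, RED, RED, GRAY],
    [GRAY, RED, RED, GRAY],
]
print(bounding_box(red_mask(image)))  # (1, 1, 2, 2)
```

The resulting box is only a candidate region. In the pipeline described above it would then be handed to a recognition or classification stage.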

Some algorithms build on decision-making and image partitioning techniques to improve detection performance. A camera-based onboard system for intelligent vehicles was implemented to detect road and bridge signs on roads in Iran, with quite positive results. Sign detection under low-light conditions has been studied using sensor fusion of image and LIDAR data, and the proposed method reduces the number of false positives during night-time driving.

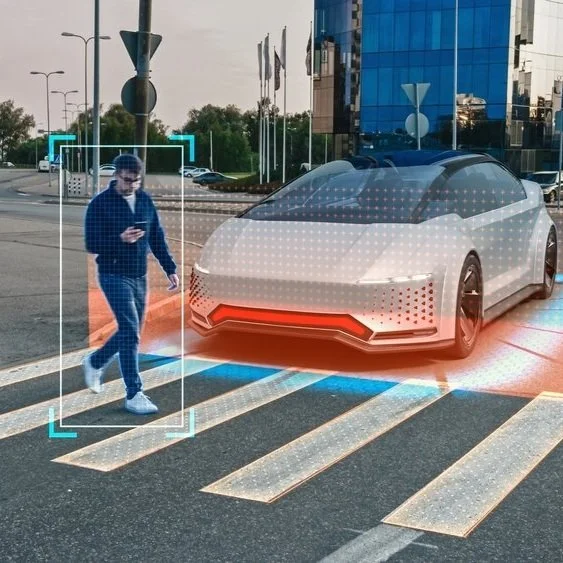

Pedestrian Detection

In urban environments, detecting pedestrians is one of the most relevant tasks for automatic pedestrian monitoring systems. For this reason, a wide range of pedestrian detection methods have been introduced in the last few years. Depending on the strategy used, they can be divided into three main categories:

- Region-based methods

- Regression-based methods

- One-stage methods

Region-based algorithms first propose regions that may contain pedestrians, then compute features for those regions and classify them. These methods offer good precision but tend to have slower execution times. Regression-based methods, on the other hand, use feature extraction to calculate the position of the pedestrian directly. Finally, one-stage methods estimate the position of the pedestrian and perform classification in a single stage. This category provides lower precision but has faster execution times.
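Whichever category a detector falls into, it typically emits several overlapping candidate boxes for the same pedestrian. The sketch below shows intersection over union (IoU) and greedy non-maximum suppression, a post-processing step commonly paired with such detectors. It is not described in this article, and the boxes, scores, and 0.5 threshold are made up for illustration.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # x1, y1, x2, y2
Detection = Tuple[Box, float]            # box and confidence score


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(detections: List[Detection], iou_threshold: float = 0.5) -> List[Detection]:
    """Keep the highest-scoring boxes and drop lower-scoring ones that overlap them."""
    kept: List[Detection] = []
    for box, score in sorted(detections, key=lambda d: d[1], reverse=True):
        if all(iou(box, kept_box) < iou_threshold for kept_box, _ in kept):
            kept.append((box, score))
    return kept


detections = [
    ((10, 10, 50, 90), 0.9),     # pedestrian 1
    ((12, 12, 52, 92), 0.8),     # near-duplicate of pedestrian 1
    ((100, 20, 140, 100), 0.7),  # pedestrian 2
]
for box, score in non_max_suppression(detections):
    print(box, score)
```

Here the second box overlaps the first with an IoU of roughly 0.86, so it is suppressed and two pedestrians remain.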

Challenges and Limitations

Image segmentation methods based on AI and computer vision have several limitations in traffic management. A few of them are listed below.

- Beyond vans, trucks, and buses, image segmentation may fail to detect clean vehicles, since their shape, size, and symmetry differ from the watercraft case.

- If a road sign or traffic light of the same color (red, yellow, or green) appears in the background, recognition accuracy deteriorates, and more so when vehicles sit higher in the frame or the road signs are larger.

- Training is slow. An open question is how to expand the training data, for example by excluding background information or adding vehicle mask images, so that the learning algorithm handles vehicles in general and not only vehicles of a specific color (e.g., red).

- Segmentation accuracy is not yet sufficient. The work simply uses the PixelLink algorithm to obtain a coarse pixel-level vehicle boundary, and adjusting that boundary alone leaves many noisy regions, because some road signs and traffic lights in the background are recognized as vehicles.

- The algorithm may respond slowly and unsatisfactorily. Extensive experiments are still needed to optimize and improve its performance.

Future Trends and Innovations

AI can revolutionize everything from data management to supply chains and consumer relationships. IoT is highly beneficial in yielding real-time data for decision-making. Although there are various means of monitoring transport conditions, IoT will make that monitoring available in real time and on a widespread scale. As IoT gains priority in traffic data management, the means of acquiring and storing the resulting petabytes of data should also be addressed systematically.

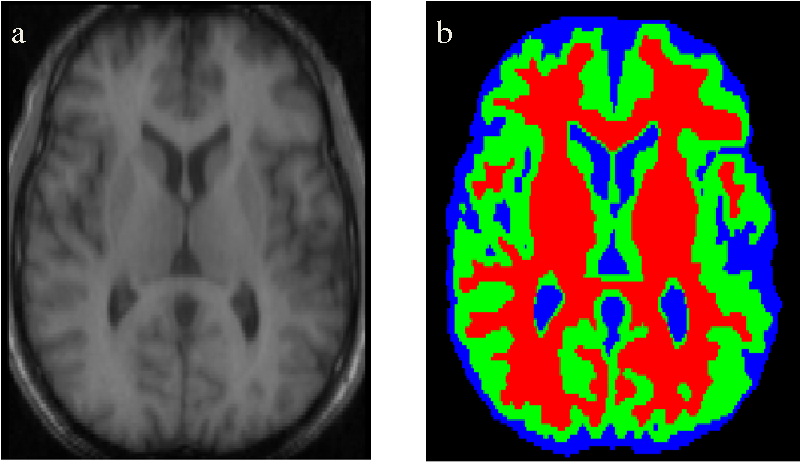

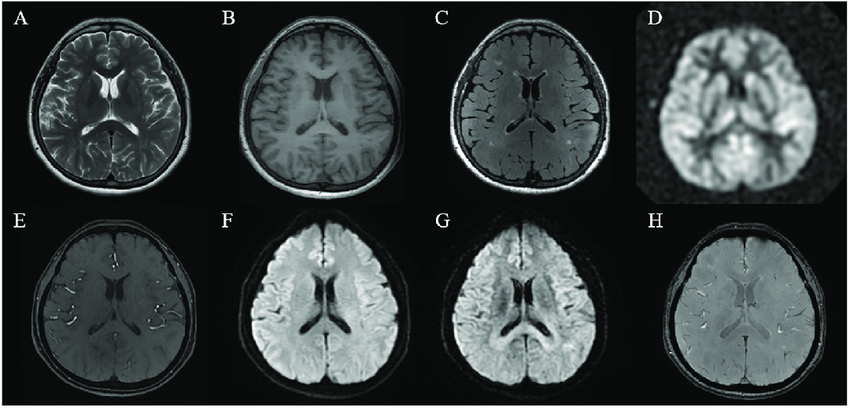

Image segmentation is an AI technique that partitions the pixels of a digital image into regions, and in traffic control it can delineate the objects obstructing traffic in a camera image. Another trend to expect in the near future is the fusion of information from various sources into a cohesive model that accurately portrays the current traffic status. Research initiatives are currently testing the feasibility of combining such databases, with the objective of preventing software attacks.
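To show what "partitioning pixels" means in the simplest possible case, the sketch below labels the connected regions of a binary foreground mask. This is classical connected-component labeling, used here as a stand-in; it is far simpler than the learned segmentation models used on real traffic images, and the toy mask is made up.

```python
from collections import deque
from typing import List, Tuple


def label_regions(mask: List[List[int]]) -> Tuple[List[List[int]], int]:
    """Give each 4-connected region of foreground pixels its own positive label."""
    height = len(mask)
    width = len(mask[0]) if height else 0
    labels = [[0] * width for _ in range(height)]
    region_count = 0
    for r in range(height):
        for c in range(width):
            if not mask[r][c] or labels[r][c]:
                continue  # background, or already assigned to a region
            region_count += 1
            labels[r][c] = region_count
            queue = deque([(r, c)])
            while queue:  # breadth-first flood fill from the seed pixel
                y, x = queue.popleft()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < height and 0 <= nx < width
                            and mask[ny][nx] and not labels[ny][nx]):
                        labels[ny][nx] = region_count
                        queue.append((ny, nx))
    return labels, region_count


# Toy mask with two separate obstructing objects.
mask = [
    [1, 1, 0, 0, 0],
    [1, 1, 0, 1, 1],
    [0, 0, 0, 1, 1],
]
labels, count = label_regions(mask)
print(count)  # 2
```

Every foreground pixel ends up in exactly one labelled region, so each object can be measured or tracked on its own.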

An all-inclusive and constantly updated model of the traffic network could be very useful for enacting transport and land-use planning policies. Day by day, the way transportation is used and infrastructure is optimized may shape the city’s future, overcoming these constraints and making it more resilient. A smarter city should be founded on smart mobility that enhances safety, efficiency in transport network management, and environmental sustainability.


Final Thoughts

In challenging urban environments, detecting and counting vehicles accurately is a difficult task that requires large datasets captured from multiple viewpoints.

Importantly, this capability can be used as a feature to detect vehicles in other types of traffic data. It is believed that this is the first time that deep CNNs have been trained and tested on the Brisbane traffic camera network dataset. A live feed demonstration application has been created that can unleash the potential of Vehicle IoT. In practice, the proposed approach, along with future work, can be used by city planners to optimize traffic flow. Such a tool can help prioritize traffic management interventions to minimize commuter travel times during rush hours.

Based on real-time traffic congestion levels, action plans can be generated for individuals to follow. Finally, substantial research in traffic management can enable a major city to better manage its traffic forecasts and monitor how commuters use its roads. These steps will later lead to the safe introduction of emerging autonomous vehicles and reduce the much-feared traffic congestion that autonomous vehicles might bring to our future cities.

As one of the leading data annotation companies, we always focus on prioritizing future innovations by specializing in delivering accurate and comprehensive data annotation solutions for autonomous driving and ADAS applications.
