Multi-Sensor Data Fusion in Autonomous Vehicles — Challenges and Solutions

By Umang Dayal

November 5, 2024

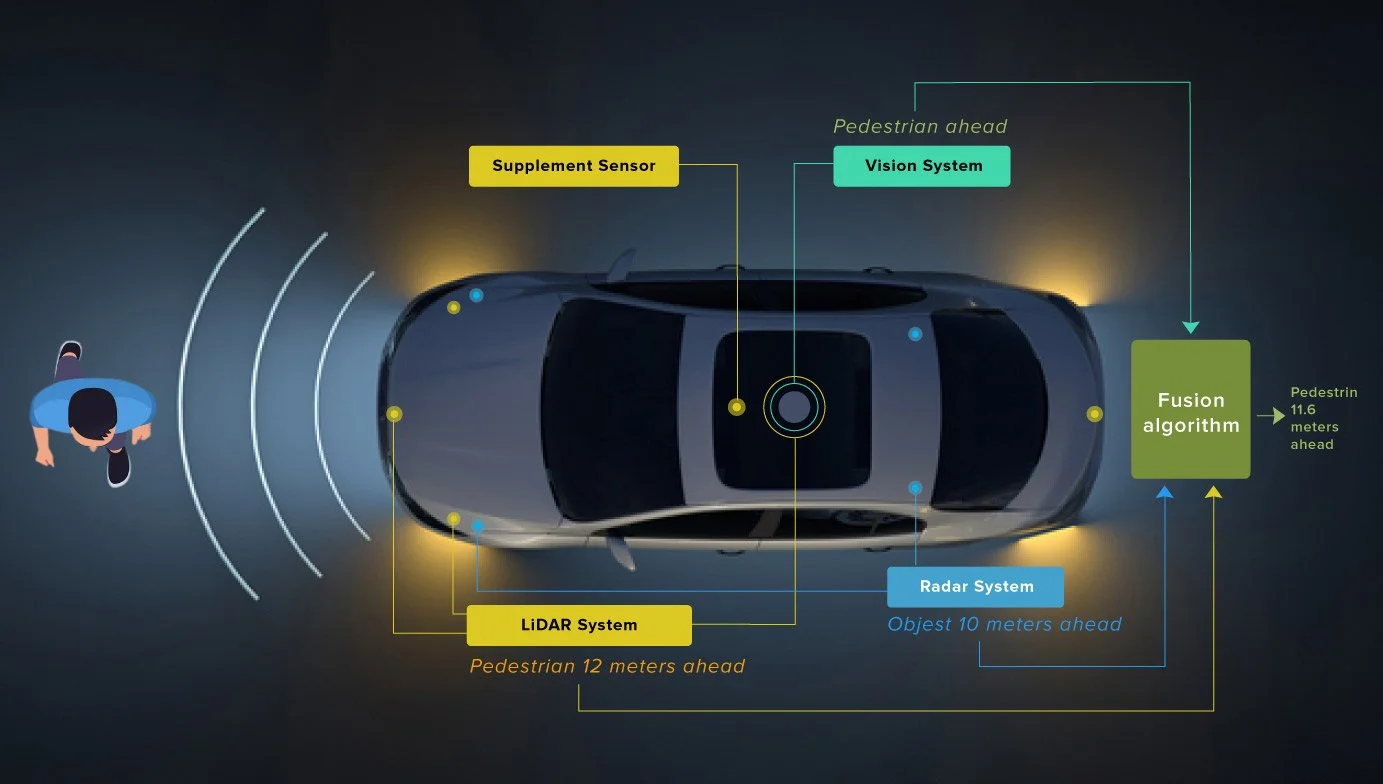

Autonomous driving is a rapidly evolving field, and autonomous vehicles rely on multi-sensor systems to perceive and navigate their surroundings. As manufacturers and policymakers push toward more advanced technologies, multi-sensor data fusion has become critical. These techniques combine information from multiple sensors to build a 360° view of a vehicle’s environment, which is necessary for safe and reliable autonomous driving.

Nevertheless, combining the various data streams poses a significant challenge because of the complexity and variability of the sensor outputs. In this blog, we will discuss some of the challenges in fusing data from different sensors, explore scalable recommendations on how to combine these technologies, and explain why fusing multiple sensors is important for autonomous driving.

Importance of Multi-Sensor Data Fusion in Autonomous Driving

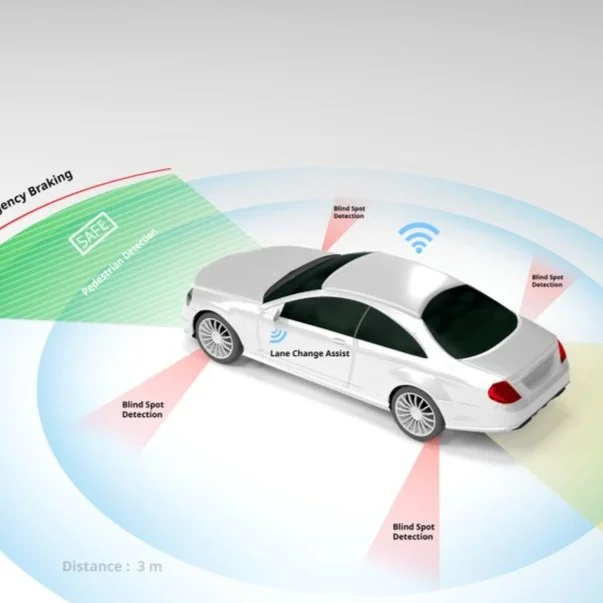

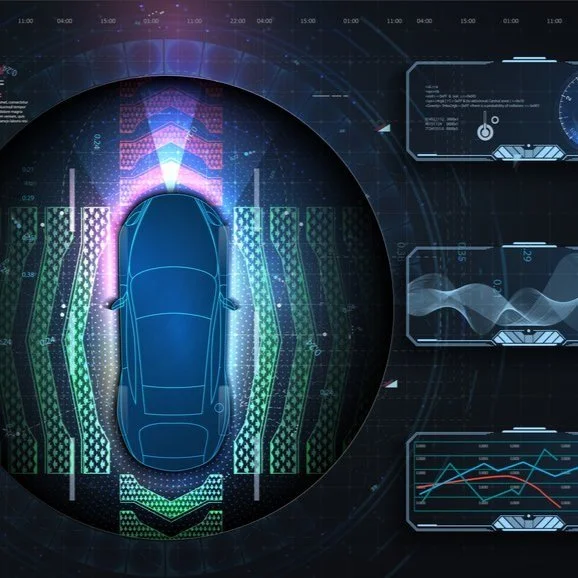

Multi-sensor data fusion is a key element in improving the safety and reliability of autonomous vehicles, which rely on a multitude of sensors to navigate their environment safely. LiDAR excels at producing precise 3D maps of the environment, while radar is well suited to measuring the distance and speed of nearby objects. Cameras, on the other hand, do not measure distance directly the way LiDAR and radar do, but they are critical for capturing rich visual information such as color, texture, and signage.

These sensors complement each other, helping the vehicle understand much more than any single sensor ever could. For example, cameras deliver rich detail about the visual scene the car is driving through, while radar provides reliable measurements of target range and speed, which are important for making dynamic driving decisions.

Synthesizing data from these sensors helps advanced driver-assistance systems (ADAS) make better decisions and improves situational awareness and reliability. Multi-sensor fusion overcomes the limitation of depending on a single type of sensor, which may not capture all the data an autonomous vehicle needs.
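To make the idea of complementary sensors concrete, here is a minimal sketch of one common way to combine two independent estimates of the same quantity: inverse-variance weighting, where the more certain sensor gets more weight. The function name, the readings, and the variances are illustrative assumptions, not values from any particular vehicle or from this article.

```python
def fuse_estimates(value_a, var_a, value_b, var_b):
    """Inverse-variance weighted fusion of two independent estimates.

    Each estimate is weighted by 1 / variance, so the less noisy
    sensor dominates the fused result.
    """
    if var_a <= 0 or var_b <= 0:
        raise ValueError("variances must be positive")
    w_a = 1.0 / var_a
    w_b = 1.0 / var_b
    fused = (w_a * value_a + w_b * value_b) / (w_a + w_b)
    fused_var = 1.0 / (w_a + w_b)  # never larger than the smaller input variance
    return fused, fused_var


# Hypothetical readings: radar says 25.0 m (variance 0.04 m^2),
# a camera-derived estimate says 26.0 m (variance 1.0 m^2).
fused_range, fused_var = fuse_estimates(25.0, 0.04, 26.0, 1.0)
```

The fused range stays close to the radar reading because radar is assumed to be far less noisy here, and the fused variance is smaller than either input variance, which is the sense in which the combination knows more than any single sensor.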

But sensor fusion is more than just data collection; the data must be processed, interpreted, and acted upon continuously because driving situations change in real time. The ability to process data in real time is critical for self-driving cars to understand their environment and react accordingly.

As vehicles become more automated, the need for more advanced and dependable sensor systems becomes even more critical. To earn the trust of the general public in self-driving vehicles and to perform properly in varied weather conditions, high-performing multi-sensor systems are indispensable. Multi-sensor data fusion is therefore necessary for the evolution of autonomous driving systems that can consistently provide safer and more reliable transportation.

Challenges in Interlinking Multi-Sensor Data Fusion

The primary challenge in fusing data from multiple sensors in autonomous vehicles stems from the differences between the sensor technologies. Lidars, radars, cameras, and other sensors all operate on different principles and yield data at different times and in different formats and dimensions. Combining them therefore requires an accurate model of how each sensor type behaves in the real world, so that detections arriving asynchronously can be reconciled into reliable inputs for the vehicle’s decision-making.

Let’s discuss more challenges in multi-sensor data fusion in autonomous vehicles:

Overcoming Sensor Diversity

To function safely and efficiently, autonomous vehicles make use of a host of sensors. These include lidars, radars, vision sensors, and many more, each with different accuracy, resolution, and noise characteristics, which makes data fusion a difficult task. For example, a radar system is good at measuring distance in bad weather, while a vision sensor returns detailed imagery under normal conditions. Merging these different data streams requires a robust method capable of managing the inconsistencies between sensor outputs.

Responding to these challenges requires algorithms that provide general functions to accommodate the heterogeneous properties of sensor outputs. This software layer is an intermediary step that transforms diverse data into a common format that the decision-making algorithms running on the car can use. Building a reliable model of each sensor is also essential. Such sensor models help classify and process the data efficiently and make the integration process more manageable.
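The intermediary layer described above can be sketched as a set of small adapters that convert each sensor's native output into one shared record. This is only an illustration: the `Detection` type, the adapter names, and the assumption that both sensors already report in the vehicle frame are hypothetical simplifications, not a real vendor API.

```python
import math
from dataclasses import dataclass


@dataclass
class Detection:
    """Common format: position in the vehicle frame (metres),
    timestamp (seconds), and the sensor that produced it."""
    x: float
    y: float
    timestamp: float
    source: str


def from_radar(range_m, azimuth_rad, timestamp):
    # Radar is assumed to report polar coordinates; convert to Cartesian.
    return Detection(
        x=range_m * math.cos(azimuth_rad),
        y=range_m * math.sin(azimuth_rad),
        timestamp=timestamp,
        source="radar",
    )


def from_lidar(point_xyz, timestamp):
    # Lidar is assumed to report Cartesian points; drop height for a 2D view.
    x, y, _z = point_xyz
    return Detection(x=x, y=y, timestamp=timestamp, source="lidar")


# Hypothetical readings of the same object from two sensors.
radar_det = from_radar(10.0, 0.0, timestamp=1.00)
lidar_det = from_lidar((10.1, 0.05, 0.4), timestamp=1.02)
```

Once every sensor is mapped to the same record type, downstream code can compare or merge detections without knowing which sensor produced them. A real system would also apply each sensor's mounting offset and rotation before this step.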

Simplifying Data Integration & Alignment

Effective multi-sensor data fusion demands close attention to detail when synchronizing and aligning data. Even when all sensors are synchronized to a central clock, timing discrepancies can occur because different sensors collect and process data at different rates. For example, data from camera and RF classifiers is usually processed sooner than lidar data, which can create a temporal mismatch.

Correcting these discrepancies is essential to the credibility of the data fusion process. This means preprocessing the temporal and spatial data from all sensors so that everything is correct and up to date in real time. Keeping this data in sync is important for the vehicle navigation system, which must make safe and efficient decisions when executing maneuvers. Proper alignment reduces errors, improves system efficiency, and consequently leads to safer autonomous driving.

If these technical issues are tackled with the right solutions and software tools, multi-sensor data integration can be made significantly better. This enhances the operational dependability, effectiveness, and safety of autonomous vehicles, enabling wider adoption of this transformative technology.
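One simple way to handle a temporal mismatch is to resample the faster sensor at the timestamp of the slower one. The sketch below uses linear interpolation for this. The function name, sample rates, and values are assumptions made for illustration, and production systems often use motion models rather than straight-line interpolation.

```python
from bisect import bisect_left


def interpolate_at(timestamps, values, t_query):
    """Linearly interpolate a sampled signal at t_query.

    timestamps must be sorted in ascending order. Queries outside the
    sampled range are clamped to the nearest available sample.
    """
    if not timestamps or len(timestamps) != len(values):
        raise ValueError("timestamps and values must be non-empty and equal length")
    if t_query <= timestamps[0]:
        return values[0]
    if t_query >= timestamps[-1]:
        return values[-1]
    i = bisect_left(timestamps, t_query)  # first index with timestamps[i] >= t_query
    t0, t1 = timestamps[i - 1], timestamps[i]
    v0, v1 = values[i - 1], values[i]
    alpha = (t_query - t0) / (t1 - t0)
    return v0 + alpha * (v1 - v0)


# Hypothetical radar ranges sampled every 50 ms, and a lidar frame at t = 0.075 s.
radar_times = [0.00, 0.05, 0.10]
radar_ranges = [30.0, 29.5, 29.0]
range_at_lidar_time = interpolate_at(radar_times, radar_ranges, 0.075)
```

The interpolated value falls halfway between the two neighbouring radar samples, so the radar and lidar measurements can be compared as if they had been taken at the same instant.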

Techniques and Strategies for Resolving Interlinking Challenges

Systems that process diverse streams of information from multiple sensors face significant challenges in integrating that data. Addressing these issues is key to operating effectively and efficiently. Below are a few methods for doing so.

Sensor Calibration for Data Synergy

Sensor calibration is one of the most important steps in aligning and merging data from different sensors accurately. Calibration adjusts the sensors so that they give accurate measurements of physical quantities and so that identical devices give consistent outputs. Two types of calibration help with this process.

Static Calibration: This involves fixing the static parameters of a sensor, such as internal bias values, to correct inherent inaccuracies. An example is calibrating inertial sensors so that a bias does not distort their readings.

Dynamic Calibration: This involves calibrating time-varying factors so that sensor outputs can be corrected in real time. It allows data to remain accurate even under the influence of external parameters.

By fine-tuning both the static characteristics of a sensor and its dynamic behavior, data quality improves and data from independent sources can be fused properly.
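The two kinds of calibration can be sketched for the simplest case, a single additive bias. In this illustration, static calibration averages readings taken while the true value is known, and dynamic calibration keeps adjusting that bias with an exponential moving average. The names, the smoothing factor, and the availability of an independent reference reading are all assumptions.

```python
def estimate_static_bias(stationary_samples, true_value=0.0):
    """Static calibration: average readings taken while the true value is known
    (for example, a gyroscope at rest should read zero)."""
    if not stationary_samples:
        raise ValueError("need at least one sample")
    return sum(stationary_samples) / len(stationary_samples) - true_value


class OnlineBiasTracker:
    """Dynamic calibration: follow a slowly drifting bias with an
    exponential moving average of (reading - reference)."""

    def __init__(self, initial_bias, alpha=0.1):
        self.bias = initial_bias
        self.alpha = alpha  # higher alpha reacts faster but is noisier

    def update(self, reading, reference):
        # reference is an independent estimate of the true value,
        # e.g. from another sensor (an assumption of this sketch).
        residual = reading - reference
        self.bias = (1.0 - self.alpha) * self.bias + self.alpha * residual
        return reading - self.bias


# Hypothetical at-rest gyroscope samples, then one in-motion update.
static_bias = estimate_static_bias([0.02, 0.03, 0.01])
tracker = OnlineBiasTracker(static_bias, alpha=0.5)
corrected = tracker.update(reading=1.06, reference=1.00)
```

Starting the tracker from the static estimate means the dynamic step only has to follow drift, such as temperature effects, rather than rediscover the whole bias from scratch.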


Improving Data Fusion with Deep Learning

Deep learning has changed the way systems analyze and learn from huge datasets. In recent years, it has proven beneficial for fusing data from multiple sensors because it can automatically learn features from large datasets and operate on them. Some of the benefits of deep learning for multi-sensor fusion include:

Feature Hierarchies: Deep learning algorithms automatically build layered representations of data features. These layers capture increasing levels of abstraction, which can be fundamental to integrating and interpreting sensor data.

High-Dimensional Data: Deep learning handles the high-dimensional data produced by common sensors naturally, making it a suitable candidate for fusion tasks. This allows the system to identify intricate patterns and connections that conventional approaches may miss.

Use in Sensor Fusion: Deep learning frameworks have been successfully applied to combinations of sensors that include radar, LiDAR, ultrasonics, and others, resulting in an enhanced understanding of the environment and more informed decision-making in sensor-dependent systems.

Fusing data from multiple sensors greatly improves the functionality and accuracy of a system. It offers a systematic approach to managing the complexities of the various data types involved, so that systems can handle them seamlessly and efficiently.
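As a rough illustration of how a network can combine sensors, the sketch below shows feature-level fusion in its simplest form: per-sensor feature vectors are concatenated and passed through one shared dense layer with a ReLU activation. It uses plain Python so the arithmetic is visible. The weights are hand-picked placeholders, whereas a real model would learn them from data, and the function names are hypothetical.

```python
def relu(vector):
    return [max(0.0, x) for x in vector]


def dense(inputs, weights, biases):
    """Fully connected layer; weights holds one row per output unit."""
    if any(len(row) != len(inputs) for row in weights):
        raise ValueError("each weight row must match the input length")
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]


def fuse_features(camera_features, lidar_features, weights, biases):
    """Feature-level fusion: concatenate the per-sensor features,
    then apply one shared dense layer followed by ReLU."""
    joint = list(camera_features) + list(lidar_features)
    return relu(dense(joint, weights, biases))


# Placeholder features and weights, chosen only to make the arithmetic easy to follow.
camera_features = [1.0, 2.0]
lidar_features = [3.0]
weights = [[1.0, 0.0, 1.0],   # unit 0 mixes a camera feature with the lidar feature
           [0.0, -1.0, 0.0]]  # unit 1 looks at the second camera feature only
biases = [0.5, 0.0]
fused = fuse_features(camera_features, lidar_features, weights, biases)
```

Because the first output unit draws on both sensors at once, training can discover cross-sensor patterns that neither feature vector reveals alone, which is the property the benefits above describe.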


Conclusion

Multi-sensor data fusion is essential to improving the quality of sensor outputs, making the information they deliver more accurate and reliable and enabling innovation in autonomous systems. While substantial strides have been made in tackling the complexities of multi-sensor data integration, some challenges remain. Over the past decade, automotive engineers have resolved many of the problems, but others continue to be the focus of ongoing research and development.

At Digital Divide Data, we focus on calibrating different sensors with data training and multi-sensor data fusion techniques. To learn more about how we can help you calibrate multiple sensors, you can talk to our experts.
