Utilizing Multi-sensor Data Annotation To Improve Autonomous Driving Efficiency

DDD Solutions Engineering Team

October 8, 2024

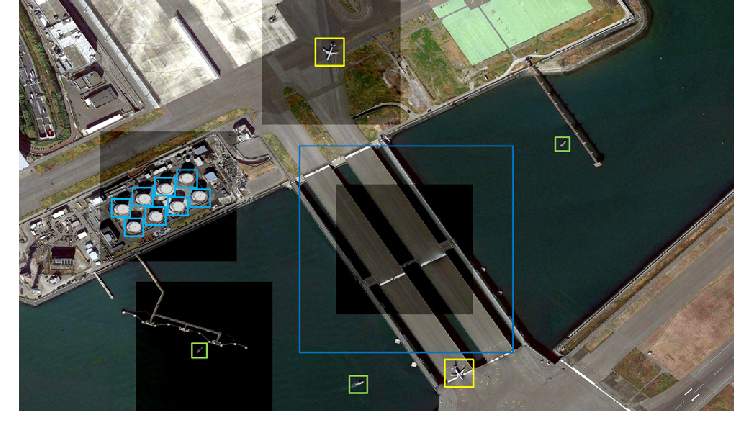

Autonomous vehicle sensors such as LiDAR, radar, and cameras detect objects in highly dynamic scenarios. These objects include cars, motorbikes, pedestrians, traffic signs, and roadblocks. To make autonomous driving systems more reliable, the final output of multi-sensor data annotation must be as accurate as possible.

In this blog, we briefly discuss how LiDAR, radar, and cameras are used in autonomous driving (AD) and how multi-sensor data annotation can improve AD efficiency.

What is LiDAR?

LiDAR, or Light Detection and Ranging, is a remote sensing technology that emits laser pulses to rapidly scan the surrounding environment and calculates distance by measuring the time it takes for the light to return. Each pulse reflects off surfaces in the scene, and its measured return time pins down the precise location of the reflecting point. Together, these data points form a point cloud: an accurate 3D depiction of the scanned objects.
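The ranging principle above reduces to a short calculation. The sketch below is illustrative only: it assumes each return is reported as a round-trip time plus the beam's azimuth and elevation angles, and it assumes an x-forward, y-left, z-up sensor frame. The function names are hypothetical, not any vendor's API.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def tof_range(round_trip_s: float) -> float:
    """Distance to the reflecting surface from the pulse's round-trip time.

    The pulse travels out and back, so the one-way distance is c * t / 2.
    """
    return SPEED_OF_LIGHT * round_trip_s / 2.0


def to_cartesian(range_m: float, azimuth_deg: float, elevation_deg: float):
    """Convert one return (range plus beam angles) into an (x, y, z) point.

    Assumed sensor frame for illustration: x forward, y left, z up.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = range_m * math.cos(el)  # projection onto the ground plane
    return (horizontal * math.cos(az), horizontal * math.sin(az), range_m * math.sin(el))


# A return arriving 200 ns after emission lies roughly 30 m away.
r = tof_range(200e-9)
point = to_cartesian(r, azimuth_deg=0.0, elevation_deg=0.0)
```

Light covers roughly 0.3 m per nanosecond, so a 200 ns round trip corresponds to about 60 m of travel, or about 30 m to the target. Repeating this conversion for every return in a sweep yields the point cloud.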

How is LiDAR used in Autonomous Driving?

LiDAR sensors receive real-time data from thousands of laser pulses every second, and an onboard system analyzes these ‘point clouds’ to build a 3D representation of the surrounding terrain or environment. For these 3D representations to be accurate and usable, AI models must be trained on annotated point cloud datasets. This annotated data allows advanced driver-assistance systems (ADAS) to identify, detect, and classify different objects in the environment. Similarly, image and video annotations help autonomous vehicles accurately identify road signs, lanes, objects, traffic flow, and more.

What is LiDAR Annotation?

LiDAR annotation, also known as point cloud annotation, is the process of classifying the point cloud data generated by LiDAR sensors. During annotation, each point is assigned a class label, such as “pedestrian”, “roadblock”, “building”, or “vehicle”. These labeled data points are essential for training machine learning models because they tell the model where objects are located in the real world and what they are. In autonomous vehicles, accurate LiDAR annotations allow systems to identify road signs, pedestrians, and moving vehicles, enabling safe navigation. High-quality LiDAR annotation is therefore required for accurate scene understanding and object recognition.
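One way to produce per-point labels is from 3D cuboid annotations: every point that falls inside an annotator's box inherits that box's class. The following is a minimal sketch of that idea, not a description of any particular annotation tool. The `Cuboid` record (centre, size, yaw about the vertical axis, label) and the helper names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Cuboid:
    """Hypothetical box annotation for illustration; not any tool's format."""
    cx: float
    cy: float
    cz: float
    length: float  # extent along the box's own x-axis (m)
    width: float   # extent along the box's own y-axis (m)
    height: float  # extent along z (m)
    yaw: float     # rotation about the vertical axis (radians)
    label: str


def contains(box: Cuboid, x: float, y: float, z: float) -> bool:
    """True if the point lies inside the (yaw-rotated) cuboid."""
    # Translate into the box centre, then rotate by -yaw into the box frame.
    dx, dy, dz = x - box.cx, y - box.cy, z - box.cz
    c, s = math.cos(-box.yaw), math.sin(-box.yaw)
    local_x = c * dx - s * dy
    local_y = s * dx + c * dy
    return (abs(local_x) <= box.length / 2
            and abs(local_y) <= box.width / 2
            and abs(dz) <= box.height / 2)


def label_points(points, boxes, default="unlabeled"):
    """Give each point the label of the first cuboid containing it."""
    return [
        next((b.label for b in boxes if contains(b, x, y, z)), default)
        for x, y, z in points
    ]


car = Cuboid(10.0, 0.0, 0.8, 4.5, 1.8, 1.6, 0.0, "vehicle")
ped = Cuboid(5.0, 3.0, 0.9, 0.6, 0.6, 1.8, 0.0, "pedestrian")
labels = label_points([(10.5, 0.2, 1.0), (5.1, 3.0, 1.2), (0.0, 0.0, 0.0)], [car, ped])
```

Points outside every box keep the default label. A real pipeline would also need a rule for points inside overlapping boxes; this sketch simply takes the first match.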

Use of Cameras in Autonomous Driving

Cameras are arguably the most widely adopted technology for perceiving an autonomous vehicle's surroundings. A camera focuses light from the environment through a lens onto a photosensitive surface to produce clear images of the surroundings. Cameras are relatively cost-effective and allow the system to identify traffic lights, road lanes, and traffic signs. In some autonomous applications, more advanced monocular cameras use dual-pixel autofocus hardware to collect depth information, which is then processed by complex algorithms. For the most effective use, two cameras are often installed in autonomous vehicles to form a binocular (stereo) camera system.
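For a binocular setup, depth follows from triangulation. With a rectified stereo pair, a point's depth is Z = f * B / d, where f is the focal length in pixels, B is the baseline between the two cameras, and d is the disparity (the horizontal pixel offset of the point between the left and right images). The sketch below assumes rectified images and illustrative parameter values; the function names are hypothetical.

```python
def stereo_depth(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Depth (m) of a point in a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive (zero means the point is at infinity)")
    return focal_px * baseline_m / disparity_px


def depth_error(depth_m: float, focal_px: float, baseline_m: float,
                disparity_err_px: float = 1.0) -> float:
    """First-order depth error caused by a disparity error.

    Differentiating Z = f * B / d gives |dZ| = Z**2 / (f * B) * |dd|.
    """
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_err_px


# Hypothetical rig: 1000 px focal length, 0.3 m baseline.
z = stereo_depth(disparity_px=15.0, focal_px=1000.0, baseline_m=0.3)
err = depth_error(z, focal_px=1000.0, baseline_m=0.3)
```

Here a 15 px disparity gives 20 m, and a one-pixel disparity error at that range shifts the estimate by about 1.3 m. Because the error grows with the square of the distance, stereo depth degrades quickly for far-away objects.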

Cameras are a ubiquitous technology that delivers high-resolution images and videos, capturing the texture and color of the perceived surroundings. The most common uses of cameras in autonomous vehicles are detecting traffic signs and recognizing road markings.

Using Radar in Autonomous Driving

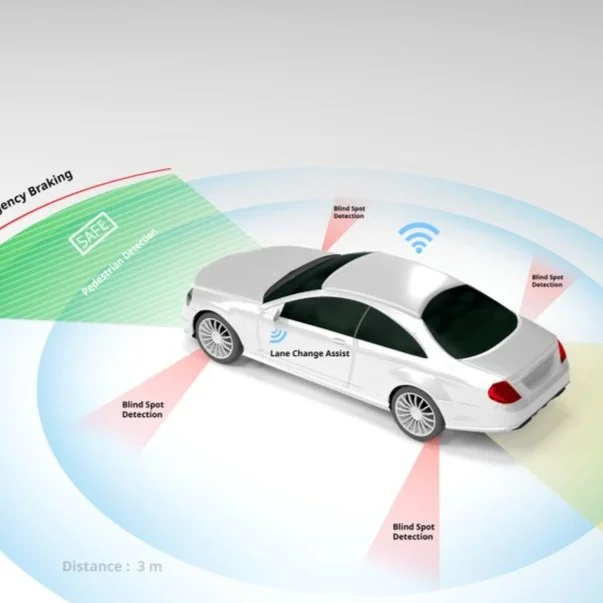

Radar emits electromagnetic (EM) waves and analyzes their reflections to determine the relative position of detected objects, using the Doppler effect to measure their relative speed. The Doppler shift, or Doppler effect, is the change in a wave's frequency caused by relative motion between a target and the wave source. For example, when a target moves toward the radar, the reflected signal arrives with shorter wavelengths and therefore a higher frequency; when it moves away, the frequency drops. Radar sensors in autonomous vehicles are integrated at several locations, such as near the windshield and behind the bumpers. These sensors detect moving objects approaching the vehicle.
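The Doppler relationship can be made concrete. For a monostatic radar (transmitter and receiver in the same place), the echo is shifted on both the outgoing and the return path, so the shift is f_d = 2 * v * f0 / c, where v is the radial (closing) speed and f0 is the carrier frequency. The sketch below computes the shift and inverts it. The 77 GHz carrier is an assumption chosen because automotive radars commonly operate in the 76-81 GHz band; the function names are hypothetical.

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s


def doppler_shift(radial_speed_mps: float, carrier_hz: float) -> float:
    """Two-way Doppler shift f_d = 2 * v * f0 / c (positive when the target approaches)."""
    return 2.0 * radial_speed_mps * carrier_hz / SPEED_OF_LIGHT


def radial_speed(shift_hz: float, carrier_hz: float) -> float:
    """Invert the formula above: v = f_d * c / (2 * f0)."""
    return shift_hz * SPEED_OF_LIGHT / (2.0 * carrier_hz)


CARRIER_HZ = 77e9  # assumed carrier in the 76-81 GHz automotive band
shift = doppler_shift(10.0, CARRIER_HZ)  # target closing at 10 m/s
speed = radial_speed(shift, CARRIER_HZ)  # recovers the 10 m/s closing speed
```

At 77 GHz, a target closing at 10 m/s produces a shift of roughly 5.1 kHz. Only the radial component of motion is measured, so an object crossing perpendicular to the beam produces little or no shift.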

What is Sensor Fusion?

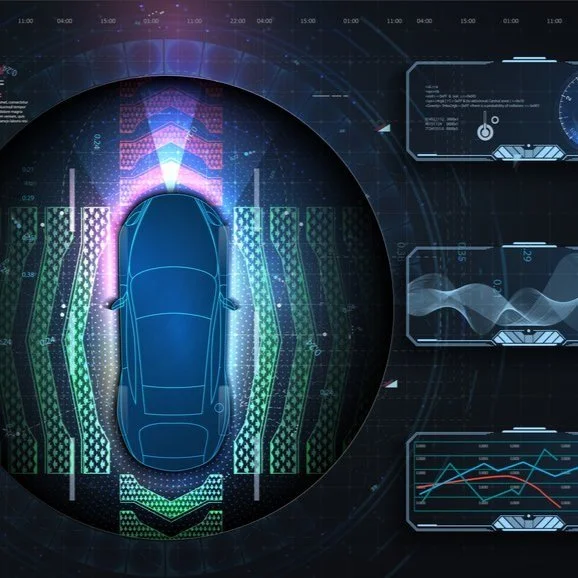

Sensor fusion is the process of combining inputs from radar, LiDAR, camera, and ultrasonic sensors to interpret the surroundings accurately. Because no single sensor can capture all the necessary information on its own, their outputs must be fused to provide the highest degree of safety in autonomous vehicles.
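To illustrate why fusing sensors helps, the sketch below uses inverse-variance weighting, one of the simplest ways to combine independent measurements of the same quantity. It is a swapped-in textbook technique, not a description of any production fusion stack, and the sensor readings and noise figures in the example are hypothetical.

```python
def fuse_estimates(measurements):
    """Inverse-variance weighted fusion of independent (value, variance) pairs.

    Each estimate is weighted by 1 / variance, so the more precise sensor
    dominates, and the fused variance is smaller than any single input's.
    """
    if not measurements:
        raise ValueError("need at least one measurement")
    weights = [1.0 / variance for _, variance in measurements]
    total = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, measurements)) / total
    return fused_value, 1.0 / total


# Hypothetical range readings to the same object: (metres, variance in m^2).
lidar = (20.0, 0.01)
radar = (20.5, 0.25)
value, variance = fuse_estimates([lidar, radar])
```

Here the fused range is about 20.02 m with a variance of about 0.0096 m², slightly tighter than the LiDAR reading alone. The rule assumes the errors are independent and unbiased; a miscalibrated sensor introduces a systematic bias that this weighting cannot remove.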

Sensor calibration is the foundational building block of every autonomous system and a prerequisite for implementing sensor fusion algorithms or techniques in autonomous driving. Calibration tells the AD system each sensor's position and orientation in real-world coordinates, determined by comparing the relative positions of known features as detected by the different sensors. Precise calibration is critical for sensor fusion and for the ML algorithms used in localization and mapping, object detection, parking assistance, and vehicle control.
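Calibration results are commonly expressed as an extrinsic rotation R and translation t that move points from one sensor's frame into another's, plus camera intrinsics (focal lengths fx, fy and principal point cx, cy) for projecting into the image. The sketch below applies both to map a LiDAR point onto a camera image, which is what lets fusion algorithms and annotators relate the two sensors. The frame conventions (LiDAR x-forward, y-left, z-up; camera x-right, y-down, z-forward) and all numeric values are assumptions for illustration.

```python
def transform(point, rotation, translation):
    """Extrinsic calibration: p_cam = R * p_lidar + t, with R as a 3x3 nested list."""
    return tuple(
        sum(rotation[i][k] * point[k] for k in range(3)) + translation[i]
        for i in range(3)
    )


def project(point_cam, fx, fy, cx, cy):
    """Pinhole projection of a camera-frame point (z pointing forward) to pixel (u, v)."""
    x, y, z = point_cam
    if z <= 0:
        return None  # behind the camera, so not visible in this image
    return (fx * x / z + cx, fy * y / z + cy)


# Assumed axes: LiDAR x-forward, y-left, z-up; camera x-right, y-down, z-forward.
R_LIDAR_TO_CAM = [
    [0.0, -1.0, 0.0],  # camera x = -LiDAR y
    [0.0, 0.0, -1.0],  # camera y = -LiDAR z
    [1.0, 0.0, 0.0],   # camera z =  LiDAR x
]
T_LIDAR_TO_CAM = (0.0, 0.1, 0.0)  # hypothetical offset in metres (camera frame)

pixel = project(transform((10.0, 0.0, 0.0), R_LIDAR_TO_CAM, T_LIDAR_TO_CAM),
                fx=1000.0, fy=1000.0, cx=640.0, cy=360.0)
```

If R or t is slightly wrong, projected LiDAR points drift off the objects they belong to in the image, which is why precise calibration matters for both fusion and cross-sensor annotation.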

How is Sensor Fusion Executed?

There are three major approaches to combining data from multiple sensors.

High-Level Fusion: In the HLF approach, each sensor performs object detection independently, and the resulting detections are then fused. HLF is best suited to scenes of relatively low complexity. When several obstacles overlap, HLF delivers inadequate information.

Low-Level Sensor Fusion: In the LLF approach, raw data from each sensor is fused at the lowest level of abstraction. This retains all of the information and can improve the accuracy of obstacle detection. LLF requires precise extrinsic calibration so that each sensor's measurements align accurately in a shared view of the surrounding environment. The sensor data must also be compensated for ego-motion (the vehicle's own movement), and the sensors must be calibrated temporally, that is, synchronized in time.

Mid-Level Fusion: Also known as feature-level fusion, MLF sits at an abstraction level between LLF and HLF. It fuses features extracted from the raw measurements of each sensor, such as color information from images or locations from radar and LiDAR, and then recognizes and classifies objects from the fused multi-sensor features. MLF is still considered insufficient for SAE Level 4 or Level 5 autonomy because of its limited sense of the surroundings and missing contextual information.
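High-level fusion can be sketched as associating object lists after each sensor has run its own detector. The example below uses greedy nearest-neighbour matching with a distance gate, one simple association strategy among many; more elaborate systems may use optimal assignment and tracking filters instead. The object tuple formats, the 2 m gate, and the naive position averaging are assumptions for illustration.

```python
import math


def associate(camera_objs, radar_objs, gate_m=2.0):
    """Greedy nearest-neighbour association of object-level detections.

    camera_objs: list of (x, y, label) from a camera detector.
    radar_objs:  list of (x, y, radial_speed) from a radar detector.
    Returns fused objects as (x, y, label, speed); unmatched objects are kept
    with speed None (camera-only) or label "unknown" (radar-only).
    """
    candidates = sorted(
        (math.dist(c[:2], r[:2]), i, j)
        for i, c in enumerate(camera_objs)
        for j, r in enumerate(radar_objs)
    )
    used_cam, used_rad, fused = set(), set(), []
    for distance, i, j in candidates:
        if distance > gate_m:
            break  # pairs are sorted, so every remaining pair is farther apart
        if i in used_cam or j in used_rad:
            continue  # each detection may be matched at most once
        used_cam.add(i)
        used_rad.add(j)
        (cx, cy, label), (rx, ry, speed) = camera_objs[i], radar_objs[j]
        # Naive merge: average the positions, take the class from the camera
        # and the speed from the radar.
        fused.append(((cx + rx) / 2, (cy + ry) / 2, label, speed))
    fused += [(x, y, lbl, None) for i, (x, y, lbl) in enumerate(camera_objs) if i not in used_cam]
    fused += [(x, y, "unknown", s) for j, (x, y, s) in enumerate(radar_objs) if j not in used_rad]
    return fused


camera = [(10.0, 0.0, "vehicle"), (5.0, 3.0, "pedestrian")]
radar = [(10.4, 0.2, -3.0), (30.0, -2.0, 0.5)]
objects = associate(camera, radar)
```

Because matching happens only between finished detections, two nearby objects that a detector has already merged into one cannot be separated again at this stage, which mirrors the HLF limitation with overlapping obstacles.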


How to Improve Operational Efficiency for Autonomous Vehicles Using Multi-Sensor Data Annotation

Utilizing multi-sensor data fusion from numerous detectors such as cameras, radar, LiDAR, and ultrasonic sensors improves the accuracy of environmental perception. Sensor fusion combines the information from all individual sensors into a comprehensive understanding of the surroundings. By integrating data from multiple sensors, AD systems can better detect road signals, assist automated parking, read road markings, and offer enhanced safety features such as emergency braking, collision warnings, and cross-traffic alerts.

3D Mapping and Localization allow self-driving cars to navigate the environment with high accuracy using point cloud data and map annotations. This level of localization accuracy lets autonomous vehicles detect whether lanes are forking or merging, so they can plan lane changes or determine lane paths. Localization provides the car's 3D position and 3D orientation within a high-definition (HD) map, along with the uncertainty of those estimates.
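Localization is what lets detections be placed on the map. Given the vehicle's estimated pose, a point observed in the vehicle frame can be transformed into map coordinates with a rotation and a translation. This sketch simplifies to a 2D pose (x, y, yaw) for brevity, whereas the text above describes full 3D position and orientation; the function name and example values are hypothetical.

```python
import math


def vehicle_to_map(point_xy, pose):
    """Transform a point from the vehicle frame into the map frame.

    pose = (x, y, yaw): the vehicle's map position and heading as estimated
    by localization. Simplified 2D pose for illustration; real localization
    estimates a full 3D pose.
    """
    px, py = point_xy
    x, y, yaw = pose
    c, s = math.cos(yaw), math.sin(yaw)
    # Rotate by the vehicle heading, then translate by the vehicle position.
    return (x + c * px - s * py, y + s * px + c * py)


# Vehicle at (100, 50) heading 90 degrees; object seen 10 m straight ahead.
obj_map = vehicle_to_map((10.0, 0.0), (100.0, 50.0, math.pi / 2))
```

In this example the object lands at (100, 60) in the map. Any error in the pose estimate moves every transformed point, which is why the localization uncertainty matters when matching detections against HD map annotations.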

Accurately Annotated Sensor Data allows ML models to detect and classify objects such as vehicles, obstacles, and pedestrians with better accuracy. Labeling LiDAR 3D point clouds and combining them with data from other sensors helps the car identify not just an object's shape and position but also its identity and likely intentions. This is essential in real-world situations, such as when a pedestrian is about to cross a street or an obstruction appears on the road.

Preprocessing Data to remove irrelevant information and noise from point clouds improves the overall performance and safety of the autonomous vehicle. Techniques like downsampling, filtering, and noise removal make the annotation process much more efficient. This step ensures that annotators see only the relevant data and features, which improves the accuracy of the final AD models.
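Each of the three preprocessing techniques named above can be sketched in a few lines of plain Python: a range crop (filtering), voxel-grid downsampling, and radius outlier removal (noise removal). These are generic, commonly used methods chosen for illustration, not a specific vendor pipeline. The thresholds are hypothetical and would need tuning per sensor, and the brute-force neighbour search is only suitable for small examples.

```python
import math
from collections import defaultdict


def crop_range(points, max_range_m):
    """Filtering: keep only returns within max_range_m of the sensor origin."""
    return [p for p in points if math.dist(p, (0.0, 0.0, 0.0)) <= max_range_m]


def voxel_downsample(points, voxel_m):
    """Downsampling: replace all points in each voxel_m-sized cube with their centroid."""
    buckets = defaultdict(list)
    for p in points:
        key = tuple(math.floor(coord / voxel_m) for coord in p)
        buckets[key].append(p)
    return [
        tuple(sum(axis) / len(group) for axis in zip(*group))
        for group in buckets.values()
    ]


def remove_isolated(points, radius_m, min_neighbors):
    """Noise removal: drop points with fewer than min_neighbors others within radius_m.

    O(n^2) brute force, for illustration only.
    """
    kept = []
    for i, p in enumerate(points):
        neighbors = sum(
            1 for j, q in enumerate(points) if j != i and math.dist(p, q) <= radius_m
        )
        if neighbors >= min_neighbors:
            kept.append(p)
    return kept


raw = [(0.01, 0.01, 0.0), (0.03, 0.05, 0.0), (1.0, 1.0, 1.0), (500.0, 0.0, 0.0)]
cleaned = voxel_downsample(crop_range(raw, max_range_m=100.0), voxel_m=0.1)
```

The order matters in practice: cropping and downsampling first shrink the cloud, which makes the more expensive neighbour-based noise removal cheaper.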

Conclusion

Autonomous vehicles rely on accurately annotated multi-sensor data to recognize objects, pedestrians, and road signs when perceiving real-world surroundings. The safety and reliability of AD systems depend on multi-sensor fusion, 3D mapping and localization, accurately annotated sensor data, and data preprocessing. Without accurately annotated multi-sensor data, the safety of autonomous driving cannot be assured. At Digital Divide Data, we offer ML data operations solutions specializing in computer vision, Gen-AI, and NLP for ADAS and autonomous driving.
