Mastering Data Annotation Techniques for Autonomous Driving: Key Types & Guidelines

By Umang Dayal

November 26, 2024

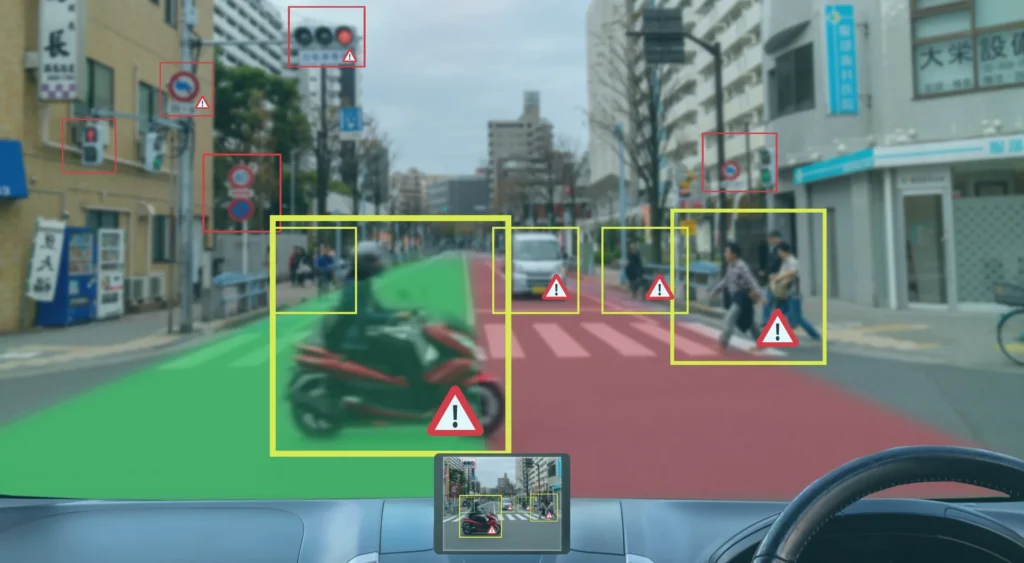

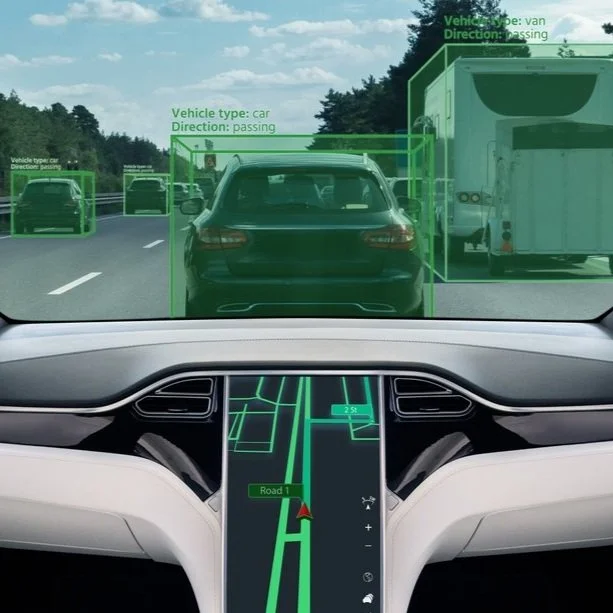

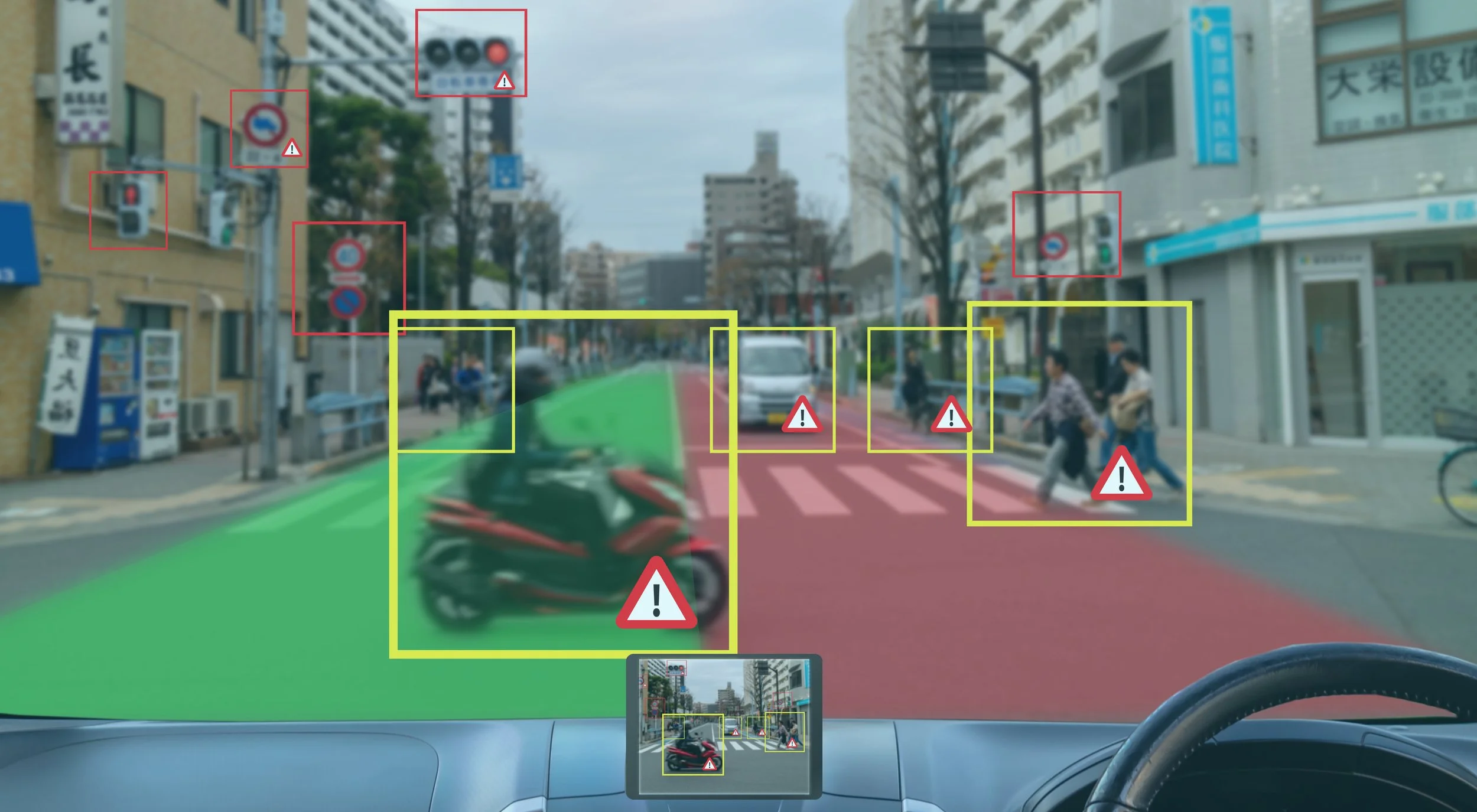

Autonomous driving is a revolutionary change in transportation, promising benefits such as improved road safety, reduced traffic, and shorter travel times. Self-driving cars use machine learning algorithms to sense their environment and make immediate decisions. This ability rests on data annotation: the process of adding labels to data, such as images, video, or sensor output, so that machine learning models can “see” and comprehend the world around them.

In this blog, we will dig deeper into the main types of data annotation for autonomous vehicles and the guidelines to follow for accurate results.

Why Is Data Annotation Crucial for Autonomous Vehicles?

Let’s say that you are driving a car on a busy street. You read road signs, predict the paths of pedestrians, and respond to the cars behind and in front of you, all within seconds. For a self-driving car, mimicking these human instincts involves processing huge quantities of data in real time. Annotated datasets are essential for training the algorithms behind capabilities such as the following.

- Detecting objects such as cars, pedestrians, and traffic lights.
- Interpreting scenarios, such as how objects interact with one another, for example a cyclist running a junction.
- Determining which path to follow and performing maneuvers based on detected obstacles and traffic flow.

Machine learning models need labeled data to learn these tasks, which is exactly why data annotation is considered critical for autonomous vehicles.

Autonomous Driving Annotation Techniques

Real-world environments are highly variable, so advanced driver-assistance systems (ADAS) require several types of annotation. Let’s discuss a few of them below.

2D Bounding Boxes

One of the most common annotation types is the bounding box: a rectangle drawn around an object of interest (a car, pedestrian, or animal) to show its location and dimensions in an image. It is used for detecting cars, bikes, and pedestrians and for recognizing traffic lights and signs.
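As a minimal sketch, a 2D box can be stored as a label plus pixel coordinates of two opposite corners, and two boxes can be compared with intersection over union (IoU), a common way to check how closely two annotators or an annotator and a model agree. The `Box2D` class and its field names are illustrative assumptions, not a specific tool's format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box2D:
    """Hypothetical 2D box annotation in pixel coordinates."""
    label: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def area(self) -> float:
        # Clamp at zero so an inverted box never reports negative area.
        return max(0.0, self.x_max - self.x_min) * max(0.0, self.y_max - self.y_min)


def iou(a: Box2D, b: Box2D) -> float:
    """Intersection over union of two boxes, between 0.0 and 1.0."""
    inter_w = min(a.x_max, b.x_max) - max(a.x_min, b.x_min)
    inter_h = min(a.y_max, b.y_max) - max(a.y_min, b.y_min)
    if inter_w <= 0 or inter_h <= 0:
        return 0.0  # boxes do not overlap
    intersection = inter_w * inter_h
    union = a.area() + b.area() - intersection
    return intersection / union if union > 0 else 0.0


# Two annotators label the same car slightly differently.
first = Box2D("car", 0, 0, 10, 10)
second = Box2D("car", 5, 0, 15, 10)
overlap = iou(first, second)
```

A review workflow could flag any pair of boxes whose IoU falls below a threshold chosen for the project.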

3D Bounding Boxes (Cuboids)

3D bounding boxes extend this idea to three dimensions, enclosing objects in a cuboid with depth, width, and height. They are particularly useful for depth perception, that is, judging the relative position of things in three-dimensional space. Typical uses are estimating the distance and size of other vehicles and building accurate spatial maps for navigation.
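One way to picture a cuboid annotation is as a center point, three dimensions, and a heading angle. The sketch below assumes a vehicle-centered frame with the z axis pointing up and rotation only around that axis; the `Cuboid` class and its field names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Cuboid:
    """Hypothetical 3D box: center, size in meters, yaw in radians."""
    label: str
    cx: float
    cy: float
    cz: float
    length: float
    width: float
    height: float
    yaw: float  # rotation around the vertical (z) axis

    def corners(self):
        """Return the 8 corner points as (x, y, z) tuples."""
        cos_y, sin_y = math.cos(self.yaw), math.sin(self.yaw)
        points = []
        for sx in (-0.5, 0.5):
            for sy in (-0.5, 0.5):
                for sz in (-0.5, 0.5):
                    # Offset in the box's own frame, then rotate by yaw.
                    lx, ly, lz = sx * self.length, sy * self.width, sz * self.height
                    points.append((
                        self.cx + cos_y * lx - sin_y * ly,
                        self.cy + sin_y * lx + cos_y * ly,
                        self.cz + lz,
                    ))
        return points

    def ground_distance(self) -> float:
        """Horizontal distance from the sensor origin to the box center."""
        return math.hypot(self.cx, self.cy)


lead_car = Cuboid("car", cx=3.0, cy=4.0, cz=0.8,
                  length=4.0, width=2.0, height=1.6, yaw=0.0)
```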

Polygon Annotation

Polygon annotation traces the outline of an object, capturing the accurate contours of irregular shapes. It is best suited to people, animals, and vegetation such as trees or bushes.
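A polygon annotation is essentially an ordered list of vertices. As a small illustration, the shoelace formula gives the area the outline encloses, which can help spot accidental or degenerate polygons. The function name and the vertex format are assumptions for this sketch.

```python
def polygon_area(vertices):
    """Area enclosed by a simple polygon given as [(x, y), ...] (shoelace formula)."""
    n = len(vertices)
    if n < 3:
        return 0.0  # a point or a line encloses no area
    twice_area = 0.0
    for i in range(n):
        x1, y1 = vertices[i]
        x2, y2 = vertices[(i + 1) % n]  # wrap around to close the outline
        twice_area += x1 * y2 - x2 * y1
    return abs(twice_area) / 2.0


# Illustrative outline of a bush in pixel coordinates.
bush_outline = [(0, 0), (4, 0), (0, 3)]
```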

Semantic Segmentation

Semantic segmentation assigns a class label to every pixel in an image, dividing it into meaningful regions. This pixel-level detail allows autonomous systems to tell a road surface apart from a sidewalk or any other object in the field of view. It is useful for detecting near and distant road boundaries and for differentiating between vehicles, pedestrians, and other objects.
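To make the idea concrete, a semantic mask can be thought of as a grid of class IDs, one per pixel. The sketch below uses a tiny hand-written mask and a hypothetical `CLASSES` table to compute what share of the image each class covers.

```python
from collections import Counter

# Hypothetical class table; real projects define their own taxonomy.
CLASSES = {0: "road", 1: "sidewalk", 2: "vehicle", 3: "pedestrian"}


def class_pixel_share(mask):
    """Return the fraction of pixels per class name for a 2D mask of class IDs."""
    counts = Counter(class_id for row in mask for class_id in row)
    total = sum(counts.values())
    return {CLASSES[class_id]: n / total for class_id, n in counts.items()}


# A 2 x 4 "image": every pixel carries exactly one class ID.
tiny_mask = [
    [0, 0, 1, 1],
    [0, 0, 2, 1],
]
shares = class_pixel_share(tiny_mask)
```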

Instance Segmentation

Instance segmentation combines semantic segmentation with object-level differentiation, so that models can distinguish individual objects of the same class and label them separately (e.g., two pedestrians or two cars). It is used for identifying individual road users in complex scenarios and for tracking or counting objects over time.
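The difference from semantic segmentation shows up in the data: each pixel carries an instance ID, and a separate table maps each instance to its class. The following sketch assumes ID 0 means background; the function and variable names are illustrative.

```python
from collections import Counter


def count_instances(instance_mask, instance_classes):
    """Count distinct objects per class in a 2D mask of instance IDs (0 = background)."""
    present = {iid for row in instance_mask for iid in row if iid != 0}
    return Counter(instance_classes[iid] for iid in present)


# Two pedestrians (IDs 1 and 2) and one car (ID 3).
mask = [
    [1, 1, 0, 2],
    [0, 3, 3, 2],
]
classes = {1: "pedestrian", 2: "pedestrian", 3: "car"}
per_class = count_instances(mask, classes)
```

A semantic mask of the same scene would only say "pedestrian" for both people; the instance IDs are what make counting possible.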

Line and Spline Annotation

Line and spline annotation marks linear elements such as lanes, road edges, or crosswalks. This is an essential technique for lane-keeping and path-planning systems. It is highly beneficial for lane departure warnings, automatic lane changes, and detecting road boundaries in both urban and rural settings.
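A lane line is often stored as a polyline, that is, an ordered list of points. As a rough sketch of how such an annotation could feed a lane departure check, the code below measures the shortest distance from a point to the polyline. The coordinates and function names are assumptions for illustration.

```python
import math


def point_to_segment(p, a, b):
    """Shortest distance from point p to the segment from a to b."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    dx, dy = bx - ax, by - ay
    seg_len_sq = dx * dx + dy * dy
    if seg_len_sq == 0:
        return math.hypot(px - ax, py - ay)  # a and b coincide
    # Project p onto the segment and clamp to its endpoints.
    t = ((px - ax) * dx + (py - ay) * dy) / seg_len_sq
    t = max(0.0, min(1.0, t))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))


def distance_to_polyline(p, polyline):
    """Shortest distance from p to any segment of the polyline."""
    return min(point_to_segment(p, a, b) for a, b in zip(polyline, polyline[1:]))


# Annotated lane boundary (meters) and a vehicle position next to it.
lane_boundary = [(0.0, 0.0), (0.0, 10.0), (5.0, 20.0)]
offset = distance_to_polyline((1.5, 5.0), lane_boundary)
```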

Key Point Annotation

Key point annotation marks the coordinates of particular points of interest on objects, for example, body landmarks on pedestrians or joints on cyclists. Annotation of this type is crucial for pose estimation. It is used for predicting the behavior of pedestrians and cyclists and for recognizing gestures from road users outside the vehicle.
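As a simple illustration of how key points support pose estimation, the angle at a joint can be computed from three annotated points. The key point names and pixel coordinates below are made up for the example.

```python
import math


def joint_angle(a, b, c):
    """Angle in degrees at point b, formed by the points a-b-c."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    n1, n2 = math.hypot(*v1), math.hypot(*v2)
    if n1 == 0 or n2 == 0:
        raise ValueError("key points must not coincide with the joint")
    cos_angle = (v1[0] * v2[0] + v1[1] * v2[1]) / (n1 * n2)
    # Clamp to guard against tiny floating point overshoot.
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_angle))))


# Hypothetical key points on a cyclist's leg, in pixel coordinates.
keypoints = {"hip": (0.0, 0.0), "knee": (0.0, 1.0), "ankle": (1.0, 1.0)}
knee_angle = joint_angle(keypoints["hip"], keypoints["knee"], keypoints["ankle"])
```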

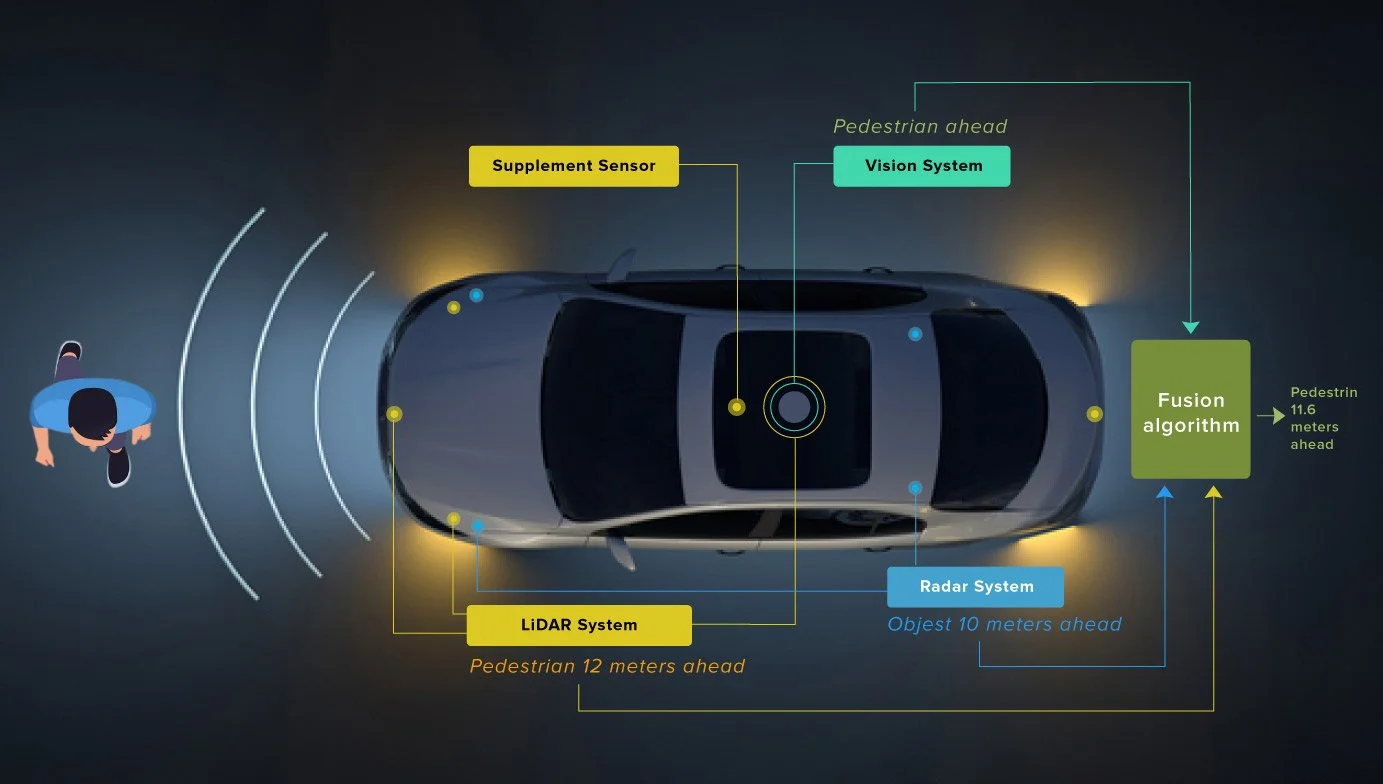

LiDAR and Radar Annotation

LiDAR and radar sensors produce point cloud data, which must be annotated with the objects it contains as well as their spatial properties. The depth information in point clouds is key to handling low-visibility surroundings. This annotation technique is highly beneficial for 3D mapping, obstacle avoidance, and navigating in fog, rain, or darkness.
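A common step when working with point cloud labels is deciding which points fall inside an annotated cuboid. The sketch below assumes points as (x, y, z) tuples in meters and a box described by center, size, and yaw around the vertical axis; all names are hypothetical.

```python
import math


def points_in_cuboid(points, center, size, yaw):
    """Return the points that lie inside a yaw-rotated 3D box."""
    cx, cy, cz = center
    length, width, height = size
    cos_y, sin_y = math.cos(yaw), math.sin(yaw)
    inside = []
    for x, y, z in points:
        dx, dy, dz = x - cx, y - cy, z - cz
        # Rotate the offset into the box's own frame (inverse of yaw).
        local_x = cos_y * dx + sin_y * dy
        local_y = -sin_y * dx + cos_y * dy
        if (abs(local_x) <= length / 2
                and abs(local_y) <= width / 2
                and abs(dz) <= height / 2):
            inside.append((x, y, z))
    return inside


# Four illustrative LiDAR returns and one annotated car.
cloud = [(10.0, 0.0, 0.5), (11.9, 0.9, 1.0), (13.0, 0.0, 0.5), (10.0, 0.0, 3.0)]
car_points = points_in_cuboid(cloud, center=(10.0, 0.0, 0.8),
                              size=(4.0, 2.0, 1.6), yaw=0.0)
```

A box that contains very few points may deserve a second look during review, since it could be misplaced or cover a heavily occluded object.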

Read more: The Critical Role of Data Annotation in Autonomous Vehicle Safety

Guidelines to Follow for Accurate Data Annotation

- Create standard protocols for annotation to ensure consistency.
- Make use of advanced tools for automation and collaboration.
- Ensure rigorous checks to eliminate errors and maintain quality.
- Provide appropriate training for annotators; make sure annotators know the specific role key point annotation plays for autonomous driving.
- Regularly refine the annotation methodology in line with model outcomes and the feedback provided.
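Some of these checks can be automated. As a minimal sketch, assuming each annotation is a dictionary with a `label` and a `bbox` of (x_min, y_min, x_max, y_max) in pixels, the function below flags labels outside an agreed taxonomy and boxes that are degenerate or fall outside the image. The label set and record format are illustrative assumptions, not a standard.

```python
# Hypothetical taxonomy agreed in the annotation protocol.
ALLOWED_LABELS = {"car", "pedestrian", "cyclist", "traffic_light", "traffic_sign"}


def validate_box(annotation, image_width, image_height):
    """Return a list of problems found in one box annotation (empty if none)."""
    problems = []
    if annotation.get("label") not in ALLOWED_LABELS:
        problems.append("unknown label")
    x_min, y_min, x_max, y_max = annotation["bbox"]
    if x_min >= x_max or y_min >= y_max:
        problems.append("degenerate box")
    if x_min < 0 or y_min < 0 or x_max > image_width or y_max > image_height:
        problems.append("box outside image")
    return problems


good = {"label": "car", "bbox": (10, 20, 110, 80)}
bad = {"label": "truck", "bbox": (-5, 20, 110, 80)}
good_report = validate_box(good, 1920, 1080)
bad_report = validate_box(bad, 1920, 1080)
```

Automated checks like this catch mechanical errors only; human review is still needed for judgment calls such as occlusion or ambiguous classes.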

How Can We Help?

We provide comprehensive data annotation services, trusted by Fortune 500 companies and pioneering mobility, ADAS, and autonomous driving innovators worldwide. Our human-in-the-loop approach helps you achieve the highest safety and performance from your AI/ML model training. We specialize in image, video, and LiDAR labeling and annotation, multi-sensor data fusion, mapping & localization, and digital twin validation.

As a leading data annotation and labeling company, we offer end-to-end support regardless of the scale of your project, with a guaranteed level of quality, a global workforce with 24 x 7 x 365 labeling capacity, and best-in-class SOC 2 Type 2 and ISO 27001 data security and confidentiality.

Conclusion

From bounding boxes to complex LiDAR point cloud annotations, each technique has its own purpose, enabling self-driving cars to navigate safely and efficiently through their surroundings. The annotation process comes with challenges, from scaling to quality assurance, but adopting annotation best practices and working with an experienced data annotation company can help your ADAS models deliver better results and build reliable autonomous systems.
