Democratizing Scenario Datasets for Autonomy

March 04, 2025

Developing safer, more reliable Autonomy and commercializing Autonomous Vehicles (AVs) requires rigorous testing and product validation. While real-world testing is indispensable, it is capital-intensive and does not scale to cover the full range of driving conditions and edge cases in the target Operational Design Domain (ODD). Over the years, AV companies have adopted several strategies to boost test coverage without incurring prohibitive costs. A few of these strategies are as follows:

- Simulation-First Testing – Validates AV software in a scalable, cost-effective, and risk-free virtual environment. Shifting testing left to catch known, predictable issues early provides a cost and time advantage over relying on real-world data.
- Edge Case & Adversarial Testing – Evaluates AV performance in rare, unpredictable, and high-risk situations, mostly in simulated environments (e.g., a pedestrian stepping out from occlusion in front of the AV).
- Closed-Course Structured Testing – Tests (verifies) AVs in the physical world, but with a controlled set of scenarios on test tracks, before public road deployment.
- Real-World Testing – Tests (validates) AV performance in an uncontrolled, real-world environment (public roads) to maximize the Autonomy stack’s exposure.

In this article, we will explore how a set of services built around Scenario Curation, Analysis, and Management can accelerate the AV product development lifecycle. Let’s dive in!

The Curse of Rarity

Diverse weather conditions (snowstorms, rain, low visibility, etc.), dense downtown areas with a high number of pedestrians, unprotected left turns, and occluded motorbikes: all of these are ODD conditions that can lead to an edge-case interaction between the AV and a real-world physical element. If the Autonomy model is developed and evaluated using scenarios that account for these edge cases after a thorough ODD analysis, then the remaining risk of a safety-critical incident can be narrowed largely to unknown unknowns. A scenario-based approach to training and evaluating Autonomy models can therefore deliver a safer AV without years of real-world testing effort.

The Cost of Developing a Safer AV

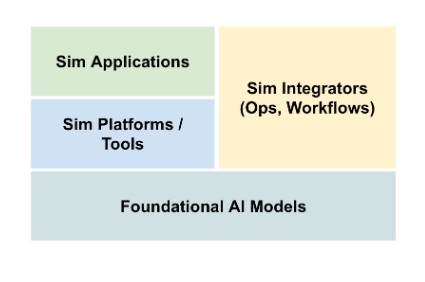

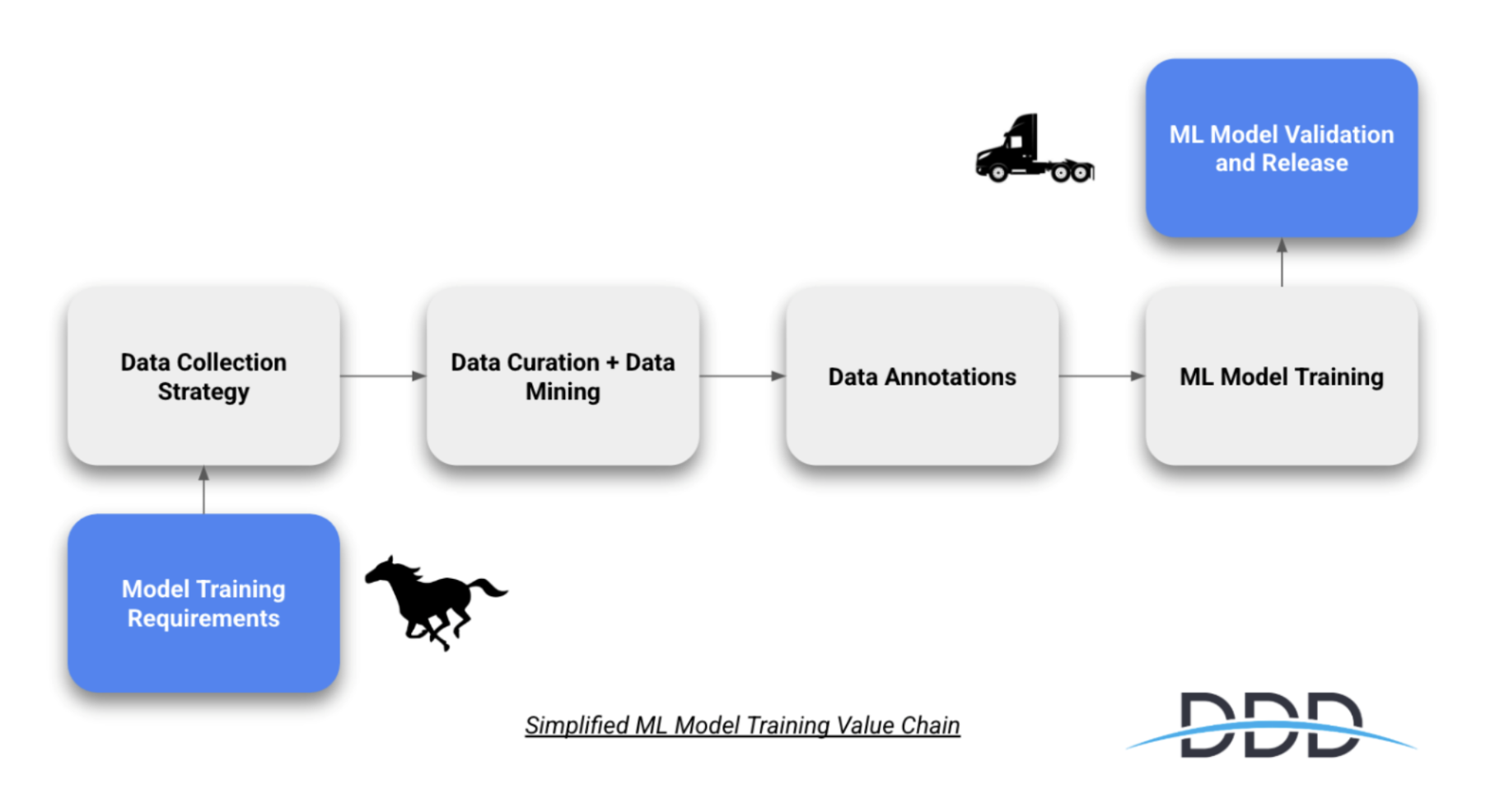

According to a news article published by The Information in 2020, the AV industry has cumulatively spent $16 billion on developing AVs. A significant share of this capital has gone to data collection, training, and performance evaluation. Bespoke scenario datasets can reduce these costs. The large, well-funded companies (Waymo, Cruise, Uber/Aurora, Baidu, etc.) have built their own infrastructure from the ground up to generate synthetic or hybrid scenarios. These companies, and many others in the space, have invested heavily in simulation infrastructure and scenario-based operations.

One might think that these large, well-funded companies have a first-mover advantage and that it is difficult for new entrants to catch up. At the very least, it is difficult to outspend the incumbents. However, with advancements in silicon chip design, computing power, and network speeds, we are at the cusp of a revolution in the use of simulation and synthetic scenarios. In the near future, we expect many of these platforms and data services to be available off the shelf, democratizing the adoption of scenario-based performance evaluation.

Recent Trends

The last few years have witnessed the launch of multiple foundational physical AI models. These models make it easy to construct scenarios on-demand and run Simulation engines for various performance evaluation use cases. A few prominent examples of technological advances include:

- NVIDIA has recently launched its Cosmos platform. Along with NVIDIA’s Scenario Editor, developers can now quickly build synthetic scenarios or generate new scenarios from existing ground truth data.
- Waabi’s UniSim is a neural closed-loop sensor simulator that can generate multiple scenarios from a single recorded log captured by a sensor-equipped vehicle. Such variations on a base scenario provide far better test coverage.
- PD Replica Sim by Parallel Domain allows AV companies to create simulation scenarios from their own captured data.
- Companies like Nexar are crowdsourcing automotive scenario generation and reconstruction using dash-cams or ADAS cameras on a fleet of millions of production vehicles.

Such platforms have lowered the initial barriers to entry and reduced the need for:

- Sourcing ground-truth ML data for training Autonomy models, which significantly lowers data collection costs
- Large-scale infrastructure setup for scenario-based simulated performance evaluation, which lowers engineering overhead

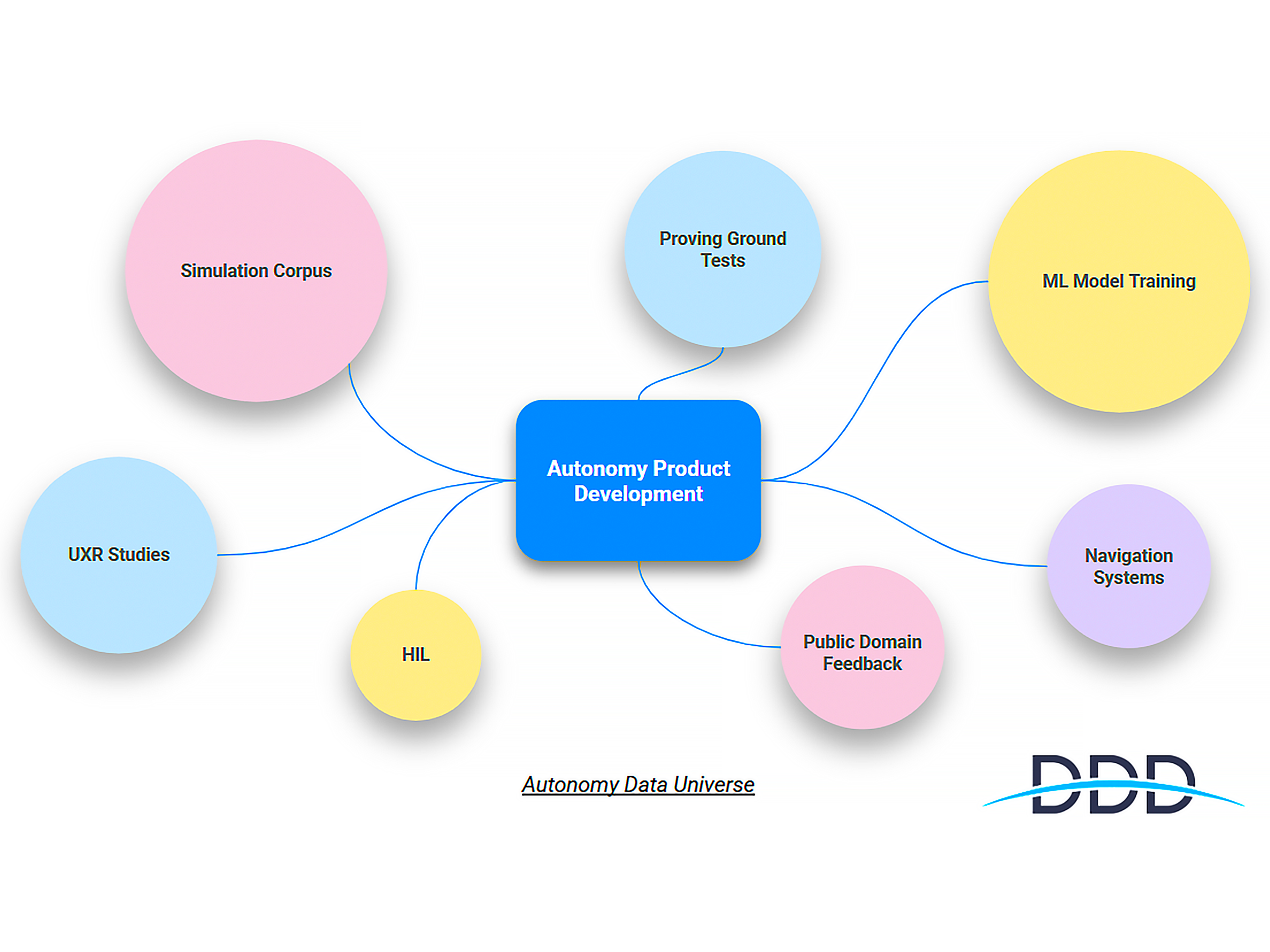

The current trend suggests that scenario management and simulation-based processes no longer need to be built from the ground up, as they did ~5 years ago. To take full advantage of the ecosystem, there is a need for a system integrator and service provider who can manage the lifecycle of scenario datasets, from scenario generation and management through edge case curation and analysis. At DDD, with deep expertise in AV Safety and Performance Analysis, we are well-equipped to take up this role as an important catalyst in the democratization of AV development and adoption.

Scenario Datasets Services

So far we have looked at the scenario-based approach to AV development: the problems it solves and how the recent technological trajectory makes it accessible to a wider set of stakeholders. In this section, we will focus on the services that can be built around Scenario Management and the applications they facilitate.

At a high level, the following services are the backbone of the solution suite:

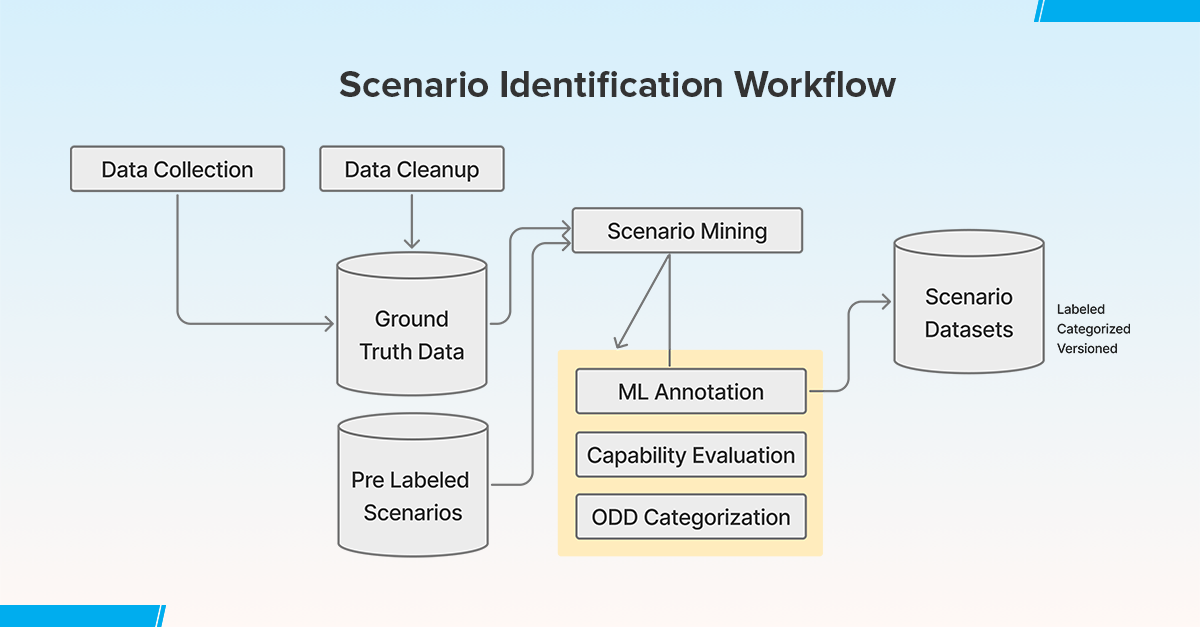

Scenario Identification: Real-world driving situations that the AV must handle are mined from collected data. The collected data is then reviewed to identify relevant scenarios using the ODD context, and for further labeling, performance analysis, or other taxonomic classification. The identified scenarios are categorized into normal (everyday) and edge-case (rare, high-risk) situations, considering factors such as weather, traffic density, road conditions, and unexpected obstacles. This library of categorized Scenario Datasets is versioned and kept ready for ML foundational models or analytical product development to build the prioritized Autonomy capability set. The process can be entirely manual or semi-automated, depending on factors such as downstream tech stack requirements.

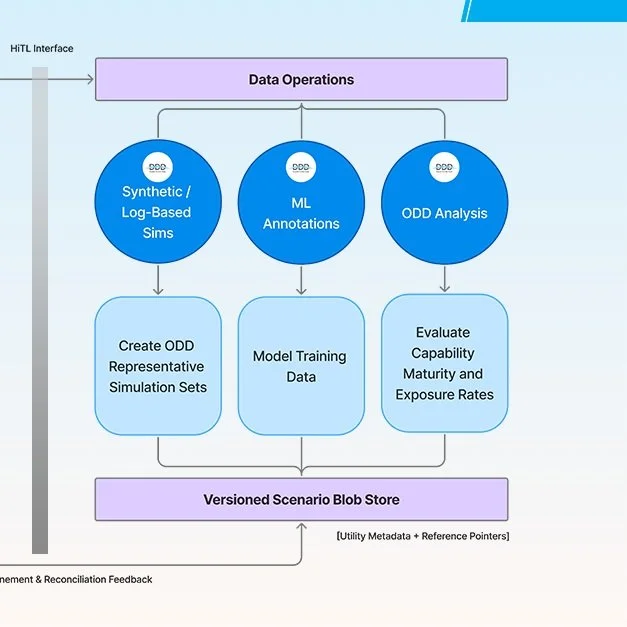

Fig 1: Scenario Identification Workflow
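The categorization step described above can be sketched in a few lines of Python. This is a minimal illustration, not DDD’s actual pipeline: the `Scenario` fields, the `ADVERSE_WEATHER` set, and the traffic-density threshold are all hypothetical assumptions, and a production workflow would likely combine rules like these with manual review.

```python
from dataclasses import dataclass

# All names and thresholds below are hypothetical, chosen only for illustration.
ADVERSE_WEATHER = {"snow", "fog", "heavy_rain"}
DENSE_TRAFFIC_VEHICLES_PER_KM = 80.0


@dataclass(frozen=True)
class Scenario:
    scenario_id: str
    weather: str                 # e.g. "clear", "rain", "snow", "fog"
    traffic_density: float       # vehicles per km of road (assumed unit)
    unexpected_obstacle: bool    # e.g. debris or an occluded road user
    in_odd: bool                 # True if the scenario falls inside the target ODD


def categorize(scenario: Scenario) -> str:
    """Return "edge_case" or "normal" for a scenario that is inside the ODD."""
    is_edge_case = (
        scenario.weather in ADVERSE_WEATHER
        or scenario.unexpected_obstacle
        or scenario.traffic_density >= DENSE_TRAFFIC_VEHICLES_PER_KM
    )
    return "edge_case" if is_edge_case else "normal"


def build_library(scenarios):
    """Drop out-of-ODD scenarios, then group the remaining IDs by category."""
    library = {"normal": [], "edge_case": []}
    for scenario in scenarios:
        if scenario.in_odd:
            library[categorize(scenario)].append(scenario.scenario_id)
    return library


mined = [
    Scenario("s1", "clear", 20.0, False, True),
    Scenario("s2", "snow", 15.0, False, True),
    Scenario("s3", "clear", 95.0, False, True),
    Scenario("s4", "fog", 10.0, True, False),   # outside the ODD, so dropped
]
library = build_library(mined)
print(library)
```

Keeping the ODD filter separate from the normal/edge-case rule mirrors the two-stage review in the text: relevance is decided first, category second.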

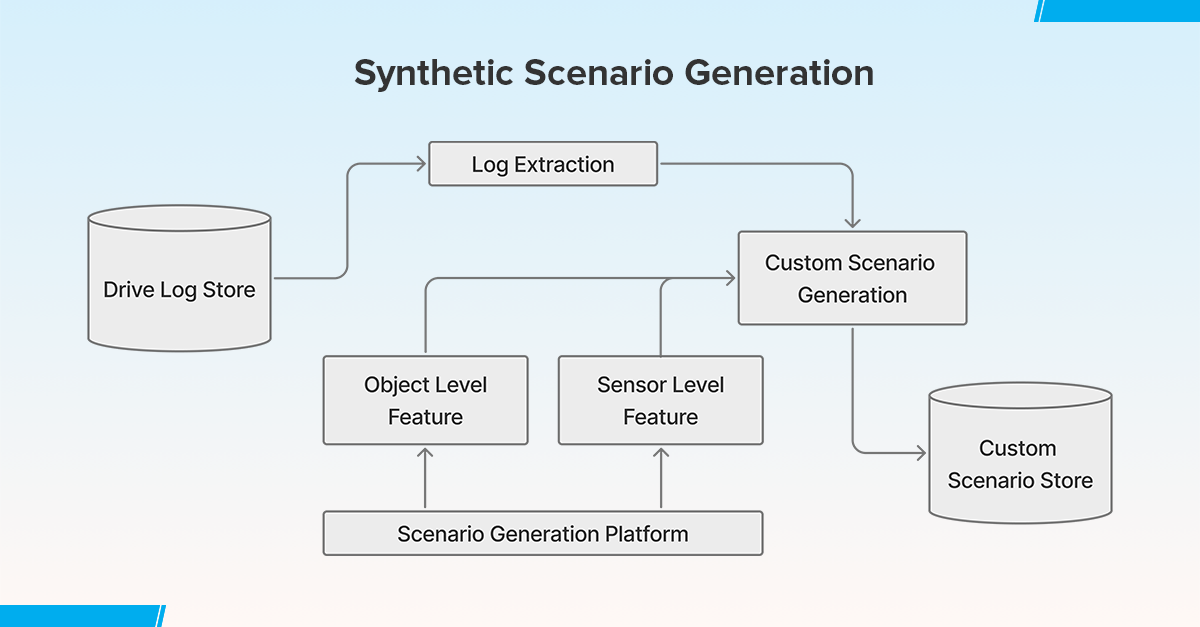

Synthetic Scenario Generation: Scenarios are created both synthetically and from real-world driving logs. Object-level and sensor-level scenarios are created with GUI tools and parameterized approaches. These synthetic or hybrid scenarios bridge the gap between real-world datasets and the rare edge cases an AV may encounter. They can be labeled and then used to train and validate new autonomy model versions, or to evaluate existing models against evolved requirements.

Fig 2: Synthetic Scenario Generation
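As a rough illustration of the parameterized approach, the sketch below expands one base scenario into a grid of variations. The scenario keys, parameter names, and values are hypothetical; real tools typically export to a scenario description format and sample parameters more selectively than an exhaustive grid.

```python
from itertools import product


def generate_variations(base, parameter_grid):
    """Return one scenario dict per combination of parameter values."""
    names = sorted(parameter_grid)
    variations = []
    for values in product(*(parameter_grid[name] for name in names)):
        variant = dict(base)                 # copy so the base stays untouched
        variant.update(zip(names, values))   # overlay this parameter combination
        variations.append(variant)
    return variations


# Hypothetical base scenario and parameter ranges.
base_scenario = {
    "type": "pedestrian_crossing_from_occlusion",
    "map": "urban_intersection",
}
parameter_grid = {
    "ego_speed_mps": [5.0, 10.0, 15.0],
    "pedestrian_speed_mps": [1.0, 2.0],
    "weather": ["clear", "rain"],
}

variants = generate_variations(base_scenario, parameter_grid)
print(len(variants))  # 3 * 2 * 2 = 12 variations
```

An exhaustive grid grows multiplicatively with each added parameter, so larger parameter spaces usually call for sampling or targeted search instead.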

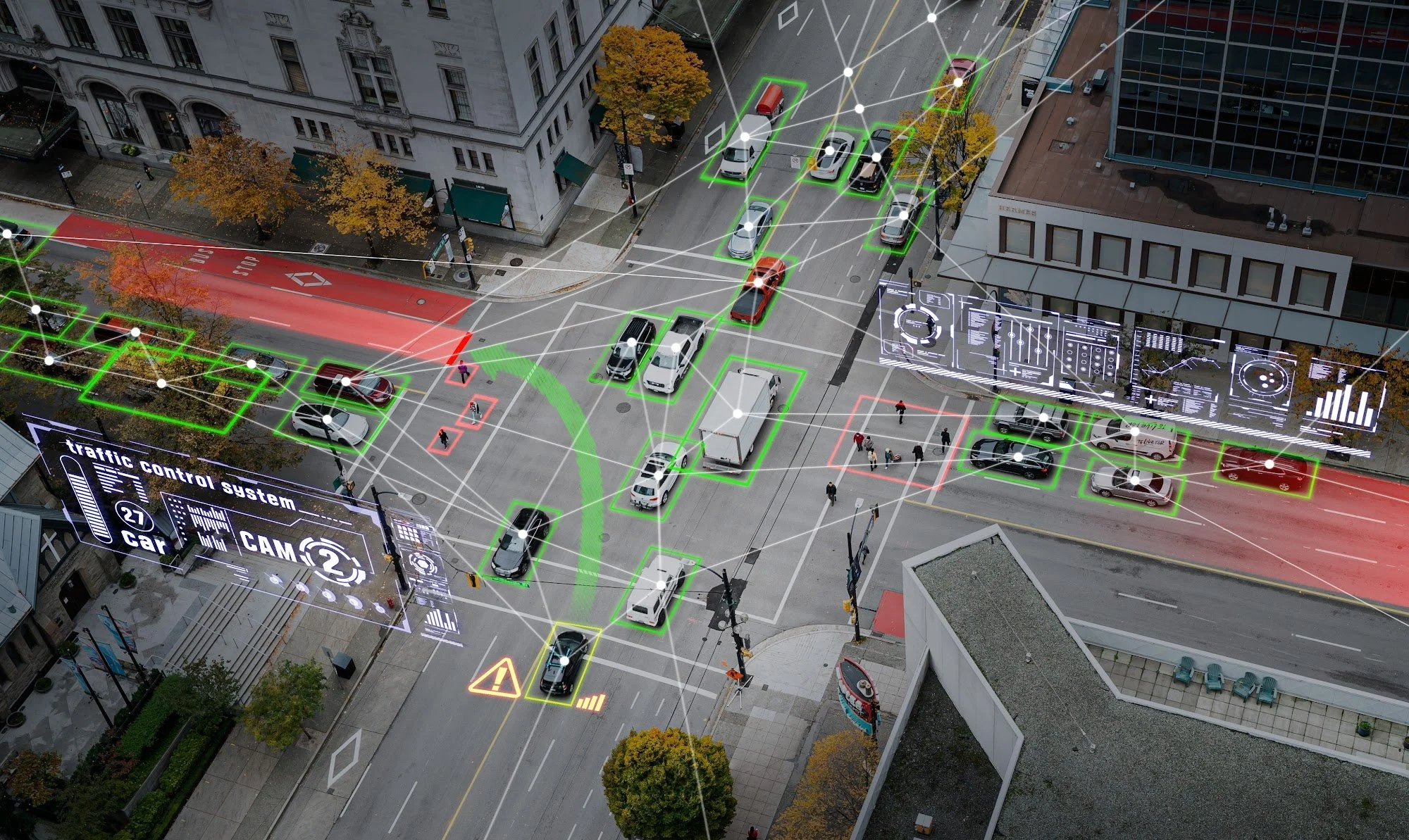

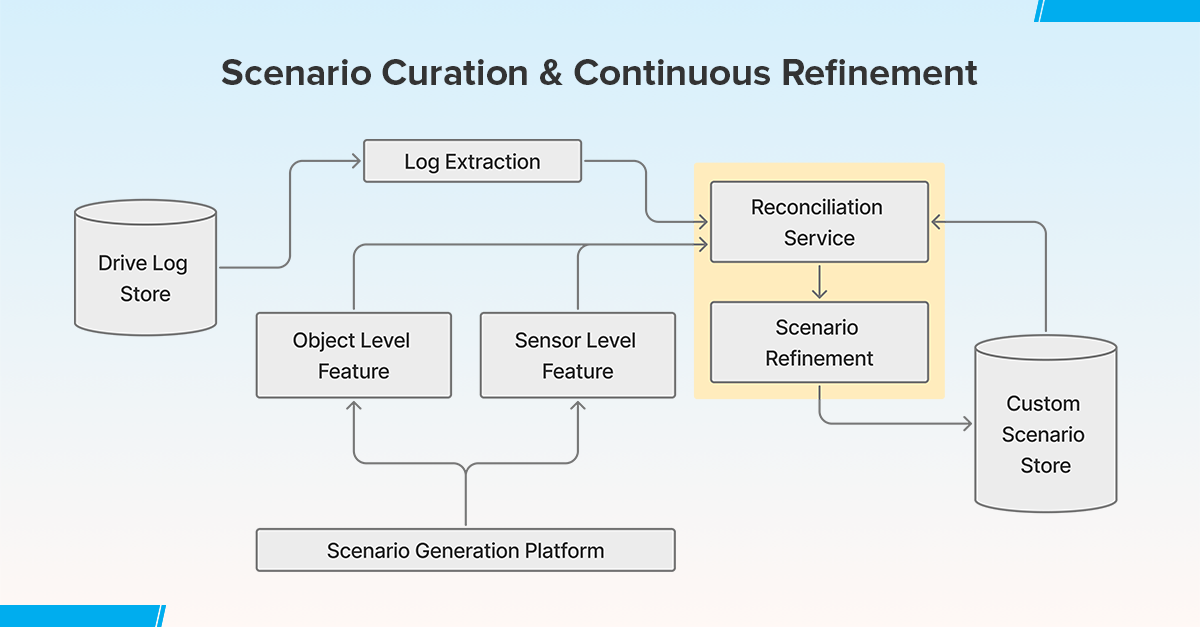

Curation & Continuous Refinement: To keep scenarios relevant throughout the AV development lifecycle, existing scenario datasets need to be updated to account for real-world events and changes to ground truth. Analyzing and fine-tuning scenarios helps isolate systemic defects, perform corrective and preventive action (CAPA) analysis, and implement changes for further training, testing, and validation. This is particularly useful at mature stages of product development.

Fig 3: Scenario Curation & Continuous Refinement

With the above services, we can develop a library of curated, categorized, and up-to-date Scenario Datasets which can then be utilised for various applications and use cases. We will discuss a few of the applications in the next section.

Key Applications of Scenario Dataset Services

1. Working with Edge Cases – Accelerating AV Development

A diverse curated Scenario Dataset library is particularly useful to address the challenge of data scarcity in edge-case scenarios, such as adverse weather conditions, temporary construction zones, low-visibility environments, etc. This approach enhances the availability of diverse and high-risk driving scenarios, which are often underrepresented in real-world datasets (Dosovitskiy et al., 2017; Sun et al., 2020). By integrating both real-world log data and synthetically generated scenarios, the dataset library enables comprehensive training, validation, and performance evaluation of autonomous vehicle (AV) systems.

Synthetic scenario generation, leveraging simulation platforms such as CARLA or LGSVL, provides scalable and repeatable test cases for rare but critical situations. Additionally, scenario augmentation techniques using generative models and domain adaptation enhance the robustness of AV perception systems (Sadeghi & Levine, 2017).

This methodology significantly accelerates the AV product development lifecycle by reducing the time required for data collection and annotation, thereby expediting training, validation, and performance evaluation cycles. Moreover, by continuously updating the dataset library with newly encountered real-world edge cases, the AV system can iteratively improve its decision-making capabilities.

2. Safety & Compliance in AVs

Scenario datasets play a crucial role in AV development to enhance safety and regulatory compliance, particularly in handling safety-critical incidents. These incidents often involve non-conforming and erratic vehicle behavior, vulnerable road users (VRUs), and unexpected road hazards, which require extensive dataset coverage to ensure robust AV decision-making.

By leveraging readily available high-fidelity scenario datasets, AV developers can systematically improve the detection of potentially harmful situations and develop response mechanisms, particularly for pedestrians, cyclists, emergency vehicles, and other unpredictable entities in urban and highway environments. Furthermore, scenario augmentation techniques using generative models and reinforcement learning facilitate the expansion of dataset variability to improve generalization across diverse ODDs.

From a compliance perspective, readily available scenario datasets increase test coverage and ensure alignment with regulatory frameworks such as ISO 26262 (functional safety), ISO 21448 (safety of the intended functionality, SOTIF), and NCAP assessment protocols. By integrating synthetic and real-world scenarios into safety validation pipelines, AV manufacturers can systematically address regulatory testing requirements, ensuring that AVs can safely operate under complex, high-risk driving conditions.

3. Global Expansion for AVs

The Scenario Dataset Library can be filtered by location to generate a region-specific dataset. This is highly useful for AV developers who want to expand to new locations (cities, regions, countries). Region-specific datasets (hybrid and synthetic) account for differences in road infrastructure, traffic laws, weather, and pedestrian behavior. Using them can substantially reduce the time and effort needed to fine-tune autonomy models for the ODDs relevant to the new location.
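A location filter over such a library might look like the sketch below. The record layout (`country`, `city`, `source` keys) is an assumption made for illustration; an actual library would more likely be queried through a database or metadata index.

```python
def filter_by_region(library, country, city=None):
    """Return scenarios tagged with the given country and, optionally, city."""
    return [
        scenario for scenario in library
        if scenario["country"] == country
        and (city is None or scenario["city"] == city)
    ]


# Hypothetical library records; "source" marks real, synthetic, or hybrid data.
scenario_library = [
    {"id": "s1", "country": "US", "city": "Phoenix", "source": "real"},
    {"id": "s2", "country": "DE", "city": "Munich", "source": "synthetic"},
    {"id": "s3", "country": "DE", "city": "Berlin", "source": "hybrid"},
]

germany = filter_by_region(scenario_library, "DE")
munich = filter_by_region(scenario_library, "DE", city="Munich")
print([s["id"] for s in germany], [s["id"] for s in munich])
```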

4. Collision & Near Collision Analysis

Retrospective identification of collisions and near collisions (near misses) is part of safety-critical event performance evaluation and is necessary for extracting crucial information on potential system failures. It is a systematic approach that combines problem dissection, root cause analysis, and hazard analysis to minimize the exposure of the autonomous system to similar situations. Existing scenario datasets can be used alongside logs of safety-critical incidents to analyze and identify root causes. The findings can then be used to reconstruct scenarios for relabeling and re-simulation to actively prevent recurrence. A library of datasets for safety-critical scenarios thus provides an accelerated mechanism for retrospective analysis of safety issues.
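The article does not say which metric is used to flag near collisions. One commonly used surrogate safety measure is time-to-collision (TTC): the gap to the lead object divided by the closing speed. The sketch below applies it to logged frames, assuming a simple car-following geometry; the frame fields and the 1.5-second threshold are hypothetical.

```python
import math


def time_to_collision(gap_m, ego_speed_mps, lead_speed_mps):
    """TTC in seconds; infinite when the ego vehicle is not closing the gap."""
    closing_speed = ego_speed_mps - lead_speed_mps
    if closing_speed <= 0:
        return math.inf
    return gap_m / closing_speed


def find_near_collisions(frames, ttc_threshold_s=1.5):
    """Return (timestamp, ttc) pairs for frames whose TTC is below the threshold."""
    flagged = []
    for frame in frames:
        ttc = time_to_collision(
            frame["gap_m"], frame["ego_speed_mps"], frame["lead_speed_mps"]
        )
        if ttc < ttc_threshold_s:
            flagged.append((frame["t"], ttc))
    return flagged


# Hypothetical log excerpt: the ego vehicle closes on a slower lead vehicle.
log_frames = [
    {"t": 0.0, "gap_m": 30.0, "ego_speed_mps": 15.0, "lead_speed_mps": 5.0},
    {"t": 1.0, "gap_m": 20.0, "ego_speed_mps": 15.0, "lead_speed_mps": 5.0},
    {"t": 2.0, "gap_m": 10.0, "ego_speed_mps": 15.0, "lead_speed_mps": 5.0},
    {"t": 3.0, "gap_m": 8.0, "ego_speed_mps": 5.0, "lead_speed_mps": 5.0},
]
print(find_near_collisions(log_frames))
```

Flagged timestamps can then serve as entry points for extracting the surrounding log segment and reconstructing it as a scenario for re-simulation.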

Conclusion

Scenario dataset services play an integral role in Autonomous Vehicle (AV) development, including model training, validation, and evaluation. By leveraging a library of high-fidelity datasets, developers can enhance AV performance, support regulatory compliance, and improve real-world safety outcomes. As the AV industry advances, the continued evolution of scenario dataset services, coupled with Machine Learning advancements and standardized validation frameworks, will be pivotal in shaping the future of safe and reliable Autonomous Mobility.

DDD’s Scenario Dataset Services can be used for such end-to-end applications and derivative use cases that will help AV developers expedite their product development and take it to market.

References:

- Riedmaier, S., et al. (2020). Scenario-Based Testing for Automated Driving. IEEE Transactions on Intelligent Vehicles.
- Kalra, N., & Paddock, S. M. (2016). Driving to Safety: How Many Miles of Driving Would It Take to Demonstrate Autonomous Vehicle Reliability? RAND.
- Simulated terrible drivers cut the time and cost of AV testing by a factor of one thousand
- Dosovitskiy, A., Ros, G., Codevilla, F., Lopez, A., & Koltun, V. (2017). CARLA: An open urban driving simulator. Conference on Robot Learning (CoRL).
- Rong, G., Zhao, H., Bian, J., Xia, Y., Zhao, Y., … & Li, K. (2020). LGSVL Simulator: A high-fidelity simulator for autonomous driving. arXiv preprint arXiv:2005.03778.
- Sadeghi, F., & Levine, S. (2017). CAD2RL: Real single-image flight without a single real image. Robotics: Science and Systems (RSS).
- Bansal, M., Krizhevsky, A., & Ogale, A. (2018). ChauffeurNet: Learning to drive by imitating the best and synthesizing the worst. NeurIPS.
- Philion, J., Kar, A., Lebedev, V., Kolve, E., Fidler, S., & Urtasun, R. (2020). Learning to evaluate perception models using planner-centric metrics. CVPR.
- Geiger, A., Lenz, P., Stiller, C., & Urtasun, R. (2013). Vision meets robotics: The KITTI dataset. International Journal of Robotics Research (IJRR).
- Richter, S. R., Vineet, V., Roth, S., & Koltun, V. (2017). Playing for data: Ground truth from computer games. IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI).
- Winner, H., Hakuli, S., Lotz, F., & Singer, C. (2017). Handbook of Driver Assistance Systems: Basic Information, Components and Systems for Active Safety and Comfort. Springer.
- Koopman, P., & Wagner, M. (2017). Autonomous vehicle safety: An interdisciplinary challenge. IEEE Intelligent Transportation Systems Magazine.
