Data Annotation Techniques in Training Autonomous Vehicles and Their Impact on AV Development

By Umang Dayal

October 28, 2024

When artificial intelligence (AI) was introduced to the public, many people associated it with autonomous driving. Whether it is a robot playing a soccer match or a smart car finding its path through heavy traffic, AI applications have no trouble attracting huge crowds. We live surrounded by constantly changing pixels and, as a result, we generate data at petabyte scale around the clock. The driving force behind autonomous driving technology is primarily safety, particularly fatality prevention: ML data operations support and accurate data annotation techniques go a long way toward preventing accidents on the roads.

In this blog, we will explore various data annotation techniques used in training autonomous vehicles and their impact on AV development.

What is Data Annotation?

Data annotation is essential for autonomous driving: it creates the structured training data that teaches AV systems to interpret real-world environments. Ensuring that all critical scenarios are captured accurately enhances AV safety and performance.

Autonomous driving development aims to build as much annotated training data as possible, data that can keep improving automatically through fleet learning and posterior learning, among other methods. An increasingly important part of the vision, however, is to guarantee that all relevant real-world traffic scenarios are simulated at some point. As a car’s onboard systems grow more powerful, collecting large amounts of annotated data to improve automated driving technology becomes feasible.

Key Techniques and Tools in Data Annotation

Data annotation takes a lot of time and effort, but it is an essential data pre-processing step, because algorithms work effectively only on reliable, noise-free data. Annotation methods and tools for autonomous driving include manual annotation, semi-automated annotation, and machine learning-based annotation.

Manual Annotation

Manual annotation is the human-driven process of generating annotations for data. It is slower than the other techniques, but it often yields accurate annotations that are valuable for training neural networks. It is used mainly by data annotation companies that rely on a human-in-the-loop process. The technique can be broken down into three segments.

Bounding box annotation

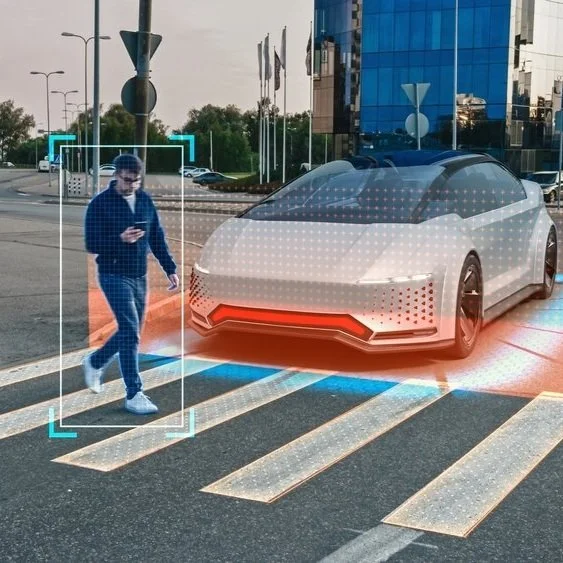

Bounding box annotation places rectangular labels around objects like vehicles, pedestrians, and road signs, helping AVs recognize and respond to obstacles and traffic patterns. This approach is easier than producing a classification and segmentation model, as the labor requirements are reduced.
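As a rough illustration of what a bounding box label can look like in code, here is a minimal sketch. The class name `BoundingBox`, its field names, and the sample coordinates are assumptions made for this example; real annotation tools define their own formats.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BoundingBox:
    """One rectangular label in pixel coordinates (origin at top-left).

    Hypothetical structure for illustration only.
    """
    label: str      # e.g. "vehicle", "pedestrian", "road_sign"
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def __post_init__(self):
        # Reject degenerate or inverted rectangles early.
        if self.x_max <= self.x_min or self.y_max <= self.y_min:
            raise ValueError("box must have positive width and height")

    @property
    def width(self) -> float:
        return self.x_max - self.x_min

    @property
    def height(self) -> float:
        return self.y_max - self.y_min

    def area(self) -> float:
        return self.width * self.height

    def to_xywh(self):
        """Return (x, y, width, height), another common box layout."""
        return (self.x_min, self.y_min, self.width, self.height)


# Made-up annotations for a single camera frame.
frame_annotations = [
    BoundingBox("vehicle", 120, 200, 360, 380),
    BoundingBox("pedestrian", 400, 210, 450, 350),
]
```

Validating each box when it is created is one simple way to catch annotation mistakes, such as swapped corners, before they reach training.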

Data Classification

Data classification categorizes objects such as cars, pedestrians, and road markings, allowing AVs to differentiate between elements in dynamic traffic environments. Common annotation categories for a classification model are vehicles, pedestrians, and others. The corresponding labels are typically “car” for the vehicle class, “person” for the pedestrian class, and “no object” for everything else.
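A classification label set like the one above is usually mapped to integer IDs before training. The sketch below shows one way this might be done; the names `CLASS_NAMES`, `encode_labels`, and `class_distribution` are hypothetical, and the ID order is an arbitrary choice.

```python
from collections import Counter

# Hypothetical label set, using the three names mentioned above.
CLASS_NAMES = ("car", "person", "no object")
CLASS_TO_ID = {name: idx for idx, name in enumerate(CLASS_NAMES)}


def encode_labels(labels):
    """Map annotator-supplied class names to integer IDs.

    Raises ValueError for names outside the agreed label set, so typos
    such as "cars" are caught before they reach training.
    """
    ids = []
    for name in labels:
        if name not in CLASS_TO_ID:
            raise ValueError(f"unknown label: {name!r}")
        ids.append(CLASS_TO_ID[name])
    return ids


def class_distribution(labels):
    """Count how often each class appears, including classes with zero hits."""
    counts = Counter(labels)
    return {name: counts.get(name, 0) for name in CLASS_NAMES}


# Made-up labels for five image crops.
raw = ["car", "person", "car", "no object", "car"]
encoded = encode_labels(raw)
distribution = class_distribution(raw)
```

Checking the class distribution is a quick way to spot an imbalanced dataset, for example one with far more "car" labels than "person" labels.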

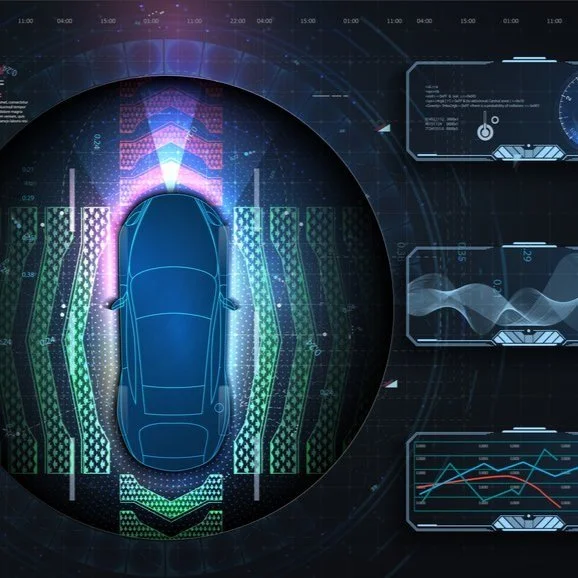

Data Segmentation

The segmentation model focuses on annotating the parts of the scene that require specific processing. This contrasts with the bounding box model, which only annotates generic elements of the scene. The annotated data is segmented into ground, road, obstacles, route, and road boundaries. Each segment is unique and carries a labeled ID that is fed into the training system of the segmentation model.
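To make the idea of per-segment IDs concrete, the sketch below stores a segmentation mask as a grid in which every pixel holds the ID of its segment. The ID values, the tiny 4x6 mask, and the helper `pixels_per_segment` are invented for illustration and do not come from any particular dataset.

```python
# Hypothetical class IDs for the five segments named above.
SEGMENT_IDS = {
    "ground": 0,
    "road": 1,
    "obstacle": 2,
    "route": 3,
    "road_boundary": 4,
}

# A tiny 4x6 "image": each cell holds the class ID of that pixel.
mask = [
    [0, 0, 4, 1, 1, 4],
    [0, 4, 1, 1, 1, 4],
    [4, 1, 3, 3, 2, 4],
    [4, 1, 3, 3, 1, 4],
]


def pixels_per_segment(mask):
    """Count how many pixels were assigned to each segment."""
    id_to_name = {class_id: name for name, class_id in SEGMENT_IDS.items()}
    counts = {name: 0 for name in SEGMENT_IDS}
    for row in mask:
        for class_id in row:
            counts[id_to_name[class_id]] += 1
    return counts


segment_counts = pixels_per_segment(mask)
```

Unlike a bounding box, a mask like this labels every pixel, which is why segmentation is typically more labor-intensive to annotate.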

Each of these areas has its distinctive value and is used differently within the training of autonomous vehicles. Because data must be labeled to be useful for training, these manual annotations are converted into training data and fed directly into the deep learning systems behind advanced driver-assistance systems (ADAS).

Semi-Automated Annotation

Most of the widely used and commercially available annotation approaches still rely heavily on human expertise. In terms of temporal modes of processing, there are three different approaches:

- Proactive
- Reactive
- Interactive

In proactive approaches, human expertise is needed at the beginning to train the systems. In reactive or interactive approaches, the software requests feedback in uncertain cases or skips elements that it cannot handle. This human involvement is especially crucial in autonomous driving, and in general, because image analysis has limitations in diverse environments. In this context, the human makes decisions based on onboard systems, with switches between manual control and automatic control.
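One simple way to picture the reactive pattern, in which software asks for feedback only in uncertain cases, is a confidence threshold that routes low-confidence predictions to a human reviewer. The sketch below is an assumption-based illustration: the `Prediction` class, the `route_predictions` function, and the 0.8 threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    item_id: str
    label: str
    confidence: float  # model score in [0, 1]


def route_predictions(predictions, threshold=0.8):
    """Split predictions into auto-accepted and needs-human-review lists.

    The threshold is an illustrative value, not a recommendation.
    """
    auto_accepted, needs_review = [], []
    for pred in predictions:
        if pred.confidence >= threshold:
            auto_accepted.append(pred)
        else:
            needs_review.append(pred)
    return auto_accepted, needs_review


# Made-up model outputs for three frames.
batch = [
    Prediction("frame_001", "car", 0.97),
    Prediction("frame_002", "person", 0.55),
    Prediction("frame_003", "car", 0.80),
]
accepted, review_queue = route_predictions(batch)
```

In practice the threshold would be tuned against the cost of a wrong label versus the cost of a human review.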

Semi-automated annotation, which combines human skill with the power of machines, is the most common way to carry out the annotation task. In computer vision, this mixed type of processing is valuable because it pairs the vendor’s expertise in creating AI tools with each company’s unique knowledge of its use case. In highly complex solutions, where the use case cannot be solved with computer vision tools alone, custom algorithms are created, which requires the expertise of data scientists and the rebuilding of certain models from scratch.

Machine Learning-Based Annotation

Machine learning-based annotation uses predictive models to handle vast data volumes, improving scalability and accuracy in AV training datasets. An automatic machine learning-based annotation system can recognize and correct mistakes made under human supervision, returning a refined prediction. The human expert can still accept this prediction or submit an entirely new annotation. Semi-automatic machine learning annotation projects often rely on human annotators at first and, once enough trained outputs are available, start to predict a certain percentage of the data automatically.
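The accept-or-replace step described above can be sketched as follows. The function names `resolve_annotation` and `acceptance_rate` are hypothetical, and using `None` to mean "the reviewer accepted the model's proposal" is a convention assumed for this example.

```python
def resolve_annotation(model_label, human_label=None):
    """Return (final_label, accepted).

    human_label is None when the reviewer accepts the model's proposal;
    otherwise it holds the label the reviewer submitted instead.
    """
    if human_label is None or human_label == model_label:
        return model_label, True
    return human_label, False


def acceptance_rate(reviews):
    """Fraction of model proposals accepted without changes.

    reviews is a list of (model_label, human_label_or_None) pairs.
    """
    if not reviews:
        return 0.0
    accepted = sum(1 for model, human in reviews
                   if resolve_annotation(model, human)[1])
    return accepted / len(reviews)


# Made-up review log: three proposals accepted, one overridden.
review_log = [
    ("car", None),
    ("person", "person"),
    ("car", "person"),
    ("no object", None),
]
rate = acceptance_rate(review_log)
```

A rising acceptance rate could serve as one signal that a larger share of the data can be handed to the model, though the right cutoff depends on the project.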

Because predictive modeling for autonomous driving is itself built primarily on machine learning, machine learning-based annotation may come close to automating much of the annotation work behind self-driving engineering. It is therefore no surprise that researchers are studying its potential. Machine learning is already firmly established in the development of artificial intelligence solutions and can support large-scale data annotation to a certain extent.

Impact on Autonomous Driving Development

When developing autonomous driving and driver-assistance technology, well-labeled data is of paramount importance. The labeled data in a dataset provides reference data points, or ground truths, for the complex process of machine learning. Labeling refers to the act of attaching labels to data, such as drawing bounding boxes in an image or tracking the position of a pedestrian as they move across a scene. This annotated data can greatly improve the accuracy of a model and the effectiveness of the technology you are developing. The performance of an autonomous vehicle or driver-assistance system is only as good as the data used to train it.

Enhanced Training Data Quality

Annotating data plays a key role in building self-driving systems. A large number of labeled training examples helps a model perceive more complex real-world scenes. Image annotation aids autonomous vehicles by providing reliable reference information on object features, including obstacles, roads, and traffic signals. Training a model for object detection, localization, and recognition requires labeled datasets. Such a model receives images as input and generates a hypothesis about the contents of each image in the form of a label or a probability. The model’s predictions are then compared with the actual labeled objects to measure how closely they agree.
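The article does not name a specific metric for comparing predictions with labeled objects. For bounding boxes, one widely used choice is Intersection over Union (IoU), shown here as an illustrative sketch. The box layout `(x_min, y_min, x_max, y_max)` and the sample coordinates are assumptions.

```python
def iou(box_a, box_b):
    """Intersection over Union for boxes given as (x_min, y_min, x_max, y_max).

    Returns a value in [0, 1]: 0 for no overlap, 1 for identical boxes.
    """
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Width and height of the overlapping region (zero if the boxes are disjoint).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    intersection = inter_w * inter_h

    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    union = area_a + area_b - intersection

    return intersection / union if union > 0 else 0.0


# Made-up example: a human-drawn box and a model prediction shifted to the right.
ground_truth = (0, 0, 10, 10)
predicted = (5, 0, 15, 10)
score = iou(ground_truth, predicted)
```

A detection is often counted as correct when its IoU with a ground-truth box exceeds a chosen cutoff; the cutoff itself is a project decision.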

Data Volume

Labeled data not only defines individual instances but also allows algorithms to ignore information about the rest of the frame. This results in smarter algorithms and fewer false positives. As in face detection, providing an object recognizer with the coordinates of the objects of interest can achieve the same improvement with half the training data.

Variability

Automatically annotated or synthesized data is only as good as the data the annotating model was trained on: any mistakes or patterns in the original data will be learned by the model. Labeled data can be used to focus learning on hard positive cases rather than easy negative cases. This feature is essential when the negative data is small. Because the learning patterns are adjusted, the model can focus on the boundary regions that matter most for classification, providing much better localization and classification results.

Response

Attention shifts to the region of actual interest, so that more resources are dedicated to this region and fewer to redundant data. Object recognition algorithms trained on annotated data outperform standard object recognition. Highly localized models, as opposed to standard big-rectangle models, perform better when accuracy needs to be improved.

Improved Model Performance

The performance of computer vision and deep learning-based algorithms improves with the quantity and quality of data. Because autonomous driving relies on such models and algorithms, the role of data annotation professionals is critical. Data labeling services are typically sought in a hierarchical manner for low-, mid-, and high-level annotations such as 2D bounding boxes, 3D bounding boxes, semantic maps, lane markers, and instance segmentation masks. Data annotation takes data from the real domain and turns it into a form that machines and their algorithms can work with. The annotators provide ground truth information about the data they label, which guides learning processes in real-world applications.

Final Thoughts

Annotated data cannot be used effectively without an established understanding of deep learning, of manual feature-engineering and deployment techniques, or at least of the latest annotation tools used in existing production systems. If the available tools are to be applied to collected data, one should stay informed and maintain expertise in more than one tool.

The rapid advancement in machine learning and deep learning algorithms has been accompanied by a rapid increase in the volume of annotated data. The efficacy of these algorithms in improving performance can no longer be denied. Scalability of annotation services is no longer optional; it is critical. Organizations that generate data for deep learning algorithms may therefore need to process large volumes of data, and it can be challenging for new organizations to scale their data annotation tasks.

Once the requirements for generating a project’s data have been established, an organization has to ensure that the data is annotated with a high level of accuracy and precision. The level of feature analysis required for annotation may be rigorous or straightforward. Rigorous feature analysis may be required where behavior, actions, and object detection are critical, in use cases such as traffic simulation and autonomous driving scenarios. Ensuring quality, defining processes, and building systems and tools for annotation are therefore key to generating such datasets.

As an expert data labeling and annotation company, we provide reliable and expert data annotation services to support AV innovation. Connect with us to learn more about our autonomous vehicle solutions.