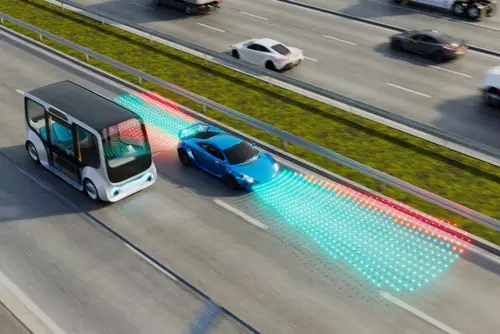

A single autonomous vehicle perceiving the world through its own sensors has hard limits on what it can see and how far ahead it can respond. A vehicle approaching a blind intersection cannot detect a pedestrian stepping off the kerb until they come into sensor range. A vehicle following a truck cannot see the road conditions or sudden braking of vehicles further ahead in the queue. These are not sensor hardware problems that better LiDAR or cameras can solve. They are geometry problems. The information the vehicle needs exists, but it cannot reach the vehicle through on-board sensing alone.

Vehicle-to-Everything communication, known as V2X, addresses this directly. It enables vehicles to exchange position, speed, and hazard information with other vehicles, with road infrastructure, with pedestrians carrying compatible devices, and with network systems that aggregate traffic data. The result is a perception picture that extends beyond what any individual vehicle can see. For AI safety systems, this expanded awareness opens new possibilities for collision avoidance, intersection management, and vulnerable road user protection. But those systems need training data that reflects how V2X communication actually behaves: with latency, packet loss, variable signal quality, and the full messiness of real network conditions.

This blog examines what V2X is, how it extends the perception capabilities of autonomous vehicles, and what the training data requirements for V2X-enabled AI safety systems look like. ADAS data services and multisensor fusion data services are the two annotation capabilities most relevant to programs building V2X-integrated perception models.

Key Takeaways

- V2X extends vehicle perception beyond the limits of on-board sensing by sharing data between vehicles, infrastructure, and road users. AI safety systems trained on V2X data can respond to hazards before they enter sensor range.

- The main V2X communication types are V2V (vehicle-to-vehicle), V2I (vehicle-to-infrastructure), and V2P (vehicle-to-pedestrian). Each carries different data types and has different latency and reliability characteristics that training data must reflect.

- Training AI safety systems on V2X data requires annotated examples of communication degradation scenarios, including latency, packet loss, and signal dropout, not just clean, ideal-condition data.

- V2X data is fundamentally multi-agent: the model needs to learn from interactions between multiple communicating road users simultaneously, which requires training data with synchronized multi-agent annotations rather than single-vehicle perspectives.

- The most significant V2X training data gap is coverage of vulnerable road users. Pedestrians, cyclists, and e-scooter riders are the hardest to protect and the most underrepresented in existing V2X datasets.

What V2X Is and How It Works

The Communication Modes

V2X is an umbrella term covering several specific communication modes. Vehicle-to-Vehicle communication lets nearby vehicles share their position, speed, heading, and brake status in real time, giving each vehicle visibility of what other vehicles around it are doing even when direct sensor contact is blocked. Vehicle-to-Infrastructure communication connects vehicles to roadside units at intersections, highway gantries, and traffic signal controllers, enabling the vehicle to receive information about signal timing, road conditions, and hazards ahead. Vehicle-to-Pedestrian communication allows vehicles to detect and receive data from smartphones or wearable devices carried by pedestrians and cyclists, extending protection to road users who would otherwise only appear in the vehicle’s sensor field when physically close.

DSRC and C-V2X: The Two Protocol Families

V2X communication operates primarily through two technology families. Dedicated Short-Range Communications (DSRC) is based on IEEE 802.11p, an amendment to the Wi-Fi standard. It has been deployed in research programs for over a decade and operates without network infrastructure, enabling direct vehicle-to-vehicle communication. Cellular V2X (C-V2X), standardized by 3GPP, supports two paths. Devices can communicate directly with each other over the PC5 sidelink, which also works without network coverage, or they can communicate through the mobile network over the Uu interface, where C-V2X benefits from the coverage and capacity of 4G and 5G infrastructure. Research on C-V2X by Wang et al. (2025) reports that cellular V2X achieves substantially lower latency than DSRC in high-traffic scenarios. This matters for safety applications, where milliseconds determine whether a collision avoidance maneuver is possible. The two protocol families differ in latency, reliability, and behavior under channel congestion, so training data for V2X AI systems needs to reflect the protocol environment in which the deployed system will operate.

What V2X Data Actually Contains

Basic Safety Messages

The fundamental V2X data unit is the Basic Safety Message (BSM). Each vehicle broadcasts this small packet, which contains its current position, speed, heading, acceleration, and brake status. These messages are typically transmitted around ten times per second, so receiving vehicles have a continuously updated picture of their immediate V2X-connected environment. For an AI safety system, the training signal in this data is the relationship between these message streams and the safety-relevant events that follow. For example, the vehicle that was braking hard two seconds ago is now stopped across the lane, and the vehicle merging from the right was signaling a lane change in its messages thirty metres before it appeared in sensor range.
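The following minimal Python sketch shows a simplified BSM record and a single derived signal. It does not reproduce the SAE J2735 encoding used on the air. The class name, the field names, the flat local x/y frame (real BSMs carry latitude and longitude), and the hard-braking threshold are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BasicSafetyMessage:
    """Hypothetical, simplified BSM record; not the on-air SAE J2735 encoding."""
    sender_id: str       # temporary sender identifier
    tx_time_s: float     # transmission timestamp, seconds
    x_m: float           # east position in an assumed shared local frame, metres
    y_m: float           # north position in the same frame, metres
    speed_mps: float     # speed, metres per second
    heading_deg: float   # 0 = north, increasing clockwise
    accel_mps2: float    # longitudinal acceleration, m/s^2 (negative = slowing)
    brake_active: bool   # brake status flag


def is_hard_braking(msg: BasicSafetyMessage, threshold_mps2: float = -4.0) -> bool:
    """Flag a message as hard braking; the -4 m/s^2 threshold is an illustrative assumption."""
    return msg.brake_active and msg.accel_mps2 <= threshold_mps2


# A short 10 Hz stream from one lead vehicle that starts braking hard.
stream = [
    BasicSafetyMessage("lead-1", 0.0, 0.0, 40.0, 20.0, 0.0, 0.0, False),
    BasicSafetyMessage("lead-1", 0.1, 0.0, 42.0, 20.0, 0.0, -1.0, True),
    BasicSafetyMessage("lead-1", 0.2, 0.0, 43.9, 19.4, 0.0, -6.0, True),
]
print([is_hard_braking(m) for m in stream])  # [False, False, True]
```

Features like this flag do not replace learned patterns. They show how raw message fields can become inputs whose relationship to later events a model is trained on.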

Basic Safety Messages look simple, but annotating them for training purposes is not. The model needs to learn which message patterns are predictive of hazardous events. That requires training data in which the message sequences leading up to incidents are labeled with the outcomes they preceded. There are two ways to build this. The first is real-world incident data with V2X logs, which is scarce and difficult to collect safely. The second is simulated scenarios in which communication and incident data are generated together and ground truth is available by design.
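One way to produce such labels is to slide a fixed window over a message stream and mark each window with any incident that occurs within a short horizon after the window ends. The sketch below takes that approach under stated assumptions. The function name, the 2-second window, the 0.5-second stride, the 3-second horizon, and the label vocabulary are hypothetical choices, not an established labeling scheme.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Incident:
    time_s: float   # when the safety-relevant event happened
    label: str      # hypothetical label vocabulary, e.g. "hard_brake_stop"


def label_windows(
    msg_times: List[float],
    incidents: List[Incident],
    window_s: float = 2.0,
    stride_s: float = 0.5,
    horizon_s: float = 3.0,
) -> List[Tuple[float, float, str]]:
    """Return (window_start, window_end, label) for each full window over the stream.

    A window is labeled with the earliest incident occurring in
    (window_end, window_end + horizon_s]; otherwise it is labeled "none".
    """
    if not msg_times:
        return []
    first, last = min(msg_times), max(msg_times)
    ordered = sorted(incidents, key=lambda inc: inc.time_s)
    windows = []
    k = 0
    while True:
        start = first + k * stride_s   # multiply rather than accumulate to limit float drift
        end = start + window_s
        if end > last + 1e-9:          # stop once a window would extend past the data
            break
        label = next(
            (inc.label for inc in ordered if end < inc.time_s <= end + horizon_s),
            "none",
        )
        windows.append((start, end, label))
        k += 1
    return windows


# 10 s of messages at 10 Hz with one incident at t = 6.0 s.
times = [i / 10 for i in range(101)]
windows = label_windows(times, [Incident(6.0, "hard_brake_stop")])
positives = [w for w in windows if w[2] == "hard_brake_stop"]
print(len(windows), len(positives))  # 17 6
```

The horizon determines how far ahead the model is asked to anticipate. With simulated data, the incident times are known exactly, and this is what makes the ground truth available "by design".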

Infrastructure and Intersection Data

Vehicle-to-Infrastructure messages carry different information from V2V messages. Signal Phase and Timing (SPaT) data tells the vehicle which phase each signal is in, how long that phase has been running, and when it is expected to change. This lets the AI plan deceleration or acceleration well before the intersection rather than reacting to the visual signal at close range. Road hazard alerts from infrastructure sensors can notify approaching vehicles of accidents, debris, or poor surface conditions ahead of where on-board sensing would detect them. Speed recommendation messages can improve fuel efficiency and reduce stop-start behavior at signalized intersections. Training AI systems to use this infrastructure data requires annotated examples of how vehicles should respond to each message type under different conditions, including traffic density, vehicle speed, and the reliability of the infrastructure signal itself. HD map annotation services support the static scene representation that V2I-enabled AI systems use as the spatial context within which dynamic V2X messages are interpreted.
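The rule-based sketch below is a minimal illustration of how signal phase and timing (SPaT) data can drive an early response. It first asks whether the vehicle can clear the stop line at its current speed before the green phase ends. If it cannot, it asks whether the vehicle can stop comfortably. The function name, the constant-speed and constant-deceleration assumptions, and the 3 m/s² comfort threshold are all illustrative. A baseline like this could seed reference labels for how vehicles should respond, but it is not a deployed control policy.

```python
def spat_advisory(distance_m: float, speed_mps: float, green_remaining_s: float,
                  comfort_decel_mps2: float = 3.0) -> str:
    """Hypothetical advisory from the time left in the current green phase.

    Returns "proceed", "decelerate_to_stop", or "dilemma_zone" (neither clearing
    the line in time nor stopping comfortably is possible under these assumptions).
    """
    if distance_m <= 0:
        raise ValueError("vehicle is already past the stop line")
    # At constant speed, does the vehicle reach the stop line before green ends?
    if speed_mps * green_remaining_s >= distance_m:
        return "proceed"
    # Constant deceleration needed to stop exactly at the line: v^2 / (2 d).
    required_decel = speed_mps ** 2 / (2.0 * distance_m)
    if required_decel <= comfort_decel_mps2:
        return "decelerate_to_stop"
    return "dilemma_zone"


print(spat_advisory(50.0, 15.0, 4.0))   # proceed
print(spat_advisory(100.0, 15.0, 2.0))  # decelerate_to_stop
print(spat_advisory(20.0, 20.0, 0.5))   # dilemma_zone
```

The second case shows the benefit the paragraph describes. At 100 metres, the required deceleration is only about 1.1 m/s², so the vehicle can respond gently long before the signal would be visually relevant.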

The Training Data Challenge: Communication Imperfection

Why Clean Data Is Not Enough

A common error in V2X training data programs is building datasets from ideal communication conditions: perfect message delivery, no latency, no packet loss, and consistent signal quality. Models trained on this data learn to make decisions assuming the V2X feed is reliable. In real deployment, it is not. Dense radio frequency congestion in urban environments causes packet collisions. High vehicle density overwhelms channel capacity. Building obstructions and terrain features create coverage shadows. Network handover events in cellular V2X create brief communication gaps, and these can coincide with the moments when continuous data is most needed.

A model that has never been trained on degraded V2X conditions will fail unpredictably when communication quality drops in deployment. Training data needs to include scenarios where messages arrive late, where packets are missing, where the V2X feed disagrees with on-board sensor data, and where the model needs to fall back on sensor-only perception because V2X has dropped out entirely. The role of multisensor fusion data in Physical AI examines how V2X fits into the broader sensor fusion architecture and why the training data for V2X-integrated perception needs to cover the full range of communication quality rather than just the ideal case.
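A minimal way to generate degraded examples from clean logs is to corrupt a message stream with packet loss, random latency, and a full outage window. Each surviving message keeps its true latency as an annotation. The sketch below rests on two simplifying assumptions: packet loss is independent, and latency is Gaussian with the parameter values shown. Real channel loss is often bursty. The function and field names are hypothetical.

```python
import random
from typing import Dict, List, Optional, Tuple

Message = Tuple[float, Dict[str, object]]  # (transmit time in seconds, payload fields)


def degrade_stream(
    msgs: List[Message],
    loss_prob: float = 0.1,
    latency_mean_s: float = 0.05,
    latency_jitter_s: float = 0.03,
    dropout: Optional[Tuple[float, float]] = None,
    seed: int = 0,
) -> List[Dict[str, object]]:
    """Simulate reception of a clean message stream under imperfect communication.

    - Messages sent inside the dropout window [start, end) are all lost (outage).
    - Every other message is dropped independently with probability loss_prob.
    - Survivors get Gaussian latency (clipped at zero) and keep it as a label.
    """
    rng = random.Random(seed)  # seeded so the degraded dataset is reproducible
    received = []
    for tx_time, payload in msgs:
        if dropout is not None and dropout[0] <= tx_time < dropout[1]:
            continue  # coverage shadow or handover gap: nothing gets through
        if rng.random() < loss_prob:
            continue  # isolated packet loss
        latency = max(0.0, rng.gauss(latency_mean_s, latency_jitter_s))
        received.append({**payload, "tx_time_s": tx_time,
                         "rx_time_s": tx_time + latency, "latency_s": latency})
    received.sort(key=lambda r: r["rx_time_s"])  # jitter can reorder arrivals
    return received


clean = [(i / 10, {"seq": i}) for i in range(100)]
degraded = degrade_stream(clean, loss_prob=0.1, dropout=(2.0, 3.0), seed=7)
print(f"{len(degraded)} of {len(clean)} messages received")
```

Sweeping loss_prob, the latency parameters, and the outage window produces the range from ideal to degraded that the training set needs to span. The outage cases are the ones where the model must fall back on sensor-only perception.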

Latency Annotation

Latency is a specific communication degradation that needs explicit annotation in V2X training data. When a vehicle receives a Basic Safety Message that was transmitted 200 milliseconds ago, the sender’s position in the message is already stale. How stale depends on the sender’s speed. A vehicle traveling at 100 kilometres per hour (about 27.8 metres per second) moves about 5.6 metres in 200 milliseconds. A model that treats a delayed V2X message as current will act on a position that is no longer correct. To teach the model to account for latency, each training example needs three annotations: the time difference between message transmission and receipt, the sender’s speed, and the resulting position uncertainty. This level of temporal annotation is not present in most existing V2X datasets.
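The staleness arithmetic in this paragraph, and the per-message latency annotation it describes, can be sketched as follows. The function names, the compass heading convention, the constant-acceleration extrapolation, and the 3 m/s² bound on unmodelled acceleration used for the uncertainty radius are all assumptions for illustration.

```python
import math
from typing import Dict


def travelled_distance_m(speed_mps: float, accel_mps2: float, dt_s: float) -> float:
    """Distance covered in dt_s at constant acceleration, stopping rather than reversing."""
    if accel_mps2 < 0 and speed_mps + accel_mps2 * dt_s < 0:
        return speed_mps ** 2 / (-2.0 * accel_mps2)  # sender comes to rest within dt_s
    return speed_mps * dt_s + 0.5 * accel_mps2 * dt_s ** 2


def annotate_latency(
    tx_time_s: float, rx_time_s: float,
    x_m: float, y_m: float,
    speed_mps: float, heading_deg: float,
    accel_mps2: float = 0.0,
    accel_bound_mps2: float = 3.0,
) -> Dict[str, float]:
    """Latency label for one received message: age, staleness, extrapolated position, uncertainty."""
    age_s = rx_time_s - tx_time_s
    if age_s < 0:
        raise ValueError("receive time precedes transmit time; check clock synchronization")
    dist = travelled_distance_m(speed_mps, accel_mps2, age_s)
    heading = math.radians(heading_deg)  # assumed: 0 deg = north (+y), clockwise positive
    return {
        "age_s": age_s,
        "staleness_m": dist,
        "x_pred_m": x_m + dist * math.sin(heading),
        "y_pred_m": y_m + dist * math.cos(heading),
        # Unmodelled acceleration up to accel_bound can add 0.5 * a * t^2 of error.
        "uncertainty_m": 0.5 * accel_bound_mps2 * age_s ** 2,
    }


# 100 km/h sender heading east, message received 200 ms after transmission.
label = annotate_latency(10.0, 10.2, 0.0, 0.0, 100 / 3.6, 90.0)
print(round(label["staleness_m"], 2))  # 5.56
```

Storing the age, staleness, and uncertainty alongside each message lets a model learn how much to trust a report rather than treating every message as current.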

V2P: The Underserved Vulnerable Road User Problem

Why Pedestrians Are the Hard Case

Vehicle-to-Pedestrian communication is technically the most challenging V2X mode and the one with the greatest safety relevance. Pedestrians are the road users most likely to be killed in a collision with a vehicle. They are also the hardest to detect through V2X. Pedestrians typically carry smartphones rather than dedicated V2X hardware, so their communication is less reliable. Their movement is also less predictable than that of vehicles following defined lanes and trajectories, which makes position prediction harder.

The gap in V2P training data is severe. Most V2X datasets focus on vehicle-to-vehicle and vehicle-to-infrastructure scenarios. Pedestrian V2X scenarios are underrepresented, partly because collecting real-world pedestrian V2X data requires pedestrian participants with compatible devices in traffic environments, which raises both practical and ethical data collection challenges. This data gap means that AI safety systems trained on available V2X datasets are typically much weaker at pedestrian protection than at vehicle hazard avoidance, which is the opposite of where the safety benefit is greatest. ADAS data services that specifically address vulnerable road user annotation are addressing this gap directly, building training datasets that give V2P perception models the coverage of pedestrian and cyclist scenarios they currently lack.
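Closing a gap like this starts with measuring it. The minimal sketch below compares actual representation against target representation. It assumes that each scenario in a dataset is tagged with its primary road user class and that a program has chosen target shares per class. The function name, the tags, and the target shares are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable


def coverage_gaps(scenario_classes: Iterable[str],
                  target_share: Dict[str, float]) -> Dict[str, float]:
    """Actual share minus target share per class; negative values mean underrepresented."""
    counts = Counter(scenario_classes)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("dataset contains no scenarios")
    return {cls: counts.get(cls, 0) / total - share for cls, share in target_share.items()}


# Hypothetical dataset tags and hypothetical target shares.
tags = ["vehicle"] * 90 + ["pedestrian"] * 6 + ["cyclist"] * 4
targets = {"vehicle": 0.60, "pedestrian": 0.25, "cyclist": 0.15}
for cls, gap in sorted(coverage_gaps(tags, targets).items(), key=lambda kv: kv[1]):
    print(f"{cls:<11}{gap:+.2f}")
```

Sorting by gap surfaces the most underrepresented classes first. This gives a concrete target for how many additional pedestrian and cyclist scenarios to collect or simulate.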

Multi-Agent Annotation: The Defining Data Requirement

Why V2X Training Data Cannot Be Single-Vehicle

V2X data is inherently multi-agent. A vehicle does not just receive messages from one other vehicle. It receives messages from dozens of surrounding vehicles simultaneously, from roadside infrastructure, and potentially from pedestrians. The safety-relevant signals are often relational: the vehicle in front is braking while the vehicle to the right is accelerating, and there is a pedestrian message originating from a position that will intersect the vehicle’s path in three seconds. No individual vehicle’s data stream contains that safety picture. Only the combined, synchronized data from all communicating participants does.
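The relational signal in that example can be made concrete with a closest-approach calculation over every reporting agent. This sketch assumes that all agents’ positions and velocities are already expressed in one shared local frame, and it extrapolates each agent at constant velocity. The class and function names, the 5-second horizon, and the 2-metre conflict radius are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class AgentState:
    agent_id: str
    kind: str          # e.g. "vehicle" or "pedestrian"
    x_m: float         # position in a shared local frame, metres
    y_m: float
    vx_mps: float      # velocity components, metres per second
    vy_mps: float


def closest_approach(ego: AgentState, other: AgentState) -> Tuple[float, float]:
    """Time (s, >= 0) and distance (m) of closest approach under constant velocity."""
    rx, ry = other.x_m - ego.x_m, other.y_m - ego.y_m
    vx, vy = other.vx_mps - ego.vx_mps, other.vy_mps - ego.vy_mps
    v_sq = vx * vx + vy * vy
    # Minimise |r + v t| over t >= 0; with no relative motion the gap never changes.
    t = 0.0 if v_sq == 0.0 else max(0.0, -(rx * vx + ry * vy) / v_sq)
    return t, math.hypot(rx + vx * t, ry + vy * t)


def find_conflicts(ego: AgentState, others: List[AgentState],
                   horizon_s: float = 5.0,
                   radius_m: float = 2.0) -> List[Tuple[str, float, float]]:
    """Agents predicted to come within radius_m of the ego vehicle within horizon_s."""
    conflicts = []
    for other in others:
        t, d = closest_approach(ego, other)
        if t <= horizon_s and d <= radius_m:
            conflicts.append((other.agent_id, round(t, 2), round(d, 2)))
    return conflicts


ego = AgentState("ego", "vehicle", 0.0, 0.0, 0.0, 10.0)       # heading north at 10 m/s
lead = AgentState("veh_2", "vehicle", 0.0, 20.0, 0.0, 10.0)   # same speed, 20 m ahead
ped = AgentState("ped_1", "pedestrian", 3.0, 30.0, -1.0, 0.0) # walking west across the road
print(find_conflicts(ego, [lead, ped]))  # [('ped_1', 3.0, 0.0)]
```

No single stream produces that result. The pedestrian’s V2P report and the ego vehicle’s own state have to be combined before the three-second conflict appears.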

Training data for V2X AI systems, therefore, needs multi-agent annotation: synchronized logs from all communicating participants in a scenario, labeled to show how the combined data stream should inform a safety decision. This is a fundamentally different annotation task from single-vehicle perception annotation, and it requires data collection infrastructure, annotation workflows, and quality assurance processes designed for multi-agent scenarios. Sensor fusion explained describes how multi-source data streams are architecturally combined in perception systems, providing the framework within which V2X multi-agent annotation sits.
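The sketch below shows what a multi-agent annotation record might look like. One scenario holds time-aligned frames, each with an entry for every participant. An explicit None marks a participant whose message was lost or late. The record also carries a scene-level decision label and the agents that drive that decision. All class names, fields, and label values are hypothetical. The validate method illustrates the kind of structural check a quality assurance step could run.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class AgentObservation:
    source: str        # which stream reported it, e.g. "v2x" or "onboard"
    x_m: float         # position in the scenario's shared frame, metres
    y_m: float
    speed_mps: float


@dataclass
class SyncedFrame:
    t_s: float                                     # common reference timestamp
    agents: Dict[str, Optional[AgentObservation]]  # None = participant present, no data this frame


@dataclass
class ScenarioAnnotation:
    scenario_id: str
    participants: List[str]
    frames: List[SyncedFrame] = field(default_factory=list)
    decision_label: str = "none"                   # hypothetical, e.g. "brake_for_pedestrian"
    key_agents: List[str] = field(default_factory=list)

    def validate(self) -> List[str]:
        """Return human-readable problems; an empty list means the record is structurally complete."""
        problems = []
        times = [f.t_s for f in self.frames]
        if times != sorted(times):
            problems.append("frames are not in time order")
        for f in self.frames:
            missing = set(self.participants) - set(f.agents)
            if missing:
                problems.append(f"t={f.t_s:.2f}s: no entry for {sorted(missing)}")
        for agent in self.key_agents:
            if agent not in self.participants:
                problems.append(f"key agent {agent!r} is not a participant")
        return problems


scenario = ScenarioAnnotation(
    scenario_id="demo-001",
    participants=["ego", "veh_2", "ped_1"],
    frames=[
        SyncedFrame(0.0, {"ego": AgentObservation("onboard", 0.0, 0.0, 10.0),
                          "veh_2": AgentObservation("v2x", 0.0, 20.0, 10.0),
                          "ped_1": None}),   # pedestrian message lost this frame
        SyncedFrame(0.1, {"ego": AgentObservation("onboard", 0.0, 1.0, 10.0),
                          "veh_2": AgentObservation("v2x", 0.0, 21.0, 10.0)}),
    ],
    decision_label="brake_for_pedestrian",
    key_agents=["ped_1"],
)
print(scenario.validate())  # ["t=0.10s: no entry for ['ped_1']"]
```

Distinguishing "no message received" (None) from "participant silently omitted" (a missing key) matters here. The first is a legitimate training condition, while the second is an annotation defect.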

Synchronization as a Ground Truth Problem

For multi-agent V2X training data, synchronization between communication logs and sensor data is a ground truth requirement. If the V2X message timestamps and the LiDAR scan timestamps are not precisely aligned, the model cannot learn the correct relationship between what the V2X network reports and what the vehicle’s own sensors observe. Misalignment at the millisecond level is enough to corrupt the training signal for time-critical safety events like sudden braking or pedestrian crossings. At 100 kilometres per hour, a 10-millisecond offset shifts a vehicle’s apparent position by almost 28 centimetres. Data collection programs that build V2X training datasets need synchronization infrastructure designed for this level of precision. Annotation programs need to verify synchronization quality as part of quality assurance rather than assuming it.
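One way to verify synchronization rather than assume it is to estimate the clock offset between the two streams. This works when one vehicle appears in both: its V2X-reported track and its LiDAR-tracked position should line up once the offset is removed. The sketch below runs a brute-force grid search over candidate offsets. It assumes 1D along-track positions, a single co-observed vehicle, and a constant offset. A production pipeline would need to handle 2D tracks, drift, and tracking noise. The function names and search parameters are hypothetical.

```python
import bisect
from typing import Sequence


def interp(t: float, ts: Sequence[float], xs: Sequence[float]) -> float:
    """Linear interpolation of samples xs taken at strictly increasing times ts."""
    if t <= ts[0]:
        return xs[0]
    if t >= ts[-1]:
        return xs[-1]
    j = bisect.bisect_right(ts, t)
    t0, t1 = ts[j - 1], ts[j]
    w = (t - t0) / (t1 - t0)
    return xs[j - 1] + w * (xs[j] - xs[j - 1])


def estimate_clock_offset(
    v2x_t: Sequence[float], v2x_pos: Sequence[float],
    lidar_t: Sequence[float], lidar_pos: Sequence[float],
    search_s: float = 0.1, step_s: float = 0.001,
) -> float:
    """Estimate how far the V2X clock runs ahead of the LiDAR clock, in seconds.

    Grid-searches offsets d in [-search_s, search_s] and returns the d that minimises
    the mean squared gap between the LiDAR track and the V2X track read at t + d.
    """
    # Only use LiDAR samples whose shifted times stay inside the V2X track for every d.
    pairs = [(t, p) for t, p in zip(lidar_t, lidar_pos)
             if v2x_t[0] + search_s <= t <= v2x_t[-1] - search_s]
    if not pairs:
        raise ValueError("streams do not overlap enough for the search range")
    n = round(search_s / step_s)
    best_d, best_err = 0.0, float("inf")
    for k in range(-n, n + 1):
        d = k * step_s
        err = sum((interp(t + d, v2x_t, v2x_pos) - p) ** 2 for t, p in pairs) / len(pairs)
        if err < best_err:
            best_d, best_err = d, err
    return best_d


def x_true(t: float) -> float:
    """Along-track position of a braking vehicle: 20 m/s, decelerating at 4 m/s^2."""
    return 20.0 * t - 2.0 * t ** 2


v2x_t = [i * 0.1 for i in range(51)]            # 10 Hz V2X log, 0 to 5 s
v2x_pos = [x_true(t - 0.03) for t in v2x_t]     # simulated: V2X clock runs 30 ms ahead
lidar_t = [0.05 + i * 0.1 for i in range(50)]   # 10 Hz LiDAR, staggered sample times
lidar_pos = [x_true(t) for t in lidar_t]
offset = estimate_clock_offset(v2x_t, v2x_pos, lidar_t, lidar_pos)
print(f"estimated offset: {offset * 1000:.0f} ms")  # estimated offset: 30 ms
```

A QA step could then flag any recording whose estimated offset exceeds a chosen tolerance before its frames are annotated.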

How Digital Divide Data Can Help

Digital Divide Data provides annotation services for V2X-integrated ADAS and autonomous driving programs, covering the multi-agent annotation, communication degradation labeling, and vulnerable road user scenario coverage that V2X AI training data requires.

For programs building V2X perception training datasets, multisensor fusion data services cover the synchronized multi-agent annotation that V2X training data requires, maintaining temporal alignment between communication logs and sensor data across all participants in a scenario. Annotation workflows are designed for multi-source data rather than being adapted from single-vehicle pipelines.

For programs that need broader ADAS data coverage, including V2X scenarios, ADAS data services and autonomous driving data services build scenario-stratified datasets. These datasets cover the communication quality range from ideal to degraded, so models train on the full distribution of conditions they will encounter in deployment rather than only the clean cases.

For programs where V2X integrates with HD map and infrastructure data, HD map annotation services provide the static scene context that V2I-enabled AI needs to correctly interpret signal phase data, roadside hazard alerts, and infrastructure positioning messages within the physical geometry of the deployment environment.

Build V2X training data that reflects how communication actually works, not how you wish it would. Talk to an expert!

Conclusion

V2X communication gives AI safety systems access to information that on-board sensing alone cannot provide: what is happening beyond line of sight, what other vehicles are about to do before the action is visible, and where vulnerable road users are, even when they have not entered sensor range. For that capability to translate into reliable safety performance, the AI models need training data that reflects the real behavior of V2X networks: variable latency, packet loss, multi-agent interactions, and the degradation scenarios that ideal-condition datasets systematically exclude.

The training data requirements for V2X AI are more demanding than for single-vehicle perception, not because the underlying annotation is more complex per item, but because the data collection, synchronization, and scenario coverage requirements are harder to meet. Programs that invest in multi-agent annotation infrastructure and communication-aware data collection build V2X safety systems that perform in the field. Programs that train on clean simulated data without real-network imperfections will discover the gap when they test in real traffic conditions. The role of multisensor fusion data in Physical AI covers how V2X sits within the broader data architecture that complete autonomous driving programs require.

References

Takacs, A., & Haidegger, T. (2024). A method for mapping V2X communication requirements to highly automated and autonomous vehicle functions. Future Internet, 16(4), 108. https://doi.org/10.3390/fi16040108

Wang, J., Topilin, I., Feofilova, A., Shao, M., & Wang, Y. (2025). Cooperative intelligent transport systems: The impact of C-V2X communication technologies on road safety and traffic efficiency. Applied Sciences, 15(7), 3878. https://pmc.ncbi.nlm.nih.gov/articles/PMC11990983/

Frequently Asked Questions

Q1. What does V2X stand for, and what does it cover?

V2X stands for Vehicle-to-Everything. It covers several communication modes: Vehicle-to-Vehicle (V2V), where cars share position and speed data; Vehicle-to-Infrastructure (V2I), where vehicles communicate with traffic signals and roadside units; and Vehicle-to-Pedestrian (V2P), where vehicles receive data from smartphones or devices carried by pedestrians and cyclists.

Q2. Why is clean, ideal-condition V2X data insufficient for training AI safety systems?

Because real V2X networks experience latency, packet loss, channel congestion, and coverage gaps. A model trained only on perfect communication conditions learns to make decisions that assume reliable data delivery. In deployment, when communication degrades, that model will fail in ways it was never trained to handle. Training data must include degraded communication scenarios so the model learns to function safely across the full range of network conditions it will encounter.

Q3. What makes V2P more difficult than V2V for training data programs?

Pedestrians typically carry smartphones rather than dedicated V2X hardware, making their communication less reliable and their data less consistent than vehicle V2X. Their movement is also less predictable than vehicles constrained to lanes. Real-world V2P data collection requires pedestrian participants with compatible devices in traffic environments, raising practical and ethical challenges. As a result, V2P scenarios are severely underrepresented in existing V2X training datasets.

Q4. What does multi-agent annotation mean for V2X training data?

Multi-agent annotation means labeling synchronized data from all communicating participants in a scenario simultaneously, not just from a single vehicle’s perspective. A safety event involving multiple vehicles and a pedestrian requires annotated data from all of them together to capture the relational signals the model needs to learn. Single-vehicle annotation cannot produce this, and annotation workflows designed for single-vehicle perception data need to be redesigned for the multi-agent V2X case.

Q5. How does V2X relate to on-board sensor perception systems?

V2X supplements on-board sensors rather than replacing them. On-board sensors, including cameras, LiDAR, and radar, provide high-resolution local perception. V2X extends the vehicle’s awareness beyond sensor range using communicated data. AI safety systems fuse both inputs, using on-board data for close-range, high-resolution decisions and V2X data for extended-range situational awareness and coordination. Training data for these fused systems needs to cover both modalities and the interactions between them.