High-Quality Training Data for Autonomous Vehicles in 2023

Self-driving or autonomous vehicles are one of the most fascinating applications of machine learning and artificial intelligence. These vehicles are able to navigate and drive without human intervention. But how do autonomous vehicles learn to drive?

The answer is, with lots and lots of data. How is this training data obtained? Who can help you gather high-quality training data for autonomous vehicles in 2023? In this guide, we’ll discuss all of that. So, let’s begin!

What is meant by Training Data?

When we talk about training data, we’re talking about a specific set of data that’s used to train a machine learning model. This data is used to teach the model (in this case, the technology used in autonomous vehicles) what to look for and how to make predictions. The training data is a collection of examples that the autonomous vehicle uses to learn. Each training example includes a set of input values (known as features) and a corresponding set of output values (known as labels).

The vehicle looks at the training data and “learns” the relationship between the input features and the output labels. Once it has learned this relationship, it can then be used to make predictions on new data.
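As a rough illustration of features, labels, and prediction, here is a minimal sketch. The feature names, the labels, and the 1-nearest-neighbour rule are all assumptions chosen for brevity; real driving models are far more complex.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class TrainingExample:
    # Hypothetical input features: (distance to obstacle in m, speed in m/s).
    features: Tuple[float, float]
    # Hypothetical output label for those features.
    label: str


TRAINING_DATA: List[TrainingExample] = [
    TrainingExample((5.0, 15.0), "brake"),
    TrainingExample((8.0, 20.0), "brake"),
    TrainingExample((60.0, 15.0), "cruise"),
    TrainingExample((80.0, 25.0), "cruise"),
]


def predict(features: Tuple[float, float],
            training_data: List[TrainingExample] = TRAINING_DATA) -> str:
    """Return the label of the closest training example (1-nearest-neighbour)."""
    nearest = min(training_data, key=lambda ex: math.dist(ex.features, features))
    return nearest.label


# Predict a label for a new input that is not in the training data.
print(predict((7.0, 18.0)))
```

With no training examples there is nothing to compare against, which mirrors the point that a model can only learn from the data it is given.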

It’s important to note that the model can only learn from its training data. If there is no training data, the model cannot learn anything, and if the training data is of poor quality, the model will not learn anything useful. That is why the quality of the training data matters so much.

Importance of Training Data for Autonomous Vehicles

As the development of autonomous vehicles continues, the importance of high-quality training data becomes increasingly apparent. In order to ensure that autonomous vehicles are able to operate safely and effectively, it is essential that they are trained on a variety of data that is representative of the real world.

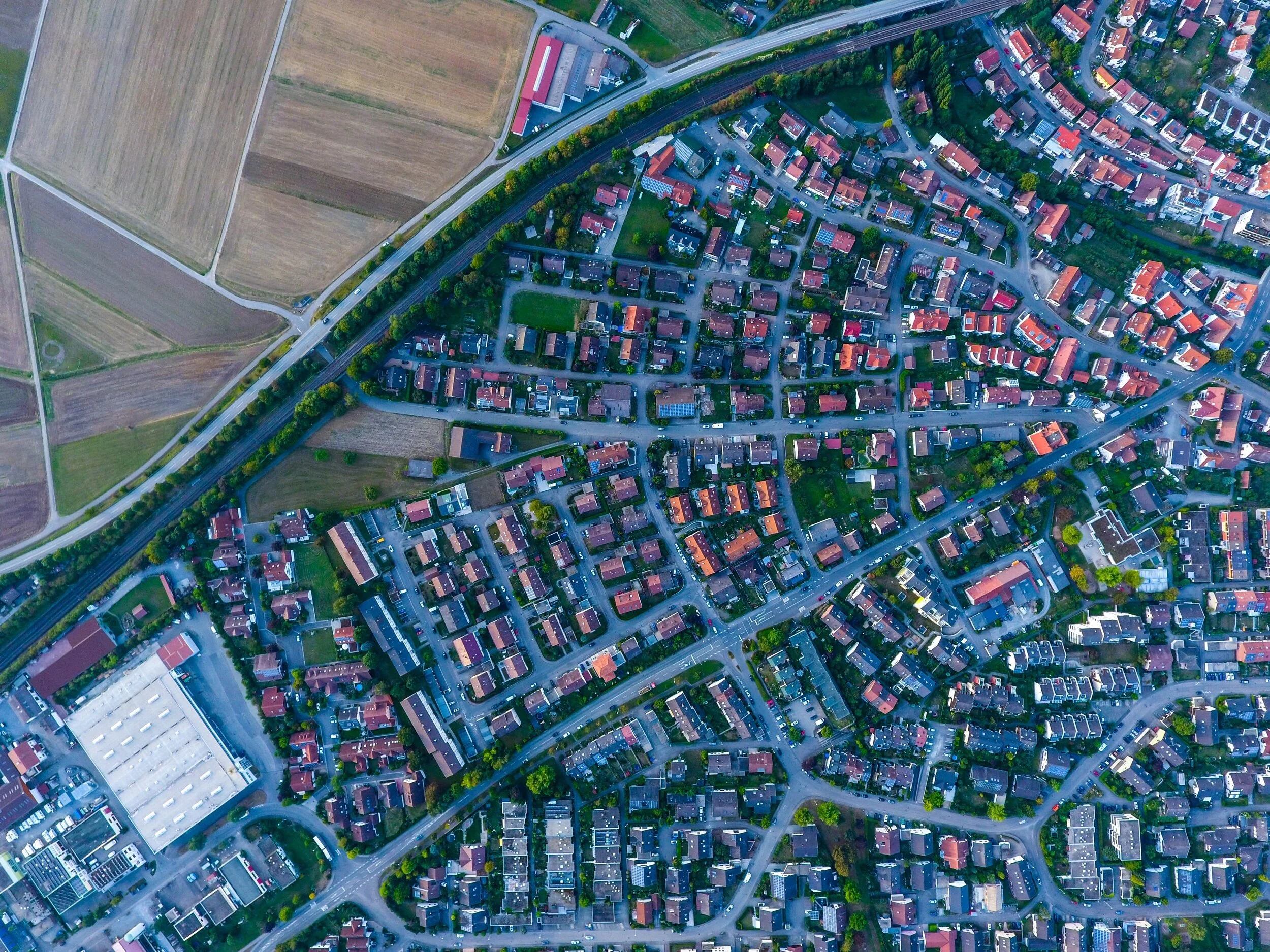

There are a number of factors that need to be considered when collecting training data for autonomous vehicles. First, the data must be of high quality in order to accurately represent the real world. Second, the data must be diverse in order to account for different scenarios that the vehicle may encounter. Finally, the data must be representative of the areas in which the autonomous vehicle will be operated.

High-quality training data is essential for the development of autonomous vehicles for the following reasons:

- Autonomous Vehicles Can’t Operate Without Accurate Data: Without accurate data, autonomous vehicles will not be able to learn how to operate properly in the real world. To keep the quality high, it is important to use data that has been collected from a variety of sources. This makes the data more representative of the real world and less likely to be biased.

- Training Data Helps Vehicles Navigate Different Situations: In addition to being of high quality, the training data must also be diverse, because autonomous vehicles need to learn how to handle many different situations. The data must cover different weather conditions, terrain, and traffic patterns. With a diverse set of data, autonomous vehicles can learn to operate properly in a variety of conditions.

- Training Data Helps Vehicles With Specific Rules: The training data must be representative of the areas in which the autonomous vehicle will be operated, because the vehicle needs to learn the rules and regulations that are specific to the area in which it will be driving.

Collecting high-quality, diverse, and representative training data is essential for the development of autonomous vehicles.
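One simple way to check diversity is to count how many recorded segments fall into each combination of conditions. The sketch below assumes each drive segment carries hypothetical `weather` and `terrain` tags; the tag names and values are illustrative, not a standard schema.

```python
from collections import Counter
from itertools import product

# Hypothetical metadata values attached to each recorded drive segment.
WEATHER = ("clear", "rain", "snow")
TERRAIN = ("urban", "rural")


def coverage_gaps(segments, min_count=1):
    """Return (weather, terrain) combinations with fewer than min_count segments."""
    counts = Counter((s["weather"], s["terrain"]) for s in segments)
    return [combo for combo in product(WEATHER, TERRAIN) if counts[combo] < min_count]


segments = [
    {"weather": "clear", "terrain": "urban"},
    {"weather": "clear", "terrain": "rural"},
    {"weather": "rain", "terrain": "urban"},
]

# Combinations that still need data collection.
print(coverage_gaps(segments))
# → [('rain', 'rural'), ('snow', 'urban'), ('snow', 'rural')]
```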

Where does Training Data come from?

When it comes to machine learning, data is key. Without data, there can be no training, and without training, there can be no machine learning. So where does this training data come from?

There are a few different ways to get training data. The first is to simply collect it yourself, for example by driving a vehicle equipped with sensors and recording what it captures. This can be a very tedious and time-consuming process. However, it can also be very rewarding, as you have complete control over the data that you collect.

Another way to get training data is to purchase it from a data provider. This is usually much easier and faster than collecting it yourself, but it can be quite expensive.

Finally, you can also use public data sets. These are data sets that have been made available by governments or other organizations for anyone to use. There are many different public data sets out there, and they can be very helpful for training machine learning models.

What Technology is Used to Gather Training Data?

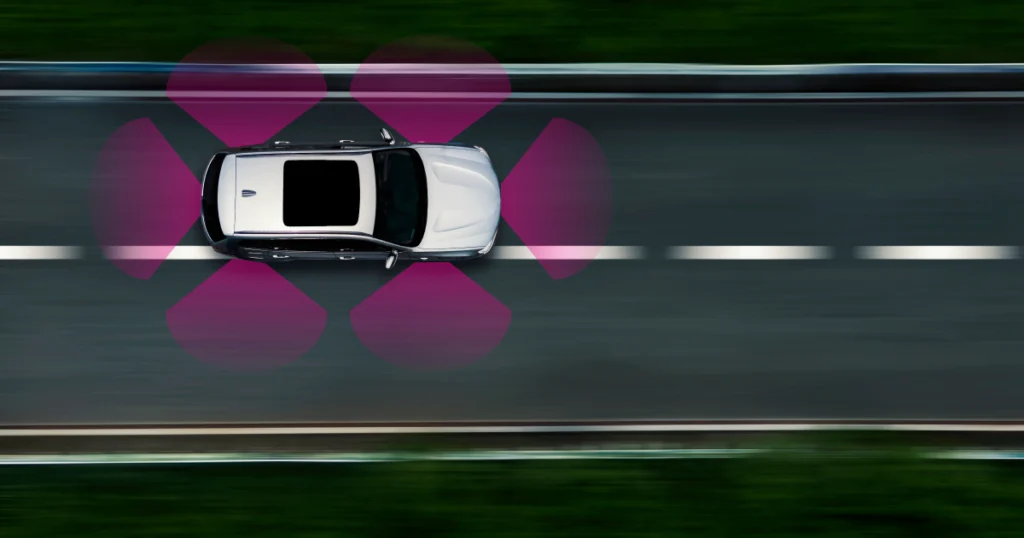

Autonomous driving training data is used to teach self-driving cars how to navigate roads and traffic. This data is captured by the vehicle’s sensors (including cameras, LiDAR, and radar) and combined through a process called sensor fusion to build a comprehensive picture of the car’s surroundings.
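To give a feel for what combining sensor readings can look like, here is a minimal sketch of one simple fusion technique, inverse-variance weighting, applied to two range estimates for the same object. The measurement values and variances are made up for illustration, and production systems typically use far more elaborate methods.

```python
def fuse_ranges(measurements):
    """Fuse (range_m, variance) pairs using inverse-variance weighting.

    Sensors with a smaller variance (more trusted) get a larger weight.
    """
    if not measurements:
        raise ValueError("at least one measurement is required")
    weights = [1.0 / variance for _, variance in measurements]
    total_weight = sum(weights)
    fused_range = sum(w * r for w, (r, _) in zip(weights, measurements)) / total_weight
    fused_variance = 1.0 / total_weight
    return fused_range, fused_variance


# Hypothetical readings for the same object: (range in metres, variance).
lidar_reading = (25.0, 0.01)
radar_reading = (25.6, 0.09)

fused_range, fused_variance = fuse_ranges([lidar_reading, radar_reading])
print(round(fused_range, 3), round(fused_variance, 4))
```

The fused estimate sits closer to the lower-variance reading, and its variance is smaller than either input, which is the basic appeal of combining sensors.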

- LiDAR: LiDAR (Light Detection and Ranging) is a remote sensing technology that uses laser pulses to measure distance. It can measure the distance to objects as well as their shape, size, and other characteristics, and this information can be used to create 3D maps and models of the area being surveyed. The technology is used for a variety of applications, including mapping the surface of the Earth, measuring the height of trees, and surveying land for archaeological sites, and it is helpful for autonomous vehicles.

- Radar: Radar is used extensively to gather training data. It uses radio waves to identify objects and measure their distance, speed, and other characteristics. Radar can track both moving and stationary objects.

- Camera: Another way to gather training data is to use cameras to take pictures of the vehicle’s surroundings. These pictures can then be used to train the model. This can be done with different types of cameras, including traditional cameras and infrared cameras.
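The distance and speed measurements mentioned above rest on two well-known relationships: a pulse's round-trip time gives range, and a Doppler frequency shift gives radial speed. The sketch below shows both; the function names and the 77 GHz carrier frequency are assumptions for illustration only.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def range_from_round_trip(round_trip_s: float) -> float:
    """Range to a target from a pulse's round-trip time (LiDAR or radar).

    The pulse travels to the target and back, hence the division by two.
    """
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def radial_speed_from_doppler(doppler_shift_hz: float, carrier_hz: float) -> float:
    """Radial speed of a target from the Doppler shift of a reflected radar wave."""
    return doppler_shift_hz * SPEED_OF_LIGHT_M_S / (2.0 * carrier_hz)


# A pulse that returns after 200 nanoseconds came from roughly 30 m away.
print(range_from_round_trip(200e-9))

# Assumed 77 GHz carrier with a 5 kHz Doppler shift.
print(radial_speed_from_doppler(5000.0, 77e9))
```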

Data Annotation Types for Autonomous Vehicles

Data annotation is the process of labeling data to provide context and enable machines to understand it. This is a critical step in training autonomous vehicles, as it allows the vehicles to learn from and make decisions based on data that has been specifically labeled for that purpose. Once the data has been labeled, it can be used to train the autonomous vehicle algorithms. This process is typically done with a supervised learning approach, where the labeled data is used to train a model that can then be applied to new data. This allows the autonomous vehicle to learn from and make decisions based on real-world data, rather than just simulated data.

Data annotation is a critical part of training autonomous vehicles, and it is important to ensure that the process is done accurately and with high-quality data. Here are some of the data annotation and labeling types used in the autonomous vehicle industry:

- 2D Boxing: This is the process of drawing a rectangular box around an object in an image so that the object can be detected and its movements tracked. This is especially important for autonomous vehicles, as they need to accurately track the movements of other objects in order to avoid collisions. There are a few different methods of 2D boxing, but the most common is for an annotator to draw a tight rectangle around each object in the image. 2D boxing can be used to track the movements of multiple objects at the same time, so the vehicle can follow all of the objects in its vicinity.

- Polygon: Polygon annotation is employed for precise object detection and positioning in images and videos. It is more accurate than 2D boxing, but it is more time-consuming and costs more. It’s especially useful when the objects are complex and irregular.

- 3D Cuboids: This is similar to 2D boxing, but as the name suggests, the process creates 3D cuboids around objects. After the annotator forms a box around the item, an anchor point is placed at each edge of the item. If an edge is absent or blocked by another object, the annotator makes an informed guess as to where it may be, based on the characteristics of the item and the angle of the picture.

- Video annotation: This is done by adding labels to specific frames or regions of frames. Video annotation is widely used in driving prediction models for autonomous vehicles, as it helps track objects across a continuous series of images.

- Semantic Segmentation: Semantic segmentation is a technique that uses artificial intelligence to classify each pixel in an image. This allows the vehicle to distinguish between different objects, such as cars, pedestrians, and traffic signs. Semantic segmentation requires a large amount of data to train the algorithms that identify objects.

- Lines and Splines: Lines and splines are used to mark linear features, such as lane markings and road edges, in the data captured by the sensors on the autonomous vehicle. These annotations help create a virtual map of the area around the vehicle, which the vehicle’s computer then uses to navigate.

- 3D point cloud: A 3D point cloud is used in autonomous vehicles to create a three-dimensional map of the environment. LiDAR sensors scan the environment and produce a point cloud, which is then used to build a three-dimensional model of the environment that the autonomous vehicle can use to navigate. This helps vehicles plan their route and avoid obstacles.
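Several of the annotation types above can be sketched as small data structures and helper functions. The example below is a hypothetical illustration, not any particular labeling tool's format: it shows overlap between two 2D boxes (intersection over union), the area of a polygon annotation, interpolating a box between two labeled video frames, and counting pixels per class in a segmentation mask.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


@dataclass(frozen=True)
class Box2D:
    """Axis-aligned 2D box in pixel coordinates (hypothetical layout)."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def area(self) -> float:
        width = max(0.0, self.x_max - self.x_min)
        height = max(0.0, self.y_max - self.y_min)
        return width * height


def iou(a: Box2D, b: Box2D) -> float:
    """Intersection over union of two boxes: 1.0 is a perfect match, 0.0 is no overlap."""
    overlap = Box2D(max(a.x_min, b.x_min), max(a.y_min, b.y_min),
                    min(a.x_max, b.x_max), min(a.y_max, b.y_max))
    union = a.area() + b.area() - overlap.area()
    return overlap.area() / union if union > 0 else 0.0


def polygon_area(vertices: Sequence[Point]) -> float:
    """Area of a simple polygon annotation, using the shoelace formula."""
    pts = list(vertices)
    total = 0.0
    for (x1, y1), (x2, y2) in zip(pts, pts[1:] + pts[:1]):
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def interpolate_box(start: Box2D, end: Box2D, t: float) -> Box2D:
    """Linearly interpolate a box between two labeled keyframes (0 <= t <= 1)."""
    def lerp(p: float, q: float) -> float:
        return p + t * (q - p)
    return Box2D(lerp(start.x_min, end.x_min), lerp(start.y_min, end.y_min),
                 lerp(start.x_max, end.x_max), lerp(start.y_max, end.y_max))


def class_pixel_counts(mask: List[List[int]]) -> Counter:
    """Count pixels per class ID in a semantic segmentation mask."""
    return Counter(class_id for row in mask for class_id in row)


print(iou(Box2D(0, 0, 2, 2), Box2D(1, 1, 3, 3)))
print(polygon_area([(0, 0), (4, 0), (4, 3)]))
print(interpolate_box(Box2D(0, 0, 10, 10), Box2D(10, 0, 20, 10), 0.5))
print(class_pixel_counts([[0, 0, 1], [2, 1, 1]]))
```

Interpolating between keyframes is one reason video annotation can be cheaper per frame than labeling every image independently, although the interpolated boxes still need review.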

How to Get Training Data for Autonomous Driving?

If you want to get training data for autonomous driving, there are a few options available to you. You can either purchase it from a data provider, or collect it yourself.

If you choose to purchase data, there are a few things to keep in mind:

- Make sure that the data is of high quality and has been collected from a variety of different environments.

- Consider the cost of the data. It can be expensive to purchase large amounts of high-quality data.
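One way to spot-check the quality of purchased labels is a basic sanity check on each annotation. The sketch below assumes a hypothetical record layout (a `label` string and a `box` of pixel coordinates); real providers use their own formats, so treat the field names as placeholders.

```python
def find_annotation_problems(record, image_width, image_height, allowed_labels):
    """Return a list of problems found in one 2D box annotation record.

    `record` is assumed to look like:
        {"label": "car", "box": (x_min, y_min, x_max, y_max)}
    """
    problems = []
    x_min, y_min, x_max, y_max = record["box"]
    if record["label"] not in allowed_labels:
        problems.append("unknown label")
    if x_min >= x_max or y_min >= y_max:
        problems.append("empty or inverted box")
    if x_min < 0 or y_min < 0 or x_max > image_width or y_max > image_height:
        problems.append("box outside image")
    return problems


ALLOWED_LABELS = {"car", "pedestrian", "traffic_sign"}  # hypothetical label set

good = {"label": "car", "box": (100, 200, 300, 400)}
bad = {"label": "tree", "box": (1900, 50, 2000, 40)}

print(find_annotation_problems(good, 1920, 1080, ALLOWED_LABELS))
print(find_annotation_problems(bad, 1920, 1080, ALLOWED_LABELS))
```

A check like this only catches structural errors. Whether a box actually surrounds the right object still has to be verified by reviewing samples.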

If you decide to collect data yourself, you must understand the following:

- You will need to have a vehicle that is equipped with the necessary sensors for collecting data.

- You will need to drive in a variety of different environments to collect data from.

- You should have proper technology to label the data that you collect.

This entire process can be time-consuming and full of hurdles. It’s not easy to collect and label data, especially for autonomous driving where there can be no room for error. One mistake can eventually cost lives, which is why it’s important to know the challenges of collecting this data on your own.

Challenges of Collecting Training Data On Your Own

- One of the challenges of collecting training data is that it must be diverse enough to cover as many driving scenarios as possible. This means that data must be collected in a wide variety of locations and conditions, including both urban and rural areas, and in all weather conditions.

- Another challenge is that data must be collected continuously over time in order to capture changes in the environment, such as new construction or road closures. This can be a difficult and expensive proposition.

- High-quality, accurate data is needed for rare events and extreme conditions in order to make autonomous driving as error-free as possible. This can be tough to gather on your own.

It’s best to weigh both options before settling on one, as the decision of how to obtain your training data for autonomous vehicles can have big consequences.

Digital Divide Data as a Reliable Data Labeling Partner

As you can see, gathering training data for autonomous cars isn’t a piece of cake. Not only does the data need to be of high quality, but it should also be labeled using the right kinds of annotations for various scenarios and objects. Another important factor is maintaining a timely inflow of data to speed up the process of building your autonomous vehicle.

Digital Divide Data can provide your business with all of this. With a qualified team of highly skilled tech professionals and data scientists, you won’t have any doubts about the source and quality of your data. Get in touch with us for your data labeling and training needs.

Umang architects and drives full-funnel content marketing strategies for AI training data solutions, spanning computer vision, data annotation, data labelling, and Physical and Generative AI services. He works closely with senior leadership to shape DDD’s market positioning, translating complex technical capabilities into compelling narratives that resonate with global AI innovators.
