Edge Case Curation in Autonomous Driving

Current publicly available datasets reveal just how skewed the coverage actually is. Analyses of major benchmark datasets suggest that most annotated data come from clear weather, well-lit conditions, and conventional road scenarios. Fog, heavy rain, snow, nighttime scenes with degraded visibility, unusual road users such as mobility scooters or street-cleaning machinery, and unexpected obstructions such as fallen cargo or roadworks without signage are all systematically thin. And thinness in training data translates directly into model fragility in deployment.

Teams building autonomous driving systems have long understood that the long tail of rare scenarios is where safety gaps live. What has changed is the urgency. As Level 2 and Level 3 systems accumulate real-world deployment miles, the incidents that occur are disproportionately clustered in exactly the edge scenarios that training datasets underrepresent. The gap between what the data covered and what the real world eventually presented is showing up as real failures.

Edge case curation is the field’s response to this problem. It is a deliberate, structured approach to ensuring that the rare scenarios receive the annotation coverage they need, even when they are genuinely rare in the real world. In this detailed guide, we will discuss what edge cases actually are in the context of autonomous driving, why conventional data collection pipelines systematically underrepresent them, and how teams are approaching the curation challenge through both real-world and synthetic methods.

Defining the Edge Case in Autonomous Driving

The term edge case gets used loosely, which causes problems when teams try to build systematic programs around it. For autonomous driving development, an edge case is best understood as any scenario that falls outside the common distribution of a system’s training data and that, if encountered in deployment, poses a meaningful safety or performance risk. That definition has two important components.

First, the rarity relative to the training distribution

A scenario that is genuinely common in real-world driving but has been underrepresented in data collection is functionally an edge case from the model’s perspective, even if it would not seem unusual to a human driver. A rain-soaked urban junction at night is not an extraordinary event in many European cities. But if it barely appears in training data, the model has not learned to handle it.

Second, the safety or performance relevance

Not every unusual scenario is an edge case worth prioritizing. A vehicle with an unusually colored paint job is unusual, but probably does not challenge the model’s object detection in a meaningful way. A vehicle towing a wide load that partially overlaps the adjacent lane challenges lane occupancy detection in ways that could have consequences. The edge cases worth curating are those where the model’s potential failure mode carries real risk.

It is worth distinguishing edge cases from corner cases, a term sometimes used interchangeably. Corner cases are generally considered a subset of edge cases: scenarios that sit at the extreme boundaries of the operational design domain, where multiple unusual conditions combine simultaneously. A partially visible pedestrian crossing a poorly marked intersection in heavy fog at night, while a construction vehicle partially blocks the camera’s field of view, is a corner case. These are rarer still, and handling them typically requires that the model has already been trained on each constituent unusual condition independently before being asked to handle their combination.

Practically, edge cases in autonomous driving tend to cluster into a few broad categories: unusual or unexpected objects in the road, adverse weather and lighting conditions, atypical road infrastructure or markings, unpredictable behavior from other road users, and sensor degradation scenarios where one or more modalities are compromised. Each category has its own data collection challenges and its own annotation requirements.

Why Standard Data Collection Pipelines Cannot Solve This

The instinctive response to an underrepresented scenario is to collect more data. If the model is weak on rainy nights, send the data collection vehicles out in the rain at night. If the model struggles with unusual road users, drive more miles in environments where those users appear. This approach has genuine value, but it runs into practical limits that become significant when applied to the full distribution of safety-relevant edge cases.

The fundamental problem is that truly rare events are rare

A fallen load blocking a motorway lane happens, but not predictably, not reliably, and not on a schedule that a data collection vehicle can anticipate. Certain pedestrian behaviors, such as a person stumbling into traffic, a child running between parked cars, or a wheelchair user whose chair has stopped working in a live lane, are similarly unpredictable and ethically impossible to engineer in real-world collection.

Weather-dependent scenarios add logistical complexity

Heavy fog is not available on demand. Black ice conditions require specific temperatures, humidity, and timing that may only occur for a few hours on select mornings during the winter months. Collecting useful annotated sensor data in these conditions requires both the operational capacity to mobilize quickly when conditions arise and the annotation infrastructure to process that data efficiently before the window closes.

Geographic concentration problem

Data collection fleets tend to operate in areas near their engineering bases, which introduces systematic biases toward the road infrastructure, traffic behavior norms, and environmental conditions of those regions. A fleet primarily collecting data in the American Southwest will systematically underrepresent icy roads, dense fog, and the traffic behaviors common to Northern European urban environments. This matters because Level 3 systems being developed for global deployment need genuinely global training coverage.

The result is that pure real-world data collection, no matter how extensive, is unlikely to achieve the edge case coverage that a production-grade autonomous driving system requires. Estimates vary, but the notion that a system would need to drive hundreds of millions or even billions of miles in the real world to encounter rare scenarios with sufficient statistical frequency to train from them is well established in the autonomous driving research community. The numbers simply do not work as a primary strategy for edge case coverage.
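The arithmetic behind that conclusion can be made concrete with a minimal sketch. The occurrence rate and the number of examples wanted below are purely hypothetical placeholders chosen for illustration, not measured figures; the function name is also an assumption.

```python
def expected_miles(miles_per_event: int, examples_wanted: int) -> int:
    """Expected fleet miles needed to observe `examples_wanted` occurrences
    of a scenario that happens, on average, once every `miles_per_event` miles."""
    if miles_per_event <= 0 or examples_wanted < 0:
        raise ValueError("miles_per_event must be positive and examples_wanted non-negative")
    return miles_per_event * examples_wanted


# Hypothetical inputs: a scenario seen once per 10 million miles,
# and 1,000 annotated examples wanted for training.
needed = expected_miles(miles_per_event=10_000_000, examples_wanted=1_000)
print(f"{needed:,} miles")
```

Under these assumed inputs the result lands in the billions of miles for a single scenario category, which is why collection alone does not scale as a primary strategy.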

The Two Main Approaches to Edge Case Identification

Edge case identification can happen through two broad mechanisms, and most mature programs use both in combination.

Data-driven identification from existing datasets

This means systematically mining large collections of recorded real-world data for scenarios that are statistically unusual or that have historically been associated with model failures. Automated methods, including anomaly detection algorithms, uncertainty estimation from existing models, and clustering approaches that identify underrepresented regions of the scenario distribution, are all used for this purpose. When a deployed model logs a low-confidence detection or triggers a disengagement, that event becomes a candidate for review and potential inclusion in the edge case dataset. The data flywheel approach, where deployment generates data that feeds back into training, is built around this principle.
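As a rough illustration of the mining step, the sketch below flags logged frames for human review when a disengagement occurred or when the model's least confident detection fell below a threshold. The `LoggedFrame` fields, the function name, and the 0.4 threshold are assumptions made for this example, not a description of any particular fleet's logging schema.

```python
from dataclasses import dataclass


@dataclass
class LoggedFrame:
    frame_id: str
    min_detection_confidence: float  # lowest confidence among detections in the frame
    disengagement: bool              # True if the safety operator took over


def select_candidates(frames, confidence_threshold=0.4):
    """Return frames that warrant review as possible edge cases."""
    return [
        f for f in frames
        if f.disengagement or f.min_detection_confidence < confidence_threshold
    ]


frames = [
    LoggedFrame("f001", 0.92, False),  # confident, uneventful: skipped
    LoggedFrame("f002", 0.31, False),  # low-confidence detection: flagged
    LoggedFrame("f003", 0.88, True),   # disengagement: flagged
]
candidates = select_candidates(frames)
print([f.frame_id for f in candidates])
```

A real pipeline would likely add anomaly scores or clustering on top of this, but even a simple trigger like this turns deployment logs into a review queue.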

Knowledge-driven identification

Here, domain experts and safety engineers define the scenario categories that matter based on their understanding of system failure modes, regulatory requirements, and real-world accident data. NHTSA crash databases, Euro NCAP test protocols, and incident reports from deployed AV programs all provide structured information about the kinds of scenarios that have caused or nearly caused harm. These scenarios can be used to define edge case requirements proactively, before the system has been deployed long enough to encounter them organically.

In practice, the most effective edge case programs combine both approaches. Data-driven mining catches the unexpected: scenarios that no one anticipated but that the system turned out to struggle with. Knowledge-driven definition ensures that the known high-risk categories are addressed systematically, not left to chance. The combination produces edge case coverage that is both reactive to observed failure modes and proactive about anticipated ones.

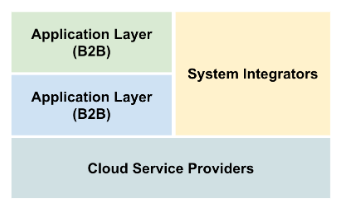

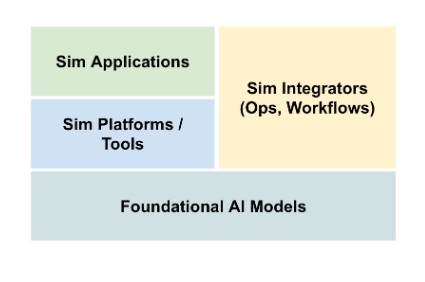

Simulation and Synthetic Data in Edge Case Curation

Simulation has become a central tool in edge case curation, and for good reason. Scenarios that are dangerous, rare, or logistically impractical to collect in the real world can be generated at scale in simulation environments. DDD’s simulation operations services reflect how seriously production teams now treat simulation as a data generation strategy, not just a testing convenience.

The Case Is Straightforward

If you need ten thousand examples of a vehicle approaching a partially obstructed pedestrian crossing in heavy rain at night, collecting those examples in the real world is not feasible. Generating them in a physically accurate simulation environment is. With appropriate sensor simulation (models of how LiDAR performs in rain, how camera images degrade in low light, and how radar returns are affected by puddles on the road surface), synthetic scenarios can produce data that is genuinely useful for training on those conditions.

Physical Accuracy

A simulation that renders rain as a visual effect without modeling how individual water droplets scatter laser pulses will produce LiDAR data that looks different from real rainy-condition LiDAR data. A model trained on that synthetic data will likely have learned something that does not transfer to real sensors. The domain gap between synthetic and real sensor data is one of the persistent challenges in simulation-based edge case generation, and it requires careful attention to sensor simulation fidelity.

Hybrid Approaches

Combining synthetic and real data has become the practical standard. Synthetic data is used to saturate coverage of known edge case categories, particularly those involving physical conditions like weather and lighting that are hard to collect in the real world. Real data remains the anchor for the common scenario distribution and provides the ground truth against which synthetic data quality is validated. The ratio varies by program and by the maturity of the simulation environment, but the combination is generally more effective than either approach alone.
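One simple way to control that ratio is to keep all real samples and add just enough synthetic ones to reach a target share of the final set. The sketch below assumes a hypothetical 20 percent synthetic share and placeholder sample identifiers; the function name and parameters are illustrative only.

```python
import random


def mix_real_and_synthetic(real, synthetic, synthetic_fraction, seed=0):
    """Keep every real sample and add synthetic samples until they make up
    roughly `synthetic_fraction` of the combined training set."""
    if not 0 <= synthetic_fraction < 1:
        raise ValueError("synthetic_fraction must be in [0, 1)")
    # Solve n_syn / (n_real + n_syn) = fraction for n_syn.
    n_synthetic = round(len(real) * synthetic_fraction / (1 - synthetic_fraction))
    n_synthetic = min(n_synthetic, len(synthetic))  # cannot draw more than exist
    rng = random.Random(seed)
    mixed = list(real) + rng.sample(list(synthetic), n_synthetic)
    rng.shuffle(mixed)
    return mixed


real_scenes = [f"real_{i}" for i in range(80)]
synthetic_scenes = [f"syn_{i}" for i in range(100)]
training_set = mix_real_and_synthetic(real_scenes, synthetic_scenes, synthetic_fraction=0.2)
print(len(training_set), sum(s.startswith("syn_") for s in training_set))
```

In practice the fraction would probably be set per edge case category and revisited as validation against real data shows how well the synthetic samples transfer.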

Generative Methods

Generative methods, including diffusion models and generative adversarial networks, are also being applied to edge case generation, particularly for camera imagery. These methods can produce photorealistic variations of existing scenes with modified conditions (adding rain, changing lighting, inserting unusual objects) without the overhead of running a full physics simulation. The annotation challenge with generative methods is that automatically generated labels may not be reliable enough for safety-critical training data without human review.

The Annotation Demands of Edge Case Data

Edge case annotation is harder than standard annotation, and teams that underestimate this tend to end up with edge case datasets that are not actually useful. The difficulty compounds when edge cases involve multisensor data, as they do in most serious autonomous driving programs.

Annotator Familiarity

Annotators who are well-trained on clear-condition highway scenarios may not have developed the visual and spatial judgment needed to correctly annotate a partially visible pedestrian in heavy fog, or a fallen object in a point cloud where the geometry is ambiguous. Edge case annotation typically requires more experienced annotators, more time per scene, and more robust quality control than standard scenarios.

Ground Truth Ambiguity

In a standard scene, it is usually clear what the correct annotation is. In an edge case scene, it may be genuinely unclear. Is that cluster of LiDAR points a pedestrian or a roadside feature? Is that camera region showing a partially occluded cyclist or a shadow? Ambiguous ground truth is a fundamental problem in edge case annotation because the model will learn from whatever label is assigned. Systematic processes for handling annotator disagreement and labeling uncertainty are essential.
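A minimal sketch of one such process is majority voting with an agreement threshold: a label is accepted only when enough annotators agree, and the object is otherwise escalated for expert review. The 0.75 threshold and the function name are assumptions for illustration.

```python
from collections import Counter


def consensus_label(labels, min_agreement=0.75):
    """Return (label, agreement). The label is None when agreement is too
    low, signalling that the object should be escalated for expert review."""
    if not labels:
        raise ValueError("at least one annotator label is required")
    top_label, votes = Counter(labels).most_common(1)[0]
    agreement = votes / len(labels)
    if agreement >= min_agreement:
        return top_label, agreement
    return None, agreement


# Three of four annotators agree: accepted.
print(consensus_label(["pedestrian", "pedestrian", "pedestrian", "pole"]))
# An even split: escalated rather than forced to a label.
print(consensus_label(["cyclist", "shadow", "cyclist", "shadow"]))
```

Keeping the agreement score alongside the label also makes it possible to record labeling uncertainty instead of discarding it.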

Consistency at Low Volume

Standard annotation quality is maintained partly through the law of large numbers; with enough training examples, individual annotation errors average out. Edge case scenarios, by definition, appear less frequently in the dataset. A labeling error in an edge case scenario has a proportionally larger impact on what the model learns about that scenario. This means quality thresholds for edge case annotation need to be higher, not lower, than for common scenarios.

DDD’s edge case curation services address these challenges through specialized annotator training for rare scenario types, multi-annotator consensus workflows for ambiguous cases, and targeted QA processes that apply stricter review thresholds to edge case annotation batches than to standard data.

Building a Systematic Edge Case Curation Program

Ad hoc edge case collection (sending a vehicle out when interesting weather occurs, or adding a few unusual scenarios when a model fails a specific test) is better than nothing but considerably less effective than a systematic program. Teams that take edge case curation seriously tend to build it around a few structural elements.

Scenario Taxonomy

Before you can curate edge cases systematically, you need a structured definition of what edge case categories exist and which ones are priorities. This taxonomy should be grounded in the operational design domain of the system being developed, the regulatory requirements that apply to it, and the historical record of where autonomous system failures have occurred. A well-defined taxonomy makes it possible to measure coverage, to know not just that you have edge case data but that you have adequate coverage of the specific categories that matter.
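As an illustration of what a machine-readable taxonomy might look like, the sketch below uses the five broad categories named earlier in this guide as top-level keys. The leaf scenario names are hypothetical examples drawn from scenarios mentioned in the text, not a recommended or complete list.

```python
# Top-level keys follow the five broad categories described earlier;
# the leaf names are illustrative placeholders only.
TAXONOMY = {
    "unexpected_objects": ["fallen_cargo", "unsigned_roadworks"],
    "adverse_weather_lighting": ["heavy_fog", "heavy_rain_night"],
    "atypical_infrastructure": ["faded_lane_markings"],
    "unpredictable_road_users": ["child_between_parked_cars", "mobility_scooter"],
    "sensor_degradation": ["camera_partially_blocked"],
}


def leaf_ids(taxonomy):
    """Flatten the taxonomy into stable 'category/scenario' identifiers
    that scenes can be tagged with and coverage can be counted against."""
    return [f"{category}/{leaf}" for category, leaves in taxonomy.items() for leaf in leaves]


for scenario_id in leaf_ids(TAXONOMY):
    print(scenario_id)
```

Stable identifiers like these are what make coverage measurable: every annotated scene can carry one, and every gap can be named.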

Coverage Tracking System

This means maintaining a map of which edge case categories are adequately represented in the training dataset and which ones have gaps. Coverage is not just about the number of scenes; it involves scenario diversity within each category, geographic spread, time-of-day and weather distribution, and object class balance. Without systematic tracking, edge case programs tend to over-invest in the scenarios that are easiest to generate and neglect the hardest ones.
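A coverage tracker can start as a simple count of scenes per cell compared against a target. The sketch below tracks cells defined by category and time of day; the field names, cell definition, and target counts are all assumptions for illustration, and a real tracker would add further dimensions such as geography and weather.

```python
from collections import Counter


def coverage_gaps(scenes, targets):
    """Return {(category, time_of_day): shortfall} for every under-covered cell."""
    counts = Counter((s["category"], s["time_of_day"]) for s in scenes)
    return {
        cell: target - counts[cell]
        for cell, target in targets.items()
        if counts[cell] < target
    }


scenes = [
    {"category": "heavy_fog", "time_of_day": "day"},
    {"category": "heavy_fog", "time_of_day": "day"},
    {"category": "heavy_fog", "time_of_day": "night"},
    {"category": "fallen_cargo", "time_of_day": "day"},
]
targets = {
    ("heavy_fog", "day"): 2,      # met
    ("heavy_fog", "night"): 3,    # one scene present, two short
    ("fallen_cargo", "night"): 1, # no scenes at all
}
gaps = coverage_gaps(scenes, targets)
print(gaps)
```

Reporting shortfalls per cell, not per category, is what exposes the pattern described above: a category can look well covered overall while its hardest combinations remain empty.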

Feedback Loop from Deployment

The richest source of edge case candidates is the system’s own deployment experience. Low-confidence detections, unexpected disengagements, and novel scenario types flagged by safety operators are all signals about where the training data may be thin. Building the infrastructure to capture these signals, review them efficiently, and route the most valuable ones into the annotation pipeline closes the loop between deployed performance and training data improvement.

Clear Annotation Standard

Because edge cases carry higher annotation stakes and more ambiguity than standard scenarios, they benefit from explicitly documented annotation guidelines that address the specific challenges of each category. How should annotators handle objects that are partially outside the sensor range? What is the correct approach when the camera and LiDAR disagree about whether an object is present? Documented standards make it possible to audit annotation quality and to maintain consistency as annotator teams change over time.

How DDD Can Help

Digital Divide Data (DDD) provides dedicated edge case curation services built specifically for the demands of autonomous driving and Physical AI development. DDD’s approach to edge case work goes beyond collecting unusual data. It involves structured scenario taxonomy development, coverage gap analysis, and annotation workflows designed for the higher quality thresholds that rare-scenario data requires.

DDD supports edge-case programs throughout the full pipeline. On the data side, our data collection services include targeted collection for specific scenario categories, including adverse weather, unusual road users, and complex infrastructure environments. On the simulation side, our simulation operations capabilities enable synthetic edge case generation at scale, with sensor simulation fidelity appropriate for training data production.

Annotation of edge case data at DDD is handled through specialized workflows that apply multi-annotator consensus review for ambiguous scenes, targeted QA sampling rates higher than those used for standard data, and annotator training specific to the scenario categories being curated. DDD’s ML data annotation capabilities span 2D and 3D modalities, making us well-suited to the multisensor annotation that most edge case scenarios require.

For teams building or scaling autonomous driving programs who need a data partner that understands both the technical complexity and the safety stakes of edge case curation, DDD offers the operational depth and domain expertise to support that work effectively.

Build the edge case dataset your autonomous driving system needs to be trusted in the real world.

Frequently Asked Questions

How do you decide which edge cases to prioritize when resources are limited?

Prioritization is best guided by a combination of failure severity and the size of the training data gap. Scenarios where a model failure would be most likely to cause harm and where current dataset coverage is thinnest should move to the top of the list. Safety FMEAs and analysis of incident databases from deployed programs can help quantify both dimensions.
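One way to combine the two dimensions is a simple product of failure severity and relative coverage gap. The sketch below is only an illustration: the 1 to 5 severity scale, the scene counts, and the scoring formula itself are assumptions, and a real program would calibrate them against its own safety analysis.

```python
def priority_score(severity, current_scenes, target_scenes):
    """Severity (assumed 1-5 scale) weighted by the fraction of the
    target coverage that is still missing."""
    if target_scenes <= 0:
        raise ValueError("target_scenes must be positive")
    gap = max(0.0, 1 - current_scenes / target_scenes)
    return severity * gap


# (scenario, severity, scenes currently in dataset, target scenes); all hypothetical.
scenarios = [
    ("unusual_paint_job", 1, 300, 500),
    ("heavy_fog_night", 4, 200, 500),
    ("fallen_cargo_motorway", 5, 50, 500),
]
ranked = sorted(scenarios, key=lambda s: priority_score(s[1], s[2], s[3]), reverse=True)
for name, severity, current, target in ranked:
    print(f"{name}: {priority_score(severity, current, target):.2f}")
```

With these placeholder numbers, the high-severity scenario with the thinnest coverage rises to the top, and the low-risk oddity falls to the bottom even though its dataset is also incomplete.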

Can a model trained on enough common scenarios generalize to edge cases without explicit edge case training data?

Generalization to genuinely rare scenarios without explicit training exposure is unreliable for safety-critical systems. Foundation models and large pre-trained vision models do show some capacity to handle unfamiliar scenarios, but the failure modes are unpredictable, and the confidence calibration tends to be poor. For production ADAS and autonomous driving, explicit edge case training data is considered necessary, not optional.

What is the difference between edge case curation and active learning?

Active learning selects the most informative unlabeled examples from an existing data pool for annotation, typically guided by model uncertainty. Edge case curation is broader: it involves identifying and acquiring scenarios that may not exist in any current data pool, including through targeted collection and synthetic generation. Active learning is a useful tool within an edge case program, but it does not replace it.
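For readers unfamiliar with the active learning side, a minimal sketch of uncertainty sampling follows: unlabeled samples are ranked by the entropy of the model's predicted class probabilities, and the most uncertain ones are sent for annotation. The sample identifiers and probabilities are made up for illustration, and entropy is only one of several uncertainty measures in use.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of a predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_most_uncertain(pool, k):
    """Return the ids of the k samples whose predictions have the highest entropy."""
    ranked = sorted(pool, key=lambda item: entropy(item[1]), reverse=True)
    return [sample_id for sample_id, _ in ranked[:k]]


# (sample id, predicted class probabilities); values are hypothetical.
pool = [
    ("a", [0.98, 0.01, 0.01]),  # confident prediction
    ("b", [0.40, 0.35, 0.25]),  # highly uncertain
    ("c", [0.70, 0.20, 0.10]),  # moderately uncertain
]
print(select_most_uncertain(pool, k=2))
```

Note that this only ranks samples already in the pool, which is exactly the limitation described above: a scenario that was never recorded cannot be selected, so it has to be collected or synthesized.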

Umang architects and drives full-funnel content marketing strategies for AI training data solutions, spanning computer vision, data annotation, data labelling, and Physical and Generative AI services. He works closely with senior leadership to shape DDD’s market positioning, translating complex technical capabilities into compelling narratives that resonate with global AI innovators.
