Audio Annotation for Gen AI

Human-Verified Audio Data Built for Real-World AI

Use Cases We Support

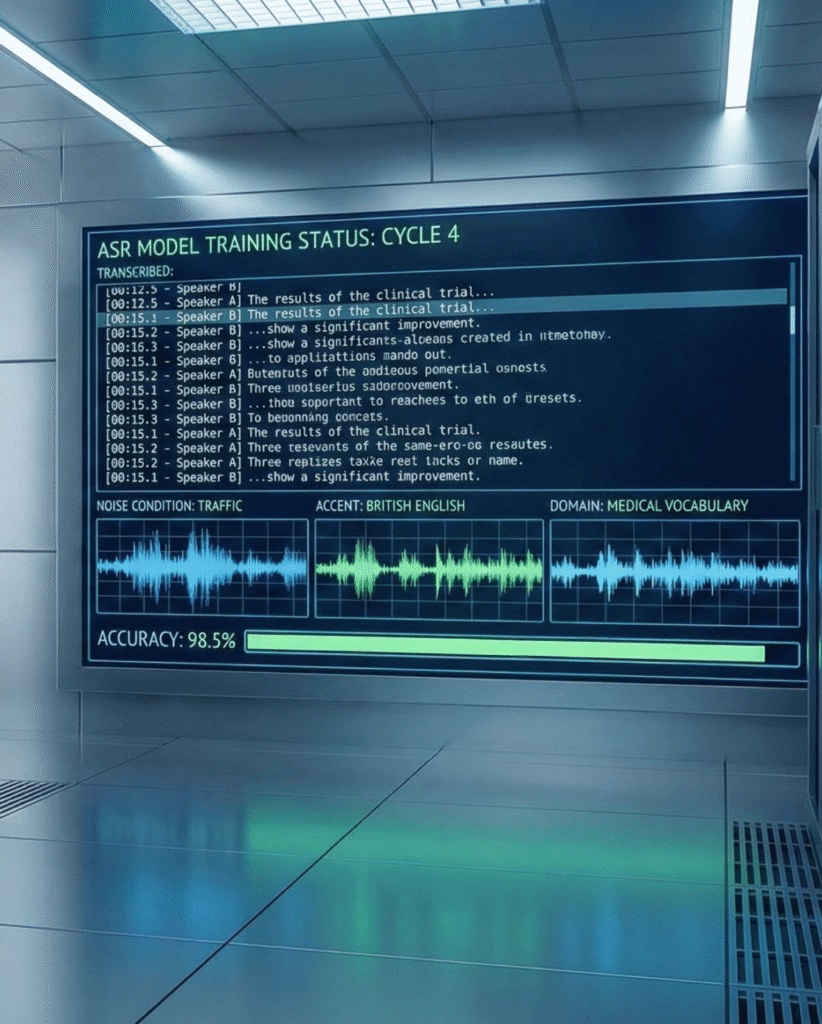

High-accuracy transcription with timestamps, speaker diarization, accents, noise conditions, and domain-specific vocabularies.

Intent labeling, utterance segmentation, sentiment and emotion tagging, and conversational flow annotation.

Native-speaker annotation for regional languages, dialects, and code-mixed speech.

Annotation of environmental sounds, alarms, machinery noise, medical signals, and contextual audio cues.

Tone, stress, hesitation, sentiment, and speaker state labeling for empathy-driven AI systems.

Call monitoring, keyword spotting, redaction tagging, and quality-assurance datasets.

Synchronization of audio with transcripts, subtitles, metadata, and visual signals for generative and retrieval models.

Industries We Support

AV/ADAS

Annotation of in-cabin speech, voice commands, alerts, warning signals, environmental audio, and driver feedback for intelligent assistance systems.

Robotics

Healthcare

Secure transcription and annotation of clinical dictations, telehealth conversations, and diagnostic audio.

Government

Multilingual speech processing, archival audio digitization, and compliant transcription workflows.

Retail & E-Commerce

Voice search, customer service analytics, and conversational AI training data.

Finance & Accounting

Call transcription, intent tagging, and compliance-ready speech datasets.

Cultural Heritage

Preservation, transcription, and annotation of historical audio recordings across languages.

End-to-End Audio Annotation Workflow

Define annotation goals, audio types, languages, quality benchmarks, and edge-case requirements.

Audio cleaning, segmentation, noise categorization, and format normalization.

Define label taxonomies, transcription rules, emotion schemas, acoustic events, and metadata standards.

Combine automated pre-labeling with expert human annotation for speed and accuracy.

Inter-annotator agreement checks, sampling audits, and linguistic validation.
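Inter-annotator agreement is often reported as a chance-corrected statistic such as Cohen's kappa, which compares how often two annotators agree against how often they would agree by chance. The following is a minimal sketch. It assumes two annotators each assign one categorical emotion label per clip. The function name and labels are hypothetical and do not describe DDD's internal tooling.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators' categorical labels on the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    # Observed agreement: fraction of items where both annotators chose the same label.
    p_o = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Expected chance agreement, from each annotator's marginal label frequencies.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    p_e = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if p_e == 1.0:
        # Both annotators used one identical label throughout; kappa is undefined,
        # so treat it as perfect agreement by convention.
        return 1.0
    return (p_o - p_e) / (1 - p_e)


# Hypothetical emotion tags from two annotators on the same six clips.
annotator_1 = ["neutral", "happy", "angry", "neutral", "happy", "neutral"]
annotator_2 = ["neutral", "happy", "neutral", "neutral", "happy", "angry"]
print(round(cohens_kappa(annotator_1, annotator_2), 3))  # → 0.455
```

Raw percent agreement here is 4/6 (about 0.67). Kappa is lower because some of that agreement is expected by chance. This is why agreement audits typically track a chance-corrected measure rather than raw overlap.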

Specialized reviewers ensure annotations align with industry, regulatory, and model-training needs.

Deliver structured, reusable datasets with timestamps, speaker IDs, confidence scores, and context tags.
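As an illustration of a structured delivery, the minimal sketch below models one annotated segment. Each segment carries its timestamps, speaker ID, transcript, confidence score, and context tags, and the segments are exported as JSON Lines. The field names, the `AudioSegment` class, and the JSON Lines format are all assumptions for illustration, not a documented DDD export schema.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class AudioSegment:
    """One annotated span of audio (hypothetical schema for illustration)."""
    audio_id: str
    start_s: float       # segment start, in seconds from the start of the file
    end_s: float         # segment end, in seconds from the start of the file
    speaker_id: str      # diarization label, e.g. "spk_0"
    transcript: str
    confidence: float    # annotation confidence score in [0, 1]
    context_tags: List[str] = field(default_factory=list)

    def __post_init__(self):
        # Basic consistency checks before a record is accepted for delivery.
        if not 0 <= self.start_s < self.end_s:
            raise ValueError("expected 0 <= start_s < end_s")
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be in [0, 1]")


def to_jsonl(segments):
    """Serialize segments as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(asdict(s), ensure_ascii=False) for s in segments)


seg = AudioSegment(
    audio_id="call_0001",
    start_s=12.4,
    end_s=15.9,
    speaker_id="spk_1",
    transcript="I'd like to check my balance.",
    confidence=0.97,
    context_tags=["intent:balance_inquiry", "noise:low"],
)
print(to_jsonl([seg]))
```

One object per segment keeps the records easy to stream, filter by speaker or confidence, and merge back into a training pipeline.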

Secure delivery, iteration support, and retraining feedback integration.

What Our Clients Say

Their annotators understood nuance, emotion, intent, and context, not just words.

DDD’s structured audio datasets significantly reduced our model error rates in real-world conditions.

The combination of speed, scale, and human expertise made DDD a long-term partner for us.

From legacy audio archives to modern AI pipelines, DDD handled everything end-to-end.

Why Choose DDD?

Human-in-the-Loop Expertise

Expert linguists and domain specialists add nuance, context, and judgment that automation alone can’t deliver.

Multilingual & Low-Resource Language Strength

Native-speaker teams enable high-fidelity annotation in low-resource and underrepresented languages.

Security-First Operations

Bias-Aware Dataset Design

DDD’s Commitment to Security & Compliance

Your audio data, often sensitive and personal, is protected at every stage through rigorous global standards and secure operational infrastructure.

SOC 2 Type 2

Verified controls across security, confidentiality, and system reliability

ISO 27001

Comprehensive information security management with continuous audits

GDPR & HIPAA Compliance

Responsible handling of personal, biometric, and medical voice data

TISAX Alignment

Automotive-grade security for mobility and in-vehicle audio workflows

Turn Raw Audio into AI-Ready Intelligence

Frequently Asked Questions

Audio annotation is the labeling of speech, sounds, and acoustic events with information such as transcripts, speaker IDs, emotions, and environmental cues. These labels are used to train, evaluate, and fine-tune speech, voice, and multimodal AI models.

We use a human-in-the-loop approach that combines AI-assisted pre-labeling with trained linguists, domain experts, and multi-layer quality assurance, including inter-annotator agreement and expert validation.

Yes. DDD specializes in native-speaker annotation across global languages, dialects, and code-mixed speech, including low-resource and underrepresented regions often missed by generic providers.

We support transcription, timestamps, speaker diarization, intent and entity labeling, emotion and sentiment tagging, audio event classification, redaction, and multimodal audio-text alignment.